New book: I. M. Wright’s “Hard Code”: A Decade of Hard-Won Lessons from Microsoft, Second Edition

We’re very happy to announce that the second, newly expanded edition of I. M. Wright’s “Hard Code”: A Decade of Hard-Won Lessons from Microsoft, by Eric Brechner, is available for purchase (Print ISBN 9780735661707; Page Count 448).

Here is an excerpt from Chapter 5, “Software Quality—More Than a Dream”.

Chapter 5

Software Quality—More Than a Dream

Some people mock software development, saying if buildings were built like

software, the first woodpecker would destroy civilization. That’s quite funny, or

disturbing, but regardless it’s misguided. Early buildings lacked foundations. Early

cars broke down incessantly. Early TVs required constant fiddling to work properly.

Software is no different.

At first, Microsoft wrote software for early adopters, people comfortable replacing

PC boards. Back then, time to market won over quality, because early adopters

could work around issues, but they couldn’t slow the clock. Shipping fastest meant

coding quickly and then fixing just enough to make it work.

Now our market is consumers and the enterprise, who value quality over the

hassles of experimentation. The market change was gradual, so Microsoft’s initial

response was simply to fix more bugs. Soon bug fixing was taking longer than

coding, an incredibly slow process. The fastest way to ship high quality is to trap

errors early, coding it right the first time and minimizing rework. Microsoft has

been shifting to this quality upstream approach over the time I’ve been writing

these columns. The first major jolt that drove the company-wide change was a

series of Internet virus attacks in late 2001.

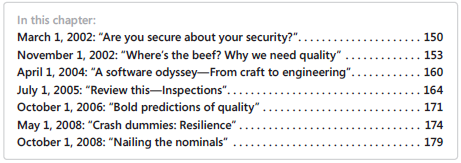

In this chapter, I. M. Wright preaches quality to the engineering masses. The first

column evaluates security issues. The second analyzes why quality is essential and

how you get it. The third column explains an engineering approach to software that

dramatically reduces defects. The fourth talks about design and code inspections.

The fifth describes metrics that can predict quality issues before customers experience them.

The sixth focuses on techniques to make software resilient. And

the chapter aptly finishes by emphasizing the five basics of software quality.

While all these columns provide an interesting perspective, the second one,

“Where’s the beef? Why we need quality,” stands out as an important turning point.

When I wrote it, few inside or outside Microsoft believed we were serious about

quality. Years later, many of the concepts are taken for granted. It took far more

than an opinion piece to drive that change, but it’s nice to call for action and have

people respond.

May 1, 2008: “Crash dummies: Resilience”

I heard a remark the other day that seemed stupid on the surface,

but when I really thought about it I realized it was completely idiotic

and irresponsible. The remark was that it’s better to crash and let

Watson report the error than it is to catch the exception and try to

correct it.

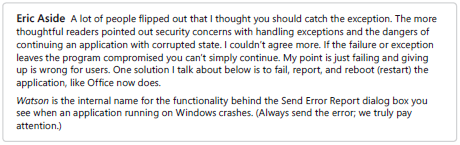

From a technical perspective, there is some sense to the strategy of allowing the crash to

complete and get reported. It’s like the logic behind asserts—the moment you realize you

are in a bad state, capture that state and abort. That way, when you are debugging later

you’ll be as close as possible to the cause of the problem. If you don’t abort immediately, it’s

often impossible to reconstruct the state and identify what went wrong. That’s why asserts

are good, right? So, crashing is sensible, right?

Oh please. Asserts and crashing are so 1990s. If you’re still thinking that way, you need to

shut off your Walkman and join the twenty-first century, unless you write software just for

yourself and your old-school buddies. These days, software isn’t expected to run only until its

programmer got tired. It’s expected to run and keep running. Period.

Struggle against reality

Hold on, an old-school developer, I’ll call him Axl Rose, wants to inject “reality” into the

discussion. “Look,” says Axl, “you can’t just wish bad machine states away, and you can’t fix

every bug no matter how late you party.” You’re right, Axl. While we need to design, test,

and code our products and services to be as error free as possible, there will always be bugs.

What we in the new century have realized is that for many issues it’s not the bugs that are

the problem—it’s how we respond to those bugs that matters.

Axl Rose responds to bugs by capturing data about them in hopes of identifying the cause.

Enlightened engineers respond to bugs by expecting them, logging them, and making their

software resilient to failure. Sure, we still want to fix the bugs we log because failures are

costly to performance and impact the customer experience. However, cars, TVs, and networking

fail all the time. They are just designed to be resilient to those failures so that crashes

are rare.

Perhaps be less assertive

“But asserts are still good, right? Everyone says so,” says Axl. No. Asserts as they are implemented

today are evil. They are evil. I mean it, evil. They cause programs to be fragile instead

of resilient. They perpetuate the mindset that you respond to failure by giving up instead of

rolling back and starting over.

We need to change how asserts act. Instead of aborting, asserts should log problems and

then trigger a recovery. I repeat—keep the asserts, but change how they act. You still want

asserts to detect failures early. What’s even more important is how you respond to those failures,

including the ones that slip through.
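
One way to picture an assert that logs and then triggers a recovery, rather than aborting, is the minimal Python sketch below. The names `soft_assert`, `RecoverableFailure`, and `run_with_recovery` are hypothetical and chosen only for illustration; the column does not prescribe a particular implementation.

```python
import logging

logger = logging.getLogger("resilient_assert")


class RecoverableFailure(Exception):
    """Raised when a soft assertion fails, so the caller can roll back and recover."""


def soft_assert(condition, message):
    """Log the failed check and raise a recoverable error instead of aborting."""
    if not condition:
        logger.error("Assertion failed: %s", message)
        raise RecoverableFailure(message)


def run_with_recovery(action, recover):
    """Run an action; if a soft assertion fires, hand the failure to a recovery handler."""
    try:
        return action()
    except RecoverableFailure as failure:
        return recover(failure)
```

Under these assumptions, a caller would wrap an action such as loading a value, check it with `soft_assert`, and pass a handler that falls back to a safe default. The failure is still detected early and still logged, but the process keeps running.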

If at first you don’t succeed

So, how do you respond appropriately to failure? Well, how do you? I mean, in real life, how

do you respond to failure? Do you give up and walk away? I doubt you made it through the

Microsoft interview process if that was your attitude.

When you experience failure, you start over and try again. Ideally, you take notes about what

went wrong and analyze them to improve, but usually that comes later. In the moment, you

simply dust yourself off and give it another go.

For web services, the approach is called the five Rs—retry, restart, reboot, reimage, and

replace. Let’s break them down:

■ Retry First off, you try the failed action again. Often something just goofed the first

time and will work the second time.

■ Restart If retrying doesn’t work, restarting often does. For services, this often means

rolling back and restarting a transaction or unloading a DLL, reloading it, and performing

the action again the way Internet Information Server (IIS) does.

■ Reboot If restarting doesn’t work, do what a user would do, and reboot the machine.

■ Reimage If rebooting doesn’t work, do what support would do, and reimage the

application or entire box.

■ Replace If reimaging doesn’t do the trick, it’s time to get a new device.
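
The list above reads as an escalation ladder: try the cheapest remedy first and move to the next only when it fails. The Python sketch below illustrates that ordering. The function `escalate` and the `Step` type are hypothetical names, and each remedy is assumed to be a callable that returns `True` once the failed action has succeeded.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical shape: each step pairs a label with a remedy callable.
# A remedy returns True if the action succeeded after applying it.
Step = Tuple[str, Callable[[], bool]]


def escalate(steps: List[Step]) -> Optional[str]:
    """Try each remedy in order and return the label of the first one that works."""
    for label, remedy in steps:
        try:
            if remedy():
                return label
        except Exception:
            # A remedy that itself blows up counts as not working; try the next one.
            continue
    # Nothing worked; in the five Rs this is the point where the device gets replaced.
    return None
```

A service would pass the steps in order, for example retry, restart, reboot, and reimage, and treat a `None` result as the signal to replace the machine.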

Welcome to the jungle

Much of our software doesn’t run as a service in a datacenter, and contrary to what Google

might have you believe, customers don’t want all software to depend on a service. For client

software, the five Rs might seem irrelevant to you. Ah, to be so naïve and dismissive.

The five Rs apply just as well to client and application software on a PC or a phone. The key

most engineers miss is defining the action, the scope of what gets retried or restarted.

On the web it’s easier to identify—the action is usually a transaction to a database or a GET

or POST to a page. For client and application software, you need to think more about what

action the user or subsystem is attempting.

Well-designed software will have custom error handling at the end of each action, just like

I talked about in my column “A tragedy of error handling” (which appears in Chapter 6).

Having custom error handling after actions makes applying the five Rs much simpler.

Unfortunately, lots of throwback engineers, like Axl Rose, use a Routine for Error Central

Handling (RECH) instead, as I described in the same column. If your code looks like Axl’s,

you’ve got some work to do to separate out the actions, but it’s worth it if a few actions harbor

most crashes and you aren’t able to fix the root cause.
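
To make the idea of an action with its own error handling concrete, here is a minimal Python sketch. The helper `perform_action` and its `do` and `undo` parameters are hypothetical; the point is only that the whole action is the unit that gets rolled back and retried, not an individual call inside it.

```python
import logging

logger = logging.getLogger("actions")


def perform_action(name, do, undo, attempts=2):
    """Run one user-level action with error handling scoped to that action.

    On failure, log it, undo any partial work so state is not confused,
    and retry the full action. Returns True if the action eventually succeeded.
    """
    for attempt in range(1, attempts + 1):
        try:
            do()
            return True
        except Exception as error:  # broad catch is for the sketch only
            logger.warning("%s failed on attempt %d: %s", name, attempt, error)
            undo()
    return False
```

With this shape, a "save document" action would pass a `do` that writes the file and an `undo` that discards the partial write, so a second attempt starts from a clean state.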

Just like starting over

Let’s check out some examples of applying the five Rs to client and application software:

■ Retry PCs and devices are a bit more predictable than web services, so failed operations

will likely fail again. However, retrying works for issues that fail sporadically, like

network connectivity or data contention. So, when saving a file, rather than blocking

for what seems like an eternity and then failing, try blocking for a short timeout and

then trying again—a better result for the same time or less. Doing so asynchronously

unblocks the user entirely and is even better, but it might be tricky.

■ Restart What can you restart at the client level? How about device drivers, database

connections, OLE objects, DLL loads, network connections, worker threads, dialogs, services,

and resource handles. Of course, blindly restarting the components you depend

upon is silly. You have to consider the kind of failure, and you need to restart the full

action to ensure that you don’t confuse state. Yes, it’s not trivial. What kills me is that as

a sophisticated user, restarting components is exactly what I do to fix half the problems

I encounter. Why can’t the code do the same? Why is the code so inept? Wait for it, the

answer will come to you.

■ Reboot If restarting components doesn’t work or isn’t possible because of a serious

failure, you need to restart the client or application itself—a reboot. Most of the

Office applications do this automatically now. They even recover most of their state as a

bonus. There are some phone and game applications that purposely freeze the screen

and reboot the application or device in order to recover (works only for fast reboots).

■ Reimage If rebooting the application doesn’t work, what does product support

tell you to do? Reinstall the software. Yes, this is an extreme measure, but these days

installs and repairs are entirely programmable for most applications, often at a component

level. You’ll likely need to involve the user and might even have to check online for

a fix. But if you’re expecting the user to do it, then you should do it.

■ Replace This is where we lose. If our software fails to correct the problem, the customer

has few choices left. These days, with competitors aching