Lync client refuses to connect to a peer-to-peer voice conversation

OK, so we had a very strange case, which has now been resolved.

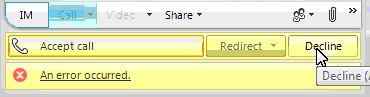

The customer had a user who could not connect to a peer-to-peer voice conversation. The call would terminate immediately with the error "An error occurred".

Incoming calls failed the same way: when the user tried to answer, the call terminated with the same error.

OK, not exactly a descriptive error. The usual checks are the SIP stack, the Communicator ETL and the media stack ETL.

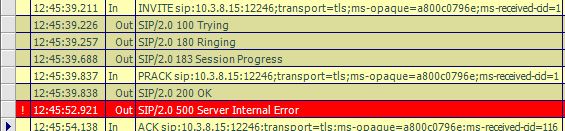

Looking at the SIP stack, we could see a 500 error being sent from the failing client right after its outbound 200 OK in response to the inbound PRACK. OK, a picture and all that jazz...

Great, we have a SIP 500 error. Now to look at the diagnostics header: ms-client-diagnostics: 52001;reason="Client side general processing error." The client generated this, but why?
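If you have a lot of SIP logs to wade through, it can help to pull the diagnostics header apart programmatically. The following is only a rough sketch in Python: the function name `parse_ms_diagnostics` is made up for illustration, and it assumes the header always has the shape seen above (a numeric ID followed by `;key=value` parameters).

```python
import re

# Matches one ';key=value' parameter; the value may be quoted or bare.
PARAM_RE = re.compile(r';\s*([\w-]+)=(?:"([^"]*)"|([^;]*))')

def parse_ms_diagnostics(header_line):
    """Split a diagnostics header into its name, numeric ID and parameters.

    Hypothetical helper: it assumes the 'name: id;key=value' layout shown
    in the trace above and is not an official parser.
    """
    head = re.match(r'\s*([\w-]+)\s*:\s*(\d+)', header_line)
    if head is None:
        raise ValueError("not a diagnostics header: %r" % header_line)
    params = {}
    # Scan the rest of the line for ';key=value' pairs.
    for match in PARAM_RE.finditer(header_line, head.end()):
        key, quoted, bare = match.groups()
        params[key] = quoted if quoted is not None else bare.strip()
    return {"header": head.group(1), "id": int(head.group(2)), "params": params}

line = 'ms-client-diagnostics: 52001;reason="Client side general processing error."'
result = parse_ms_diagnostics(line)
print(result["id"], "-", result["params"]["reason"])
```

Running this over every 5xx response in a trace gives a quick list of diagnostic IDs to look up, instead of reading each message by eye.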

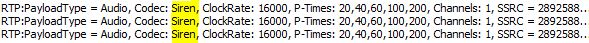

Looking at the media ETL, we can see this detail about the codecs being used.

Component: (Shared)

Level: TL_ERROR

Flag: STREAM_GENERIC

Function: CopyCodecsCollectionToCodecsSet

Source: RtpStream.cpp(821)

Local Time: 04/11/2012-12:45:52.919

Sequence# : 00000230

CorrelationId : 0x00000000

ThreadId : 16C8

ProcessId : 2250

CpuId : 2

Original Log Entry :

TL_ERROR(STREAM_GENERIC) [2]2250.16C8::04/11/2012-11:45:52.919.00000230 ((Shared),CopyCodecsCollectionToCodecsSet:RtpStream.cpp(821))[0x00000000]Atleast one codec must be enabled, hr: c0042004

The key here for us is the explanation: "Atleast one codec must be enabled" (sic). It looks like the client has a problem with its codecs. We checked the codecs and all was well; the media player report screen showed that they were installed correctly. So it must be something else.
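For anyone who wants to filter a formatted media stack log for entries like this one, here is a rough Python sketch. The regular expression is based only on the single log line shown above, so treat the layout as an assumption rather than a documented format; `ENTRY_RE` and `parse_entry` are names invented for this example. The one thing that can be said with certainty is that an HRESULT with its top bit set indicates a failure.

```python
import re

# Layout inferred from the single entry above; other entries may differ.
ENTRY_RE = re.compile(
    r'^(?P<level>TL_\w+)\((?P<flag>\w+)\)\s+'
    r'\[(?P<cpu>\d+)\](?P<pid>[0-9A-Fa-f]+)\.(?P<tid>[0-9A-Fa-f]+)::'
    r'(?P<timestamp>\S+)\s+'
    r'\(\((?P<component>[^)]*)\),(?P<function>\w+):'
    r'(?P<source>[^()]+)\((?P<line>\d+)\)\)'
    r'\[(?P<correlation>0x[0-9A-Fa-f]+)\]'
    r'(?P<message>.*)$'
)

def parse_entry(text):
    """Return the fields of one log entry as a dict, or None if no match."""
    match = ENTRY_RE.match(text.strip())
    if match is None:
        return None
    entry = match.groupdict()
    # Pull out a trailing 'hr: xxxxxxxx' value when the message carries one.
    hr = re.search(r'hr:\s*([0-9A-Fa-f]{8})', entry["message"])
    entry["hresult"] = int(hr.group(1), 16) if hr else None
    # Bit 31 of an HRESULT is the severity bit: set means failure.
    entry["failed"] = bool(entry["hresult"] and entry["hresult"] & 0x80000000)
    return entry

raw = ("TL_ERROR(STREAM_GENERIC) [2]2250.16C8::04/11/2012-11:45:52.919.00000230 "
       "((Shared),CopyCodecsCollectionToCodecsSet:RtpStream.cpp(821))"
       "[0x00000000]Atleast one codec must be enabled, hr: c0042004")
entry = parse_entry(raw)
print(entry["level"], entry["function"], hex(entry["hresult"]), entry["failed"])
```

Filtering on `level == "TL_ERROR"` and grouping by `function` is a quick way to spot which part of the media stack is complaining.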

This started to puzzle me. We checked the usual suspects, making sure there wasn't an overaggressive anti-virus or firewall agent interfering with the audio stream. The next step was to take a network trace. The user created the trace and sent it to us for analysis. We used Netmon for the capture, as it's great for capturing all data moving across all the network adaptors on the machine. We also hoped to see the RTP traffic carrying the encoded conversation, which would tell us the codec being used.

We got the trace and had a look. There was voice traffic emanating from the failing user's client. The RTP packets were there, showing a payload type of audio and a codec of Siren.
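Netmon decodes this for you, but it is worth knowing where the codec information comes from: the payload type is the low 7 bits of the second byte of the 12-byte RTP fixed header. A minimal Python sketch follows. Note the assumptions: the sample packet is fabricated for illustration, and the mapping of payload type 111 to Siren is a placeholder, since dynamic payload types (96 to 127) are whatever the SDP `a=rtpmap` lines of that particular call say they are.

```python
import struct

# Static assignments from RFC 3551; dynamic types must come from the SDP.
STATIC_PAYLOAD_TYPES = {0: "PCMU/8000", 8: "PCMA/8000"}

def parse_rtp_header(packet):
    """Decode the 12-byte RTP fixed header (RFC 3550) from raw bytes."""
    if len(packet) < 12:
        raise ValueError("RTP fixed header is 12 bytes")
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    return {
        "version": b0 >> 6,           # top 2 bits of the first byte
        "marker": bool(b1 & 0x80),    # top bit of the second byte
        "payload_type": b1 & 0x7F,    # low 7 bits of the second byte
        "sequence": seq,
        "timestamp": ts,
        "ssrc": ssrc,
    }

def codec_name(payload_type, rtpmap):
    """Look up a codec, preferring the call's own rtpmap over static types."""
    return rtpmap.get(payload_type) or STATIC_PAYLOAD_TYPES.get(payload_type, "unknown")

# Assumed values for illustration only: take the real mapping from the
# a=rtpmap lines of the call you are investigating.
negotiated = {111: "SIREN/16000"}
sample = struct.pack("!BBHII", 0x80, 111, 1, 320, 0x12345678)  # fabricated packet

header = parse_rtp_header(sample)
print(header["payload_type"], codec_name(header["payload_type"], negotiated))
```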

Back to the SIP stack. A colleague of mine pointed out that the SDP contains a parameter that sets the bandwidth for the call. So we looked in the SIP 183 (since the peer was reacting to the INVITE) and saw b=CT:1, which is strange since it's usually set to 99980. Something must be setting the bandwidth to a very small value. Looking at the ETL for the media stack, we saw bw=405 and that Siren was being used.
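This check is easy to automate if you have the SDP body to hand. Below is a small Python sketch that pulls the `b=` lines out of an SDP body and flags suspiciously low values. Only `b=CT:1` and the usual 99980 come from this case; the rest of the sample SDP, the 100 kbps threshold and the function names are assumptions made up for the example.

```python
USUAL_CT_KBPS = 99980   # the value we normally see, as noted above
MIN_SANE_KBPS = 100     # hypothetical threshold, tune to taste

def sdp_bandwidth(sdp_text):
    """Return (bwtype, kbps) tuples for every b= line in an SDP body."""
    results = []
    for raw in sdp_text.splitlines():
        line = raw.strip()
        if line.startswith("b="):
            # b=<bwtype>:<bandwidth>, bandwidth in kilobits per second
            bwtype, _, value = line[2:].partition(":")
            if value.strip().isdigit():
                results.append((bwtype, int(value)))
    return results

def suspicious_bandwidth(sdp_text, minimum=MIN_SANE_KBPS):
    """Return the b= entries whose value is below the given minimum."""
    return [(t, v) for t, v in sdp_bandwidth(sdp_text) if v < minimum]

# Fabricated SDP body; only the b=CT:1 line reflects the real trace.
SAMPLE_SDP = """v=0
o=- 0 0 IN IP4 192.0.2.10
s=session
c=IN IP4 192.0.2.10
b=CT:1
t=0 0
m=audio 50000 RTP/AVP 111 0 8
a=rtpmap:111 SIREN/16000
"""

for bwtype, kbps in suspicious_bandwidth(SAMPLE_SDP):
    print("Low bandwidth in SDP: b=%s:%d (usually %d)" % (bwtype, kbps, USUAL_CT_KBPS))
```

Running something like this across the INVITE and 183 bodies of a failing call would have pointed us at the bandwidth problem much earlier.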

A device or driver on the user's machine was preventing the bandwidth from being detected as a high value, restricting the user's client to Siren, and even then at such a low bandwidth that it threw an error.

We asked the customer to disable all network adaptors except the LAN and the wireless card. Sometimes the WiFi virtual host adaptor can create a peer-to-peer network that reports back a low bandwidth. The user had a WiFi-enabled mouse. Once this was disabled, Lync could make voice calls, and now video calls too.

So the lesson learnt is that an external device can cause issues, and that the INVITE and/or the 183 Session Progress SIP messages can really help in narrowing down network problems. Network traces show you the symptoms in greater detail.