GesturePak v2 simplifies creation of gesture-controlled apps

What do you do after you’ve built a great app? You make it even better. That’s exactly what Carl Franklin, a Microsoft Most Valuable Professional (MVP), did with GesturePak. Actually, GesturePak is both a WPF app that lets you create your own gestures (movements) and store them as XML files, and a .NET API that can recognize when a user has performed one or more of your predefined gestures. It enables you to create gesture-controlled applications, which are perfect for situations where the user is not physically seated at the computer keyboard.

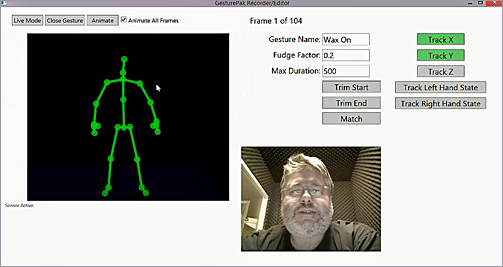

GesturePak v2 simplifies the creation of gesture-controlled apps. This image shows the app in edit mode.

Franklin’s first version of GesturePak was developed with the original Kinect for Windows sensor. For GesturePak v2, he used the Kinect for Windows v2 sensor and its related SDK 2.0 public preview, and as he did, he rethought and greatly simplified the whole process of creating and editing gestures. To create a gesture in the original GesturePak, you had to break the movement down into a series of poses, then hold each pose and say the word “snapshot,” at which point a frame of skeleton data was recorded. This process continued until you had captured each pose in the gesture, which could then be tested and used in your own apps.

GesturePak v2 works very differently. You simply tell the app to start recording (by using speech recognition), perform the gesture, and then tell it to stop recording. Every frame in between is recorded, which also gives you a way to play an animation of the gesture for your users.

GesturePak v2 still uses the same matching technology as version 1, relying on key frames (called poses in v1) that the user matches in series. But with the new version, once you've recorded the entire gesture, you can use the mouse wheel to "scrub" through the movement and pick out key frames. You also can select which joints to match simply by clicking on them. It's a much easier and faster way to create a gesture than the interface of GesturePak v1, which required you to select poses by using voice and manual commands.
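To make the idea of matching key frames in series more concrete, here is a minimal sketch in Python. It is not GesturePak's actual code or API: the `KeyFrame` and `GestureMatcher` names, the dictionary-based frame format, and the 0.15 m tolerance are all assumptions made for illustration. Each key frame stores reference positions only for the joints selected for matching, and the gesture is recognized once every key frame has been matched in order.

```python
from dataclasses import dataclass
from math import dist
from typing import Dict, List, Tuple

# Hypothetical types for illustration; not GesturePak's data model.
Point = Tuple[float, float, float]
Frame = Dict[str, Point]  # joint name -> (x, y, z) position in meters


@dataclass
class KeyFrame:
    joints: Frame            # reference positions for the selected joints only
    tolerance: float = 0.15  # assumed maximum distance per joint, in meters

    def matches(self, frame: Frame) -> bool:
        # Compare only the selected joints; all other joints are ignored.
        return all(
            name in frame and dist(frame[name], ref) <= self.tolerance
            for name, ref in self.joints.items()
        )


class GestureMatcher:
    """Steps through key frames in order and reports when the last one matches."""

    def __init__(self, key_frames: List[KeyFrame]) -> None:
        self.key_frames = key_frames
        self.index = 0  # position of the next key frame to match

    def update(self, frame: Frame) -> bool:
        # Feed one incoming body frame; returns True when the gesture completes.
        if self.key_frames[self.index].matches(frame):
            self.index += 1
            if self.index == len(self.key_frames):
                self.index = 0  # reset so the gesture can be recognized again
                return True
        return False
```

In use, `update` would be called once per incoming sensor frame. A fuller version would probably also reset `index` if too much time passes between key frames; that timeout is left out here to keep the sketch short.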

Carl Franklin offered these words of technical advice for devs who are writing WPF apps:


Another big change is the code itself. GesturePak v1 is written in VB.NET. GesturePak v2 was re-written in C#. (Speaking of coding, see the green box above for Franklin’s advice to devs who are writing WPF apps.)

Franklin was surprised by how easy it was to adapt GesturePak to Kinect for Windows v2. He acknowledges there were some changes to deal with—for instance, “Skeleton” is now “Body” and there are new JointType additions—but he expected that level of change. “Change is the price we pay for innovation, and I don't mind modifying my code in order to embrace the future,” Franklin says.

He finds the Kinect for Windows v2 sensor improved in all categories. “The fidelity is amazing. It can track your skeleton in complete darkness. It can track your skeleton from 50 feet away (or more), and with a much wider field of vision. It can tell whether your hands are open, closed, or pointing,” Franklin states, adding, “I took full advantage of the new hand states in GesturePak. You can now make a gesture in which you hold out your open hand, close it, move it across your chest, and open it again.” In fact, Franklin credits the improvements in fidelity with convincing customers who had been on the fence. “They’re now beating down my door, asking me to build them a next-generation Kinect-based app.”

The Kinect for Windows Team
