Autoscaling Azure–Virtual Machines

This post will demonstrate autoscaling in Azure virtual machines.

Background

While I spend most of my time working with PaaS (platform as a service) components of Azure such as Cloud Services and Websites, I frequently need to help customers with solutions that require IaaS (infrastructure as a service) virtual machines. A topic that comes up very regularly in both of those conversations is how autoscaling works.

The short version is that you have to pre-provision virtual machines, and autoscale turns them on or off according to the rules you specify. One of those rules might be queue length, enabling you to build a highly scalable solution that provides cloud elasticity.

Autoscaling Virtual Machines

Let’s look at autoscaling virtual machines. To autoscale a virtual machine, you need to pre-provision the number of VMs and add them to an availability set. Using the Azure Management Portal to create the VM, I choose a Windows Server 2012 R2 Datacenter image and provide the name, size, and credentials.

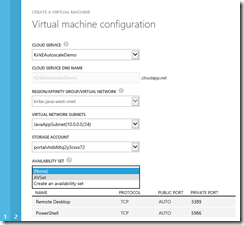

The next page allows me to specify the cloud service, region or VNet, storage account, and an availability set. If I don’t already have an availability set, I can create one. I already created one called “AVSet”, so I add the new VM to the existing availability set.

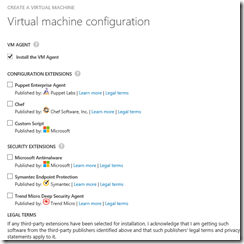

Finally, add the extensions required for your VM and click OK to create the VM. Make sure to enable the VM Agent; we’ll use that later.

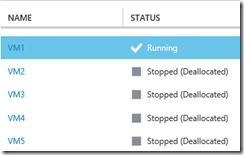

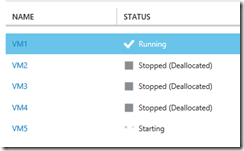

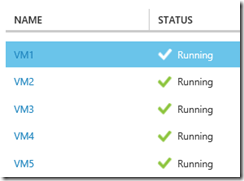

You can see that I’ve created 5 virtual machines.

I’ve forgotten to place VM2 in the availability set. No problem, I can go to its configuration and add it to the availability set.

This is the benefit of autoscaling and the cloud. I might use the virtual machine for a stateless web application where it’s unlikely that I need all 5 virtual machines running constantly. If I were running this on-premises, I would typically just leave them running, consuming resources that I don’t actually utilize (overprovisioned). I can reduce my cost by running them in the cloud and only utilize the resources that I need when I need them. Autoscale for virtual machines simply turns some of the VMs on or off depending on rules that I specify.

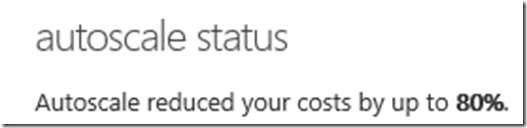

To show this, let’s configure autoscale for my availability set. Once VM5 is in the Running state, I go to the Cloud Services tool in the portal and then navigate to my cloud service’s dashboard. On the dashboard I will see a section for autoscale status:

The portal says that I can save up to 60% by configuring autoscale. Click the link to configure autoscale. This is the most typical demo that you’ll see: scaling by CPU. In this screenshot, I’ve configured autoscale to start at 1 instance. The target CPU range is between 60% and 80%. If CPU usage exceeds that range, autoscale scales up by 2 instances and then waits 20 minutes before the next action. If CPU usage falls below that range, it scales down by 1 instance and waits 20 minutes.

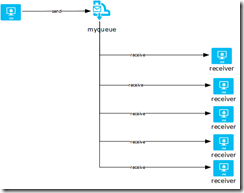
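To make the CPU rule concrete, here is a minimal sketch of how such a rule could be evaluated. It is only a model of the settings described above, not how the autoscale service is actually implemented. The class and method names are hypothetical, and the cap of 5 instances is an assumption based on the five VMs I pre-provisioned.

```csharp
using System;

// Hypothetical model of the CPU rule; not Azure's actual implementation.
public static class CpuAutoscaleRule
{
    public const int MinInstances = 1;      // "start at 1 instance"
    public const int MaxInstances = 5;      // assumption: five pre-provisioned VMs
    public const double TargetLow = 60.0;   // lower bound of target CPU range (%)
    public const double TargetHigh = 80.0;  // upper bound of target CPU range (%)
    public static readonly TimeSpan Cooldown = TimeSpan.FromMinutes(20);

    // Returns how many instances should be running after this evaluation.
    public static int Evaluate(int running, double averageCpu, TimeSpan sinceLastAction)
    {
        // Still inside the wait period after the previous action: do nothing.
        if (sinceLastAction < Cooldown) return running;

        // Above the target range: scale up by 2, but never past the VMs that exist.
        if (averageCpu > TargetHigh) return Math.Min(running + 2, MaxInstances);

        // Below the target range: scale down by 1, but keep the minimum running.
        if (averageCpu < TargetLow) return Math.Max(running - 1, MinInstances);

        // Inside the target range: leave things alone.
        return running;
    }
}

public static class CpuAutoscaleDemo
{
    public static void Main()
    {
        // One busy VM, 25 minutes since the last action.
        Console.WriteLine(CpuAutoscaleRule.Evaluate(1, 92.0, TimeSpan.FromMinutes(25)));
        // Still busy, but only 5 minutes since the last action.
        Console.WriteLine(CpuAutoscaleRule.Evaluate(3, 92.0, TimeSpan.FromMinutes(5)));
        // Load has dropped and the wait period has passed.
        Console.WriteLine(CpuAutoscaleRule.Evaluate(3, 40.0, TimeSpan.FromMinutes(30)));
    }
}
```

The wait period is checked first, so a spike right after a scale action is ignored until the 20 minutes have passed.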

Easy enough to understand. A lesser known but incredibly cool pattern is scaling by queues. In a previous post, I wrote about Solving a throttling problem with Azure where I used a queue-centric work pattern. Notice the Scale by Metric option provides Queue as an option:

That means we can scale based on how many messages are waiting in the queue. If the messages are increasing, then our application is not able to process them fast enough, thus we need more capacity. Once the number of messages levels off, we don’t need the additional capacity, so we can turn the VMs off until they are needed again.

I changed my autoscale settings to use a Service Bus queue, scaling up by 1 instance every 5 minutes and down by 1 instance every 5 minutes.

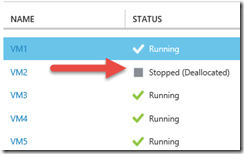
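A queue rule can be pictured the same way. The sketch below is an illustration under stated assumptions, not the autoscale service’s real algorithm. It assumes a hypothetical target of 100 messages per machine, a cap of 5 instances, and one evaluation every 5 minutes that moves at most 1 instance up or down. All names are made up for the example.

```csharp
using System;

// Hypothetical model of a queue-length rule; not Azure's actual implementation.
public static class QueueAutoscaleRule
{
    public const int MinInstances = 1;
    public const int MaxInstances = 5;        // assumption: five pre-provisioned VMs
    public const int TargetPerMachine = 100;  // assumption: messages one VM should handle

    // How many instances the current backlog calls for, within the allowed range.
    public static int DesiredInstances(long queueLength)
    {
        // Ceiling division: 101 messages need 2 machines at 100 per machine.
        long needed = (queueLength + TargetPerMachine - 1) / TargetPerMachine;
        return (int)Math.Max(MinInstances, Math.Min(MaxInstances, needed));
    }

    // One evaluation (every 5 minutes): move at most one instance toward the desired count.
    public static int Step(int running, long queueLength)
    {
        int desired = DesiredInstances(queueLength);
        if (desired > running) return running + 1;
        if (desired < running) return running - 1;
        return running;
    }
}

public static class QueueAutoscaleDemo
{
    public static void Main()
    {
        // Made-up queue lengths, one sample per 5-minute evaluation.
        long[] samples = { 50, 250, 450, 650, 650, 300, 0, 0, 0, 0 };
        int running = 1;
        foreach (long length in samples)
        {
            running = QueueAutoscaleRule.Step(running, length);
            Console.WriteLine("queue={0,4} running={1}", length, running);
        }
    }
}
```

Because each step moves by only one instance, the running count trails the backlog in both directions: it takes several evaluations to reach all five VMs, and several more to get back down to one.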

After we let the virtual machines run for a while, we can see that all but one of them were turned off due to our autoscale rules.

Just a Little Code

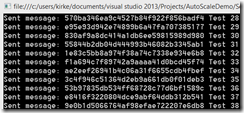

Virtual machines need something to do, so we’ll create a simple solution that sends and receives messages on a Service Bus queue. On my laptop, I have an application called “Sender.exe” that sends messages to a queue. Each virtual machine has an application on it that I’ve written called Receiver.exe that simply receives messages from a queue. We will have up to 5 receivers working simultaneously as competing consumers of the queue.

The Sender application sends a message to the queue once every second.

Sender

```csharp
using Microsoft.ServiceBus.Messaging;
using Microsoft.WindowsAzure;
using System;

namespace Sender
{
    class Program
    {
        static void Main(string[] args)
        {
            string connectionString =
                CloudConfigurationManager.GetSetting("Microsoft.ServiceBus.ConnectionString");

            QueueClient client =
                QueueClient.CreateFromConnectionString(connectionString, "myqueue");

            int i = 0;
            while (true)
            {
                var message = new BrokeredMessage("Test " + i);
                client.Send(message);

                Console.WriteLine("Sent message: {0} {1}",
                    message.MessageId,
                    message.GetBody<string>());

                //Sleep for 1 second
                System.Threading.Thread.Sleep(TimeSpan.FromSeconds(1));
                i++;
            }
        }
    }
}
```

The Receiver application reads messages from the queue once every 3 seconds. The idea is that the sender will send the messages faster than 1 machine can handle, which will let us observe how autoscale works.

Receiver

```csharp
using Microsoft.ServiceBus.Messaging;
using Microsoft.WindowsAzure;
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text;

namespace Receiver
{
    class Program
    {
        static void Main(string[] args)
        {
            string connectionString =
                CloudConfigurationManager.GetSetting("Microsoft.ServiceBus.ConnectionString");

            QueueClient client =
                QueueClient.CreateFromConnectionString(connectionString, "myqueue");

            while (true)
            {
                var message = client.Receive();
                if (null != message)
                {
                    Console.WriteLine("Received {0} : {1}",
                        message.MessageId,
                        message.GetBody<string>());
                    message.Complete();
                }

                //Sleep for 3 seconds
                System.Threading.Thread.Sleep(TimeSpan.FromSeconds(3));
            }
        }
    }
}
```
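The arithmetic behind those two sleep intervals is worth spelling out. This is a back-of-the-envelope sketch with hypothetical names. It assumes the sleeps are the only cost and ignores the time spent in the `Send`, `Receive`, and `Complete` calls, so real rates will be somewhat lower.

```csharp
using System;

// Idealized throughput math for the Sender/Receiver pair (names are hypothetical).
public static class QueueRates
{
    public const int SendIntervalSeconds = 1;     // Sender sleeps 1 second per message
    public const int ReceiveIntervalSeconds = 3;  // each Receiver sleeps 3 seconds per message

    // Net change in queue length per minute while the Sender is running.
    public static int NetGrowthPerMinute(int receivers)
    {
        int sentPerMinute = 60 / SendIntervalSeconds;
        int receivedPerMinute = receivers * (60 / ReceiveIntervalSeconds);
        return sentPerMinute - receivedPerMinute;
    }

    // Minutes needed to empty a backlog once the Sender has been stopped.
    public static double MinutesToDrain(long backlog, int receivers)
    {
        return backlog / (double)(receivers * (60 / ReceiveIntervalSeconds));
    }
}

public static class QueueRatesDemo
{
    public static void Main()
    {
        for (int vms = 1; vms <= 5; vms++)
        {
            Console.WriteLine("{0} receiver(s): {1} messages/minute net",
                vms, QueueRates.NetGrowthPerMinute(vms));
        }
        Console.WriteLine("Drain 650 messages with 5 receivers: {0} minutes",
            QueueRates.MinutesToDrain(650, 5));
    }
}
```

Under these assumptions the backlog grows by 40 messages per minute with one receiver, holds steady with three, and only shrinks with four or five, which is why a single VM cannot keep up.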

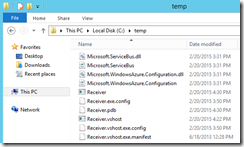

I built the Receiver.exe application in Visual Studio then copied all of the files in the bin/debug folder to the c:\temp folder on each virtual machine.

Running Startup Tasks with Autoscale

As each virtual machine is started, I want the Receiver.exe code to execute upon startup. I could go into each machine and set a group policy to assign computer startup scripts, but since we are working with Azure, we have the ability to use the custom script extension which will run each time the machine is started. When I created the virtual machine earlier, I enabled the Azure VM Agent on each virtual machine, so we can use the custom script extension.

We need to upload a PowerShell script to be used as a startup task to execute the Receiver.exe code that is already sitting on the computer. The code for the script is stupid simple:

Startup.ps1

```powershell
Set-Location "C:\temp"
.\Receiver.exe
```

This script is uploaded from my local machine to Azure blob storage as a block blob using the following commands:

Upload block blob

```powershell
$context = New-AzureStorageContext -StorageAccountName "kirkestorage" -StorageAccountKey "QiCZBIREDACTEDuYcqemWtwhTLlw=="
Set-AzureStorageBlobContent -Blob "startup.ps1" -Container "myscripts" -File "c:\temp\startup.ps1" -Context $context -Force
```

I then set the custom script extension on each virtual machine.

AzureVMCustomScriptExtension

```powershell
$vms = Get-AzureVM -ServiceName "kirkeautoscaledemo"
foreach($vm in $vms)
{
    Set-AzureVMCustomScriptExtension -VM $vm -StorageAccountName "kirkestorage" -StorageAccountKey "QiCZBIREDACTEDuYcqemWtwhTLlw==" -ContainerName "myscripts" -FileName "startup.ps1"
    $vm | Update-AzureVM
}
```

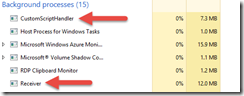

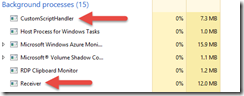

Once updated, I can see that the Receiver.exe is running on the one running virtual machine:

Testing It Out

The next step is to fire up my Sender and start sending messages to the queue. The only problem is that I haven’t provided any way to see what is going on, such as how many messages are in the queue. One simple way to do this is to use the Service Bus Explorer tool, a free download. Simply enter the connection string for your Service Bus queue and you will be able to connect and see how many messages are in the queue. I can send a few messages, then stop the sender. Refresh the queue, and the number of messages decreases by one every 3 seconds.

OK, so our queue receiver is working. Now let’s see if it makes Autoscale work. I’ll fire up the Sender and let it run for a while.
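If you would rather watch the queue from a console window, a small monitor along these lines should also work. This is a sketch and not something I ran for this post. The `QueueMonitor` namespace and the 10-second polling interval are arbitrary choices. It assumes the same configuration setting and queue name as the Sender and Receiver, and a connection string with enough rights to read queue metadata (typically Manage).

```csharp
using Microsoft.ServiceBus;
using Microsoft.WindowsAzure;
using System;
using System.Threading;

namespace QueueMonitor
{
    class Program
    {
        static void Main(string[] args)
        {
            // Same setting name as the Sender and Receiver use.
            string connectionString =
                CloudConfigurationManager.GetSetting("Microsoft.ServiceBus.ConnectionString");

            // NamespaceManager exposes management operations such as reading queue metadata.
            NamespaceManager manager =
                NamespaceManager.CreateFromConnectionString(connectionString);

            while (true)
            {
                // GetQueue returns a fresh QueueDescription on every call.
                long count = manager.GetQueue("myqueue").MessageCount;
                Console.WriteLine("{0:T} messages in queue: {1}", DateTime.Now, count);

                // Poll every 10 seconds (arbitrary interval).
                Thread.Sleep(TimeSpan.FromSeconds(10));
            }
        }
    }
}
```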

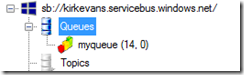

The number of messages in the queue continues to grow…

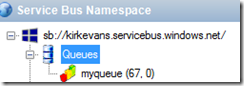

And after a few minutes one of the virtual machines is automatically started.

I check and make sure that Receiver.exe is executing:

After waiting for a while (I lost track of time, guessing 30 minutes or so), you can see that all of the VMs are now running because the number of incoming messages outpaced the ability of our virtual machines to process the messages.

Once there are around 650 messages in queue, I turn the queue sender off. The number of messages starts to drop quickly. Since we are draining messages out of the queue, we should be able to observe autoscale shutting things down. About 5 minutes after the number of queue messages drained to zero, I saw the following:

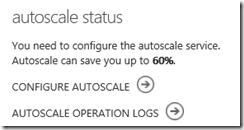

Go back to the dashboard for the cloud service, and once autoscale shuts down the remaining virtual machines (all but one, just like we defined) you see the following:

Monitoring

I just showed how to execute code when the machine is started, but is there any way to see in the logs when an autoscale operation occurs? You bet! Go to the Management Services tool in the management portal:

Go to the Operation Logs tab, and take a look at the various ExecuteRoleSetOperation entries.

Click on the details for one.

Operation Log Entry

```xml
<SubscriptionOperation xmlns="http://schemas.microsoft.com/windowsazure"
    xmlns:i="http://www.w3.org/2001/XMLSchema-instance">
  <OperationId>REDACTED</OperationId>
  <OperationObjectId>/REDACTED/services/hostedservices/KirkEAutoscaleDemo/deployments/VM1/Roles/Operations</OperationObjectId>
  <OperationName>ExecuteRoleSetOperation</OperationName>
  <OperationParameters xmlns:d2p1="http://schemas.datacontract.org/2004/07/Microsoft.WindowsAzure.ServiceManagement">
    <OperationParameter>
      <d2p1:Name>subscriptionID</d2p1:Name>
      <d2p1:Value>REDACTED</d2p1:Value>
    </OperationParameter>
    <OperationParameter>
      <d2p1:Name>serviceName</d2p1:Name>
      <d2p1:Value>KirkEAutoscaleDemo</d2p1:Value>
    </OperationParameter>
    <OperationParameter>
      <d2p1:Name>deploymentName</d2p1:Name>
      <d2p1:Value>VM1</d2p1:Value>
    </OperationParameter>
    <OperationParameter>
      <d2p1:Name>roleSetOperation</d2p1:Name>
      <d2p1:Value><?xml version="1.0" encoding="utf-16"?>
        <z:anyType xmlns:i="http://www.w3.org/2001/XMLSchema-instance"
            xmlns:d1p1="http://schemas.microsoft.com/windowsazure"
            i:type="d1p1:ShutdownRolesOperation"
            xmlns:z="http://schemas.microsoft.com/2003/10/Serialization/">
          <d1p1:OperationType>ShutdownRolesOperation</d1p1:OperationType>
          <d1p1:Roles>
            <d1p1:Name>VM3</d1p1:Name>
          </d1p1:Roles>
          <d1p1:PostShutdownAction>StoppedDeallocated</d1p1:PostShutdownAction>
        </z:anyType>
      </d2p1:Value>
    </OperationParameter>
  </OperationParameters>
  <OperationCaller>
    <UsedServiceManagementApi>true</UsedServiceManagementApi>
    <UserEmailAddress>Unknown</UserEmailAddress>
    <ClientIP>REDACTED</ClientIP>
  </OperationCaller>
  <OperationStatus>
    <ID>REDACTED</ID>
    <Status>Succeeded</Status>
    <HttpStatusCode>200</HttpStatusCode>
  </OperationStatus>
  <OperationStartedTime>2015-02-20T20:47:54Z</OperationStartedTime>
  <OperationCompletedTime>2015-02-20T20:48:38Z</OperationCompletedTime>
  <OperationKind>ShutdownRolesOperation</OperationKind>
</SubscriptionOperation>
```

Notice on line 26 that the operation type is “ShutdownRolesOperation”, and on line 28 the role name is VM3. That entry occurred after VM3 was automatically shut down.
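If you copy the `roleSetOperation` payload out of a log entry, a few lines of LINQ to XML can pull out the operation type and the affected roles. This is a rough sketch: the class and method names are hypothetical, the sample string is the inner payload from the entry above with the XML declaration removed, and elements are matched by local name so the namespace prefixes don’t matter.

```csharp
using System;
using System.Linq;
using System.Xml.Linq;

// Hypothetical helper for reading a roleSetOperation payload.
public static class RoleSetOperationParser
{
    // Returns "<operation type>: <comma-separated role names>".
    public static string Summarize(string roleSetOperationXml)
    {
        XElement root = XElement.Parse(roleSetOperationXml);

        // Match on local names so the d1p1/z prefixes are irrelevant.
        string type = root.Descendants()
            .First(e => e.Name.LocalName == "OperationType")
            .Value;

        var roles = root.Descendants()
            .Where(e => e.Name.LocalName == "Roles")
            .Elements()
            .Where(e => e.Name.LocalName == "Name")
            .Select(e => e.Value);

        return type + ": " + string.Join(", ", roles);
    }
}

public static class RoleSetOperationDemo
{
    public const string Sample = @"<z:anyType xmlns:i=""http://www.w3.org/2001/XMLSchema-instance""
    xmlns:d1p1=""http://schemas.microsoft.com/windowsazure""
    i:type=""d1p1:ShutdownRolesOperation""
    xmlns:z=""http://schemas.microsoft.com/2003/10/Serialization/"">
  <d1p1:OperationType>ShutdownRolesOperation</d1p1:OperationType>
  <d1p1:Roles>
    <d1p1:Name>VM3</d1p1:Name>
  </d1p1:Roles>
  <d1p1:PostShutdownAction>StoppedDeallocated</d1p1:PostShutdownAction>
</z:anyType>";

    public static void Main()
    {
        // Prints "ShutdownRolesOperation: VM3"
        Console.WriteLine(RoleSetOperationParser.Summarize(Sample));
    }
}
```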

Summary

This post demonstrated Autoscale in Azure turning virtual machines on and off according to the number of messages in a queue. This pattern can be hugely valuable for building scalable solutions while taking advantage of the elasticity of cloud resources. You only pay for what you use, and it’s in your best interest to design solutions to take advantage of that and avoid over-provisioning resources.

For More Information

Solving a throttling problem with Azure

Automating VM Customization tasks using Custom Script Extension