Another round of accessibility testing

Last fall I wrote about one of our accessibility testing tasks. We just finished another round, and since I saw this request on our discussion groups, I thought I should follow up.

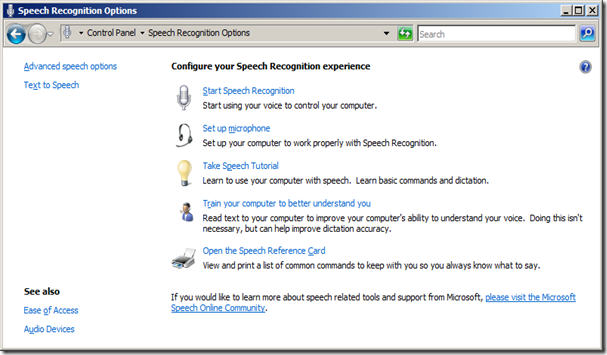

Last fall, we took a programmatic view of accessibility testing. We checked the properties of all the elements we have in OneNote to ensure screen readers and similar software can (literally) tell users what each control is and what it does. This round was the next step: using the built-in voice recognition software to manipulate OneNote. If you are using Vista and have a microphone handy, you can do this yourself. Here's a bare-bones quickstart:

- Make sure your microphone is properly selected:

- Start | Control Panel | Hardware and Sound

- Audio Devices and Themes (on my machine, this was called "Manage Audio Devices")

- Make sure your microphone is set as the default

- If not, right-click | Set as Default

- Test your features for accessibility

- Make sure OneNote is active when beginning

- Say "start listening" to enable speech

- Say "stop listening" to disable speech

- To drop a menu, say the menu name, e.g. "file" drops the File menu

- Say the menu item to access the desired menu item

- Say "show numbers" to have Vista display numbers for all accessible UI elements

- Say the number of the UI item you want to access

- Say "OK" to select the item

- Say "What can I say" to see a list of commands

- When you start testing, disconnect the mouse and keyboard.

That last step is a doozy - manipulating controls on a computer with no mouse and keyboard is a completely different experience. The toughest part for me was the "Scroll up" and "Scroll down" commands. For some reason, they did not click in my mind when I went through the training, and I struggled until I used the "Speech Reference Card" that is available.

The good news is that everything I set out to do (cut and paste between other apps and OneNote, manipulate my features in OneNote, share a notebook, etc.) was possible. Across the team, we did find a few bugs that we are planning to fix, but I was very happy with the results of this testing.

It's pretty hard to describe what you have to do with only audio input to a computer. Even if you don't have a microphone, I suggest working through the Speech Tutorial to see how a user with no mouse or keyboard uses a computer.
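For contrast with the voice-driven pass, here is a minimal sketch of what a programmatic property check like the one from last fall could look like. It is not OneNote's actual test code: the `UIElement` class, its fields, the control IDs and the `find_accessibility_gaps` helper are all hypothetical, and a real check would read these properties from the platform's accessibility API rather than from hand-built objects.

```python
from dataclasses import dataclass


@dataclass
class UIElement:
    """Hypothetical stand-in for the accessibility properties of one control."""
    control_id: str
    name: str = ""         # what a screen reader would announce
    role: str = ""         # what kind of control it is (button, menu, ...)
    description: str = ""  # what the control does


def find_accessibility_gaps(elements):
    """Map each control ID to the list of properties it is missing."""
    gaps = {}
    for element in elements:
        missing = [
            prop
            for prop in ("name", "role", "description")
            if not getattr(element, prop).strip()
        ]
        if missing:
            gaps[element.control_id] = missing
    return gaps


# Made-up sample data: one fully described control, one unlabeled one.
elements = [
    UIElement("btn_new_page", name="New Page", role="button",
              description="Adds a page to the current section"),
    UIElement("btn_unlabeled", role="button"),
]

print(find_accessibility_gaps(elements))
# prints {'btn_unlabeled': ['name', 'description']}
```

A check like this only shows that the properties exist. It cannot tell you whether the feature is actually usable by voice, which is why a hands-on round is still worth doing.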

Questions, comments, concerns and criticisms always welcome,

John