V is for… Velocity

Velocity is the code name (and a cool code name at that) for a highly scalable, in-memory cache currently in a Community Technology Preview (CTP) stage. The objective of Velocity is to increase performance by letting your applications read data from the cache rather than making expensive calls back to the data source, whether that is a traditional relational database management system or an on-premises or cloud-hosted service.

Velocity Physical and Logical Models

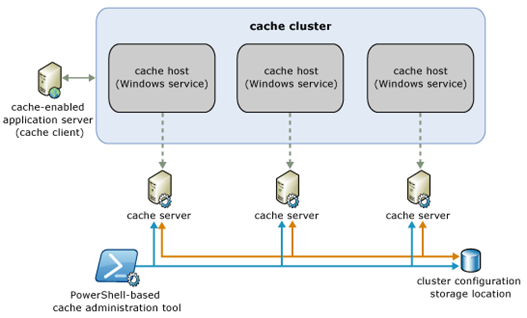

Physically, Velocity runs as a service (the cache host service) on one or more servers, known as cache servers. Multiple cache servers are arranged in a ring, the cache cluster, and communicate with one another to handle requests for retrieving, storing, and distributing data. The cluster configuration itself may be stored in SQL Server or in a file share.

To the consumer of the cache, the cache cluster is the single entry point; both the number of servers involved and the actual distribution of data among those servers are immaterial. The image below shows the relationship of the client to the cache cluster and servers; note that a PowerShell administration tool and cmdlets are also provided to configure the cluster.

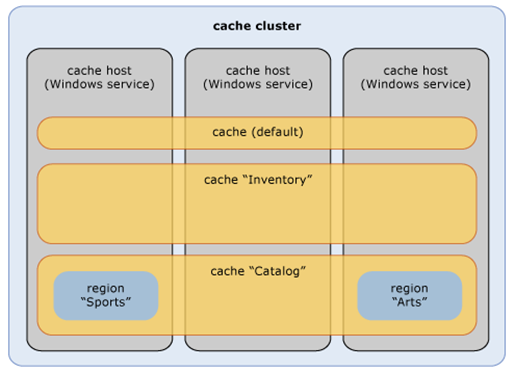

The logical model of a cache cluster comprises three parts:

- named cache

- region

- cache item

A named cache is a logical grouping of data, somewhat like a database, and is defined by a name and an expiration and eviction policy (which determines when items expire and how they are removed from the cache). You can also enable high availability, which automatically maintains secondary copies of your objects. The secondary copies are kept in sync (strong consistency) and can assume the primary role if the cache host holding the primary object fails.
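To make the expiration and eviction policy concrete, here is a minimal Python sketch of a named cache. It is a conceptual model, not the Velocity API: the `NamedCache` class, its parameters, and the choice of least-recently-used (LRU) eviction are all assumptions made for illustration.

```python
import time
from collections import OrderedDict


class NamedCache:
    """Toy model of a named cache: a name, a TTL, and LRU eviction (assumed)."""

    def __init__(self, name, ttl_seconds, max_items, clock=time.monotonic):
        self.name = name
        self.ttl_seconds = ttl_seconds
        self.max_items = max_items
        self._clock = clock              # injectable so expiry can be tested
        self._items = OrderedDict()      # key -> (value, expires_at)

    def put(self, key, value):
        self._items[key] = (value, self._clock() + self.ttl_seconds)
        self._items.move_to_end(key)     # newest entry is most recently used
        while len(self._items) > self.max_items:
            self._items.popitem(last=False)   # evict the least recently used

    def get(self, key):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:  # expired: drop it and report a miss
            del self._items[key]
            return None
        self._items.move_to_end(key)     # a read also counts as a use
        return value
```

Expiration answers "how long may an item live?", while eviction answers "what goes first when the cache is full?"; the two are independent settings on the same cache.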

A region is a logical subset of a named cache, something like a table within a database. Regions, which are optional, enable richer retrieval by allowing data to be tagged with one or more descriptive strings (in addition to the unique key for that data). Each region, however, is stored on a single node in the cluster and is not load-balanced, so there is a tradeoff between functionality and scalability.
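Tag-based retrieval can be pictured as a secondary index kept alongside the keyed items. The following Python sketch is a conceptual model only; `Region`, `get_by_tag`, and the sample keys are hypothetical and do not mirror the Velocity API.

```python
from collections import defaultdict


class Region:
    """Toy model of a region: keyed items plus a tag index."""

    def __init__(self, name):
        self.name = name
        self._items = {}                    # key -> value
        self._by_tag = defaultdict(set)     # tag -> keys carrying that tag
        self._tags_of = {}                  # key -> tags stored with the item

    def put(self, key, value, tags=()):
        self.remove(key)                    # drop old tag entries on overwrite
        self._items[key] = value
        self._tags_of[key] = set(tags)
        for tag in tags:
            self._by_tag[tag].add(key)

    def remove(self, key):
        for tag in self._tags_of.pop(key, set()):
            self._by_tag[tag].discard(key)
        self._items.pop(key, None)

    def get(self, key):
        return self._items.get(key)

    def get_by_tag(self, tag):
        """Return {key: value} for every item carrying the tag."""
        return {k: self._items[k] for k in self._by_tag.get(tag, ())}
```

Because the tag index has to see every item in the region, it is natural for a region to live on one node, which is the scalability cost mentioned above.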

A cache item is any serializable descendant of System.Object. Associated with each cache item are a key and other metadata, including version, creation timestamp, and time to live (TTL).

Data Taxonomy

When considering caching technology, it’s important to understand the nature of the data that you are caching. One possible classification uses three bins:

- Reference data

- Activity data

- Resource data

Reference data includes things like tax tables or a product catalog. The data is shared among multiple clients, but it’s essentially immutable, and when it does change, the change affects everyone (an updated product list, for instance). Such data lends itself quite well to partitioning, and in Velocity you can also use a local cache (a cache managed within the application process) to get even better performance for frequently accessed items.

Activity data consists of items that result from the processes that your application initiates. If you’re running an e-commerce site, for instance, the activity data would be things like a shopping cart or customer order. The data here is mutable, but it’s accessible only by a single user. The single access point still allows for partitioning of the data; here, however, high availability is generally required, since you don’t want to risk losing information about an activity while that activity is in progress.

Resource data is shared in both a read and write fashion by multiple clients. Inventory data is a classic example: say you’re booking a flight via an online service. As you view the flight options, someone else may be booking the last seat on that flight, so in these scenarios options for resolving concurrency issues are paramount. Velocity supports both optimistic concurrency (via version metadata) and pessimistic concurrency (via a locking mechanism).
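The difference between the two concurrency styles is easier to see in code. Below is a minimal Python model, not the Velocity API: `ResourceCache`, `VersionConflict`, `ItemLocked`, and the method names are hypothetical. An optimistic update succeeds only if the version is unchanged since the read; a pessimistic update requires the lock handle issued at read time.

```python
import itertools


class VersionConflict(Exception):
    """The item changed after the caller read it."""


class ItemLocked(Exception):
    """Another client holds the lock on this item."""


class ResourceCache:
    def __init__(self):
        self._items = {}                  # key -> (value, version)
        self._locks = {}                  # key -> lock handle
        self._handles = itertools.count(1)

    def get(self, key):
        """Return (value, version) so the caller can do a checked update."""
        return self._items[key]

    def put(self, key, value, expected_version=None):
        """Optimistic update: fail if the version moved since the read."""
        _, current = self._items.get(key, (None, 0))
        if expected_version is not None and expected_version != current:
            raise VersionConflict(key)
        self._items[key] = (value, current + 1)
        return current + 1

    def get_and_lock(self, key):
        """Pessimistic read: issue a lock handle, refuse a second locker."""
        value, _ = self._items[key]
        if key in self._locks:
            raise ItemLocked(key)
        handle = next(self._handles)
        self._locks[key] = handle
        return value, handle

    def put_and_unlock(self, key, value, handle):
        """Write and release, but only for the holder of the lock handle."""
        if self._locks.get(key) != handle:
            raise ItemLocked(key)
        del self._locks[key]
        return self.put(key, value)
```

In the flight example, two shoppers who both read version 1 of the last seat cannot both win: the second optimistic `put` raises `VersionConflict` and that client must re-read and retry.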

The nature of the data to be cached will lead you toward specific configuration options for your caches.

Client API

Using Velocity within your own applications is fairly straightforward. There are four assemblies that contain the various classes required by the Microsoft.Data.Caching namespace:

- CASBase.dll

- FabricCommon.dll

- CacheBaseLibrary.dll

- ClientLibrary.dll

When building client applications, you’ll need to add references to the last two of these assemblies within your Visual Studio project. You also need to supply some configuration information, either in the client config file or programmatically, to specify the location of a cache host (any one in the cluster will do) as well as whether you want the client to act as a simple client or a routing client.

A routing client maintains a lookup table mapping data items to the specific cache host where the object is physically located, so the data is directly retrieved from that server.

A simple client connects to only a single cache host and makes all requests through that host. As a result, the host needs to make additional requests to reach the cache host that physically stores the data. Simple clients should be used only if there are topology issues such that a client does not have visibility to all the cache hosts in the cluster, which is generally a rare scenario.
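The cost difference between the two client types comes down to how many hosts a request touches. The Python sketch below models that under stated assumptions: all class names are hypothetical, and the CRC32-based partitioning in `owner_of` merely stands in for whatever lookup table the real routing client maintains.

```python
import zlib


class CacheHost:
    def __init__(self, name):
        self.name = name
        self.store = {}
        self.requests = 0     # how many requests this host has handled


class Cluster:
    def __init__(self, host_names):
        self.hosts = [CacheHost(n) for n in host_names]

    def owner_of(self, key):
        # Deterministic hash partitioning: an assumption, not Velocity's scheme.
        return self.hosts[zlib.crc32(key.encode()) % len(self.hosts)]


class RoutingClient:
    """Knows the key-to-host mapping and talks to the owner directly."""

    def __init__(self, cluster):
        self.cluster = cluster

    def get(self, key):
        owner = self.cluster.owner_of(key)
        owner.requests += 1
        return owner.store.get(key)


class SimpleClient:
    """Sends everything to one host, which forwards when it is not the owner."""

    def __init__(self, cluster, gateway):
        self.cluster = cluster
        self.gateway = gateway

    def get(self, key):
        self.gateway.requests += 1
        owner = self.cluster.owner_of(key)
        if owner is not self.gateway:
            owner.requests += 1          # the extra server-to-server hop
        return owner.store.get(key)
```

With the routing client every read costs one host request; with the simple client every read that the gateway does not own costs two, and the gateway handles all traffic.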

Within the client configuration file, you can also indicate whether a local cache should be maintained. The local cache is stored in the address space of the client application and should be used only for data that rarely (if ever) changes, to reduce the occurrence of stale data.
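Why a local cache suits only slow-changing data can be shown with a small Python model. This is a sketch, not Velocity's implementation: `LocalCacheClient` is hypothetical, a plain dictionary stands in for the cache cluster, and the explicit `invalidate` method is an assumed way to refresh an entry.

```python
class LocalCacheClient:
    """Toy client that keeps an in-process copy in front of the cluster."""

    def __init__(self, cluster_store):
        self._cluster = cluster_store    # dict standing in for the cache cluster
        self._local = {}                 # lives in the client's address space
        self.cluster_reads = 0

    def get(self, key):
        if key in self._local:           # local hit: no network round trip
            return self._local[key]
        self.cluster_reads += 1
        value = self._cluster.get(key)
        if value is not None:
            self._local[key] = value
        return value

    def invalidate(self, key):
        """Forget the local copy so the next read goes back to the cluster."""
        self._local.pop(key, None)


cluster = {"tax-rate": 0.07}
client = LocalCacheClient(cluster)
client.get("tax-rate")                   # first read goes to the cluster
cluster["tax-rate"] = 0.08               # the data changes in the cluster
stale = client.get("tax-rate")           # still 0.07: served from the local copy
```

The second read is fast precisely because it never checks the cluster, which is also why it can return stale data.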

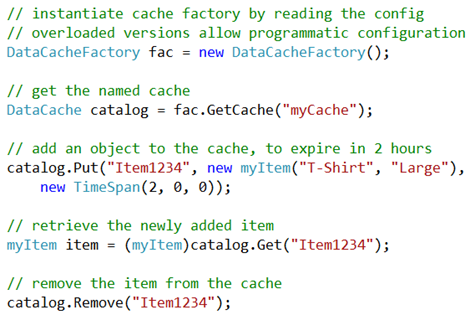

Within your client code, the access pattern looks something like this:
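The pattern is: ask the cache first, fall back to the data source on a miss, then store the result for next time. What follows is a minimal sketch in Python rather than the .NET client API; `FakeCache`, `load_product`, and `query_database` are hypothetical names, and only `get` and `put` correspond to the Get and Put calls of the client library.

```python
class FakeCache:
    """Hypothetical stand-in for the cache object a client obtains."""

    def __init__(self):
        self._items = {}

    def get(self, key):
        return self._items.get(key)      # None signals a cache miss

    def put(self, key, value):
        self._items[key] = value


def load_product(cache, product_id, query_database):
    """Return the product, touching the data source only on a cache miss."""
    key = f"product:{product_id}"
    product = cache.get(key)
    if product is None:
        product = query_database(product_id)   # the expensive call
        cache.put(key, product)
    return product
```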

The code above does not use regions; however, there are overloads of Get and Put that address regions as well. There are also ‘locking’ versions of Get and Put to provide for pessimistic concurrency, where the object is locked from access by other clients.

Clients can also subscribe to callbacks to be informed of additions, modifications, and deletions of items and regions. Logging is available as well, with levels of error, warning, information, and verbose.
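The callback mechanism follows the familiar observer pattern. Here is a minimal Python sketch of the idea, covering items only; `NotifyingCache`, `subscribe`, and the event names are hypothetical and are not taken from the Velocity API.

```python
from collections import defaultdict


class NotifyingCache:
    """Toy cache that tells subscribers when items change."""

    def __init__(self):
        self._items = {}
        self._subscribers = defaultdict(list)   # event name -> callbacks

    def subscribe(self, event, callback):
        """event is one of 'added', 'modified', or 'removed'."""
        self._subscribers[event].append(callback)

    def _notify(self, event, key):
        for callback in self._subscribers[event]:
            callback(event, key)

    def put(self, key, value):
        event = "modified" if key in self._items else "added"
        self._items[key] = value
        self._notify(event, key)

    def remove(self, key):
        if key in self._items:
            del self._items[key]
            self._notify("removed", key)
```

A client that keeps derived state, such as a local copy of an item, can subscribe to `modified` and `removed` to know when that state is out of date.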

References

MSDN Data Platform Developer Center

Community Technology Preview (CTP) 3 download

Sample Code (CTP 2 – needs modification to work with CTP 3)