Realtime Brainwave Data with WPF

Update: Code is posted here.

A wonderful thing happened since my last post on this project. The friendly folks at Emotiv listened to their devoted users and opened up the raw electrode data from their amazing EPOC neuroheadset (just $299). That’s a 14-channel fire hose of brainwave data, streaming wirelessly at a sampling rate of 128 Hz. I wanted to see if I could whip up a quick WPF control that would display all that data in realtime.

As it turned out, “quick” wasn’t in the cards -- delivering new Visual Studio 2010 content took priority -- but finally, I have a working prototype that shows it’s doable. The code’s not quite ready for public consumption, but here’s a teaser.

I wrote a managed wrapper in C#, named EmoEngineClient, that polls the neuroheadset for state and also collects the electrode data. That was the easy part. Much more challenging was designing the rendering pipeline. A couple of seconds of data across 14 channels adds up to several thousand points, and painting all of those within the 7.8 ms between samples (1/128 s) is not straightforward. For highest performance, you’d probably write a pixel shader and do everything on the GPU, but I’m not a Shader Language jock, and I want to use native WPF features. The next best thing is to draw each channel’s data trace with a Polyline, and as long as I do very little clipping, it should render very quickly.
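
As a rough sanity check on those numbers, here is a small language-neutral sketch in Python (not part of the C# project). The 128 Hz rate and 14 channels come from the headset specs above; the 2-second window is an assumption standing in for "a couple of seconds", and the function names are made up for illustration.

```python
SAMPLE_RATE_HZ = 128    # EPOC sampling rate, from the post
CHANNELS = 14           # electrode channels, from the post
WINDOW_SECONDS = 2.0    # assumption: "a couple of seconds" of visible data


def sample_period_ms(sample_rate_hz):
    """Time between consecutive samples, in milliseconds."""
    return 1000.0 / sample_rate_hz


def points_in_window(sample_rate_hz, channels, window_seconds):
    """Total number of points on screen across all channels."""
    return int(sample_rate_hz * window_seconds) * channels


period = sample_period_ms(SAMPLE_RATE_HZ)
points = points_in_window(SAMPLE_RATE_HZ, CHANNELS, WINDOW_SECONDS)
print(f"{period:.4f} ms between samples, {points} points on screen")
```

With these inputs the period works out to 7.8125 ms and the visible window holds 3584 points, which is where the "several thousand points" figure comes from.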

Even so, that’s too much data for the UI thread to render and still remain responsive to user input. The trick is to render each Polyline on its own thread, which is simpler than you might think, but still tricky.

Fortunately, Dwayne Need showed us how to do this in Multithreaded UI: HostVisual. Each signal trace is a Polyline inside a custom control, named TimeSeriesControl. Using Dwayne’s code, each TimeSeriesControl instance is created on its own thread, then attached to the visual tree. By using Dwayne’s shim classes, these controls fully participate in the layout system, even though they render on separate threads. This works remarkably well, and it turns out to be fast enough to keep up with the electrode data in realtime.

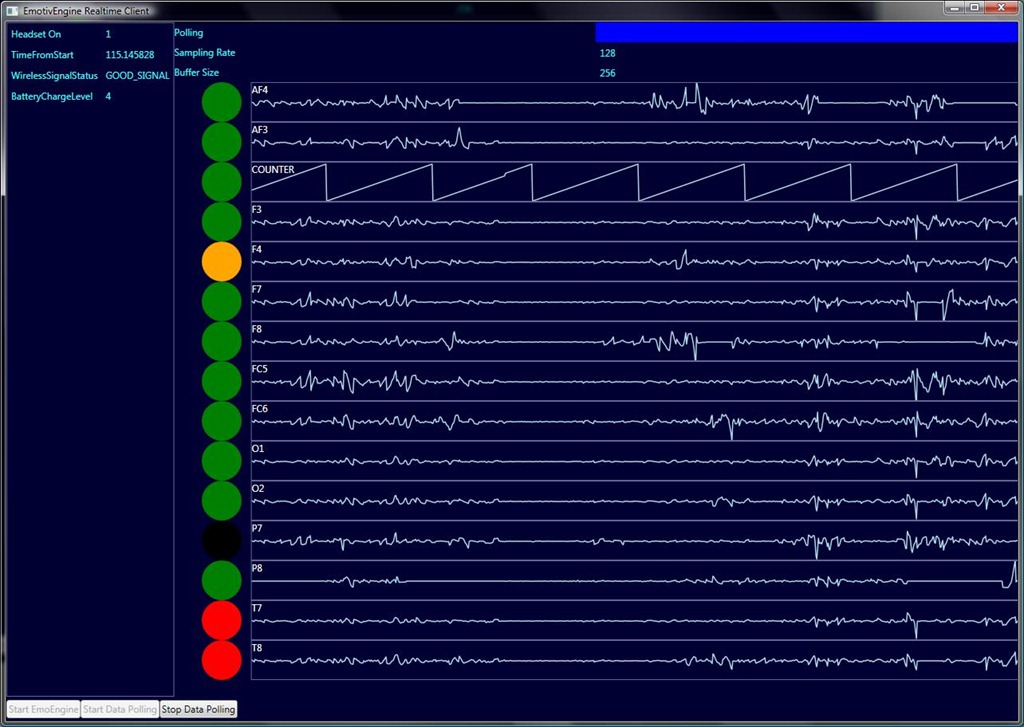

Here’s the test app as it appears currently.

Realtime brainwave data from the EPOC neuroheadset. Electrode contact quality for each channel is shown as a colored circle.

Straightforward WPF databinding connects hardware status data to UI elements. Signal traces are fully repainted each time a new data frame is pushed by the EmoEngine. There’s probably room for optimization here; instead of repainting the whole trace each frame, I could paint only the new frame data, but this would add some complexity. My ultimate goal is to achieve the dead-smooth scrolling you see in medical devices, but I’m not quite there yet. Still, all of WPF’s nice styling and layout features apply to the realtime data stream – I especially like the anti-aliased lines in the signal traces.
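
The trade-off between a full repaint and painting only the new frame can be sketched with a fixed-length sliding window. This is a language-neutral illustration in Python, not the TimeSeriesControl code; `TraceBuffer` and its methods are hypothetical names, and the capacity of 4 is chosen only to keep the example readable.

```python
from collections import deque


class TraceBuffer:
    """Fixed-length sliding window of samples for one channel (hypothetical helper)."""

    def __init__(self, capacity):
        # A deque with maxlen silently drops the oldest samples as new ones arrive.
        self._samples = deque(maxlen=capacity)

    def push(self, new_samples):
        """Append one data frame and return only the samples that are new on screen."""
        new_samples = list(new_samples)
        self._samples.extend(new_samples)
        # If a frame is longer than the window, only its tail is still visible.
        return new_samples[-self._samples.maxlen:]

    def snapshot(self):
        """Return the whole visible window, as needed for a full repaint."""
        return list(self._samples)


trace = TraceBuffer(capacity=4)
first = trace.push([1, 2, 3])    # incremental work: three new points
second = trace.push([4, 5])      # incremental work: two new points
window = trace.snapshot()        # full repaint: every point in the window
print(second, window)
```

The incremental path touches only the points returned by `push`, but the renderer then also has to scroll or shift what is already on screen, which is the added complexity mentioned above.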

Because I plan to drive various visualizations with realtime brainwave data, I need to do some fairly heavy signal conditioning, which means I need to transform these time-series signals into frequency space. I looked around and found a good Fast Fourier Transform implementation in Math.NET, so EmoEngineClient provides FFT and Inverse FFT services. But that’s a story for another blog post.
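
To make the frequency-space idea concrete, here is a minimal Python sketch of one kind of signal conditioning: a low-pass filter that transforms a signal, zeroes the bins above a cutoff, and transforms back. It does not use or imitate the Math.NET API; the radix-2 FFT is a textbook stand-in, and the function names, the 10 Hz and 40 Hz test tones, and the 20 Hz cutoff are all assumptions chosen for illustration.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 FFT; the input length must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def ifft(spectrum):
    """Inverse FFT via the conjugate trick: conj(fft(conj(X))) / n."""
    n = len(spectrum)
    transformed = fft([v.conjugate() for v in spectrum])
    return [v.conjugate() / n for v in transformed]


def low_pass(samples, sample_rate_hz, cutoff_hz):
    """Zero every frequency bin above cutoff_hz and return the real signal."""
    n = len(samples)
    spectrum = fft(samples)
    for k in range(n):
        # Bins k and n - k carry the positive and negative halves of one frequency.
        freq = min(k, n - k) * sample_rate_hz / n
        if freq > cutoff_hz:
            spectrum[k] = 0j
    return [v.real for v in ifft(spectrum)]


# One second of synthetic data at 128 Hz: a 10 Hz tone plus a 40 Hz tone.
n = 128
clean = [math.sin(2 * math.pi * 10 * i / n) for i in range(n)]
noisy = [c + 0.5 * math.sin(2 * math.pi * 40 * i / n) for i, c in enumerate(clean)]
filtered = low_pass(noisy, sample_rate_hz=128, cutoff_hz=20)
worst_error = max(abs(a - b) for a, b in zip(filtered, clean))
print(f"largest deviation from the 10 Hz tone: {worst_error:.2e}")
```

Zeroing bins outright is the bluntest possible filter and works cleanly here only because both tones land exactly on FFT bins; real electrode data would usually call for windowing or a smoother filter response.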

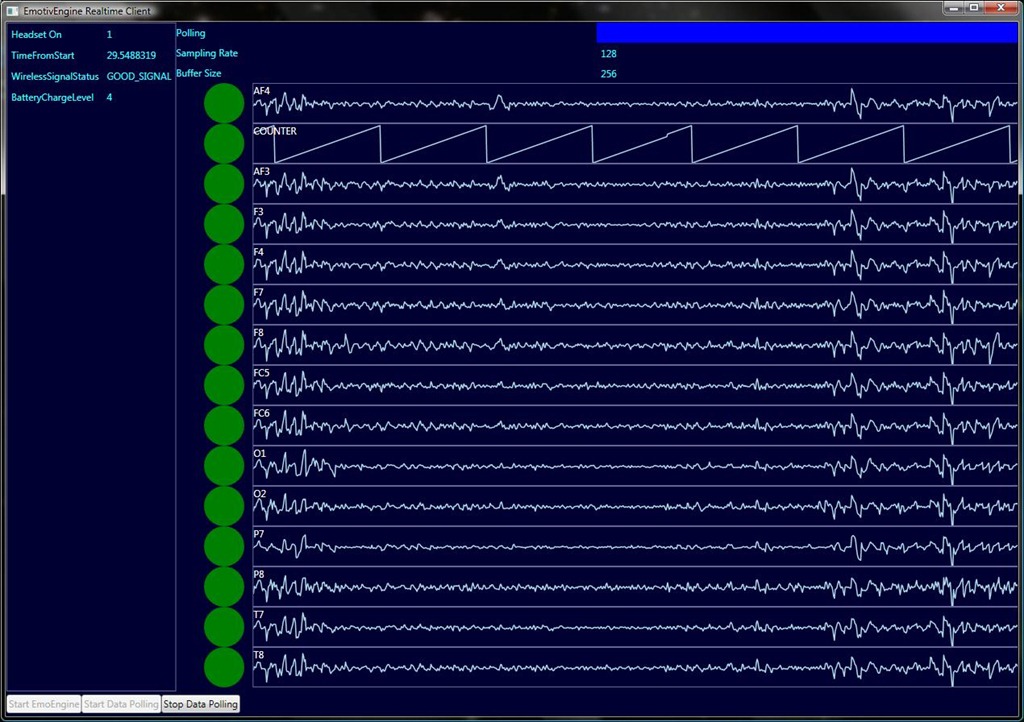

Here’s some more gratuitous brainwave data.

Realtime brainwave data from the EPOC neuroheadset.
