Principles 4: End-to-End Development Process

Series Index

- Principles 1: The Essence of Driving – A Crash Course in Project Management

- Principles 2: Principles of Software Testing

- Principles 3: Principles of Software Development

- Principles 4: End-to-End Development Process

- Principles 5: End-to-End Development Process (for Large Projects)


This fourth post discusses an end-to-end development process. Obviously, there are many ways to develop software. While many of the basic principles apply almost universally, every sufficiently autonomous organization needs to figure out what works for it and then refine it progressively.

It is important to note that this is the process we used to manage several projects, and it resulted in the delivery of high-quality products in a controlled manner and on time (ahead of time in a few cases). In other words, this is a real, proven process, not a theoretical construct. I’ll show two versions of the process: a simple one for smaller orgs, which this post covers, and a more involved one for larger orgs, which I will cover in the next post.

Guiding Principles

Before going further, I want to reiterate the Principles of Software Development. These principles are the underlying “Constitution”. In summary, they are:

- Everybody on the team fully understands the plan.

- Deliver features in a gradual, closed-loop manner.

- Define release criteria at the start of the cycle. Track visibly.

- Exercise defect prevention whenever possible.

- Have a stable, fully-transparent, committed schedule from the start.

- No code while planning the “What”. No code without spec.

- Fully working and timed setup on day 1 of M0.

- Strict and reliable reverse- and forward-integration gates from day 1 of M0.

- Start together, work together, finish together.

- No work happens outside of the main development cycle.

- Every major system has an owner.

Goals

At the high level, we want to be able to produce high-quality, compelling software, in a well-organized, intentional, cost-effective, and predictable manner. In order to pull that off, we need the following:

A. Commitment, at all levels of the organization, to “do the right thing”. Full management sponsorship.

B. A well-designed organization.

C. A well-designed “business cycle”.

D. A well-designed development process.

E. A well-designed and working “engineering factory”.

F. A high-powered and high-morale team.

This post talks about “D”, but it goes without saying that “A” to “F” form a system of communicating vessels: they need to be designed, implemented, and refined together.

This post also assumes that you have a working “Planning Process” that generates compelling product value propositions. Clearly, without a compelling product that addresses a customer need, it doesn’t matter how excellent your delivery is. There are various ways to plan a product. At Microsoft, we use a number of different approaches, with many folks now gravitating towards the so-called “Sinofsky model”. At any rate, this post does not discuss the planning process (I may do so in a future post).

In order to deliver a high-quality product, you need to plan and design before you code. On the other hand, you don’t want to get stuck in planning for a prolonged period of time: time may erode the value proposition of your product, and you need fast and frequent customer feedback because you may be working in the wrong direction. We need to “marry” these two competing priorities, which makes this an optimization problem. What follows is our solution to it, but solutions will vary based on specific product, business, industry, and team considerations. Treat this as a starting point: take it and make it your own.

Process 1 – Simple Projects

We used this simple version of the process to develop TestApi, the API library for test and tool developers. TestApi is a relatively small and simple project. With this process, we released five versions of the library, all of them on time or ahead of time.

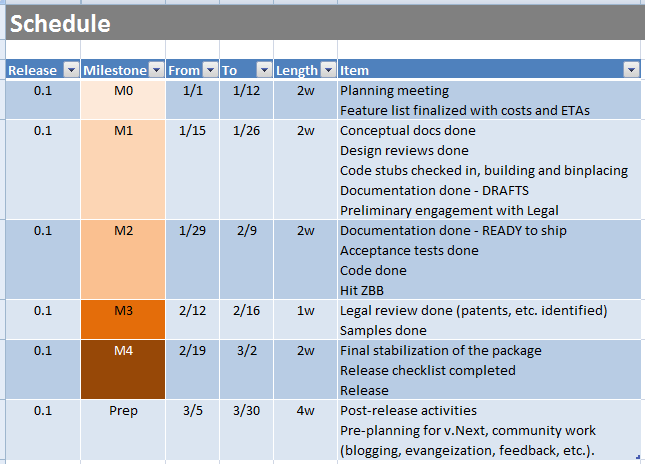

The development schedule looks as follows:

Figure 1. Simple development schedule

The schedule consists of five major milestones (M0 to M4), followed by a preparation period:

- M0 – Planning – in this phase, we finalize the list of features we’d like to deliver in the release. Every feature has an owner. All owners understand the schedule. The necessary prototyping is done. At the end of M0, we have a backlog for the release and we commit to it. We do not allow feature creep after the end of M0.

- M1 – Design – in this phase, every owner delivers a “conceptual document” that explains the usage of their feature, accompanied, if necessary, by a design document. In the case of an API library such as TestApi, these are an API usage document and an API design document. The usage and design get reviewed and approved by the architecture review body (3 people in the case of TestApi). Once the design has been approved, the owner checks in the empty stubs (e.g. of the API) and the corresponding acceptance tests, together with an initial version of the XML documentation, and gets the stubs, tests, and documentation to build cleanly. The documentation (both the docs generated from XML comments and the conceptual documentation) gets sent to the Documentation team for editing. The Legal team (LCA) gets engaged.

- M2 – Implementation – in this phase, the owner implements the tests and the code, fixes the documentation (based on editorial feedback from the Docs team), and drives to ZBB (zero bugs).

- M3 – LCA and Samples – in this phase, the owner implements stand-alone samples (if applicable) and discusses the product with LCA (legal), if applicable. Legal signs off.

- M4 – Stabilization and Release – final stabilization, the release checklist (we have a predefined checklist), and the final release.

- Prep – At the end of every release cycle, we have about 4 weeks of “padding”. During that period, we do various post-release activities (blogging, etc.), we invest in our engineering “factory”, and we engage in preliminary planning for the next release (identifying possible participants, defining dates, etc.). This is a fairly relaxed period, and that is by design.

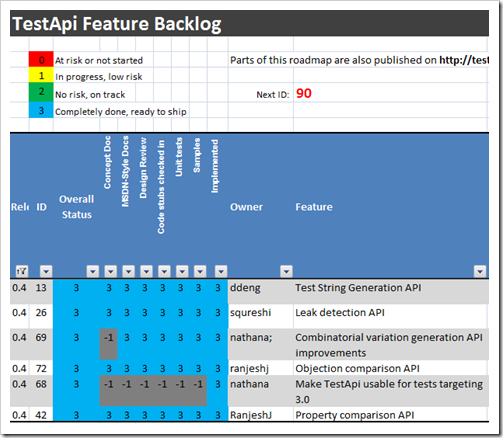

All features associated with a release are tracked in a table similar to the TestApi Feature Backlog table presented in this post:

Figure 2. Feature readiness checklist
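Figure 2 shows the real table. As a rough sketch only, here is one way such a readiness checklist could be represented in code, assuming a checklist derived from the milestone descriptions above. The `Feature` class, the `CHECKLIST` item names, and the example feature are hypothetical; they are not the columns of the actual TestApi table.

```python
from dataclasses import dataclass, field

# Hypothetical checklist items, one per deliverable named in the milestone
# descriptions; the real TestApi table may use different columns.
CHECKLIST = (
    "owner_assigned",       # M0: every feature has an owner
    "design_approved",      # M1: usage/design docs approved by the review body
    "stubs_and_tests_in",   # M1: empty stubs + acceptance tests build cleanly
    "docs_sent_for_edit",   # M1: documentation sent to the Docs team
    "implemented_zbb",      # M2: code done, driven to zero bugs
    "samples_done",         # M3: stand-alone samples (if applicable)
    "legal_signoff",        # M3: LCA sign-off
)


@dataclass
class Feature:
    name: str
    owner: str
    done: set = field(default_factory=set)

    def complete(self, item: str) -> None:
        # Reject items that are not on the agreed checklist.
        if item not in CHECKLIST:
            raise ValueError(f"unknown checklist item: {item}")
        self.done.add(item)

    def missing(self) -> list:
        # Open items, returned in milestone order.
        return [item for item in CHECKLIST if item not in self.done]

    def ready(self) -> bool:
        return not self.missing()


# Hypothetical usage.
feature = Feature(name="SampleFeature", owner="owner1")
feature.complete("owner_assigned")
feature.complete("design_approved")
print(feature.missing()[0])  # stubs_and_tests_in
```

Keeping the items in milestone order lets `missing()` double as a "what's next" list for the feature owner.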

All source code is static-analysis clean by default (FxCop, StyleCop, etc.): checking in a violation results in a build break. We also maintain an FAQ for common questions (e.g. “I can’t be done with a feature on time, what do I do?”) that evolves with every release.
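A gate of this kind can be sketched as below, assuming the analyzers' output has already been parsed into a list of violations. The `Violation` type and the `gate` function are hypothetical, and parsing real FxCop or StyleCop reports is not shown. The property that matters is that any violation, however minor, fails the check-in.

```python
import sys
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    # Hypothetical, analyzer-agnostic record of one static-analysis finding.
    rule_id: str
    file: str
    line: int
    message: str


def gate(violations: list) -> int:
    """Return an exit code for the check-in build.

    0 lets the check-in through; 1 breaks the build. There is no
    warning tier and no baseline: every violation counts.
    """
    for v in violations:
        print(f"{v.file}({v.line}): {v.rule_id}: {v.message}", file=sys.stderr)
    return 1 if violations else 0
```

A build script would run the analyzers, parse their output, and finish with `sys.exit(gate(violations))`.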

As a general rule, the timelines above are rigorously adhered to. If you “fall off the train” (meaning you fail to meet a deadline), you don’t get back on the train until the next version, which is never more than 3 months away (and, of course, you tend to deliver a larger payload for it). We have a dedicated project manager who drives every release (for TestApi, that was Alexis Roosa).
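The “train” rule can be expressed as a small scheduling function. This is a sketch under stated assumptions: the `Release` type, `assign_release`, and the dates are hypothetical, and a single deadline per release stands in for the per-milestone deadlines.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Release:
    version: str
    deadline: date  # hypothetical: date a feature must be done to ship in this release


def assign_release(done_on: date, trains: list) -> Release:
    """Return the earliest release whose deadline the feature meets.

    A feature that misses a deadline is not squeezed in late; it
    simply rides the next train.
    """
    for train in sorted(trains, key=lambda r: r.deadline):
        if done_on <= train.deadline:
            return train
    raise ValueError("no scheduled release can take this feature yet")


# Illustrative trains, roughly 3 months apart.
trains = [Release("v1", date(2010, 3, 1)), Release("v2", date(2010, 6, 1))]
print(assign_release(date(2010, 3, 5), trains).version)  # v2
```

Because the next train is always close, missing a deadline costs a few weeks rather than a year, which is what makes the strict rule tolerable.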

The tasks associated with every milestone are done in the order in which they are presented here. For example, we write the acceptance tests against the empty stubs before we write the actual code. Another thing that should be obvious is that we spend a lot of effort on planning and design. We are extremely rigorous in our design reviews: we look at the API from many different angles (we consider different options and discuss naming, usage, simplicity, statelessness, future evolvability, etc.), employ scenario-based design, and produce various short-lived and long-lived documents. We have found that this saves us a lot of time during development and significantly increases our confidence in our dates.

Simple, Predictable, Agile

The simplicity and predictability of this process have many positive effects, from team morale and cohesion to on-time delivery with high quality. To date, we have always been done with M4 on time or ahead of time! The process does, however, have a waterfall-ish feel, so every once in a while I get questions about agility. Put simply, we believe that design and planning are important, but so are time to market and frequent customer feedback. We resolve that tension by having short release cycles (3 months or less). There is nothing preventing us from making these cycles even shorter, but we have found that 3 months works well for us.

My experience shows that you can’t achieve agility by cutting proven engineering practices such as solid design and code reviews; that only leads to fire drills, death marches, and low quality. You achieve agility by having a reasonably rapid development cycle, aligned with solid business considerations.

To Be Continued…

In my next post, I will introduce a process that is suitable for larger, more complex products and organizations.