Azure ARM: SQL Server High-Availability and Multi-Datacenter Disaster Recovery with Internal Load Balancers (ILB)

Introduction

I have already published several articles on this blog about high availability (HA) and disaster recovery (DR) for SQL Server in Azure Virtual Machines (VMs). This new article covers the Azure Resource Manager (ARM) evolution of the same HA/DR architecture, based on AlwaysOn Availability Groups (AG) and the Azure Internal Load Balancer (ILB). The Azure Service Management (ASM) version of this article is in the blog post below:

SQL Server 2014 High-Availability and Multi-Datacenter Disaster Recovery with Multiple Azure ILBs

ARM brings many improvements, relaxed limitations and new features compared to legacy ASM, so it is definitely the API you should use going forward. The most important feature ARM provides in this specific context is the possibility to have 3 “Fault Domains” (FD) for each “Availability Set” (AS): if you have 3 cluster nodes, for example 2 SQL Server instances and 1 cluster node acting as witness, they will be evenly distributed across 3 different Azure racks, so only 1 cluster VM will be down in case of a single Azure rack failure. It is worth remembering that ASM only provided 2 FDs. Still today, if you want high availability for SQL Server deployed in Azure VMs, you have to use AlwaysOn Availability Groups (AG), since database mirroring is a deprecated feature and Failover Clustering (AlwaysOn FCI) is not supported yet, because shared storage is not available in Azure VMs. Please also note that Azure Files (SMB share) is *not* supported as a Cluster File Share Witness. For more information on supportability, you can read the article below:

Microsoft server software support for Microsoft Azure virtual machines

https://support.microsoft.com/en-us/kb/2721672
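To make the fault-domain argument above concrete, here is a minimal Python sketch. It is only an illustration, not an Azure API: the function name is hypothetical, and it assumes that VMs in an Availability Set are spread as evenly as possible across the available fault domains.

```python
from math import ceil


def worst_case_vms_down(vm_count: int, fault_domains: int) -> int:
    """VMs lost when the most populated fault domain (rack) fails,
    assuming an even spread of VMs across fault domains."""
    return ceil(vm_count / fault_domains)


# Compare the 2 fault domains of ASM with the 3 fault domains of ARM
# for a 3-node cluster (2 SQL Server VMs + 1 witness node).
for label, fds in (("ASM", 2), ("ARM", 3)):
    down = worst_case_vms_down(3, fds)
    print(f"{label}: {fds} fault domains -> up to {down} of 3 cluster VMs down")
```

With 2 fault domains, a single rack failure can take down 2 of the 3 cluster VMs; with 3 fault domains, it takes down only 1.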

IMPORTANT: Cloud Service is a deprecated concept and is no longer supported in the Azure ARM API:

Azure Subscription and Service Limits, Quotas, and Constraints

https://azure.microsoft.com/en-us/documentation/articles/azure-subscription-service-limits

ARM Architecture

Internal Load Balancer (ILB) was also available with the ASM API, but ARM brought Azure networking several steps forward, including full automation and scripting support for Azure Virtual Network (VNET) and VPN tunnel creation. For complex/advanced VPN topologies, see my blog post below:

Hub&Spoke, Daisy-Chain and Full-Mesh VNET topologies in Azure ARM using VPN

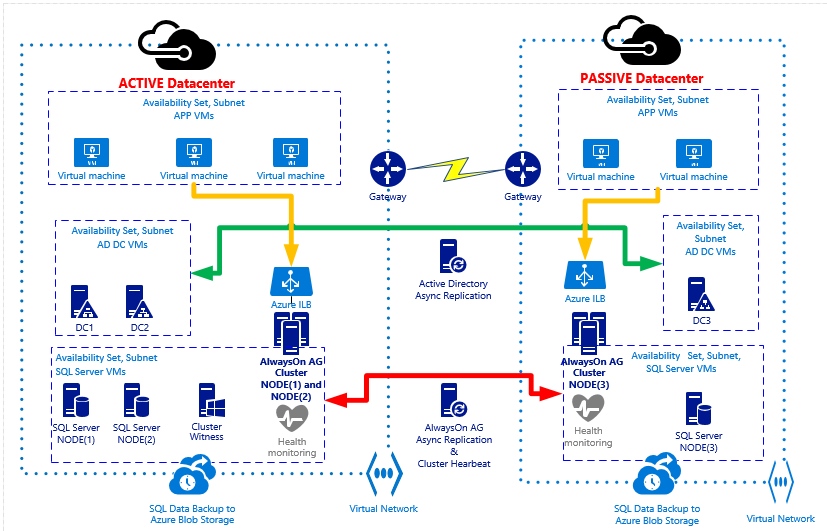

In the diagram below I reported the general architecture of the HA/DR solution for SQL Server, using two different Azure datacenters and Internal Load Balancers (ILBs) in ARM mode:

Let me recap here the main architectural choices and points of attention in Azure:

- At the time of writing this blog post, only one ILB is supported per “Availability Set” (AS), and therefore only one AG Listener (if an ILB is used).

- No public endpoint is exposed over the Internet for SQL Server: in both the primary and the secondary sites I used an Azure ILB (one per site), so SQL Server is only accessible from services and VMs in the VNETs used here.

- I created one VNET in the primary Azure datacenter and one VNET in the secondary Azure datacenter, then I connected them using Azure VPN.

- In this specific scenario, the high-performance Azure VPN Gateway has been used, since it is more than enough in terms of supported bandwidth. Be sure to review its characteristics before deciding to adopt it:

Azure Virtual Network Gateway Improvements

http://azure.microsoft.com/blog/2014/12/02/azure-virtual-network-gateway-improvements

- If this VPN Gateway does not satisfy your bandwidth requirements, you need to consider Azure ExpressRoute, as indicated in the link below:

ExpressRoute or Virtual Network VPN – What’s right for me?

http://azure.microsoft.com/blog/2014/06/10/expressroute-or-virtual-network-vpn-whats-right-for-me

- In the primary Azure datacenter, I installed two SQL Server 2014 VMs in the same “Availability Set” (AS) to ensure 99.95% availability, as required by the Azure SLA. I also installed a third cluster node VM, using minimal resources and with no SQL Server installed, since its only purpose is to provide an odd number of quorum votes. All three VMs are in the same VNET and the same subnet. Each SQL VM uses static IP addresses inside the VNET.

Manage the availability of virtual machines

http://azure.microsoft.com/en-us/documentation/articles/virtual-machines-manage-availability

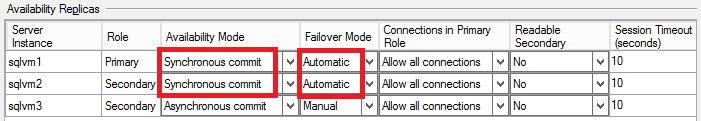

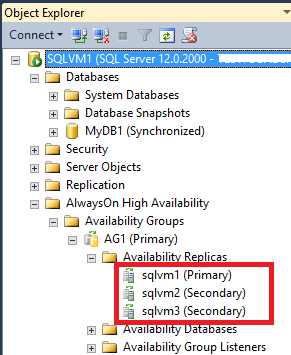

- For the above SQL Server instances, I configured synchronous data replication with automatic failover: in this way, in case of a single SQL VM failure, AlwaysOn AG will take over and fail over to the second SQL Server VM with no data loss and no manual intervention.

NOTE: nothing here prevents you from allowing readable secondaries; you can change this AlwaysOn configuration setting if required by your specific scenario.

- In the secondary Azure datacenter, I installed a third, single SQL Server VM in its own “Availability Set” (AS), VNET and subnet, using a static IP address. This is the minimum requirement for an effective DR solution, but if you want more protection you can also install a fourth SQL VM here to maintain HA even in case of complete primary datacenter loss.

- IMPORTANT: Be aware that you can only specify the AS at VM creation time, so I recommend using one even if you are going to install only a single VM.

- As you can see in the picture above, I used asynchronous data replication for the third SQL instance for the following reasons:

  - SQL Server 2014 allows up to 3 synchronous replicas (1 primary + 2 secondaries), but only 2 replicas can be configured for automatic failover;

  - Enabling synchronous data replication between remote datacenters would hurt database performance on the primary instance, since each transaction would also have to be committed by the remote SQL Server instance.

- IMPORTANT: Asynchronous data replication means possible data loss (RPO>0) in case of a complete primary datacenter loss. If you want zero data loss (RPO=0), you should also configure synchronous data replication for the SQL Server instance in the DR site, but it is highly recommended to test the performance impact of the network latency between the two remote Azure datacenters first. Also be aware that automatic failover between datacenters is not possible today with SQL Server 2014 (RTO>0).
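The replica layout described above can be written down as data and checked against the SQL Server 2014 limits just mentioned. This is only an illustrative Python sketch, not SQL Server tooling: the VM names and the `Replica` type are hypothetical, and the rule that automatic failover requires synchronous commit is added here because it is how AlwaysOn AG behaves.

```python
from dataclasses import dataclass

MAX_SYNC_REPLICAS = 3   # SQL Server 2014: 1 primary + 2 synchronous secondaries
MAX_AUTO_FAILOVER = 2   # SQL Server 2014: replicas allowed for automatic failover


@dataclass(frozen=True)
class Replica:
    name: str                 # hypothetical VM name
    site: str                 # "primary" or "secondary" datacenter
    synchronous: bool
    automatic_failover: bool


def validate(replicas):
    """Return a list of rule violations (empty when the layout is valid)."""
    problems = []
    if sum(r.synchronous for r in replicas) > MAX_SYNC_REPLICAS:
        problems.append("too many synchronous replicas")
    if sum(r.automatic_failover for r in replicas) > MAX_AUTO_FAILOVER:
        problems.append("too many automatic-failover replicas")
    for r in replicas:
        if r.automatic_failover and not r.synchronous:
            problems.append(f"{r.name}: automatic failover requires synchronous commit")
    return problems


# Layout used in this article: two synchronous replicas with automatic
# failover in the primary site, one asynchronous replica in the DR site.
layout = [
    Replica("SQL1", "primary", synchronous=True, automatic_failover=True),
    Replica("SQL2", "primary", synchronous=True, automatic_failover=True),
    Replica("SQL3", "secondary", synchronous=False, automatic_failover=False),
]

print(validate(layout) or "layout is valid")
```

Switching the DR replica to synchronous commit (for RPO=0) still passes these checks; marking it for automatic failover as well does not.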

- All the above SQL Server instances are part of the same AlwaysOn AG and Cluster. Since it is a requirement for Cluster and AlwaysOn AG, all the VMs in both datacenters are part of the same Active Directory (AD) Domain: for this reason, I installed two DCs in the primary datacenter (same Availability Set, VNET and subnet) and one DC in the secondary datacenter (separate VNET, subnet and Availability Set). Each DC is also a DNS Server and uses static IP addresses inside the VNET. The DNS zone used by Active Directory should be “Active Directory Integrated”, that is the DNS zone data will be replicated using Active Directory replication to all DCs/DNS.

- In order to avoid the “Island” problem in DNS replication and name resolution, I strongly recommend adopting the following settings for the DNS clients on all the VMs, including the DCs:

  - For all the VMs in the primary VNET:

    - Primary DNS Server = the first DC/DNS in the primary VNET;

    - Secondary DNS Server = the second DC/DNS in the primary VNET;

  - For all the VMs in the secondary VNET:

    - Primary DNS Server = one of the DC/DNS in the primary VNET;

    - Secondary DNS Server = the DC/DNS in the secondary VNET;
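The DNS client settings listed above boil down to a small lookup by VNET. The Python sketch below only restates that mapping; the IP addresses are hypothetical placeholders (the article does not give the real ones) and the function name is an assumption for illustration.

```python
# Hypothetical static IPs of the three DC/DNS servers (placeholders only).
DC1_PRIMARY = "10.1.1.4"
DC2_PRIMARY = "10.1.1.5"
DC_SECONDARY = "10.2.1.4"


def dns_client_settings(vnet: str) -> tuple:
    """Return (primary DNS server, secondary DNS server) for a VM in the given VNET."""
    if vnet == "primary":
        return (DC1_PRIMARY, DC2_PRIMARY)
    if vnet == "secondary":
        # First a DC/DNS in the primary VNET, then the local DC/DNS.
        return (DC1_PRIMARY, DC_SECONDARY)
    raise ValueError(f"unknown VNET: {vnet}")


for vnet in ("primary", "secondary"):
    print(vnet, dns_client_settings(vnet))
```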

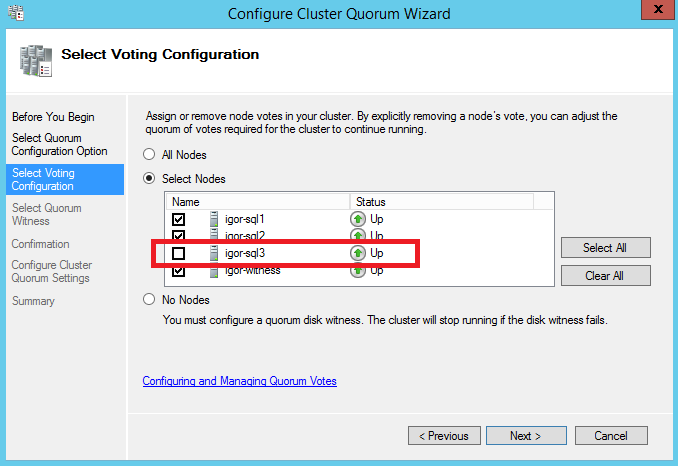

- To complete the Cluster configuration required for this scenario, I removed the quorum vote from the SQL Server VM in the secondary DR site and created a new VM in the primary datacenter (same VNET, subnet and Availability Set as the SQL Server VMs). No SQL Server is installed in this VM: its only purpose is to be the Cluster Witness (“Node Majority Only” quorum) and provide the cluster vote needed to reach quorum, keeping the Cluster in a healthy state in case of secondary datacenter loss.

Configure and Manage the Quorum in a Windows Server 2012 Failover Cluster

http://technet.microsoft.com/en-us/library/jj612870.aspx

What's New in Failover Clustering in Windows Server

https://technet.microsoft.com/en-us/library/dn265972.aspx
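The effect of this vote assignment can be checked with a small Python sketch. It is a simplified, static model of node-majority quorum that deliberately ignores the dynamic quorum features of Windows Server 2012 R2; the node names are hypothetical.

```python
# Vote assignment described above: the DR node has its vote removed.
votes = {"SQL1": 1, "SQL2": 1, "WITNESS": 1, "SQL3-DR": 0}


def has_quorum(votes, surviving):
    """Node majority: surviving votes must be more than half of all assigned votes."""
    total = sum(votes.values())
    alive = sum(v for node, v in votes.items() if node in surviving)
    return alive > total / 2


print(has_quorum(votes, {"SQL1", "SQL2", "WITNESS"}))     # secondary datacenter lost -> True
print(has_quorum(votes, {"SQL2", "WITNESS", "SQL3-DR"}))  # one primary SQL VM lost -> True
print(has_quorum(votes, {"SQL3-DR"}))                     # primary datacenter lost -> False
```

In this model, losing the secondary datacenter or a single node in the primary datacenter never costs the quorum, while a complete primary datacenter loss does, which is consistent with failover to the DR site not being automatic.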

- Finally, I used Network Security Groups (NSG) to harden the security configuration and have strict control over the possible network communications between different subnets in both VNETs:

Network Security Groups

http://azure.microsoft.com/blog/2014/11/04/network-security-groups

- Azure Storage accounts are an important part here, since all the VM OS disks and additional data disks must reside on persistent Azure Blob storage. If for some (or all) VMs you are going to use multiple disks, as is likely for SQL Server VMs, it is highly recommended not to use Azure geo-replication for the storage accounts involved, since it is not supported. Each disk is a blob, and storage replication in Azure is asynchronous and can ensure write ordering (and therefore consistency) only at the single blob level, not across multiple blobs (= disks). Please note that geo-replication (GRS) is enabled by default when creating a new Azure storage account, so be sure to select “Locally Redundant” (LRS).

Azure Storage Redundancy Options

http://msdn.microsoft.com/en-us/library/azure/dn727290.aspx

- In addition to the Azure storage account type, you also need to carefully consider how many storage accounts you need. For Azure Standard Storage, there is a limit of 20K IOPS per storage account, which means a maximum of 40 disks of 1 TB each (500 IOPS per disk). Each VM size supports up to a certain number of disks, and since you have to accommodate at least two SQL Server VMs, in addition to the Domain Controllers and the Witness VM, be sure to do your maths correctly and, if necessary, use more than one storage account in each Azure datacenter deployment. The first link below also mentions Azure Premium Storage: with this option, you can have up to 5K IOPS per single disk and up to 80K IOPS per VM (GS-series).

Azure Storage Scalability and Performance Targets

https://azure.microsoft.com/en-us/documentation/articles/storage-scalability-targets

Sizes for virtual machines

https://azure.microsoft.com/en-us/documentation/articles/virtual-machines-size-specs
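The storage account maths above can be sketched in a few lines of Python. The two limits are the Standard Storage figures quoted in this article; the disk counts per VM are hypothetical, and the calculation conservatively assumes that every disk may be driven at its full 500 IOPS at the same time.

```python
from math import ceil

ACCOUNT_IOPS_LIMIT = 20_000  # Standard Storage limit per storage account
DISK_IOPS = 500              # Standard Storage limit per disk


def max_disks_per_account() -> int:
    """Disks that fit in one storage account without exceeding its IOPS limit."""
    return ACCOUNT_IOPS_LIMIT // DISK_IOPS


def storage_accounts_needed(disk_count: int) -> int:
    """Storage accounts required to host the given number of disks."""
    return ceil(disk_count / max_disks_per_account())


# Hypothetical disk counts (OS + data disks) for one datacenter deployment.
disks = {"SQL1": 32, "SQL2": 32, "DC1": 2, "DC2": 2, "WITNESS": 1}
total = sum(disks.values())

print(max_disks_per_account())                 # 40
print(total, storage_accounts_needed(total))   # 69 2
```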

- Inside the SQL Server VMs, I used the maximum number of disks permitted by the specific VM size, then I used the Windows Server 2012 R2 (guest OS) “Storage Pool” technology to group all the physical disks together and present a single logical volume with increased IOPS. More details are available at the link below:

Best Practices & Disaster Recovery for Storage Spaces and Pools in Azure

Multiple ILBs Configuration

Even though ILB exists in both the ASM and ARM APIs, the syntax is totally different. That is why I wrote an article on how to create a *single* ILB for a SQL Server AlwaysOn AG Listener using ARM PowerShell cmdlets:

Configure an ILB listener for SQL Server AlwaysOn Availability Groups in Azure ARM

As you can see in the diagram above, you need *two* distinct ILBs, one in the primary datacenter and one in the secondary datacenter. Each ILB will use a static internal IP address (DIP) in the same subnet as the SQL Server VMs in its datacenter. These IPs will be manually inserted in the AG Listener network name cluster resource dependencies (OR relationship) to ensure a valid IP addressing scheme in both datacenters. To set up the multi-datacenter configuration with two ILBs, you need to execute the following steps, based on the article mentioned above:

1. Create the first ILB in the Primary VNET using the PowerShell script contained in the section “Create ILB with Load Balancer Rule & Health-Probe” of the article above.

2. Create the second ILB in the Secondary VNET using the PowerShell script contained in the section “Create ILB with Load Balancer Rule & Health-Probe” of the article above.

3. Prepare to execute the steps in the “Create the Availability Group Listener” section of the article above, but be careful with some important differences outlined in the next points.

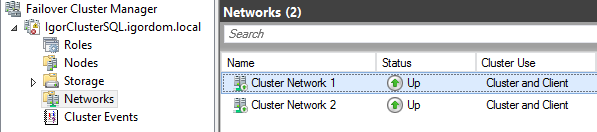

4. You will see two distinct networks, since the Cluster nodes (VMs) come from two distinct Azure VNETs. Please take note of both network names: they will be used to assign values to the “$ClusterNetworkName” parameter in the script contained in STEP[10] of the article above.

5. The CAP cluster resource will have two dependencies on two underlying IP resources, one for each Cluster Network/VNET. These dependencies will be in an OR relationship. Please take note of both resource names: they will be used to assign values to the “$IPResourceName” parameter in the script contained in STEP[10] of the article above.

6. Retrieve the IP addresses you previously used to create your ILBs in both the Primary and Secondary VNETs: they will be used to assign values to the “$ILBIP” parameter in the script contained in STEP[10] of the article above.

7. Execute the script contained in STEP[10] a first time, with the parameter values related to the Primary VNET and ILB ("Cluster Network 1", ILB IP in Primary VNET, "IP Address 10.1.2.0").

8. Execute the script contained in STEP[10] a second time, with the parameter values related to the Secondary VNET and ILB ("Cluster Network 2", ILB IP in Secondary VNET, "IP Address 10.2.2.0").

9. Execute the instructions outlined in the section “Bring the listener online” of the article above.

10. If necessary, execute the steps contained in the section “Follow-up Items” of the article above.
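Before running the STEP[10] script twice, it can help to write down the two parameter sets side by side and sanity-check them. The Python sketch below is only an illustration of steps 4 to 8: the ILB IP addresses are hypothetical placeholders, and the /24 subnets are an assumption derived from the "IP Address 10.1.2.0" and "IP Address 10.2.2.0" resource names.

```python
import ipaddress

# One parameter set per run of the STEP[10] script (one per datacenter).
# ILBIP and Subnet values are hypothetical; replace them with your own.
runs = [
    {"ClusterNetworkName": "Cluster Network 1",
     "IPResourceName": "IP Address 10.1.2.0",
     "ILBIP": "10.1.2.100", "Subnet": "10.1.2.0/24"},
    {"ClusterNetworkName": "Cluster Network 2",
     "IPResourceName": "IP Address 10.2.2.0",
     "ILBIP": "10.2.2.100", "Subnet": "10.2.2.0/24"},
]


def check_runs(runs):
    """Check that no value is reused across runs and each ILB IP is in its subnet."""
    for key in ("ClusterNetworkName", "IPResourceName", "ILBIP"):
        values = [r[key] for r in runs]
        if len(set(values)) != len(values):
            raise ValueError(f"{key} is reused across runs")
    for r in runs:
        if ipaddress.ip_address(r["ILBIP"]) not in ipaddress.ip_network(r["Subnet"]):
            raise ValueError(f"{r['ILBIP']} is outside {r['Subnet']}")
    return True


print(check_runs(runs))
```

The check catches the two mistakes that are easiest to make here: reusing the same network or IP resource name in both runs, and assigning an ILB IP that does not belong to the subnet of the SQL Server VMs in that datacenter.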

That’s all folks! I hope you enjoyed this new content series on Azure ARM and SQL Server. Let me know if you have any feedback or questions; as usual, you can follow me on Twitter (@igorpag). Regards.