Where is the table cardinality taken from when that information doesn’t match in the different statistics associated to an object?

My colleague Tony O’Grady asked last week the following question, which I think is interesting to share here.

<MESSAGE>

I have been testing working with an export of statistics when performance tuning and have come across the following scenario:

- Exported statistics (histogram) and table definition from Adventure works

- The table is the Sales.SalesOrderDetail from 2008

- Created a new database and scripted the table definition / index and statistics (histogram) (not the data)

- Disabled auto update and auto create statistics

- Added a single row to the table

I ran this query against the new database:

SELECT UnitPrice FROM Sales.SalesOrderDetail WHERE UnitPrice < $5.00

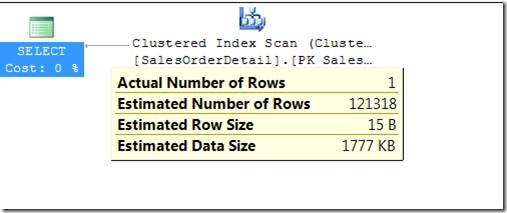

And got the results I was expecting from the query plan:

I then added a non-clustered index, which created a statistics histogram based on the one row in the table:

CREATE NONCLUSTERED INDEX [new_index1] ON [Sales].[SalesOrderDetail] ([UnitPrice] ASC) INCLUDE ( [OrderQty], [ProductID], [LineTotal]) WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

I then went ahead and ran the query again. The optimizer used the new index but still had the old values for the number of rows:

StmtText

SELECT [UnitPrice] FROM [Sales].[SalesOrderDetail] WHERE [UnitPrice]<@1

|--Index Seek(OBJECT:([AdventureWorksSample].[Sales].[SalesOrderDetail].[new_index1]), SEEK:([AdventureWorksSample].[Sales].[SalesOrderDetail].[UnitPrice] < [@1]) ORDERED FORWARD)

The conclusion is that the optimizer used the statistics from the original clustered key? Is there any way of telling which statistics are getting used and does anyone have any explanation for this behavior?

</MESSAGE>

During query compilation, one of the steps the compiler takes is loading all known metadata associated to every table referenced by the query tree whose execution plan is being compiled. Part of that metadata are the indexes attached to the object. While it loops through the list of indexes to load its metadata, it checks whether it is a heap, a clustered index or a non-clustered index whose metadata it is about to load. When, in that loop, it refers to the existing clustered index (or, in its absencem the heap), it uses the cardinality information contained in the statistics attached to that data structure to populate the cardinality vectors of the whole table.

And that’s the reason why the estimated rows in Tony’s scenario equals the number of rows persisted in the stats of the clustered index, not in the ones associated to the non-clustered he created later on.

![clip_image002[5] clip_image002[5]](https://msdntnarchive.blob.core.windows.net/media/MSDNBlogsFS/prod.evol.blogs.msdn.com/CommunityServer.Blogs.Components.WeblogFiles/00/00/00/54/98/metablogapi/2570.clip_image0025_thumb_34B6B80A.jpg)

![clip_image002[7] clip_image002[7]](https://msdntnarchive.blob.core.windows.net/media/MSDNBlogsFS/prod.evol.blogs.msdn.com/CommunityServer.Blogs.Components.WeblogFiles/00/00/00/54/98/metablogapi/7875.clip_image0027_thumb_56DE9DC3.jpg)

![clip_image002[9] clip_image002[9]](https://msdntnarchive.blob.core.windows.net/media/MSDNBlogsFS/prod.evol.blogs.msdn.com/CommunityServer.Blogs.Components.WeblogFiles/00/00/00/54/98/metablogapi/8468.clip_image0029_thumb_7C57F557.jpg)