VS2010 Beta 2 Concurrency Visualizer Parallel Performance Tool Improvements

Hi,

I'm very excited about the release of Visual Studio 2010 Beta 2, which will be available to MSDN subscribers today and to the general public on 10/21. This release includes significant improvements in many areas that I'm sure you'll love. As the Architect of the Concurrency Visualizer tool in the VS2010 profiler, I'm especially thrilled to share the huge improvements in the user interface and usability of our tool. Our team has done an outstanding job of listening to feedback and making innovative enhancements that I'm sure will please you, our customers. Here is a brief overview of some of the improvements that we've made:

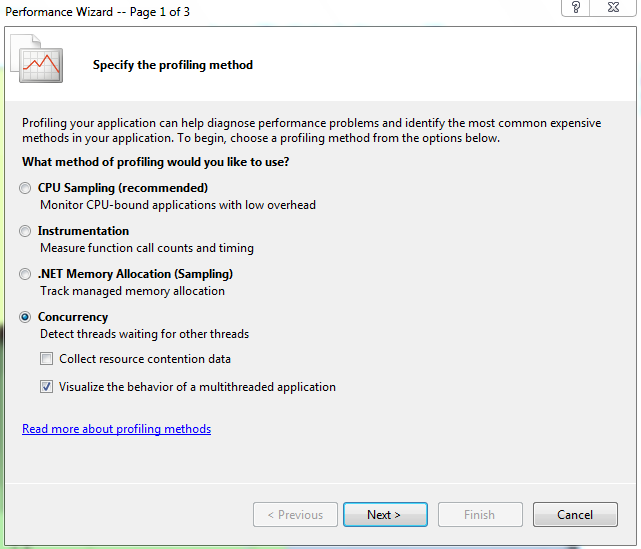

Before we start, I'll remind you again that the tool that I'm describing here is the "visualize the behavior of a multithreaded application" option under the concurrency option in the Performance Wizard accessible through the Analyze Menu. I've described how the tool can be run in a previous post. Here's a screenshot of the performance wizard with the proper selection to use our tool:

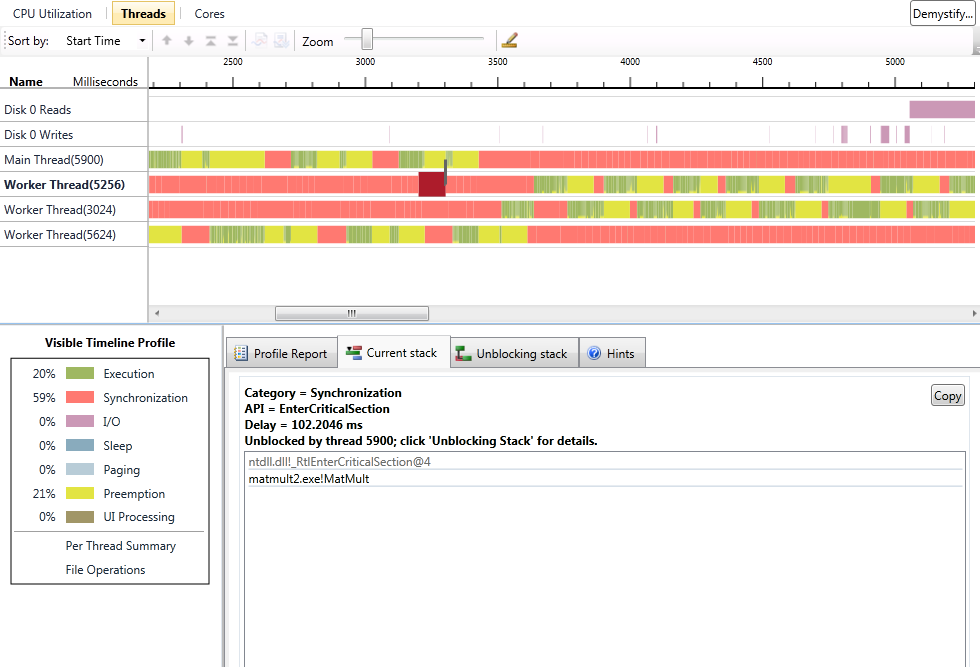

Ok, now let's start going over the changes. First, we've slightly changed the names of the views for our tool. We now have "CPU Utilization", "Threads", and "Cores" views. These views can be accessed either through the profiler toolbar's Current View pull-down menu, through bitmap buttons at the top of the summary page, or through links in our views as you'll see in the top left of the next screenshot.

You'll notice that the user interface has gone through some refinement since Beta 1 (see my earlier posts for a comparison). Let's go over the features quickly:

1. We've added an active legend in the lower left. The active legend has multiple features. First, for every thread state category, you can click on the legend entry to get a callstack-based report in the Profile Report tab summarizing where blocking events occurred in your application. For the execution category, you get a sample profile that tells you what work your application performed. As usual, all of the reports are filtered by the time range that you're viewing and the threads that are enabled in the view. You can change this by zooming in or out and by disabling threads in the view to focus your attention on certain areas. The legend also provides a summary of where time was spent, shown as percentages next to the categories.

2. When you select a segment in a thread's timeline, the "Current Stack" tab shows where the thread's execution stopped (for blocking categories) or, for green execution segments, the nearest execution sample callstack within +/- 1ms of where you clicked.

3. When you select a blocking category, we also try to draw a link (dark line shown in the screenshot) to the thread that resulted in unblocking your thread whenever we are able to make that determination. In addition, the Unblocking Stack tab shows you what the unblocking thread was doing by displaying its callstack when it unblocked your thread. This is a great mechanism to understand thread-to-thread dependencies.

4. We've also improved the File Operations summary report, which is accessible from the active legend, by listing file operations performed by the System process. Some of those accesses are actually triggered on behalf of your application, so we list them but clearly mark them as System accesses. Keep in mind that others may not be related to your application at all.

5. The Per Thread Summary report is the same bar graph breakdown of where each thread's time was spent that used to show up by default in Beta 1, but it is now accessed from the active legend. This report helps you understand improvements/regressions from one run to another and focuses your attention on the threads and types of delay that are most important in your run. This is valuable for filtering threads/time and prioritizing your tuning effort.

6. The profile reports now have two additional features. By default, we now filter out the callstacks that contribute < 2% of blocking time (or samples for execution reports) to minimize noise. You can change the noise reduction percentage yourself. We also allow you to remove stack frames that are outside your application from the profile reports. This can be valuable in certain cases, but it is left off by default because blocking events usually do not occur in your code, so filtering that stuff out may not help you figure out what's going on.

7. We added significant help content to the tool. You'll notice the new Hints tab, which includes instructions about features of the view as well as links to two important help items. One is a link to our Demystify feature, which is a graphical way to get contextual help. This is also accessible through the button on the top right hand side of the view. Unfortunately, the link isn't working in Beta 2, but we are working on hosting an equivalent web-based feature to assist you and get feedback before the release is finalized. I'll communicate this information in a subsequent post. The other link is to a repository of graphical signatures for common performance problems. This can be an awesome way of building a community of users and leveraging the experiences of other users and our team to help you identify potential problems.

8. The UI has been improved to preserve details when you zoom out by allowing multiple colors to reside within a thread execution region when the same pixel in the view corresponds to multiple thread states. This was the mechanism that we chose to always report the truth and give the users a hint that they need to zoom in to get more accurate information.
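To make the noise reduction described in item 6 concrete, here is a minimal Python sketch of what filtering out callstacks below a percentage threshold could look like. It is only an illustration and not the profiler's actual implementation; the function name `filter_noise`, the dictionary layout, and the sample stacks are all made up for this example.

```python
def filter_noise(blocking_ms_by_stack, threshold_pct=2.0):
    """Keep only callstacks whose share of the total time is >= threshold_pct.

    blocking_ms_by_stack is a hypothetical mapping of callstack label to
    blocking time in milliseconds (or sample counts for execution reports).
    """
    total = sum(blocking_ms_by_stack.values())
    if total == 0:
        return {}
    return {
        stack: ms
        for stack, ms in blocking_ms_by_stack.items()
        if 100.0 * ms / total >= threshold_pct
    }


# Made-up data: the last stack contributes 1% of the total blocking time.
sample = {
    "main > WaitForSingleObject": 700.0,
    "worker > EnterCriticalSection": 290.0,
    "logger > Sleep": 10.0,
}
filtered = filter_noise(sample)
```

With the default 2% threshold, the `logger > Sleep` entry is dropped and the other two remain. Raising or lowering `threshold_pct` mirrors changing the noise reduction percentage in the report.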

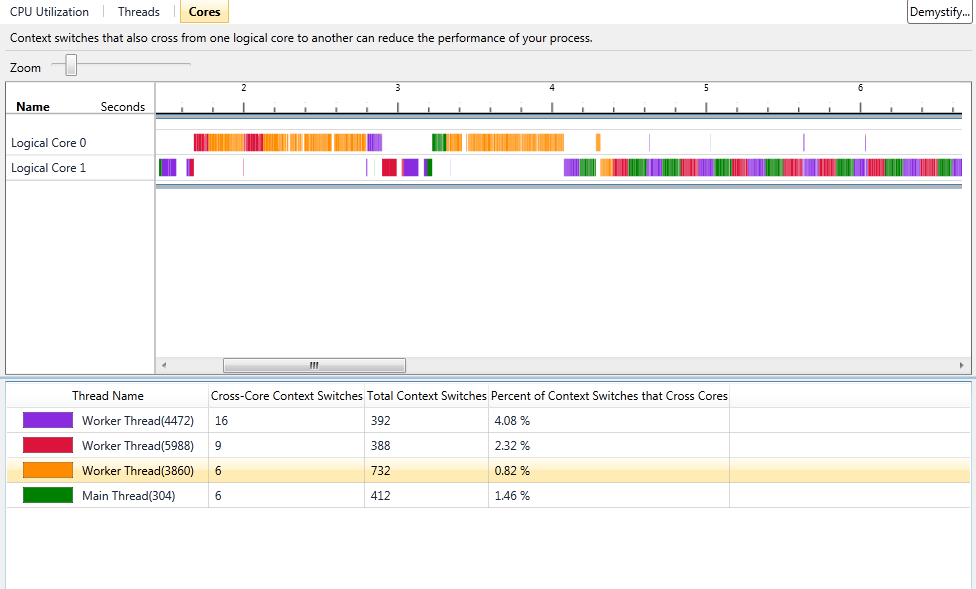
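The zoomed-out rendering described in item 8 can be thought of as bucketing thread state segments into pixel columns and remembering every state that lands in each column. The Python sketch below shows that idea under my own assumptions: segments are `(start_ms, end_ms, state)` tuples, and the state names are just labels. It is not how the tool is actually implemented.

```python
from collections import defaultdict


def states_per_pixel(segments, view_start_ms, view_end_ms, width_px):
    """Return {pixel_column: {state: ms}} for one thread's timeline.

    A column that maps to more than one state is a hint that the user
    should zoom in to see accurate detail.
    """
    ms_per_px = (view_end_ms - view_start_ms) / width_px
    pixels = defaultdict(lambda: defaultdict(float))
    for start, end, state in segments:
        # Clip the segment to the visible time range.
        start = max(start, view_start_ms)
        end = min(end, view_end_ms)
        if start >= end:
            continue
        first = int((start - view_start_ms) / ms_per_px)
        last = min(int((end - view_start_ms) / ms_per_px), width_px - 1)
        for px in range(first, last + 1):
            px_start = view_start_ms + px * ms_per_px
            px_end = px_start + ms_per_px
            overlap = min(end, px_end) - max(start, px_start)
            if overlap > 0:
                pixels[px][state] += overlap
    return {px: dict(states) for px, states in pixels.items()}


# Made-up timeline: 10 ms of activity squeezed into 2 pixel columns.
timeline = [(0, 3, "execution"), (3, 5, "blocking"), (5, 10, "execution")]
columns = states_per_pixel(timeline, 0, 10, 2)
```

Here the first column covers both an execution and a blocking segment, so it would be drawn with two colors, while the second column is pure execution.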

The next screenshot shows you a significantly overhauled "Cores" view:

The Cores view serves the same purpose as before: showing how your application threads were scheduled on the logical cores in your system. The view leverages a new compression scheme to avoid loss of data when the view is zoomed out. It has a legend that was missing in Beta 1. It also has clearer statistics for each thread: the total number of context switches, the number of context switches resulting in core migration, and the percentage of total context switches resulting in migration. This can be very valuable when tuning to reduce context switches or cache/NUMA memory latency effects. In addition, the visualization can easily illustrate thread serialization on cores that may result from inappropriate use of thread affinity.
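As a rough illustration of the per-thread statistics mentioned above, the following Python sketch computes total context switches, cross-core migrations, and the migration percentage from a time-ordered list of scheduling events. The event format `(thread_id, core_id)` and the rule that every switch-in counts as one context switch are assumptions made for this example, not a description of the tool's internals.

```python
def migration_stats(schedule_events):
    """Compute per-thread context switch and core migration counts.

    schedule_events is a hypothetical time-ordered iterable of
    (thread_id, core_id) pairs, one for each time a thread is switched in.
    """
    stats = {}
    last_core = {}
    for thread_id, core_id in schedule_events:
        entry = stats.setdefault(thread_id, {"switches": 0, "migrations": 0})
        entry["switches"] += 1
        # A migration is a switch-in on a different core than the last one.
        if thread_id in last_core and last_core[thread_id] != core_id:
            entry["migrations"] += 1
        last_core[thread_id] = core_id
    for entry in stats.values():
        entry["migration_pct"] = 100.0 * entry["migrations"] / entry["switches"]
    return stats


# Made-up trace: thread 1 bounces between cores 0 and 1, thread 2 stays put.
events = [(1, 0), (2, 1), (1, 0), (1, 1), (2, 1), (1, 0)]
result = migration_stats(events)
```

In this trace, thread 1 is switched in four times and changes cores twice, so half of its context switches involve a migration, while thread 2 never migrates. A high migration percentage is the kind of signal that may point to cache or NUMA latency effects.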

This is just a short list of the improvements that we've made. I will be returning soon with another post about new Beta 2 features, so please visit again and don't be shy to give me your feedback and ask any questions that you may have.

Cheers!

-Hazim