Building a simple executive toy using Kinect for Windows v2 and Unity3D

Abstract

This article looks at how to use the new Kinect for Windows v2 device to create a simple executive toy using Unity and the Kinect Unity plugin. The aim is to demonstrate how easy it is to use Kinect in your Unity projects and how to build something simple quickly. It is a step-by-step guide to creating the Kinect Wall app, with an explanation of how it works.

Keywords: Unity3D, Kinect, Shaders, Cg, Geometry Shaders, Vertex Shaders, Fragment Shaders, Textures

Introduction

Research Method

I am taking a tutorial approach for this article, showing step by step how to build the application. The application source code will be available on CodePlex, free to use in any way you like.

Requirements

- Unity3D Pro – unfortunately, because we are accessing unsafe code, we need Pro

- Kinect SDK

- Kinect Unity Plugin

Tutorial

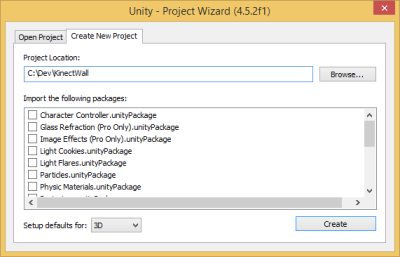

The first step is to start Unity and create a new Project. Give it a name and click create.

The next step is to do some minor admin in my project. The main thing I do here is create the following folders so I can manage my project a little neater:

- Materials

- Scripts

- Shaders

- Scenes

- Textures

Now we begin to set up the items we need to make this all work. We will need the following:

- Empty Material

- Scene – Main one with our camera, lights, etc

- Textures – These are textures we are going to use for our bricks

- Shader – This does all the depth and body index processing as well as all the geometry generation

- Main Script – This spins up the Kinect device and passes information through to the Shader for processing.

We now need to add the Kinect for Windows v2 assets by going to the Assets –> Import Package –> Custom Package option and selecting the Kinect v2 Unity package. This imports all the required assets into the project so we can access the sensor. I also need to enable unsafe code. I do this by adding a file called smcs.rsp to my Assets folder. It is a plain text file containing the single line -unsafe (with an ordinary hyphen).

Next we create the MainScript file, which retrieves all the information from the sensor and passes it to the shader. Inside MainScript there are a number of default methods: Start, which is used for initialisation, and Update, which is called as part of the update loop. Inside the Start function we initialise the sensor and the storage structures for the streams of information. First I create some private fields to store the sensor state:

    private KinectSensor sensor;
    private DepthFrameReader depthreader;
    private BodyIndexFrameReader bodyreader;
    private byte[] depthdata;
    private byte[] bodydata;

The KinectSensor object gives us access to the various data streams from the device. Because we want the outline of the player and the depth information, we need access to the DepthFrame and BodyIndex streams.

    depthreader = sensor.DepthFrameSource.OpenReader();
    bodyreader = sensor.BodyIndexFrameSource.OpenReader();
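Before the readers can deliver frames, the depthdata and bodydata arrays need to be large enough to hold one frame each. The following is a minimal sketch of that arithmetic, assuming the Kinect v2 frame size of 512 x 424 pixels (the same numbers the shader later hard-codes), 16-bit depth pixels, and 8-bit body index pixels. The KinectBufferSizes class is a hypothetical helper and is not part of the Kinect SDK or the project source.

```csharp
// Hypothetical helper for illustration; not part of the Kinect SDK.
public static class KinectBufferSizes
{
    // Assumed Kinect v2 depth / body index frame dimensions.
    public const int FrameWidth = 512;
    public const int FrameHeight = 424;

    // Assumed pixel sizes: depth is a 16-bit value, body index is one byte.
    public const int DepthBytesPerPixel = 2;
    public const int BodyIndexBytesPerPixel = 1;

    // Number of bytes needed to hold one full depth frame.
    public static int DepthBufferLength()
    {
        return FrameWidth * FrameHeight * DepthBytesPerPixel;
    }

    // Number of bytes needed to hold one full body index frame.
    public static int BodyIndexBufferLength()
    {
        return FrameWidth * FrameHeight * BodyIndexBytesPerPixel;
    }
}
```

With these helpers the arrays could be allocated as `depthdata = new byte[KinectBufferSizes.DepthBufferLength()];` and `bodydata = new byte[KinectBufferSizes.BodyIndexBufferLength()];`. In a real project it is probably safer to read the dimensions from the frame source's FrameDescription rather than hard-coding them.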

We get the information from the reader in the update loop in the following manner:

    var depthframe = depthreader.AcquireLatestFrame();

    if (depthframe != null)
    {
        // The fixed statement requires an unsafe context, hence the -unsafe flag.
        fixed (byte* pData = depthdata)
        {
            depthframe.CopyFrameDataToIntPtr(new System.IntPtr(pData), (uint)depthdata.Length);
        }
        // Release the frame promptly so the sensor can deliver the next one.
        depthframe.Dispose();
        depthframe = null;

        DepthTexture.LoadRawTextureData(depthdata);
        DepthTexture.Apply();
    }

This reads the information into a byte array that I can then send to the shader, either through a Texture or via a ComputeBuffer. To kick-start the shader I need to set all the parameters and then tell it to start drawing, using the following:
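To make the layout of that byte array concrete, here is a small sketch that reads the depth of a single pixel back out of it on the CPU. It assumes each depth pixel occupies two consecutive bytes in little-endian order and holds a distance in millimetres. DepthDecoding and DepthAt are hypothetical names used only for this illustration.

```csharp
// Hypothetical helper for illustration; not part of the project source.
public static class DepthDecoding
{
    // Returns the 16-bit depth value (assumed to be millimetres) for pixel (x, y).
    // Assumes two bytes per pixel, stored low byte first, rows packed without padding.
    public static ushort DepthAt(byte[] depthData, int frameWidth, int x, int y)
    {
        int byteIndex = (y * frameWidth + x) * 2;
        int low = depthData[byteIndex];
        int high = depthData[byteIndex + 1];
        return (ushort)(low | (high << 8));
    }
}
```

For example, the bytes 0xE8, 0x03 decode to 1000, so a pixel holding them would be one metre from the sensor under the assumptions above.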

    ShaderMaterial.SetTexture("_MainTex", MainTexture);
    ShaderMaterial.SetTexture("_DepthTex", DepthTexture);
    // Property names must match the ones declared in the shader's Properties block.
    ShaderMaterial.SetFloat("_DepthImageWidth", DepthWidth);
    ShaderMaterial.SetFloat("_DepthImageHeight", DepthHeight);
    ShaderMaterial.SetBuffer("_BodyIndexBuffer", bodyIndexBuffer);
    ShaderMaterial.SetPass(0);

    // Draw one point per wall tile; the geometry shader expands each point into a cube.
    Graphics.DrawProcedural(MeshTopology.Points, WallWidth * WallHeight, 1);
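The draw call submits one point per wall tile, and the shader recovers the tile's position from the primitive ID alone. The sketch below mirrors that bookkeeping on the CPU. It assumes a wall of 100 x 50 tiles (the values hard-coded in the shader) and 36 vertices per tile. The DepthPixelIndex method is a simplified nearest-pixel mapping chosen for illustration and is not the exact indexing the shader uses. WallGrid is a hypothetical helper class.

```csharp
// Hypothetical helper for illustration; not part of the project source.
public static class WallGrid
{
    public const int WallWidth = 100;      // assumed tiles per row
    public const int WallHeight = 50;      // assumed tiles per column
    public const int VerticesPerTile = 36; // 6 faces x 2 triangles x 3 vertices

    // Number of points passed to the draw call.
    public static int PointCount()
    {
        return WallWidth * WallHeight;
    }

    // Upper bound on vertices emitted by the geometry shader per frame.
    public static int TotalVertices()
    {
        return PointCount() * VerticesPerTile;
    }

    // Converts a primitive ID into a tile column and row.
    public static void TileFromPrimitiveId(int primId, out int column, out int row)
    {
        column = primId % WallWidth;
        row = primId / WallWidth;
    }

    // Simplified mapping from a tile to the index of a pixel in a frame
    // of frameWidth x frameHeight pixels (an assumption, not the shader's math).
    public static int DepthPixelIndex(int column, int row, int frameWidth, int frameHeight)
    {
        int px = column * frameWidth / WallWidth;
        int py = row * frameHeight / WallHeight;
        return py * frameWidth + px;
    }
}
```

Under these assumptions the wall consists of 5,000 points that expand to at most 180,000 vertices, which gives a feel for why the expansion is done on the GPU.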

We now have everything we need in the MainScript. To see the complete source code, including both methods of getting data to a shader and the retrieval of body index information, have a look at the CodePlex source, where the complete project is available.

Remember to save your scene into the Scenes folder. I then add some items to my scene. The first is a few lights to give the wall a clean look. The next is an empty game object (GameObject –> Create Empty, or Ctrl-Shift-N), which I name mainScriptObject. I now add my main script to the Scripts folder, select mainScriptObject, and drag the script onto it.

What I need to do now is create a shader and a material that uses it. I create the shader first and then the material, and then drag the material onto the shader material property of mainScriptObject. Now we start looking at the shader. The shader handles all the tile generation for my scene, and I use a geometry shader to perform all the object handling. The reason is mainly performance: processing the textures outside the shader would take too long.
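For each triangle of a tile, the geometry shader derives a face normal from a cross product and scales the colour by a simple diffuse term. The following CPU-side sketch shows the same calculation in plain C#. Vec3 and FlatShading are hypothetical types written for this illustration, and the sketch ignores the matrix transforms the shader applies.

```csharp
using System;

// Minimal 3D vector for illustration; Unity's own Vector3 would normally be used.
public struct Vec3
{
    public readonly float X, Y, Z;

    public Vec3(float x, float y, float z) { X = x; Y = y; Z = z; }

    public static Vec3 operator -(Vec3 a, Vec3 b)
    {
        return new Vec3(a.X - b.X, a.Y - b.Y, a.Z - b.Z);
    }

    public static float Dot(Vec3 a, Vec3 b)
    {
        return a.X * b.X + a.Y * b.Y + a.Z * b.Z;
    }

    public static Vec3 Cross(Vec3 a, Vec3 b)
    {
        return new Vec3(a.Y * b.Z - a.Z * b.Y,
                        a.Z * b.X - a.X * b.Z,
                        a.X * b.Y - a.Y * b.X);
    }

    // Returns a unit-length copy, or the zero vector for degenerate input.
    public Vec3 Normalized()
    {
        float length = (float)Math.Sqrt(Dot(this, this));
        if (length == 0f) return new Vec3(0f, 0f, 0f);
        return new Vec3(X / length, Y / length, Z / length);
    }
}

public static class FlatShading
{
    // Face normal of the triangle (p0, p1, p2) from the cross product of two edges.
    public static Vec3 FaceNormal(Vec3 p0, Vec3 p1, Vec3 p2)
    {
        return Vec3.Cross(p1 - p0, p2 - p0).Normalized();
    }

    // Diffuse intensity in [0, 1]: zero when the face points away from the light.
    public static float Lambert(Vec3 normal, Vec3 lightDirection)
    {
        return Math.Max(0f, Vec3.Dot(normal, lightDirection.Normalized()));
    }
}
```

Applied to a triangle on the top face of a unit cube, FaceNormal points straight up, so a light shining from above gives full intensity and a light from below gives none. A triangle with a repeated vertex has no area, so its normal comes out as the zero vector and it receives no light.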

The shader itself is below, and I have commented as much of the code as possible to make it easy to understand. Currently the depth from the depth buffer isn't functioning as I had hoped, and I will post an update as soon as I have tweaked it.

    Shader "Custom/WallShader" {
        Properties {
            _MainTex ("Main Brick Texture", 2D) = "white" {}
            _DepthTex ("Kinect Depth Image", 2D) = "white" {}
            _DepthImageWidth ("Depth Image Width", Float) = 100
            _DepthImageHeight ("Depth Image Height", Float) = 50
        }
        SubShader {
            Tags { "LightMode" = "ForwardBase" }
            Pass
            {
                Cull Off
                LOD 200

                CGPROGRAM
                #pragma vertex VS_Main
                #pragma geometry GS_Main
                #pragma fragment FS_Main
                #include "UnityCG.cginc"

                uniform fixed4 _LightColor0;
                // Vertices per tile: 6 faces x 2 triangles x 3 vertices
                #define TAM 36

                float MinDepthMM = 500.0;
                float MaxDepthMM = 8000.0;

                float _DepthImageWidth;
                float _DepthImageHeight;

                float4 _MainTex_ST;
                sampler2D _MainTex;

                StructuredBuffer<float> _BodyIndexBuffer;
                StructuredBuffer<float> _DepthBuffer;

                // Structs for passing information from stage to stage
                struct EMPTY_INPUT
                {
                };

                struct POSCOLOR_INPUT
                {
                    float4 pos : POSITION;
                    float4 color : COLOR;
                    float2 uv_MainTex : TEXCOORD0;
                    float3 normal : NORMAL;
                };

                // Depth helper function
                float DepthFromPacked4444(float4 packedDepth)
                {
                    // convert from [0,1] to [0,15]
                    packedDepth *= 15.01f;

                    // truncate to an int
                    int4 rounded = (int4)packedDepth;

                    return rounded.w * 4096 + rounded.x * 256 + rounded.y * 16 + rounded.z;
                }

                // The vertex stage has no input; all geometry is generated in GS_Main
                EMPTY_INPUT VS_Main()
                {
                    return (EMPTY_INPUT)0;
                }

                [maxvertexcount(TAM)]
                void GS_Main(point EMPTY_INPUT p[1], uint primID : SV_PrimitiveID, inout TriangleStream<POSCOLOR_INPUT> triStream)
                {
                    // Tile column and row on a wall that is 100 tiles wide
                    float4 currentCoordinates = float4(primID % 100, (primID / 100), 0, 1.0);

                    float4 offset = float4(0,0,0,0);
                    float4 scale = float4(1,1,1,0);
                    float4 curcolor = float4(1,1,1,1);

                    float depth = 0;

                    //TODO - Figure out best way to handle this // I suspect a compute shader would work
                    float4 textureCoordinates = float4(currentCoordinates.x/100 * 512/4, currentCoordinates.y/50 * 424, 0, 0);

                    int index = (int)(textureCoordinates.x) + ((int)textureCoordinates.y * 512/4);
                    int depthindex = (int)(textureCoordinates.x) + ((int)textureCoordinates.y * 512);
                    float player = _BodyIndexBuffer[index];
                    uint depthinfo = (uint)_DepthBuffer[depthindex] >> 3;

                    // Push the tile out only where a player is present
                    if (player > 0 && player < 1)
                    {
                        depth = -3;
                        depth -= depthinfo * 3;
                    }

                    float f = 1.0;

                    // Construct a cube: two triangles per face
                    const float4 vc[TAM] = {
                        float4( -f,  f,  f, 1.0f), float4(  f,  f,  f, 1.0f), float4(  f,  f, -f, 1.0f), //Top
                        float4(  f,  f, -f, 1.0f), float4( -f,  f, -f, 1.0f), float4( -f,  f,  f, 1.0f), //Top

                        float4(  f,  f, -f, 1.0f), float4(  f,  f,  f, 1.0f), float4(  f, -f,  f, 1.0f), //Right
                        float4(  f, -f,  f, 1.0f), float4(  f, -f, -f, 1.0f), float4(  f,  f, -f, 1.0f), //Right

                        float4( -f,  f, -f, 1.0f), float4(  f,  f, -f, 1.0f), float4(  f, -f, -f, 1.0f), //Front
                        float4(  f, -f, -f, 1.0f), float4( -f, -f, -f, 1.0f), float4( -f,  f, -f, 1.0f), //Front

                        float4( -f, -f, -f, 1.0f), float4(  f, -f, -f, 1.0f), float4(  f, -f,  f, 1.0f), //Bottom
                        float4(  f, -f,  f, 1.0f), float4( -f, -f,  f, 1.0f), float4( -f, -f, -f, 1.0f), //Bottom

                        float4( -f,  f,  f, 1.0f), float4( -f,  f, -f, 1.0f), float4( -f, -f, -f, 1.0f), //Left
                        float4( -f, -f, -f, 1.0f), float4( -f, -f,  f, 1.0f), float4( -f,  f,  f, 1.0f), //Left

                        // First back triangle corrected: the original listing repeated a vertex,
                        // which produced a degenerate triangle and left half of the face missing
                        float4( -f,  f,  f, 1.0f), float4(  f,  f,  f, 1.0f), float4(  f, -f,  f, 1.0f), //Back
                        float4(  f, -f,  f, 1.0f), float4( -f, -f,  f, 1.0f), float4( -f,  f,  f, 1.0f)  //Back
                    };

                    // Texture coordinates, one pair of triangles per face
                    const float2 UV1[TAM] = {
                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f ),

                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f ),

                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f ),

                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f ),

                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f ),

                        float2( 0.0f, 1.0f ), float2( 1.0f, 1.0f ), float2( 1.0f, 0.0f ),
                        float2( 1.0f, 0.0f ), float2( 0.0f, 0.0f ), float2( 0.0f, 1.0f )
                    };

                    // Order in which the vertices are submitted, three per triangle
                    const int TRI_STRIP[TAM] = {
                         0,  1,  2,   3,  4,  5,
                         6,  7,  8,   9, 10, 11,
                        12, 13, 14,  15, 16, 17,
                        18, 19, 20,  21, 22, 23,
                        24, 25, 26,  27, 28, 29,
                        30, 31, 32,  33, 34, 35
                    };

                    POSCOLOR_INPUT v[TAM];
                    int i = 0;

                    currentCoordinates.z = depth;

                    float3 lightdirection = normalize(_WorldSpaceLightPos0.xyz);
                    float3 lightcolor = _LightColor0.rgb;

                    // Assign new vertex positions
                    for (i = 0; i < TAM; i++) {
                        float4 npos = float4(currentCoordinates.x, 50 - currentCoordinates.y, depth, 1.0) * scale + offset;
                        v[i].pos = npos + vc[i];
                        v[i].color = curcolor;
                        v[i].uv_MainTex = TRANSFORM_TEX(UV1[i], _MainTex);
                        v[i].normal = float3(0,0,0);
                        v[i].pos = mul(UNITY_MATRIX_MVP, v[i].pos);
                    }

                    // Build the cube tile by submitting one triangle at a time
                    for (i = 0; i < TAM/3; i++)
                    {
                        // Calculate the normal of the triangle
                        float3 U = (v[TRI_STRIP[i*3+1]].pos - v[TRI_STRIP[i*3+0]].pos).xyz;
                        float3 V = (v[TRI_STRIP[i*3+2]].pos - v[TRI_STRIP[i*3+0]].pos).xyz;
                        float3 normal = normalize(cross(U, V));
                        float3 normaldirection = normalize(mul(float4(normal, 1.0), _World2Object).xyz);
                        float4 ncolor = float4(_LightColor0.xyz * v[TRI_STRIP[i*3+0]].color.rgb * max(0.0, dot(normaldirection, lightdirection)), 1.0);

                        // Set the normal and the lit color on all three vertices
                        v[TRI_STRIP[i*3+0]].normal = normal;
                        v[TRI_STRIP[i*3+0]].color = ncolor;
                        v[TRI_STRIP[i*3+1]].normal = normal;
                        v[TRI_STRIP[i*3+1]].color = ncolor;
                        v[TRI_STRIP[i*3+2]].normal = normal;
                        v[TRI_STRIP[i*3+2]].color = ncolor;

                        triStream.Append(v[TRI_STRIP[i*3+0]]);
                        triStream.Append(v[TRI_STRIP[i*3+1]]);
                        triStream.Append(v[TRI_STRIP[i*3+2]]);

                        triStream.RestartStrip();
                    }
                }

                // Fragment shader - with lighting
                float4 FS_Main(POSCOLOR_INPUT input) : COLOR
                {
                    half4 texcol = tex2D(_MainTex, input.uv_MainTex);

                    return texcol * input.color;
                }

                ENDCG
            }
        }
        FallBack "Diffuse"
    }
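The DepthFromPacked4444 helper in the shader rebuilds a 16-bit depth value from four 4-bit colour channels. The sketch below shows the matching pack and unpack steps in C#, assuming the channel order the helper reads (w holds the highest four bits, then x, y, z) and channels normalised to the range 0 to 1. Packed4444 is a hypothetical class for illustration, and how the project actually fills the depth texture may differ.

```csharp
// Hypothetical helper for illustration; not part of the project source.
public static class Packed4444
{
    // Splits a 16-bit depth into four 4-bit channels, each normalised to [0, 1].
    // The returned array is ordered { x, y, z, w } to match a shader float4.
    public static float[] Pack(ushort depth)
    {
        int w = (depth >> 12) & 0xF; // highest four bits
        int x = (depth >> 8) & 0xF;
        int y = (depth >> 4) & 0xF;
        int z = depth & 0xF;         // lowest four bits
        return new float[] { x / 15f, y / 15f, z / 15f, w / 15f };
    }

    // Mirrors the shader helper: scale each channel to [0, 15], truncate, recombine.
    public static int Unpack(float[] channels)
    {
        // 15.01 rather than 15 guards against values landing just below an integer.
        int x = (int)(channels[0] * 15.01f);
        int y = (int)(channels[1] * 15.01f);
        int z = (int)(channels[2] * 15.01f);
        int w = (int)(channels[3] * 15.01f);
        return w * 4096 + x * 256 + y * 16 + z;
    }
}
```

Packing a depth and unpacking it again should return the original value, which is a quick way to check that the channel order on the C# side matches what the shader expects.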

With that all done, you can now run your scene with the Kinect v2 connected and running, and you should see the wall pushing out when you walk in front of it.

Video Link - https://www.youtube.com/watch?v=cFsPluBZn1s

Conclusion

Using Kinect v2 with Unity3D is extremely easy, and the APIs are pretty much identical to the .NET API. Probably the biggest lesson is the benefit of using shaders to handle the large volume of information in the various image streams you receive from the device.

Edit: I have uploaded the zip file of the complete project to CodePlex.

Source Code

https://sharpflipwall.codeplex.com

References

https://wiki.unity3d.com/index.php/Getting_Started_with_Shaders

https://www.bricksntiles.com/textures/

https://answers.unity3d.com/questions/744622/

https://johannes.gotlen.se/blog/2013/02/realtime-marching-cubes/