Phone Call Transcription and Insights with Video Indexer and Dynamics 365 (Part 1)

In many contact centers, the telephone continues to be one of the primary channels of engagement for customers. Frequently, the only insights we have into the nature of a completed call come from the typed notes or other data manually entered by the contact center agent.

By leveraging Microsoft’s Cognitive Services, we can greatly enhance the insights we derive from phone call recordings in an automated way. Microsoft’s investments in machine learning and artificial intelligence allow us to automatically extract metadata from audio and video files, including conversation keywords, a sentiment timeline, a call transcript, and more.

This post will be the first in a series in which we explore how we can leverage the new Video Indexer service (currently in preview), along with the robust workflow capabilities of Dynamics 365 for Customer Engagement, to automate the indexing of phone call recordings, augment our Phone Call records with metadata about the contents of the call, and provide users with an interactive experience within Dynamics to gain more insights into the conversation.

This first post will focus on creating Custom Workflow Activities that interact with the Video Indexer API to upload and index call recordings, then using those activities within a Workflow process to automatically update our Phone Call record with insightful metadata.

In this post, we will build on the sample code available in these two resources:

- Create a custom workflow activity in the Dynamics 365 documentation, and the associated code samples in the D365 SDK

- The Video Indexer How-to Guide for Uploading and Indexing Videos in the Cognitive Services documentation

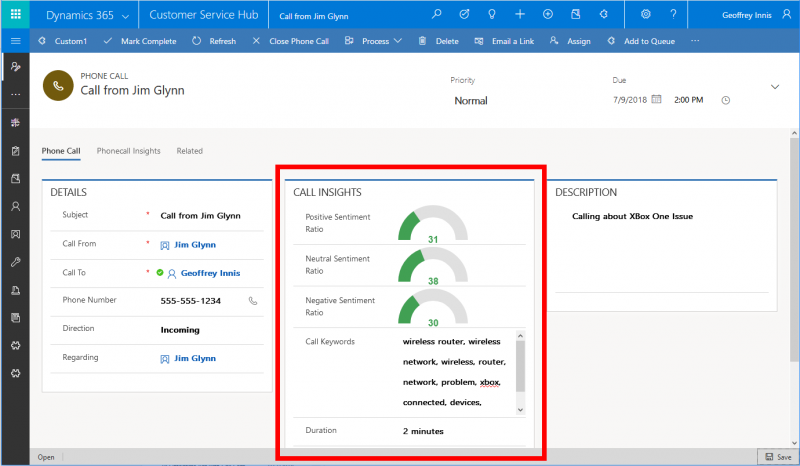

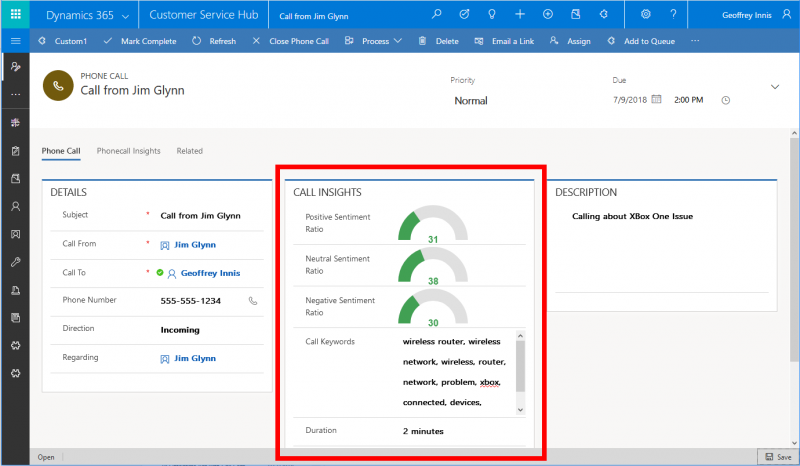

By the end of our first post, we will be able to view some derived insight metadata about our recorded phone call in Dynamics:

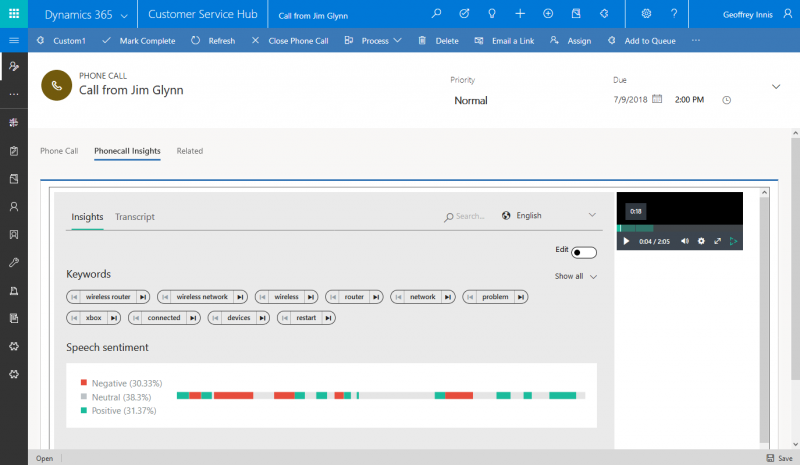

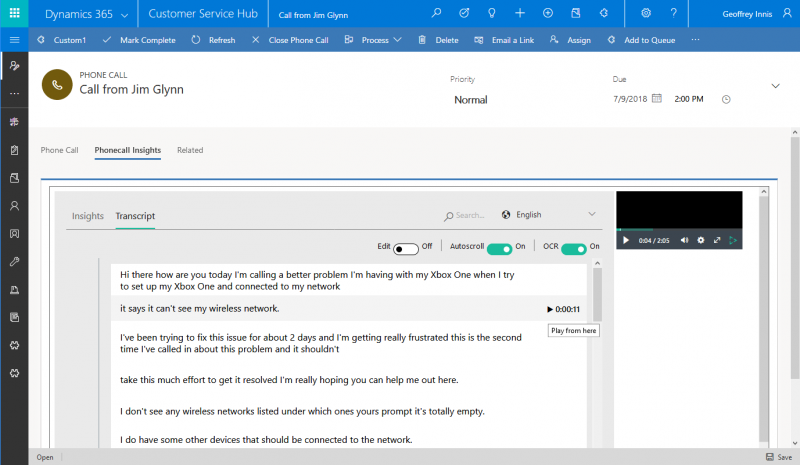

And by the end of our series, we will also be able to leverage a rich Insights and Transcript viewer, with the ability to view our full transcript, visualize our sentiment timeline, and search the call, with an integrated media player allowing us to jump to and listen to key moments in the call:

Insights Widget, including interactivity with Audio Widget

Transcript Widget, including interactivity with Audio Widget

Notes:

- In this post, we will assume that we have access to a recording of the telephone call, and that the Phone Call record in Dynamics 365 will be updated with a URL where the audio recording can be retrieved. Many telephony providers offer call recording capabilities, and this data can typically be captured through basic CTI integration.

- The Video Indexer service brings together a number of cognitive capabilities into a readily-consumable service, minimizing development. We will use the Video Indexer service in this post, but for those seeking greater control over the underlying cognitive capabilities being leveraged, Azure Media Services can enable developers to achieve similar functionality.

Prerequisites

The prerequisites for building and deploying our custom workflow activity include:

- An instance of Dynamics 365 for Customer Service (Online)

  - You can request a trial of Dynamics 365 for Customer Service here

- Visual Studio 2015 or higher, with Windows Workflow Foundation installed

- Microsoft .NET Framework 4.5.2

- The Dynamics 365 Developer Tools

- A subscription for the Cognitive Services Video Indexer API

  - As of the time of writing, you can obtain a free subscription to this preview service API following the instructions found here.

  - Follow the instructions in the above link to obtain your Account ID, Primary Key and Location parameter (‘trial’ for trial accounts, but may differ if you connect your account to Azure)

Adding Custom Fields to Phone Call Entity

Before we create our workflow to obtain insights about phone call recordings, we will add some custom fields to the Phone Call entity. Many of these will be updated with insights from the Video Indexer service. These fields include:

- Call Recording URL (Single Line of Text): to store the URL where our call recording is stored; it is assumed this will be programmatically set by the telephony provider

- Video Indexer ID (Single Line of Text): to store the ID of our call index in the Video Indexer service

- Call Keywords (Multiple Lines of Text): to store a comma-separated list of keywords from the Video Indexer insights

- Negative Sentiment Ratio (Decimal Number, minimum 0, maximum 100): to store a value representing the percentage of the call recording in which negative sentiment was detected

- Positive Sentiment Ratio (Decimal Number, minimum 0, maximum 100): to store a value representing the percentage of the call recording in which positive sentiment was detected

- Neutral Sentiment Ratio (Decimal Number, minimum 0, maximum 100): to store a value representing the percentage of the call recording in which neutral sentiment was detected

For a detailed walkthrough of adding new fields to D365 for Customer Engagement, see here.

Building our Custom Workflow Activities

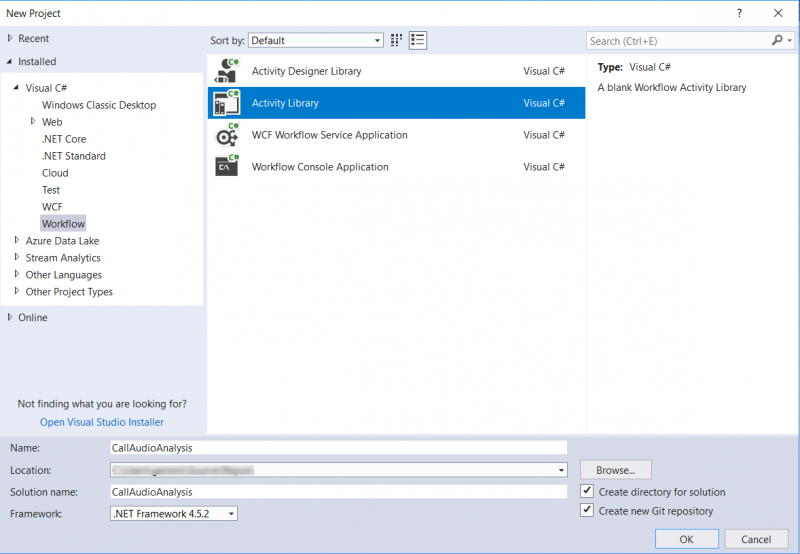

In Visual Studio, we first create a new project by selecting Workflow under Visual C# in the Installed Templates pane, and then selecting Activity Library. We name our project CallAudioAnalysis.

In our Project Properties, on the Application tab, we specify .NET Framework 4.5.2 as the target framework.

Adding References

We add references to our project by right-clicking the CallAudioAnalysis project in the Solution Explorer, and adding the following:

- Microsoft.Xrm.Sdk

- Microsoft.Xrm.Sdk.Workflow

- System.Net

- System.Net.Http

- System.Runtime.Serialization

Note that the Microsoft.Xrm.Sdk and Microsoft.Xrm.Sdk.Workflow assemblies can be retrieved via NuGet, by obtaining the following packages using the NuGet Package Manager Console:

- https://www.nuget.org/packages/Microsoft.CrmSdk.Workflow

- https://www.nuget.org/packages/Microsoft.CrmSdk.CoreAssemblies

Adding a Data Contract for the Video Indexer API

To facilitate interacting with the Video Indexer Service, we add a new item to our project: a Visual C# class which we will name VideoIndexer.JSON.cs. We will populate this file with JSON data contracts for the data that comes back in the responses for the Upload Video operation, and the Get Video Index operation.

The data contract code below is an excerpt due to its length; for the full data contract, click here.

[snippet slug=video-indexer-json-data-contract line_numbers=false lang=c-sharp height=500]
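As a rough illustration of the idea, a data contract and a small deserialization helper might look like the following. This is a minimal sketch, not the full contract from this post: the class name UploadVideoResponseSketch, the helper VideoIndexerJson, and the assumption that the upload response exposes "id" and "state" members are all illustrative.

```csharp
using System;
using System.IO;
using System.Runtime.Serialization;
using System.Runtime.Serialization.Json;
using System.Text;

// Hypothetical excerpt of a data contract for the Upload Video response.
// Only "id" and "state" are mapped; both member names are assumptions.
[DataContract]
public class UploadVideoResponseSketch
{
    [DataMember(Name = "id")]
    public string Id { get; set; }

    [DataMember(Name = "state")]
    public string State { get; set; }
}

// Hypothetical helper that turns a JSON string into a data contract object.
public static class VideoIndexerJson
{
    public static T Deserialize<T>(string json)
    {
        var serializer = new DataContractJsonSerializer(typeof(T));
        using (var stream = new MemoryStream(Encoding.UTF8.GetBytes(json)))
        {
            // JSON members without a matching [DataMember] are skipped.
            return (T)serializer.ReadObject(stream);
        }
    }
}
```

Because unmapped JSON members are skipped, a contract like this only needs to declare the parts of the response that the workflow activities actually read.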

Adding Our C# Code - UploadVideo.cs

We can delete the Activity1.xaml file in the project, and Add a new Class to the project, which we name UploadVideo.cs. This custom action will be responsible for uploading our call recording to the service.

To our new class, we:

- add some using statements

- make the class inherit from the CodeActivity class and give it a public access modifier

- add functionality to the class by adding an Execute method

We define our input and output parameters for our custom activity:

- viAccountId as an input parameter: this is the Account ID of our Video Indexer API account, retrieved in our prerequisites

- viApiKey as an input parameter: this is the Primary Key for our Video Indexer API account

- viApiLocationString as an input parameter: this is the Location parameter for our Video Indexer API account

- videoUrl as an input parameter: this is the publicly available URL from which the audio file can be retrieved

- videoId as an output parameter: this is the ID assigned to the media by the service when it is uploaded; indexing then continues in the background

- processStatus as an output parameter: this is an indicator of the success of the video upload
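The class structure and parameters described above can be sketched roughly as follows. This is a skeleton under stated assumptions rather than the actual UploadVideo.cs: the class name UploadVideoSketch, the display names passed to the Input and Output attributes, and the choice of bool for processStatus are illustrative. It compiles only with the System.Activities, Microsoft.Xrm.Sdk and Microsoft.Xrm.Sdk.Workflow references added earlier.

```csharp
using System;
using System.Activities;
using Microsoft.Xrm.Sdk;
using Microsoft.Xrm.Sdk.Workflow;

// Skeleton of a custom workflow activity; the HTTP logic is intentionally omitted.
public class UploadVideoSketch : CodeActivity
{
    [RequiredArgument]
    [Input("Video Indexer Account ID")]
    public InArgument<string> viAccountId { get; set; }

    [RequiredArgument]
    [Input("Video Indexer API Key")]
    public InArgument<string> viApiKey { get; set; }

    [RequiredArgument]
    [Input("Video Indexer Location")]
    public InArgument<string> viApiLocationString { get; set; }

    [RequiredArgument]
    [Input("Video URL")]
    public InArgument<string> videoUrl { get; set; }

    [Output("Video ID")]
    public OutArgument<string> videoId { get; set; }

    // Assumption: success is reported as a boolean.
    [Output("Process Status")]
    public OutArgument<bool> processStatus { get; set; }

    protected override void Execute(CodeActivityContext context)
    {
        // Tracing service and workflow context are provided by the platform.
        ITracingService tracing = context.GetExtension<ITracingService>();
        IWorkflowContext workflowContext = context.GetExtension<IWorkflowContext>();

        string recordingUrl = videoUrl.Get(context);
        tracing.Trace("Upload requested for record {0}, recording URL {1}",
            workflowContext.PrimaryEntityId, recordingUrl);

        // The calls to the Video Indexer API would go here.
        string newVideoId = null;
        bool uploaded = false;

        videoId.Set(context, newVideoId);
        processStatus.Set(context, uploaded);
    }
}
```

The Input and Output display names are what appear in the workflow designer when we later set the properties of the step.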

In our Execute method, we:

- Create our Tracing service for debugging, and our Context

- Obtain our input parameters

- Prepare and issue an HTTP request to the Video Indexer Authorization API, with our API Key, to obtain an ‘Account Level’ Access Token

- Prepare and issue an HTTP request to the Video Indexer Operations API, with our Access Token, to upload our audio file

- Read the response, deserialize it into an UploadVideoResponse object, and retrieve the ID of our uploaded media

- Set our processStatus and videoId output parameters

[snippet slug=uploadvideo-cs-custom-action line_numbers=false lang=c-sharp]
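The two HTTP requests in these steps might be sketched as below. This is an illustration only: the helper class VideoIndexerUploadSketch and its method names are hypothetical, and the endpoint paths, query parameters and the Ocp-Apim-Subscription-Key header reflect our reading of the Video Indexer how-to guide at the time of writing, so they should be checked against the current API reference.

```csharp
using System;
using System.Net.Http;

public static class VideoIndexerUploadSketch
{
    // Assumed API root for the Video Indexer service.
    private const string ApiRoot = "https://api.videoindexer.ai";

    // URL of the Authorization API call that returns an account-level token.
    public static string BuildAccountTokenUrl(string location, string accountId)
    {
        return string.Format(
            "{0}/auth/{1}/Accounts/{2}/AccessToken?allowEdit=true",
            ApiRoot, Uri.EscapeDataString(location), Uri.EscapeDataString(accountId));
    }

    // URL of the Operations API call that uploads media from a public URL.
    public static string BuildUploadUrl(string location, string accountId,
        string accessToken, string name, string videoUrl)
    {
        return string.Format(
            "{0}/{1}/Accounts/{2}/Videos?accessToken={3}&name={4}&privacy=private&videoUrl={5}",
            ApiRoot,
            Uri.EscapeDataString(location),
            Uri.EscapeDataString(accountId),
            Uri.EscapeDataString(accessToken),
            Uri.EscapeDataString(name),
            Uri.EscapeDataString(videoUrl));
    }

    // Requests the token; the body is assumed to be a JSON string, so quotes are trimmed.
    public static string GetAccountToken(HttpClient client, string apiKey,
        string location, string accountId)
    {
        using (var request = new HttpRequestMessage(
            HttpMethod.Get, BuildAccountTokenUrl(location, accountId)))
        {
            request.Headers.Add("Ocp-Apim-Subscription-Key", apiKey);
            using (HttpResponseMessage response = client.SendAsync(request).Result)
            {
                response.EnsureSuccessStatusCode();
                return response.Content.ReadAsStringAsync().Result.Trim().Trim('"');
            }
        }
    }

    // Posts the upload request and returns the raw JSON response body.
    public static string UploadFromUrl(HttpClient client, string uploadUrl)
    {
        using (var content = new MultipartFormDataContent())
        using (HttpResponseMessage response = client.PostAsync(uploadUrl, content).Result)
        {
            response.EnsureSuccessStatusCode();
            return response.Content.ReadAsStringAsync().Result;
        }
    }
}
```

The calls block on .Result because the Execute method of a CodeActivity is synchronous; the returned JSON body would then be deserialized to obtain the media ID.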

Adding to Our C# Code - GetVideoIndex.cs

Just as with our previous custom action, we Add another new Class to the project, which we name GetVideoIndex.cs. This custom action will be responsible for retrieving the insights from our call recording once it has been indexed by the Video Indexer service.

Just as before, to our new class, we:

- add some using statements

- make the class inherit from the CodeActivity class and give it a public access modifier

- add functionality to the class by adding an Execute method

We define our input and output parameters for our custom activity:

- viAccountId as an input parameter: this is the Account ID of our Video Indexer API account, retrieved in our prerequisites

- viApiKey as an input parameter: this is the Primary Key for our Video Indexer API account

- viApiLocationString as an input parameter: this is the Location parameter for our Video Indexer API account

- videoId as an input parameter: this is the ID of the media that we are retrieving insights for

- keywords as an output parameter: a comma-separated list of keywords from the Video Indexer insights

- negativeSentimentRatio as an output parameter: a value representing the percentage of the call recording in which negative sentiment was detected in the insights

- positiveSentimentRatio as an output parameter: a value representing the percentage of the call recording in which positive sentiment was detected in the insights

- neutralSentimentRatio as an output parameter: a value representing the percentage of the call recording in which neutral sentiment was detected in the insights

- durationInMinutes as an output parameter: the duration of the call recording, in minutes

- processStatus as an output parameter: this is an indicator of the success of the request for the video index

In our Execute method, we:

- Create our Tracing service for debugging, and our Context

- Obtain our input parameters

- Prepare and issue an HTTP request to the Video Indexer Authorization API, with our API Key, to obtain a ‘Video Level’ Access Token

- Prepare and issue an HTTP request to the Video Indexer Operations API, with our Access Token, to retrieve the indexed insights about our phone call recording

- Read the response, deserialize it into a GetVideoIndexResponse object, and retrieve the various insight components from our phone call

- Set our various output parameters

[snippet slug=getvideoindex-cs-custom-action line_numbers=false lang=c-sharp]
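Once the response has been deserialized, turning the insights into the values stored on the Phone Call record might look like the sketch below. The types KeywordSketch and SentimentSketch and the helper InsightSummarySketch are hypothetical simplifications, and we assume the service reports each sentiment as a ratio between 0 and 1, which is scaled to the 0 to 100 range of our custom fields.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical, simplified shapes for the parts of the index response used here.
public class KeywordSketch
{
    public string Name { get; set; }
}

public class SentimentSketch
{
    public string SentimentKey { get; set; }       // e.g. "Positive", "Neutral", "Negative"
    public double SeenDurationRatio { get; set; }  // assumed to be in the range 0..1
}

public static class InsightSummarySketch
{
    // Builds the comma-separated keyword list, skipping empty entries.
    public static string JoinKeywords(IEnumerable<KeywordSketch> keywords)
    {
        if (keywords == null) return string.Empty;
        return string.Join(", ", keywords
            .Where(k => k != null && !string.IsNullOrWhiteSpace(k.Name))
            .Select(k => k.Name));
    }

    // Converts the ratio for one sentiment into a percentage clamped to 0..100.
    public static decimal SentimentPercent(IEnumerable<SentimentSketch> sentiments, string key)
    {
        if (sentiments == null) return 0m;
        double ratio = sentiments
            .Where(s => s != null &&
                        string.Equals(s.SentimentKey, key, StringComparison.OrdinalIgnoreCase))
            .Sum(s => s.SeenDurationRatio);
        double percent = Math.Max(0, Math.Min(100, ratio * 100));
        return Math.Round((decimal)percent, 2);
    }

    // Converts a duration in seconds into minutes, rounded to two decimals.
    public static decimal DurationInMinutes(double seconds)
    {
        return Math.Round((decimal)seconds / 60m, 2);
    }
}
```

Clamping to the 0 to 100 range keeps the values within the minimum and maximum we configured on the Decimal Number fields, so the record update does not fail on an out-of-range value.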

Adding to Our C# Code - GetVideoToken.cs

Just as with our previous custom actions, we Add another new Class to the project, which we name GetVideoToken.cs. This custom action will be responsible for simply retrieving a Video Access Token. In our next post, we will use this custom action to help us embed further insights into Dynamics 365.

Just as before, to our new class, we:

- add some using statements

- make the class inherit from the CodeActivity class and give it a public access modifier

- add functionality to the class by adding an Execute method

We define our input and output parameters for our custom activity:

- viAccountId as an input parameter: this is the Account ID of our Video Indexer API account, retrieved in our prerequisites

- viApiKey as an input parameter: this is the Primary Key for our Video Indexer API account

- viApiLocationString as an input parameter: this is the Location parameter for our Video Indexer API account

- videoId as an input parameter: this is the ID of the media for which we are requesting an access token

- videoAccessToken as an output parameter: this is the Video Access Token, valid for 1 hour

- viAccountIdOut as an output parameter: this is the same Account ID, output for use in conjunction with the Access Token

- processStatus as an output parameter: this is an indicator of the success of the token request

In our Execute method, we:

- Create our Tracing service for debugging, and our Context

- Obtain our input parameters

- Prepare and issue an HTTP request to the Video Indexer Authorization API, with our API Key, to obtain a ‘Video Level’ Access Token

- Set our various output parameters

[snippet slug=getvideotoken-cs line_numbers=false lang=c-sharp]
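For illustration, the video-level token request and the subsequent index request could be addressed as in the sketch below. The helper VideoTokenSketch is hypothetical, and the URL patterns are assumptions based on the Video Indexer how-to guide at the time of writing rather than a definitive reference.

```csharp
using System;

public static class VideoTokenSketch
{
    // Assumed API root for the Video Indexer service.
    private const string ApiRoot = "https://api.videoindexer.ai";

    // URL of the Authorization API call that returns a token scoped to one video.
    public static string BuildVideoTokenUrl(string location, string accountId,
        string videoId, bool allowEdit)
    {
        return string.Format(
            "{0}/auth/{1}/Accounts/{2}/Videos/{3}/AccessToken?allowEdit={4}",
            ApiRoot,
            Uri.EscapeDataString(location),
            Uri.EscapeDataString(accountId),
            Uri.EscapeDataString(videoId),
            allowEdit ? "true" : "false");
    }

    // URL of the Operations API call that returns the index for one video.
    public static string BuildIndexUrl(string location, string accountId,
        string videoId, string accessToken)
    {
        return string.Format(
            "{0}/{1}/Accounts/{2}/Videos/{3}/Index?accessToken={4}",
            ApiRoot,
            Uri.EscapeDataString(location),
            Uri.EscapeDataString(accountId),
            Uri.EscapeDataString(videoId),
            Uri.EscapeDataString(accessToken));
    }

    // The token is assumed to arrive as a JSON string, so surrounding quotes are removed.
    public static string UnquoteToken(string responseBody)
    {
        return (responseBody ?? string.Empty).Trim().Trim('"');
    }
}
```

A read-only token (allowEdit set to false) should be sufficient when the token is only used to view insights.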

Before compiling our assembly, we sign it. In the project properties, under the Signing tab, we select Sign the assembly and provide a key file name.

We are now ready to compile the assembly by Building the solution.

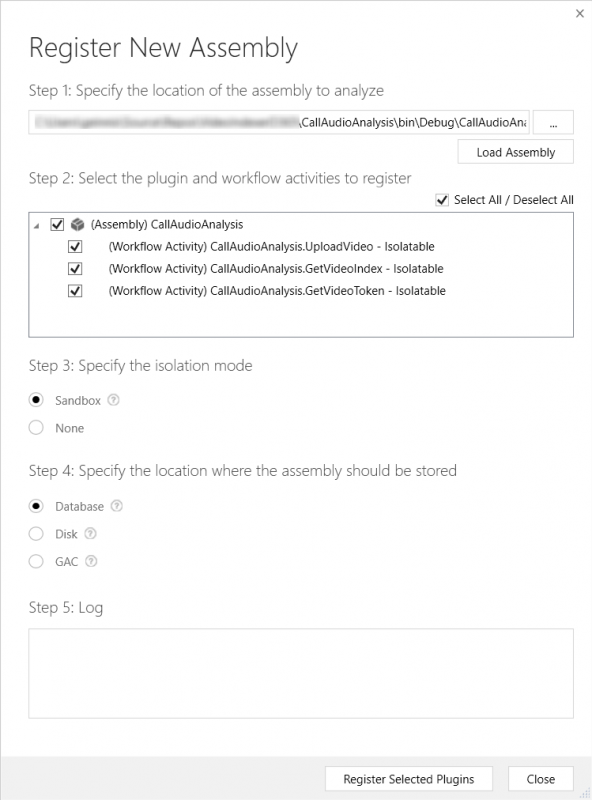

Registering our Assembly

We now need to register our custom workflow activity assembly on our Dynamics 365 Online instance. To do that, we will use the Plug-in Registration Tool. This tool is available in the Dynamics 365 Developer Tools.

Following the instructions in the documentation, we launch the tool, and authenticate using our administrator credentials for D365. We then select Register New Assembly from the Register menu.

In the resulting dialog box, we choose the location of our compiled assembly (which should be in the CallAudioAnalysis\bin\Debug folder). We select our assembly and our workflow activities for registration. We specify Sandbox as the isolation mode, and Database as the storage location. Finally, we click Register Selected Plugins:

We now have custom workflow activities that will allow us to interact with the Video Indexer API to derive insights from call recordings.

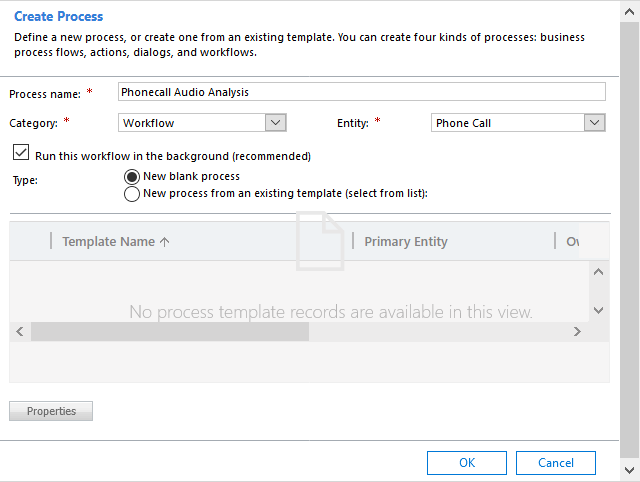

Creating our Workflow

We will now create a workflow that will make use of two of our custom actions, to upload our call recording to the service, wait until it has completed processing, then retrieve the indexed insights and update our Phone Call record.

Logged in to the Dynamics 365 web client with our administrator credentials, we navigate to Settings > Customizations > Customize the System. We choose Processes from the left navigation, and choose New.

We specify that we are creating a Workflow type of process that is applicable to the Phone Call entity, running in the background, and started when a record is created, or when the Call Recording URL field is updated:

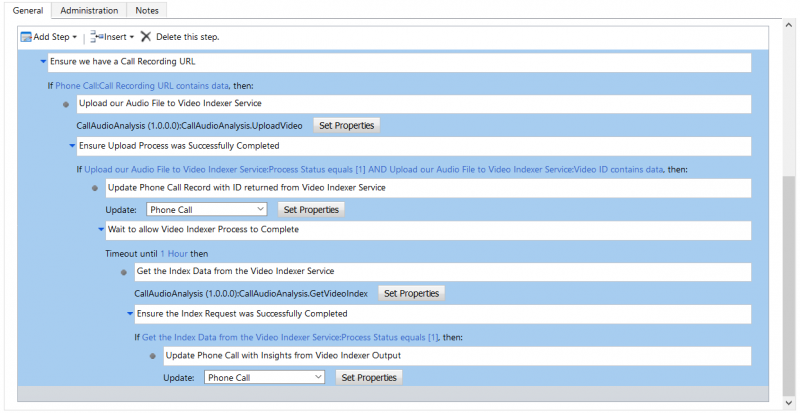

Our workflow will include the following logic:

- we check to ensure we have a Call Recording URL, and if so:

  - we use our UploadVideo custom action to upload our video

  - we check to ensure our request to upload the video was successful, and if so:

    - we update our Phone Call record with the ID from the Video Indexer Service

    - we wait a nominal duration of 1 hour for our audio to complete processing; this logic could be extended with recursive requests until indexing was complete, to expedite retrieving of the insights, and to avoid problems if processing takes longer than 1 hour

    - after our wait time, we use our GetVideoIndex custom action to retrieve our insights

    - we check to ensure our request for the insights was successful, and if so:

      - we update the phone call record with the insights
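If we wanted to replace the fixed one-hour wait with repeated checks, one possible building block is a small helper that classifies the processing state returned with the index, so that the workflow can decide whether to wait again. This is a sketch only: the names IndexingStatusSketch and IndexingStateSketch are hypothetical, and the state values "Processed" and "Failed" are assumptions about what the service reports.

```csharp
using System;

// Hypothetical three-way outcome the workflow could branch on.
public enum IndexingStatusSketch
{
    InProgress,
    Completed,
    Failed
}

public static class IndexingStateSketch
{
    // Maps the state string from the index response to a workflow-friendly status.
    // Anything other than the two terminal states is treated as still in progress.
    public static IndexingStatusSketch Classify(string state)
    {
        if (string.Equals(state, "Processed", StringComparison.OrdinalIgnoreCase))
        {
            return IndexingStatusSketch.Completed;
        }
        if (string.Equals(state, "Failed", StringComparison.OrdinalIgnoreCase))
        {
            return IndexingStatusSketch.Failed;
        }
        return IndexingStatusSketch.InProgress;
    }
}
```

Keeping the wait itself in the workflow, rather than looping inside the custom activity, avoids holding a sandboxed activity open while the service is still processing the recording.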

The complete workflow logic looks like this:

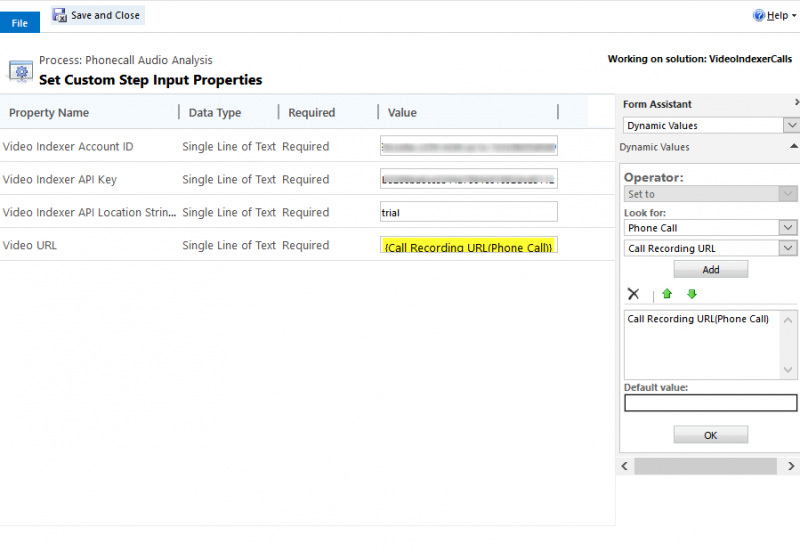

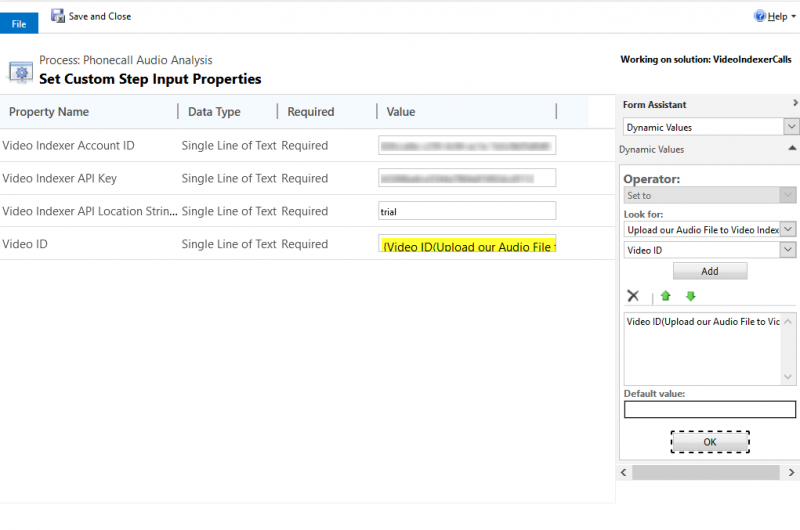

We set the input parameters to the UploadVideo action like so:

We set the input parameters to the GetVideoIndex action like so:

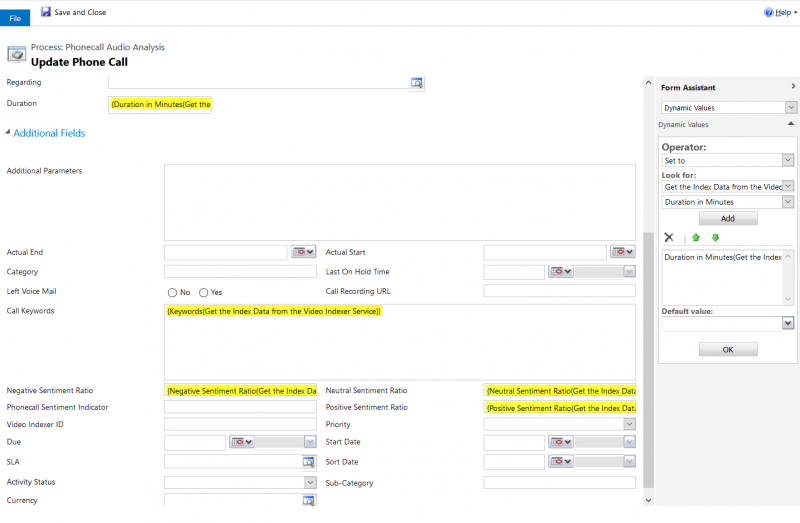

We update our phone call with insights like so:

After we Save and Activate our process, we are now ready to test it.

We can test the indexing process by creating a Phone Call record and setting the Call Recording URL attribute to a value containing the URL to a call recording. In this example, we are using a .M4A audio file, hosted in Azure Blob Storage. The recording contains a simulated phone call regarding a problem connecting an Xbox One to a network.

We can track the progress of our custom actions using the Plug-in Trace Logging feature of Dynamics. After one hour, we should be able to view insights about our phone call in Dynamics 365. This screenshot shows an updated Phone Call form, containing our newly-created custom fields:

Note that the Video Indexer service can provide a wealth of additional insights about our phone call, including the full transcript, the language being spoken, brands mentioned, instances of banned words, and much more. The custom activities and the workflow can be further extended to bring this additional metadata into Dynamics 365, if desired.

In our next post, we will build upon this one, bringing interactive Insights, Transcript, and Call Audio widgets directly into our Phone Call record in Dynamics.