Hands-On with Cognitive APIs: Text Language Detection & Translation

> Microsoft Cognitive Services (formerly Project Oxford) are a set of APIs, SDKs and services available to developers to make their applications more intelligent, engaging and discoverable. Microsoft Cognitive Services expands on Microsoft’s evolving portfolio of machine learning APIs and enables developers to easily add intelligent features – such as emotion and video detection; facial, speech and vision recognition; and speech and language understanding – into their applications. Our vision is for more personal computing experiences and enhanced productivity aided by systems that increasingly can see, hear, speak, understand and even begin to reason. (Courtesy: Microsoft)

In simple terms, Cognitive APIs help users perform everyday tasks more effectively!

This post will help you get started with Cognitive Services in Azure. The APIs are hosted in Azure and consumed as cloud services, so you can view granular details of your usage and analytics.

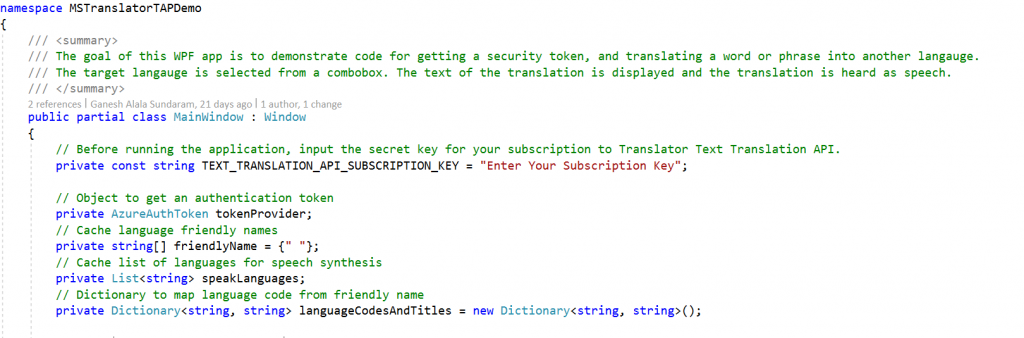

To start with, we will use the text and speech APIs to create an app. I took the sample app from https://github.com/MicrosoftTranslator/CSharp-WPF-Example and wrote a new method that detects the language of the input text by passing it to the Cognitive Services Detect API: `https://api.microsofttranslator.com/v2/Http.svc/Detect?text=`
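The detect call itself is just an HTTP GET. Below is a minimal Python sketch of it, not the sample app's C# code. It assumes the v2 endpoint quoted above, passes the key in an `Ocp-Apim-Subscription-Key` header (an assumption; the WPF sample may authenticate with a bearer token instead), and assumes the service replies with a single XML `<string>` element holding the language code. The v2 endpoint may also have been retired since this post was written, so treat the URL as illustrative.

```python
import urllib.request
import xml.etree.ElementTree as ET
from urllib.parse import quote

# Endpoint quoted in the post; the v2 service may no longer be available.
DETECT_URL = "https://api.microsofttranslator.com/v2/Http.svc/Detect?text="


def build_detect_url(text: str) -> str:
    """Percent-encode the input text and append it to the Detect endpoint."""
    return DETECT_URL + quote(text, safe="")


def parse_detect_response(xml_body: str) -> str:
    """Extract the language code from a reply such as
    <string xmlns="...">en</string> (assumed response shape)."""
    root = ET.fromstring(xml_body)
    return (root.text or "").strip()


def detect_language(text: str, subscription_key: str) -> str:
    """Call the Detect endpoint; the header name is an assumption."""
    request = urllib.request.Request(
        build_detect_url(text),
        headers={"Ocp-Apim-Subscription-Key": subscription_key},
    )
    with urllib.request.urlopen(request) as response:
        return parse_detect_response(response.read().decode("utf-8"))
```

Encoding the text with `safe=""` matters because characters such as `&` or `/` in the user's input would otherwise break the query string. If the endpoint is live, `detect_language("Bonjour le monde", "<your-key>")` should return a code such as `fr`.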

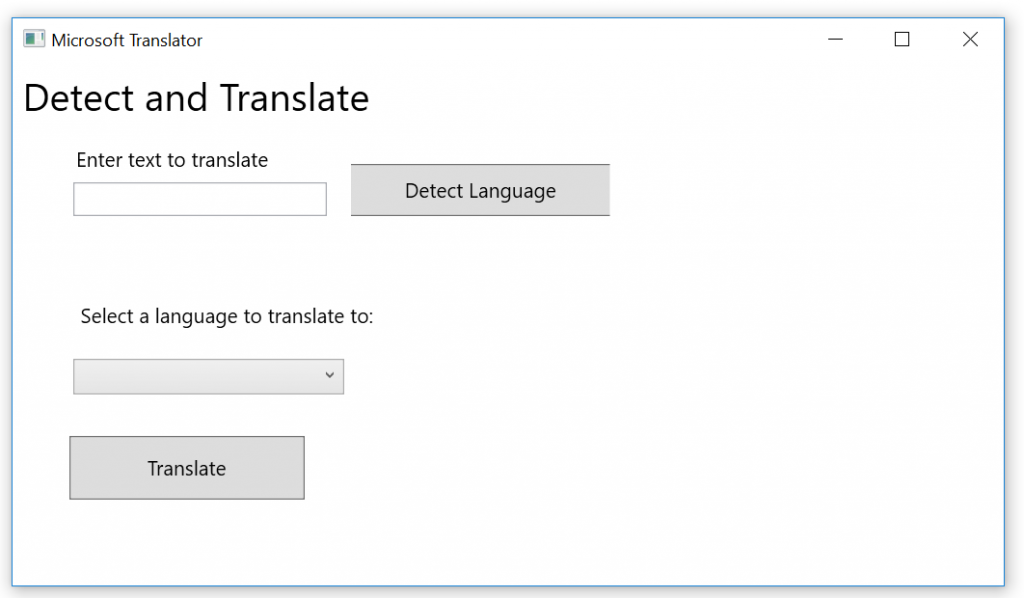

Steps to follow:

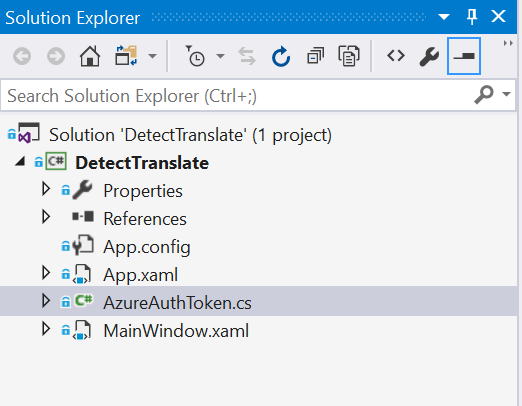

1. Download my sample app from https://github.com/ganesh-alalasundaram/PlugnPlay/tree/master/CognitiveApi_Sample

2. Open the solution.

3. Enter the subscription key that you obtained from Azure. (You can refer here to create the subscription.)

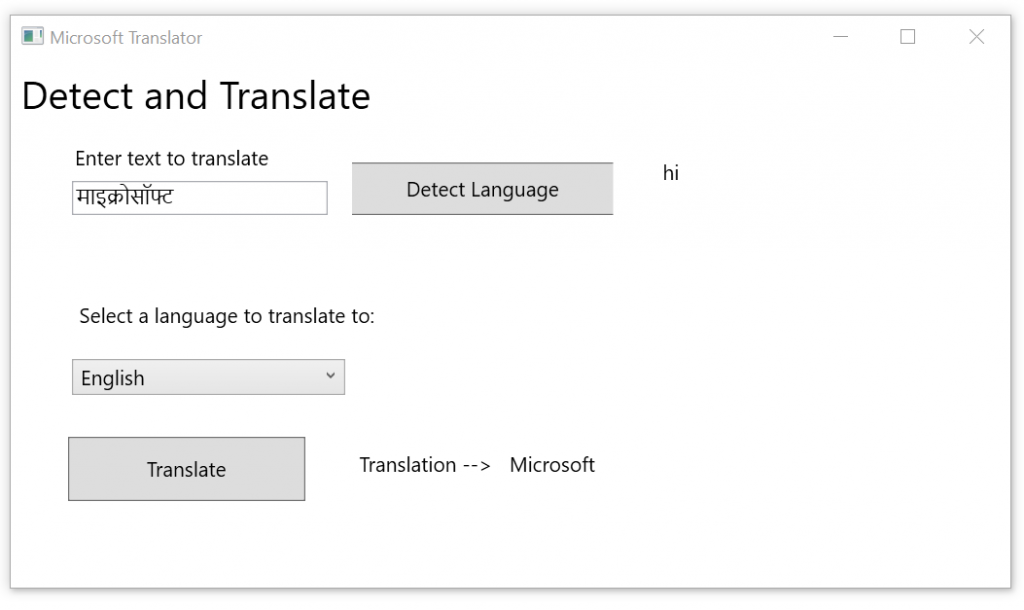

4. Run the app, enter some text and click Detect Language. The app displays the detected language code.

Next, select a target language from the drop-down list and click the Translate button. The app translates the entered text into the selected language.
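The translate step can be sketched the same way. This is a minimal Python sketch, not the sample app's code: the `/v2/Http.svc/Translate` endpoint with `text`, `from` and `to` query parameters, the `Ocp-Apim-Subscription-Key` header and the XML `<string>` response shape are all assumptions modelled on the Detect endpoint quoted earlier in this post, and the v2 service may since have been retired.

```python
import urllib.request
import xml.etree.ElementTree as ET
from urllib.parse import urlencode

# Hypothetical: v2 Translate endpoint, assumed to mirror the Detect endpoint.
TRANSLATE_URL = "https://api.microsofttranslator.com/v2/Http.svc/Translate"


def build_translate_url(text: str, source: str, target: str) -> str:
    """Encode the text plus source and target language codes as query parameters."""
    query = urlencode({"text": text, "from": source, "to": target})
    return f"{TRANSLATE_URL}?{query}"


def parse_translate_response(xml_body: str) -> str:
    """Return the translated text from a single <string> element (assumed shape)."""
    root = ET.fromstring(xml_body)
    return root.text or ""


def translate(text: str, source: str, target: str, subscription_key: str) -> str:
    """Call the assumed Translate endpoint and return the translated text."""
    request = urllib.request.Request(
        build_translate_url(text, source, target),
        headers={"Ocp-Apim-Subscription-Key": subscription_key},
    )
    with urllib.request.urlopen(request) as response:
        return parse_translate_response(response.read().decode("utf-8"))
```

In the app's flow, `source` can be the code returned by the Detect Language step and `target` the value picked from the drop-down list.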