Implementing System Quality Attributes

I recently participated in an IASA initiative to document the skills needed by Software Architects, and for it I wrote an article on Implementing System Quality Attributes. The article follows below.

Here's the location of the article on IASA: https://www.iasahome.org/c/portal/layout?p_l_id=PUB.1.269&p_p_id=20&p_p_action=1&p_p_state=exclusive&p_p_col_id=null&p_p_col_pos=3&p_p_col_count=4&_20_struts_action=%2Fdocument_library%2Fget_file&_20_folderId=61&_20_name=01-Implementing.pdf

Here's the location of the IASA initiative: https://www.iasahome.org/web/home/skillset

Here's the article on Skyscrapr: https://msdn2.microsoft.com/en-us/architecture/bb402962.aspx

What do you think?

Implementing System Quality Attributes

In this article, I describe how to implement system quality attributes in software solutions, with the expected benefit of improving return on investment from IT systems.

Gabriel Morgan

Implementing Quality Attributes

When I was a teenager, my friend’s father gave him the family’s spare station wagon as his first car. It was an old, decrepit Ford LTD station wagon, not exactly the type of car anyone our age would flaunt to attract the kind of attention we were after. But it was the only car either of us had, so it was just fine…once we had fixed it up a bit, that is. We decided to give it something closer to the look of a roadster by raising the rear wheels, replacing the carburetor with a set of after-market dual carburetors, replacing the aged seats with racing bucket seats with 5-point harnesses and giving it a new coat of paint.

During that experience, I remember leafing through loads of catalogs describing parts from various after-market manufacturers, all of which matched the published Ford LTD station wagon specifications for the 454 Cleveland V8 engine, interior seat mounts and rear suspension brackets needed for the car’s conversion. We chose what we could afford and installed the parts ourselves by following simple installation instructions. We didn’t need to rebuild anything, force-fit any of the parts or fabricate any custom mounts to reuse that old station wagon for our new purposes. At the time, this meant nothing to me other than a bit of fun over a couple of weeks of our summer break.

Now, let me tell you a very different story about a very similar event. I once delivered a software solution for a customer who was a market leader in online retail, selling primarily computer goods. The solution consisted of a set of systems that automated the business processes from order acceptance through fulfillment. The customer was thrilled the day the solution went into production, because it meant he could reduce costs by automating many of the manual activities in these processes and focus on empowering his marketing group.

Wahoo, drinks for everyone…until a couple of years later.

The industry became increasingly competitive, and in order to survive, my customer needed to diversify into several lines of retail goods, outsource billing services to lower costs, lower his prices by comparing multiple suppliers’ price offerings, and commit to delivery dates by partnering with multiple shipping companies. He hoped to win new customers and improve customer satisfaction. The systems I developed were not built to easily support this kind of business change, nor were they designed to be compatible with the flurry of IT packages that hit the market offering specialized components for system integration, customer care management, faster supplier enablement, marketing campaign management, etc. I ultimately had to redesign parts of the architecture, which took considerably more time than it would have if I had designed them for change in the first place. In the end, the systems required a major overhaul to meet the needs of the business.

Let’s go back to my story of repurposing my friend’s station wagon for the needs of two teenagers and compare it with my experience converting my customer’s online retail systems to support multiple store channels and multiple supplier relationships and to provide more sophisticated functionality. The two experiences were similar in concept but very different in execution. A few years ago I began to think about this and ask myself some questions. Could my original online retail systems have been more resilient to changes in the business processes as well as changes in technology? Is it possible to design systems to be more resilient to change and reduce the chances of having to perform major overhauls? I believe it is.

I’m not suggesting that software architects alone can bring our industry to the level of efficiency the automobile industry has today. That takes experience, industrialization, collaboration between car manufacturers, a healthy and competitive market of part suppliers, and adherence to industry standards and quality control, all of which we in the software industry salivate for. What I am suggesting is that we learn from the design concepts that have made the automobile industry so successful and see how we go.

I propose that Solution Architects, in addition to contributing to the delivery of a working system, are also responsible for ensuring system quality with the express intention of building systems that are resilient to change. In this article, I describe an approach that Solution Architects can use to build systems designed to sustain changes in business processes as well as in technology platforms, and so reap the long-term benefit of improving the customer’s return on investment.

Plan for implementing system quality

To implement system quality, it’s best to start with a plan. Software quality, by definition, is the degree to which software possesses a desired combination of attributes (IEEE 1992). Therefore, to improve system quality, we need to focus our attention on software quality attributes. Ultimately, there are only a few system quality attribute primitives to which all system qualities can be mapped. I’m quite fond of the system quality attributes listed in Appendix A: System Quality Attributes, which include Agility, Flexibility, Reusability, Performance, Security, etc. Note that these are by no means the definitive set of system quality attributes, nor are their definitions the only acceptable ones. I’ve used them and have been relatively successful with them. The important thing is to settle on a set so that you can clearly communicate to stakeholders the system quality impacts of your architecture decisions.

Back to my story about the station wagon conversion…I was pleased with how little effort it took to modify and extend the Ford LTD station wagon. Its designers predicted that owners would want to replace parts with after-market components, and they designed the car to be maintained (in my case, upgraded) with compatible parts from other car part vendors.

A few system qualities contributed to this positive experience, such as Maintainability and Testability, but one stands out: system Flexibility. The Ford LTD station wagon designers specifically designed the car for Flexibility. Maybe not in these exact terms, but I do think they intended the car to accept parts made by manufacturers other than Ford. Of course, the primary driver for the Ford designers to optimize for Flexibility was probably not to benefit the after-market car part manufacturers but rather to let Ford choose among several bidding part manufacturers and strike the right balance of quality and cost for its production model. As a consequence, car part manufacturers benefitted from after-market sales by providing upgraded replacement parts to the same flexible design specification.

Someone smart once said, "You can't manage what you can't measure." Therefore, you need to think about how to measure system quality so that you can plan how to monitor it throughout the software lifecycle. You will need to lay out what you expect the system designers to build so that the system is optimized for system quality. A good approach for planning how to implement system quality is the IEEE Software Quality Metrics Methodology (IEEE 1992). Basically, this methodology suggests the following steps to define and monitor system quality metrics:

1. Establish software quality requirements

2. Identify software quality metrics

3. Implement software quality metrics

4. Analyze results of these metrics, and finally

5. Validate the metrics
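The five steps above can be sketched as a small data model. This is a minimal sketch under stated assumptions, not the standard’s own notation: the names `QualityRequirement`, `QualityMetric` and `is_valid`, the lower-is-better target, the 0.7 correlation threshold and the sample figures are all hypothetical, and Pearson correlation against an independently observed quality signal stands in for a fuller metric validation.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from math import sqrt


# Step 1: establish a quality requirement (hypothetical structure).
@dataclass
class QualityRequirement:
    attribute: str
    description: str
    target: float  # upper bound; lower measured values are better


# Step 2: identify a metric that measures the requirement.
@dataclass
class QualityMetric:
    requirement: QualityRequirement
    name: str
    samples: list[float] = field(default_factory=list)

    # Step 3: implement the metric by recording measurements.
    def record(self, value: float) -> None:
        self.samples.append(value)

    # Step 4: analyze results, returning samples that miss the target.
    def violations(self) -> list[float]:
        return [v for v in self.samples if v > self.requirement.target]


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Step 5: validate the metric: does it track an independently observed signal?
def is_valid(metric: QualityMetric, observed: list[float], min_r: float = 0.7) -> bool:
    return pearson(metric.samples, observed) >= min_r


req = QualityRequirement("Flexibility", "Change a business process without code changes", target=1.0)
hours = QualityMetric(req, "hours of labor per business-process change")
for h in [0.5, 0.8, 1.5, 2.0]:
    hours.record(h)

print(hours.violations())  # [1.5, 2.0]
# Hypothetical stakeholder-rated difficulty of each change (1 = easy, 5 = hard).
print(is_valid(hours, [1.0, 2.0, 4.0, 5.0]))  # True
```

Step 5 deliberately checks the metric rather than the system: a metric that does not move with the quality it is meant to represent is not worth monitoring.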

Prioritizing system quality attributes

The IEEE Software Quality Metrics Methodology (IEEE 1992) is more of a framework than a strict methodology. You need to add the details yourself to make it work.

First, prioritize the system quality attributes before spending time identifying software quality metrics; this speeds up the process. After all, what’s the point of identifying software quality metrics for system quality attributes you are not going to monitor because they are not a priority?

Second, don’t strictly follow the serial process for steps 4 and 5, “Analyze results of these metrics” and “Validate the metrics”. It is good practice to check early that well-engineered system quality requirements exist as part of the overall solution requirements. As Karl Wiegers notes, “software quality attributes or quality factors, are part of the system’s nonfunctional (also called nonbehavioral) requirements.” (Wiegers 2003) The assumption here is that including system quality requirements in the solution’s project requirements improves the chances of a high-quality solution. For this reason, don’t assume that you have to complete each step before moving to the next, or you may miss the boat on an opportunity to improve system quality. For example, you may find yourself well into the design phase of your project by the time you complete steps 1 through 3. When you then monitor and analyze the metrics for system quality requirements, you realize that it is too late to go back and inject the missing system quality requirements into the project, and you are left with more risk of developing a poor-quality solution.

Now that you have a set of defined system quality attributes to draw upon, the next step is to prioritize them for your particular solution and think about how you will go about monitoring for them throughout the software development lifecycle with the aim of determining if system quality is being implemented.

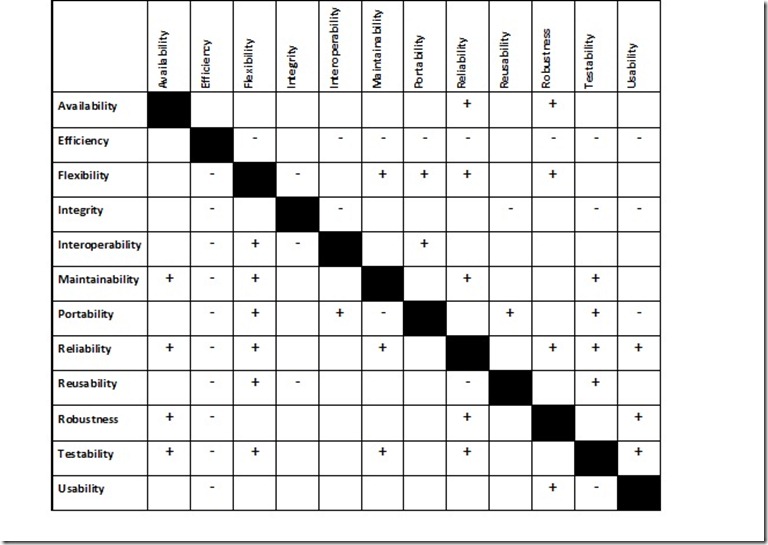

Ideally, you would want to optimize for all quality attributes, but in fact this is nearly impossible because any given system has Tradeoff Points (Clements 2002) which prevent this. A Tradeoff Point is a property that affects one or more attributes. Essentially, changing one quality attribute often forces a change in another quality attribute…either positively or negatively. This is important because knowing the prioritized system quality attributes and their Tradeoff Points aids the decision-making process during design activities.

The graph below is taken from the book Software Requirements Second Edition (Wiegers 2003) and describes an example set of system quality attributes and their Tradeoff Points.

Table 1: System Quality Tradeoff Points

Here’s a quick example to illustrate Tradeoff Points. Suppose a system service is needed to process banking transactions, and the business has noted in the requirements that it must be fast. Assuming that the system designer has not read this article, she immediately begins to optimize the service for Performance. In this hypothetical case, she might build a high-performance system that:

· Captures system requests directly from a UDP communications port

· Uses proprietary message communication semantics

· Accepts system requests and processes application logic for system requests in a single process space.

· Embeds application logic in procedural code

· Persists data in local memory space for quick put/get operations.

· Sends responses to system calls in real-time to a high-performance receiving application preferably as close to the hardware level as possible using a proprietary binary communication transport.

Such a system has a number of Tradeoff Points. The three that stick out for me are:

· Interoperability. It will be difficult for other systems to interoperate with this one because of the connectionless, unreliable UDP protocol and the proprietary message formats, which are not based on industry standards.

· Flexibility. The application’s design doesn’t lend itself to being changed and reused for other purposes because of its proprietary protocols, tight coupling of messaging and application logic, and proprietary memory store. The application also lacks decomposition into encapsulated components, which prevents other components from being plugged into the system.

· Reliability. UDP packets can be lost, and in-memory data is not persisted to disk, so it could be lost if the memory is released due to a process fault, reboot, etc.

It may be that these sacrifices are intentional and accepted in order to achieve a high-performance system…but maybe not. The business and IT owners may actually want the system to be flexible enough to withstand changes to the business processes it supports, or the technology changes that are inevitable. The point is that optimizing for one system quality attribute involves tradeoffs against others, and if systems are designed with this understanding upfront, there are fewer surprises down the road.
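Recording Tradeoff Points explicitly helps make them visible before they turn into surprises. This is a minimal sketch under stated assumptions: each design decision from the banking example is given a hypothetical +1/-1 effect per attribute, based on the three tradeoffs described above, and any decision that affects more than one attribute and degrades a prioritized attribute is flagged for discussion with stakeholders. The function name `tradeoff_points` and the decision labels are illustrative only.

```python
from __future__ import annotations

# Effect of each design decision from the banking example on quality
# attributes: +1 improves the attribute, -1 degrades it (hypothetical scale).
DECISIONS: dict[str, dict[str, int]] = {
    "capture requests directly from a UDP port": {
        "Performance": +1, "Reliability": -1, "Interoperability": -1},
    "proprietary message formats and transport": {
        "Performance": +1, "Interoperability": -1, "Flexibility": -1},
    "single process with procedural application logic": {
        "Performance": +1, "Flexibility": -1},
    "persist data only in local memory": {
        "Performance": +1, "Reliability": -1, "Flexibility": -1},
}


def tradeoff_points(decisions: dict[str, dict[str, int]],
                    priorities: set[str]) -> dict[str, list[str]]:
    """Return decisions that affect more than one attribute and degrade
    at least one prioritized attribute, mapped to the attributes degraded."""
    flagged: dict[str, list[str]] = {}
    for decision, effects in decisions.items():
        if len(effects) < 2:
            continue  # touches a single attribute, so not a tradeoff point
        hurt = sorted(a for a, e in effects.items() if e < 0 and a in priorities)
        if hurt:
            flagged[decision] = hurt
    return flagged


priorities = {"Performance", "Flexibility", "Reliability"}
for decision, hurt in tradeoff_points(DECISIONS, priorities).items():
    print(f"{decision}: degrades {', '.join(hurt)}")
```

With Interoperability left out of the priorities, the UDP decision is flagged only for Reliability; adding Interoperability to the set would surface that tradeoff as well.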

There are several processes that describe how to prioritize system quality attributes to derive a system architecture, such as the Software Engineering Institute’s (SEI) Attribute-Driven Design (Kazman 2000). In addition, some Tradeoff Points are fairly well documented in SEI’s Architecture Tradeoff Analysis Method (ATAM). ATAM is a very sophisticated method for determining Tradeoff Points through attribute characterizations, which use the Stimuli-Architectural Decision-Responses construct (Kazman 2000).

ATAM focuses on evaluating software architectures and includes techniques for identifying and prioritizing system quality attributes. There are several other techniques and methods in the industry for identifying system quality Tradeoff Points. Which to use, and how much of these industry practices to adopt, depends on factors such as budget, resource competency, time to deliver, and solution size and complexity.

I often simply adopt key concepts from these methods, infer a set of likely important system quality attributes from the solution requirements, and collaborate with the key stakeholders to decide on three high-priority system quality attributes to focus the team on. So, my advice is to understand the approaches out there and weigh them for each solution to determine what’s best to include in your plan.
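A simple way to turn stakeholder input into a short list is a weighted vote. The sketch below is an illustrative assumption rather than a step from ATAM or Attribute-Driven Design: each stakeholder group has a hypothetical weight and rates each attribute from 1 to 5, and the attributes with the three highest weighted totals become the team’s focus.

```python
from __future__ import annotations


def top_attributes(ratings: dict[str, dict[str, int]],
                   weights: dict[str, float], n: int = 3) -> list[str]:
    """Rank quality attributes by weighted stakeholder ratings."""
    totals: dict[str, float] = {}
    for group, group_ratings in ratings.items():
        for attribute, score in group_ratings.items():
            totals[attribute] = totals.get(attribute, 0.0) + weights[group] * score
    # Highest total first; ties broken alphabetically so results are stable.
    ranked = sorted(totals, key=lambda a: (-totals[a], a))
    return ranked[:n]


# Hypothetical ratings (1 = unimportant, 5 = critical) and group weights.
ratings = {
    "business": {"Flexibility": 5, "Performance": 3, "Security": 4, "Usability": 4},
    "IT operations": {"Flexibility": 4, "Performance": 4, "Security": 5, "Supportability": 5},
}
weights = {"business": 0.6, "IT operations": 0.4}

print(top_attributes(ratings, weights))  # ['Flexibility', 'Security', 'Performance']
```

The numbers only structure the conversation; the final three should still be agreed with the stakeholders, since a weighted total cannot capture every Tradeoff Point between the attributes.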

Monitor system quality attributes

With a plan in place, we are ready to define system quality metrics and track them throughout the software development lifecycle. The purpose of using metrics is to reduce subjectivity during monitoring activities and to provide quantitative data for analysis.

So, what metrics should a Solution Architect inject into the solution to better address system quality? Any good system architecture has quality requirements, so this is a good place to start. To illustrate, let’s take system Flexibility, a quality attribute I consider very important for delivering Agility to business and IT stakeholders. Here are some Flexibility metrics to consider as examples of what to monitor:

· Quality of Service (QoS) system requirements. The Solution Architect is responsible for the integrity of a solution, and a lack of well-defined system requirements can lead to confusion downstream and, eventually, a poor-quality solution. To mitigate this, ensure that the system requirements include QoS requirements, particularly those correlated with the prioritized system quality attributes. Accompanying QoS requirements with Use Cases and Quality Scenarios (Bass Kazmann 1999) further improves how the requirements are communicated to the project team and reduces misinterpretation. For the Flexibility example, here are two QoS examples:

1. “A business user with at least six months of experience in the field shall be able to modify a core business process automated by the system, without any change to the system’s source code, and place the change into a queue to be tested, with no more than one hour of labor.” This requirement addresses the system’s ability to withstand changes in business processes.

2. “A software engineer shall be able to change the Find Customer function in the solution to point from the current SAP CRM module to the Customer Search service interface provided by Microsoft CRM, and release the code changes to the testing environment, within one day, including the effort to research, design, code, unit test and document the change.” This requirement addresses the system’s ability to withstand changes in IT systems.

· System patterns that improve quality. The Solution Architect should also identify the system patterns that designers should use to optimize for the prioritized set of system quality attributes. In my example of system Flexibility, patterns such as Façade (Gamma 1995), Adapter (Gamma 1995) and Service Layer (Fowler 2003) improve system Flexibility.

· System anti-patterns that degrade quality. Solution Architects should also provide guidance on what system designers are to avoid; one way to do this is to identify the anti-patterns that negatively affect system quality. In my example of system Flexibility, anti-patterns such as Shared Database (Hohpe Woolf 2004) and Data Replication (Fuller Morgan 2006) degrade system Flexibility.
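The second QoS example and the Adapter pattern fit together naturally: if Find Customer depends only on a small interface, pointing it at a different CRM means writing one adapter rather than changing its callers. This is a minimal sketch under stated assumptions; the `CustomerSearch` interface, both adapter classes, their record shapes and the in-memory data are hypothetical stand-ins, and no real SAP or Microsoft CRM API is used or implied.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: str
    name: str


class CustomerSearch(ABC):
    """Stable interface that the Find Customer function depends on."""

    @abstractmethod
    def find(self, name: str) -> list[Customer]: ...


class SapCrmAdapter(CustomerSearch):
    """Adapts a hypothetical SAP-side record shape: (id, name) tuples."""

    def __init__(self, records: list[tuple[str, str]]):
        self._records = records  # stand-in for a real SAP CRM client

    def find(self, name: str) -> list[Customer]:
        return [Customer(cid, n) for cid, n in self._records
                if name.lower() in n.lower()]


class MsCrmAdapter(CustomerSearch):
    """Adapts a hypothetical Microsoft CRM record shape: dicts."""

    def __init__(self, records: list[dict[str, str]]):
        self._records = records  # stand-in for a Customer Search service client

    def find(self, name: str) -> list[Customer]:
        return [Customer(r["id"], r["display_name"]) for r in self._records
                if name.lower() in r["display_name"].lower()]


class FindCustomer:
    """Single entry point callers use; it never names a specific CRM."""

    def __init__(self, search: CustomerSearch):
        self._search = search

    def __call__(self, name: str) -> list[Customer]:
        return sorted(self._search.find(name), key=lambda c: c.customer_id)


sap = SapCrmAdapter([("C001", "Ada Lovelace"), ("C002", "Alan Turing")])
ms = MsCrmAdapter([{"id": "C001", "display_name": "Ada Lovelace"}])

# Switching CRMs is a one-line change where FindCustomer is constructed.
print(FindCustomer(sap)("ada"))
print(FindCustomer(ms)("ada"))
```

Both calls return the same `Customer` list, which is the property the QoS requirement is really asking for: the switch is confined to one new adapter plus the line that wires it in.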

The point is that it’s up to the Solution Architect to identify the metrics used to measure system quality. Unfortunately, no one has defined a complete, mathematically rigorous set of measures, so this can be a challenge. Define as much as you can, use existing metric definitions from industry practices as a starting point, and build on them from your experience.
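Writing a requirement as a quality scenario makes its metric explicit, because the scenario ends in a measurable response. The sketch below records the second Flexibility requirement using the six-part scenario structure (source, stimulus, environment, artifact, response, response measure) found in SEI’s quality attribute literature; the class name, field values and measured efforts are hypothetical, and reading “within one day” as one 8-hour working day is an assumption.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class QualityScenario:
    """Six-part quality attribute scenario; the response measure is max_hours."""
    source: str
    stimulus: str
    environment: str
    artifact: str
    response: str
    max_hours: float  # response measure: upper bound on effort in hours

    def is_met(self, measured_hours: float) -> bool:
        return measured_hours <= self.max_hours


crm_switch = QualityScenario(
    source="Software engineer",
    stimulus="Point Find Customer from SAP CRM to Microsoft CRM Customer Search",
    environment="Development; changes released to the testing environment",
    artifact="Find Customer function",
    response="Change researched, designed, coded, unit tested and documented",
    max_hours=8.0,  # assumption: "within one day" read as one 8-hour working day
)

print(crm_switch.is_met(6.5))   # True
print(crm_switch.is_met(12.0))  # False
```

Each time the team actually makes a change of this kind, the measured effort becomes a data point for the metric, so the requirement can be checked rather than argued about.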

Once the system quality metrics are defined, embed them into the solution artifacts and monitor their adoption throughout the software lifecycle. During the build and testing phases, review the system for deviations from what you defined, and consider those deviations for correction.
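Some of this review can be automated. As a minimal sketch, assuming you can list the databases each service connects to (the function name, service names and database names below are hypothetical), this check reports every database used by more than one service as a candidate instance of the Shared Database anti-pattern (Hohpe Woolf 2004), that is, a deviation to consider for correction.

```python
from __future__ import annotations

from collections import defaultdict


def shared_databases(connections: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each database used by two or more services to those services."""
    users: dict[str, set[str]] = defaultdict(set)
    for service, databases in connections.items():
        for db in databases:
            users[db].add(service)
    return {db: sorted(svcs) for db, svcs in users.items() if len(svcs) > 1}


# Hypothetical inventory gathered during a design or code review.
connections = {
    "OrderService": {"orders_db"},
    "BillingService": {"billing_db", "orders_db"},
    "ShippingService": {"shipping_db"},
}

for db, services in shared_databases(connections).items():
    print(f"Deviation: {db} is shared by {', '.join(services)}")
```

A flagged database is a prompt for discussion, not an automatic failure; the review still decides whether the sharing is an accepted tradeoff.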

By building system quality into the solution with the approach described in this article, you position the solution not only to give the business owner a quality solution but also to withstand changes in business and technology, thereby maximizing the return on investment.

Review

Let’s go through a quick summary of the three key points in this article. First, build high-quality software. While this may seem obvious, I’m pointing it out once again for the few who somehow could have missed it. :) Second, understand that there are industry practices that guide Solution Architects in building high-quality software systems, and leverage them. Third, build a plan for implementing system quality in your solution, and avoid trying to optimize for all quality attributes, as this is nearly impossible. Instead, prioritize them, take the top three quality attributes and focus your attention on them. If you succeed, you will have improved the chances that your software will last longer, and you will reap the long-term benefit of improving the customer’s return on investment.

Critical Thinking Questions

1. What are the most significant system quality attributes which contribute to Agility and how would you weight them?

2. Besides Quality of Service requirements, design patterns and anti-patterns, what other measurable metrics could you monitor to track system quality?

3. What other methods beyond giving guidance to system designers could you employ to improve system quality? That is, are there methods that other team members could adopt as their responsibility to improve system quality?

Appendix A: System Quality Attributes

| Quality Attribute | Definition |
|---|---|
| Agility | Agility is the ability of a system to be both flexible and undergo change rapidly. (MIT ESD 2001) |
| Flexibility | Flexibility is the ease with which a system or component can be modified for use in applications or environments other than those for which it was specifically designed. (Barbacci 1995) |
| Interoperability | Interoperability is the ability of two or more systems or components to exchange information and to use the information that has been exchanged. (IEEE 1990) |
| Maintainability | Maintainability is: (a) the aptitude of a system to undergo repair and evolution (Barbacci 2003); or (b) (1) the ease with which a software system or component can be modified to correct faults, improve performance or other attributes, or adapt to a changed environment, or (2) the ease with which a hardware system or component can be retained in, or restored to, a state in which it can perform its required functions (IEEE Std. 610.12). |
| Reliability | Reliability is the ability of the system to keep operating over time. Reliability is usually measured by mean time to failure. (Bass 1998) |
| Reusability | Reusability is the degree to which a software module or other work product can be used in more than one computing program or software system. (IEEE 1990) This typically takes the form of reusing software that is an encapsulated unit of functionality. |
| Supportability | Supportability is the ease with which a software system is operationally maintained. |
| Performance | Performance is the responsiveness of the system – the time required to respond to stimuli (events) or the number of events processed in some interval of time. Performance qualities are often expressed by the number of transactions per unit time or by the amount of time it takes to complete a transaction with the system. (Bass 1998) |
| Security | Security is a measure of the system’s ability to resist unauthorized attempts at usage and denial of service while still providing its services to legitimate users. Security is categorized in terms of the types of threats that might be made to the system. (Bass 1998) |
| Scalability | Scalability is the ability to maintain or improve performance while system demand increases. |
| Testability | Testability is the degree to which a system or component facilitates the establishment of test criteria and the performance of tests to determine whether those criteria have been met. (IEEE 1990) |
| Usability | Usability is: (a) the measure of a user’s ability to utilize a system effectively (Clements 2002); (b) the ease with which a user can learn to operate, prepare inputs for, and interpret outputs of a system or component (IEEE Std. 610.12); or (c) a measure of how well users can take advantage of some system functionality. Usability is different from utility, a measure of whether that functionality does what is needed. (Barbacci 2003) |
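The Reliability and Performance definitions above name quantities that can be computed directly. As a small illustration with hypothetical figures, this sketch estimates mean time to failure as the average operating time before each observed failure and throughput as transactions completed per second; the function names are illustrative only.

```python
from __future__ import annotations


def mean_time_to_failure(hours_until_failure: list[float]) -> float:
    """Average operating time before failure across observed failures."""
    if not hours_until_failure:
        raise ValueError("need at least one observed failure")
    return sum(hours_until_failure) / len(hours_until_failure)


def throughput_per_second(transactions: int, elapsed_seconds: float) -> float:
    """Transactions completed per second over a measurement window."""
    return transactions / elapsed_seconds


print(mean_time_to_failure([700.0, 900.0, 800.0]))  # 800.0
print(throughput_per_second(12_000, 60.0))          # 200.0
```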

Sources

- [Bachmann 2000] Bachmann, F.; Bass, L.; Chastek, G.; Donohoe, P. & Peruzzi, F. The Architecture Based Design Method (CMU/SEI-2000-TR-001 ADA375851). Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 2000. Available WWW: <URL: https://www.sei.cmu.edu/publications/documents/00.reports/00tr001.html>.

- [Barbacci 1995] Barbacci, M.; Klein, M.; Longstaff, T.; Weinstock, C. Quality Attributes. Technical Report CMU/SEI-95-TR-021 ESC-TR-95-021. Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 1995.

- [Barbacci 2003] Barbacci, M. Software Quality Attributes and Architecture Tradeoffs. Software Engineering Institute, Carnegie Mellon University. Pittsburgh, PA.

- [Bass 1998] Bass, L.; Clements, P.; & Kazman, R. Software Architecture in Practice. Reading, MA: Addison-Wesley, 1998.

- [Bass Kazmann 1999] Bass, L.; Clements, P.; & Kazman, R. Architecture-Based Development.

- [Fowler 2003] Martin Fowler. Patterns of Enterprise Application Architecture, Boston, MA. Addison-Wesley.

- [Fuller Morgan 2006] Tom Fuller, Shawn Morgan. Data Replication as an Enterprise SOA Antipattern. The Architecture Journal, Issue 8. Microsoft Corporation, 2006. <URL: https://architecturejournal.net/2006/issue8/F4_Data/default.aspx>

- [Gamma 1995] Gamma, E.; Helm, R.; Johnson, R.; & Vlissides, J. Design Patterns: Elements of Reusable Object-Oriented Software. Addison-Wesley, 1995.

- [Hohpe Woolf 2004] Gregor Hohpe and Bobby Woolf. Enterprise Integration Patterns. Boston, MA. Addison-Wesley.

- [IEEE 1990] Institute of Electrical and Electronics Engineers. IEEE Standard Computer Dictionary: A Compilation of IEEE Standard Computer Glossaries. New York, NY: IEEE, 1990.

- [IEEE 1992] IEEE Std 1061-1992: IEEE Standard for a Software Quality Metrics Methodology. Los Alamitos, CA: IEEE Computer Society Press.

- [Kazman 2000] Kazman, R.; Klein, M. & Clements, P. ATAM: Method for Architecture Evaluation (CMU/SEI-2000-TR-004 ADA382629). Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 2000. Available WWW: <URL: https://www.sei.cmu.edu/publications/documents/00.reports/00tr004.html>.

- [MIT ESD 2001] Tom Allen, Don McGowan, Joel Moses, Chris Magee, Dan Hastings, Fred Moavenzadeh, Seth Lloyd, Debbie Nightingale, John Little, Dan Roos, Dan Whitney. ESD Terms and Definitions (Version 12) ; Massachusetts Institute of Technology. Engineering Systems Division. ESD-WP-2002-01, October.

- [Wiegers 2003] Karl Wiegers. Software Requirements Second Edition; One Microsoft Way, Redmond, WA; Microsoft Press.

About The Author

Gabriel Morgan has over 18 years of experience developing software and is currently Director of Enterprise Architecture at Recreational Equipment, Inc. (REI). He has extensive experience in the design, development and implementation of distributed software systems and in solving enterprise application problems such as flexibility, performance and scalability. He has a patent-pending process and tool for performing system quality reviews, called the Microsoft System Review. His current interests lie in improving the value of IT through strategic planning and enterprise architecture methods.