Windows Azure Architecture Guide – Part 2 – Saving surveys in Tailspin

As I wrote in my previous post, different sites in TailSpin have different scalability needs. The public site, where customers complete surveys, will probably need to scale to a large number of users.

The first design consequence is that this website is separated into its own web role in Windows Azure. This gives TailSpin more flexibility in how it manages instances.

The “answer surveys” use case is also a great example of “delayed writes” in the backend. That is: when a customer submits answers for a specific survey, we want to capture them immediately (as fast as possible), send a “Thank you” response, and then take our time to process those answers and include them in TailSpin’s data repository. The work of inserting the responses into the data model does not have to be tied to the user response, and neither do the calculations involved in updating the summary statistics of that particular survey.

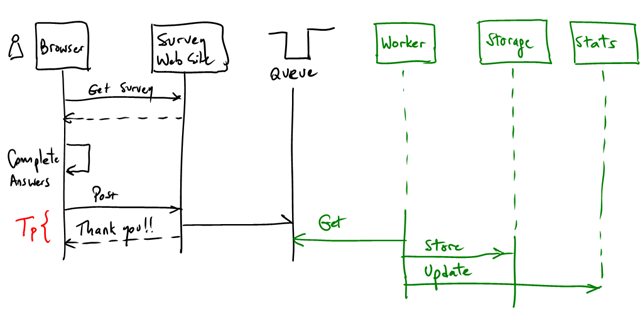

This pattern is exactly what we discussed here. Here’s an updated diagram for this particular use case:

- Customer gets a survey

- Completes answers

- Submits answers

- Web site queues the survey response

- Sends “Thank you” to the user (The goal is to make Tp as small as possible)

- A worker picks up the new survey and stores it in the backend, then updates the statistics
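The steps above can be sketched in a few lines. This is only a minimal in-memory illustration, not TailSpin's actual implementation: `response_queue`, `repository`, `stats`, `submit_answers` and `process_pending` are all hypothetical names, and Python's standard `queue.Queue` stands in for the Windows Azure queue.

```python
import queue

# Hypothetical in-memory stand-ins for the Azure queue and the data repository.
response_queue = queue.Queue()
repository = []
stats = {"responses": 0}


def submit_answers(survey_id, answers):
    """Web role: enqueue the response and reply right away (keeps Tp small)."""
    response_queue.put({"survey_id": survey_id, "answers": answers})
    return "Thank you"


def process_pending():
    """Worker role: drain the queue, store each response, update statistics."""
    processed = 0
    while True:
        try:
            message = response_queue.get_nowait()
        except queue.Empty:
            break
        repository.append(message)   # store in the backend
        stats["responses"] += 1      # update the summary statistics
        processed += 1
    return processed
```

The point of the split is that `submit_answers` does nothing except enqueue, so the customer's wait does not depend on how long storage or statistics take.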

Nothing completely new here. There are some interesting considerations though:

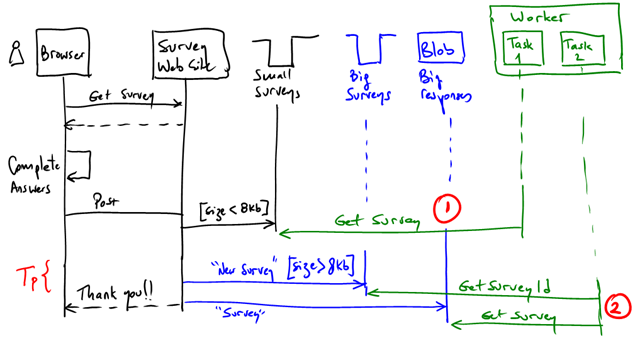

- The survey response might be larger than the maximum item size that a queue can hold (8 KB max, including base64 encoding, so about 6 KB effective). If that’s the case, you will have to use a blob and a queue. A possible optimization is to consider both cases:

If the response fits within the queue limit (8 KB encoded), we store it completely in the queue. If it is larger, we store it in a blob and write a message to a queue to notify the workers. Then there would be a worker with 2 tasks (#1 and #2 on the diagram above). #2 will have to get the response from the blob using a handle from the queue.
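One way to picture the two cases is the sketch below. It is an assumption-laden illustration: `blob_store` and `queue_messages` are plain Python containers standing in for blob and queue storage, the message layout (`kind`, `body`, `blob_name`) is invented for the example, and the threshold uses the roughly 6 KB effective payload mentioned above.

```python
import json
import uuid

# Assumed threshold: the approximate effective payload after base64 encoding.
MAX_INLINE_BYTES = 6 * 1024

blob_store = {}       # hypothetical stand-in for blob storage
queue_messages = []   # hypothetical stand-in for the queue


def enqueue_response(response):
    """Store small responses in the queue message, large ones in a blob."""
    payload = json.dumps(response)
    if len(payload.encode("utf-8")) <= MAX_INLINE_BYTES:
        message = {"kind": "inline", "body": payload}
    else:
        blob_name = str(uuid.uuid4())
        blob_store[blob_name] = payload
        # The queue message only carries a handle to the blob.
        message = {"kind": "blob", "blob_name": blob_name}
    queue_messages.append(message)
    return message


def load_response(message):
    """Worker side: read the response inline or fetch it through the handle."""
    if message["kind"] == "inline":
        return json.loads(message["body"])
    return json.loads(blob_store[message["blob_name"]])
```

The worker only needs to check `kind` to know whether it must go to blob storage, so both cases share the same queue.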

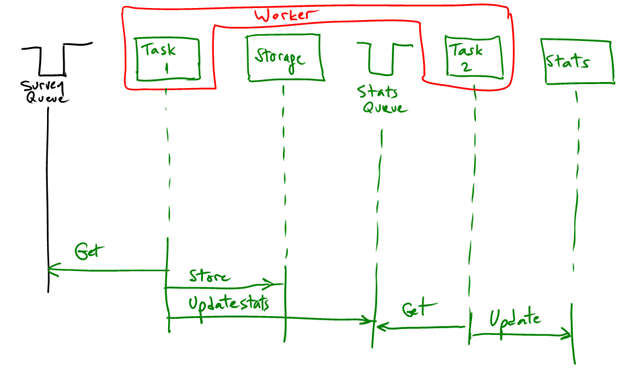

- The second consideration is the processing of the survey response. We have to do 2 things: store the responses in the data repository and update the basic statistics of that particular survey. Basic statistics change depending on the type of question. Currently we have 3 supported types: free text, ranges (from 1 to 5) and multiple choice.

Simple stats for these would be:

- Number of responses

- Histograms in multiple choice questions

- Calculating aggregations in the range answers (e.g. average)
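As a rough sketch of these simple stats, the function below computes them for one question. The name `summarize` and the type labels `"free_text"`, `"multiple_choice"` and `"range"` are made up for the example; the real data model may look quite different.

```python
from collections import Counter


def summarize(question_type, answers):
    """Return basic statistics for the answers to a single question."""
    summary = {"responses": len(answers)}
    if question_type == "multiple_choice":
        # Histogram: how many times each option was chosen.
        summary["histogram"] = dict(Counter(answers))
    elif question_type == "range":
        # Aggregation over the 1-to-5 answers; None when there is no data yet.
        summary["average"] = sum(answers) / len(answers) if answers else None
    return summary
```

Free text questions only get a response count here, since there is nothing obvious to aggregate.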

These computations can be done as part of the same worker task, or can be further delegated to another one, with a queue connecting both.

As usual, this processing has to be idempotent. We don’t want to skew the statistics, double count results, etc. There are many ways of addressing this. More on this in future posts.
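Just to make the concern concrete, here is a sketch of one of those many ways: remember which responses have already been counted and ignore repeats. It assumes each response carries a unique ID, which the post does not specify; `SurveyStats`, `apply` and `response_id` are hypothetical names, and a real worker would have to persist the set of seen IDs rather than keep it in memory.

```python
class SurveyStats:
    """Running statistics that ignore a response seen more than once."""

    def __init__(self):
        self.seen_ids = set()   # assumed: every response has a unique ID
        self.count = 0
        self.range_total = 0

    def apply(self, response_id, range_answer):
        """Count the response once; return False if it was a duplicate."""
        if response_id in self.seen_ids:
            return False
        self.seen_ids.add(response_id)
        self.count += 1
        self.range_total += range_answer
        return True

    def average(self):
        return self.range_total / self.count if self.count else None
```

With this in place, processing the same queue message twice leaves the count and the average unchanged.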