Windows Azure Guidance – Yet another way of writing records to store and dealing with failures

This series of posts dealt with various aspects of handling failures while saving information to Windows Azure Storage:

Windows Azure Guidance - Additional notes on failure recovery on Windows Azure

Windows Azure Guidance – Failure recovery and data consistency – Part II

Windows Azure Guidance – Failure recovery – Part III (Small tweak, great benefits)

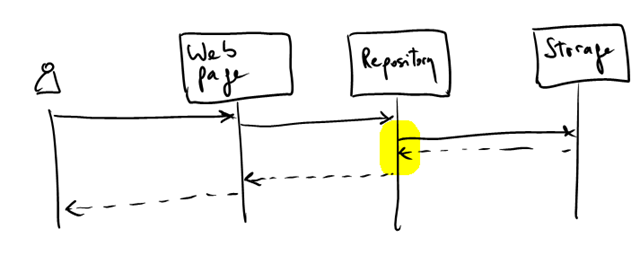

We discussed several improvements on the code to make it more resilient. In all the cases discussed, we assumed that persisting changes on the system was a synchronous operation. Even though the articles focused mainly on the lowest levels of the app (from the Repository classes and below), it is likely these are called from a web page or some other components where a user is waiting for a result:

The solutions proposed focused on the highlighted area above and are especially useful if the changes need to be reflected immediately to the user afterwards. But that’s not always the case.

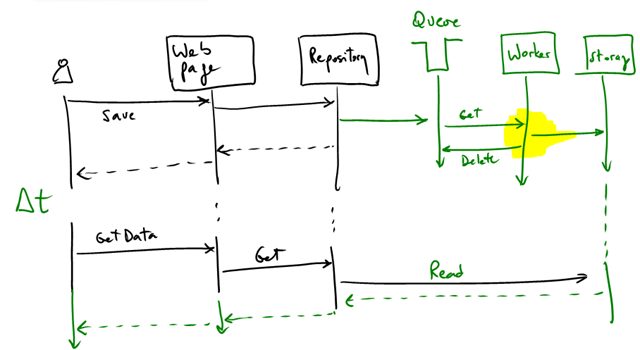

A further improvement to the system’s scalability and resilience can be achieved if instead of saving synchronously, we defer the write to the future. This should be straightforward for reader of this blog and familiar with Windows Azure:

Writing to a Windows Azure Queue is an atomic operation.
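To make the deferred write concrete, here is a minimal sketch in Python. It uses plain in-memory objects as stand-ins for the queue and the store, so it is not the Windows Azure API; `submit_record`, `run_worker_once`, `pending_writes` and `store` are all hypothetical names chosen for illustration.

```python
import json
from collections import deque

# Hypothetical in-memory stand-ins (assumptions, not Azure APIs):
# a queue of pending writes and the final store.
pending_writes = deque()
store = {}

def submit_record(record_id, record):
    """Request path: enqueue the change and return right away."""
    message = json.dumps({"id": record_id, "data": record})
    # A single append stands in for the atomic write to the queue.
    pending_writes.append(message)
    return "accepted"

def run_worker_once():
    """Worker path: persist every change that is currently queued."""
    processed = 0
    while pending_writes:
        message = json.loads(pending_writes.popleft())
        store[message["id"]] = message["data"]
        processed += 1
    return processed
```

The caller gets its answer as soon as the message is queued. The record only shows up in `store` once the worker has run, which is the “delta T” the user has to tolerate.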

Caveats:

- Queue messages can only hold 8 KB of data. If your entities/records/etc. fit in one message, great! If not, then you probably need to write the whole thing to a blob and then write a reference to it to the queue. Because there are no transactions between queues and blobs, all considerations in the referenced articles still apply.

- The system assumes users can afford to wait the “delta T” in the diagram above (a sort of “eventual consistency”). This is true for many use cases, but of course not for all of them.

- The worker logic to save records to the storage system needs to deal with failures anyway (for example, using the Get/Delete pattern).
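
The first and last caveats can be sketched together. This is again an in-memory illustration under stated assumptions, not the storage client API: `FakeQueue`, `enqueue_record` and `process_one` are hypothetical, the 30-second visibility timeout is an arbitrary value, and time is passed in explicitly as `now` so the behaviour is easy to follow.

```python
import json

MAX_MESSAGE_BYTES = 8 * 1024  # message size limit quoted in the caveats above

class FakeQueue:
    """Hypothetical in-memory queue with Get/Delete semantics."""

    def __init__(self):
        self._messages = {}  # message id -> [body, invisible_until]
        self._next_id = 0

    def put(self, body):
        self._next_id += 1
        self._messages[self._next_id] = [body, 0.0]
        return self._next_id

    def get(self, now, visibility_timeout=30.0):
        # Hand out the first visible message and hide it for a while.
        for msg_id, entry in self._messages.items():
            if entry[1] <= now:
                entry[1] = now + visibility_timeout
                return msg_id, entry[0]
        return None

    def delete(self, msg_id):
        self._messages.pop(msg_id, None)

    def __len__(self):
        return len(self._messages)

def enqueue_record(queue, blobs, record_id, record):
    """Queue the record, or a pointer to a blob if it is too large."""
    body = json.dumps({"id": record_id, "data": record})
    if len(body.encode("utf-8")) > MAX_MESSAGE_BYTES:
        # Blob first, then the pointer. There is no transaction across
        # the two, so a failure in between leaves an orphaned blob.
        blobs[record_id] = body
        body = json.dumps({"id": record_id, "blob": record_id})
    return queue.put(body)

def process_one(queue, blobs, store, now, save=None):
    """Get a message, save it, and delete it only after the save worked."""
    item = queue.get(now)
    if item is None:
        return False
    msg_id, body = item
    message = json.loads(body)
    if "blob" in message:
        message = json.loads(blobs[message["blob"]])
    # The save is keyed by id, so processing a message twice is harmless.
    (save or store.__setitem__)(message["id"], message["data"])
    queue.delete(msg_id)
    return True
```

If `save` raises, the message is never deleted and becomes visible again once the timeout expires, so another worker pass picks it up. That is why the save itself should be idempotent.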

In general, deferred writes are a great way to improve scalability of your system. Of course, this is not new. COM+ supported this architecture. Similar solutions are available on other platforms.

I wonder how many of my readers recognize EXEC CICS READQ TD QUEUE(MYQUEUE)… :-)