Loading ANSS Earthquake Data Into SQL Server 2008

The Advanced National Seismic System (ANSS) maintains a comprehensive catalog of worldwide seismic events (typically earthquakes) which is a good source of spatial data for SQL Server 2008. The ANSS Composite Catalog (formerly called the CNSS Earthquake Catalog) provides a search capability which allows the download of seismic events, from 1898 to present, using various search terms. In this post I will cover the following topics:

Download ANSS seismic data

Prepare the downloaded ANSS data

Create a database table for the ANSS data

Load the ANSS data

Add, update and index the spatial data column

Sample spatial queries on the ANSS data

DOWNLOAD ANSS SEISMIC DATA

It should be noted that the ANSS catalog contains a huge amount of data which should be carefully subsetted in order to avoid asking for too much information and unnecessarily tying up valuable computing resources attempting to service the request.

For this exercise, I have chosen to download a small subset of the available data. To start the download process, let’s go to the catalog search page.

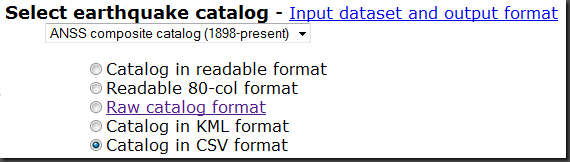

Under the “Select earthquake catalog” section make sure that the “ANSS composite catalog (1898-present)” is selected (this is the only choice, currently). Then select the radio button for “Catalog in CSV format”. This will format the output data as comma separated values.

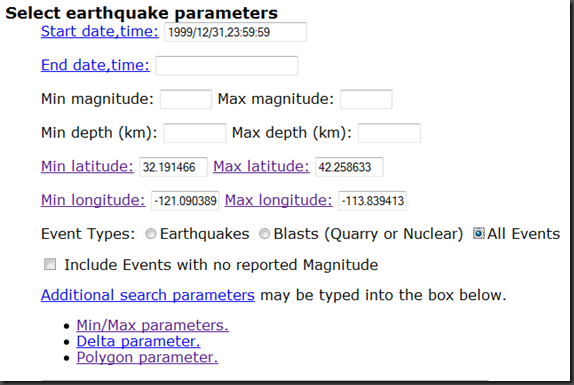

Next, go to the “Select earthquake parameters” section. In this example, I want all events from January 1, 2000 forward, so I set the Start date,time to 1 second before midnight on the day before (1999/12/31/,23:59:59). Since no “End date, time” was specified, the query will return the latest events. Likewise with the other fields which are unset (events with all magnitudes and all depths will be returned since they have not been set). The region specified by the Min/Max Latitudes/Longitudes will return the area covering all of the States of California and Nevada. Lastly, for Event Types:, I selected “All Events”.

Note that you can specify additional search parameters including a polygon within which to search. It is interesting to note that the ANSS documentation states that “[This] polygon must NOT cross the -180/180 degree longitude boundary, since the definition of the polygonal region becomes ambiguous” . Once this data is in the SQL Server Server GEOGRAPHY data type, such queries are easily supported.

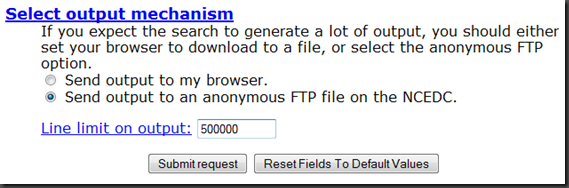

Lastly, in the “Select output mechanism”, choose the "Send output to an anonymous FTP file…” radio button. I’ve set a rather high Line limit on output (500,000 records) since I expect a large number of events to be returned. When ready, select the “Submit request” button.

When your search is completed, you will receive a response in your web browser similar to the following:

Your search parameters are:

----------------------------------------------------------------------------------------------------------------

Your search parameters are:

* catalog=ANSS

* start_time=1999/12/31,23:59:59

* end_time=2010/04/14,22:27:47

* minimum_latitude=31.910759

* maximum_latitude=42.192383

* minimum_longitude=-124.755360

* maximum_longitude=-113.17570

* event_type=AOutput has been placed in anonymous ftp.

host: www.ncedc.org

file: /outgoing/userdata/web/catsearch.15725

URL: ftp://www.ncedc.org/outgoing/userdata/web/catsearch.15725

Size: 454847 lines (34187332 bytes)

File will be automatically deleted in 2 days.

----------------------------------------------------------------------------------------------------------------

Note that the size entry returned a value (454,847 ) which is smaller than the Line limit on output - a value of 500,000. This indicates that we have retrieved all of the desired events.

To retrieve the output file (in this case called “catsearch.15725”) use your favorite ftp tool.

PREPARE THE DOWNLOADED ANSS DATA

With file containing the selected events downloaded, the first thing I like to do is to rename the file to indicate its type, in this case a “csv”. So, the file “catsearch.15725” was renamed to “catsearch.15725.csv”. I also like to save the search parameters associated with the file, so I created a file, “README_catsearch.15725.txt” and saved the search parameters from the web page. Here is a summary of the workflow, so far:

a. Rename the catsearch.15725 file to catsearch.15725.csv

b. [optional] Create a text file, README_catsearch.15725.txt, to store the search parameter metadata.

The ANSS downloaded data is now formatted as a comma-separated, UTF-8 encoded text file. Each record is terminated with newline character ('\n'). SQL Server 2008 does not support UTF-8, expecting such data to be in UTF-16 encoding with each record delimited with a carriage return, newline combination ('\r\n'). Additionally, it needs a Byte Order Marker (BOM) at the beginning of the file. For this exercise, I chose to use the Windows text editor UltraEdit Professional to perform the conversion. Here is the workflow I used with UltraEdit:

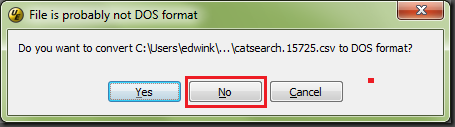

c. Open the catsearch.15725 .csv file in UltraEdit.

Click the “No” button when asked if you want to convert to DOS format:

d. Choose File –> Save As

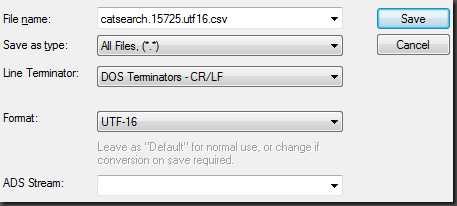

e. Set File name: to “catsearch.15725.utf16.csv”

f. Set Save as type: to All Files, (*.*)

g. Set Line Terminator: to DOS Terminators – CR/LF

h. Set Format: to UTF-16

Here are how steps e – h are shown in the Save As dialog box:

i. Choose Save

ULTRAEDIT NOTE ON LARGE FILE HANDLING: When files sizes get very large (several million records typically), it is useful to configure UltraEdit appropriately prior to editing. You may find more information on how to perform such a configuration here:

https://www.ultraedit.com/support/tutorials_power_tips/ultraedit/large_file_handling.html

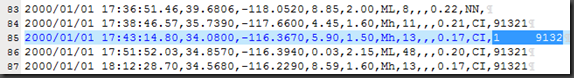

DATA NOTE: I found an occasional anomaly* in the ANSS data which will cause input rows to fail to be inserted into SQL Server. Additionally, the technique used to load this data, BULK INSERT, fails to correctly write these rows out to the specified ERRORFILE* (see Update, below). Because of this, I recommend that you locate and fix these anomalies prior to data loading.

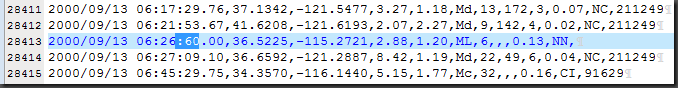

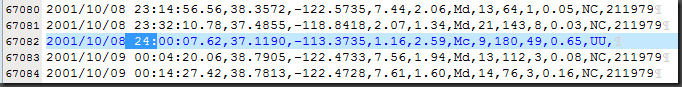

Specifically, there are instances in the DATETIME field of the input data where at least one of the components has a value of “60” in the time string. Here is an example:

SQL Server will not accept this value and rejects the row during insert. To fix this particular problem (shown in the example, above), in which the seconds component is set to 60, it is necessary to increment the minutes component by 1 and set the seconds component to 00. Since there were only 5 such occurrences out of 454,000+ records, I went ahead and made the changes in UltraEdit directly (using the search string “:60”). I will look into other techniques for handling these errors and possibly publish my findings in a future blog post.

Additionally, I found another issue with the data (1 single record) which had a value of 24 for the hours time component. This record was rejected by BULK INSERT with the following error message:

Msg 4864, Level 16, State 1, Line 1 Bulk load data conversion error (type mismatch or invalid character for the specified codepage) for row 67082, column 1 (DateTime).

You can search for the string “ 24:” to locate such data. Note that “fixing” this data entails reving the associated data to the next day.

----------------------------------------------------------------------------------------------------------------

* I talked with Bob Beauchemin about both the ANSS data time anomalies and the truncated BULK INSERT ERRORFILE entries. Bob was unable to reproduce either issue: the ANSS data he downloaded (which contained the data I downloaded) did not have any of the time faults that I found and there was not problem with the ERRORFILE records. Bob was using SQL Server 2008 R2 November CTP as I was also, so I’ll have to do a bit more research on this issue.

----------------------------------------------------------------------------------------------------------------

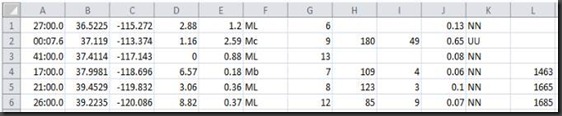

Update: I found the source of my confusion regarding BULK INSERT supposedly dropping the date component of the datetime string in the error file. When I opened the error file in Excel (it’s a .csv file, so it’s naturally associated with Excel), here is what I get:

Notice that the date component is dropped (and so is the hour component of the time string, btw). Also notice that Excel attempts to fix the time data but does fully complete the job.

When I open the same error file in a text editor, I get the expected error output:

2000/09/13 06:26:60.00,36.5225,-115.2721,2.88,1.20,ML,6,,,0.13,NN,

2001/10/08 24:00:07.62,37.1190,-113.3735,1.16,2.59,Mc,9,180,49,0.65,UU,

2002/07/10 01:40:60.00,37.4114,-117.1425,0.00,0.88,ML,13,,,0.08,NN,

2004/09/21 04:16:60.00,37.9981,-118.6963,6.57,0.18,Mb,7,109,4,0.06,NN,1463

2005/09/25 17:20:60.00,39.4529,-119.8317,3.06,0.36,ML,8,123,3,0.10,NN,1665

2005/11/07 06:25:60.00,39.2235,-120.0856,8.82,0.37,ML,12,85,9,0.07,NN,1685

So, confusion over – there is nothing wrong with BULK INSERT error files.

----------------------------------------------------------------------------------------------------------------

CREATE A DATABASE TABLE FOR THE ANSS DATA

Here is the T-SQL to create a table called Earthquakes...

CREATE TABLE Earthquakes(

DateTime DATETIME NOT NULL,

Latitude FLOAT NULL,

Longitude FLOAT NULL,

Depth FLOAT NULL,

Magnitude FLOAT NULL,

MagType VARCHAR(12) NULL,

NbStation INT NULL,

Gap FLOAT NULL,

Distance INT NULL,

RMS FLOAT NULL,

Source VARCHAR(12) NULL,

EventID VARCHAR(12) NULL

);

GO

For information on the column definitions, please see https://www.ncedc.org/ftp/pub/doc/cat1/catlist.1.

Note that the EventID column appears to be of type INT – that is until you come across a value such as the one below:

Consequently, I had no choice but to make this a character field in the CREATE TABLE DDL.

LOAD THE ANSS DATA

To load the data, you can use the following T-SQL for the BULK INSERT command:

BULK INSERT Earthquakes

FROM 'C:\Data\ANSS\catsearch.15725.utf16.csv'

WITH(

DATAFILETYPE = 'widechar',

FIRSTROW = 2,

FIELDTERMINATOR = ',',

ROWTERMINATOR = '\n',

MAXERRORS = 50,

ERRORFILE = 'C:\Data\ANSS\catsearch.15725.utf16.error.csv'

);

GO

--(454846 row(s) affected)

The DATAFILETYPE is set to ‘widechar’ to support the UTF16 data format. The first record in the file contains the names of the fields, thus we need to start the data load with the second record (FIRSTROW = 2). Since this is a comma-separated value file, the FIELDTERMINATOR must be set to ‘,’. The row terminator is ‘\r\n’ but BULK INSERT demands that ROWTERMINATOR be set to ‘\n’ (go figure). I’ve set MAXERRORS to 50 from the default of 10 and specified an ERRORFILE to hold errant rows.

ADD, UPDATE AND INDEX THE SPATIAL DATA COLUMN

In order to accommodate SQL Server 2008 spatial data, I added a column of type GEOGRAPHY (named Location) to the Earthquakes table as follows:

ALTER TABLE Earthquakes

ADD Location GEOGRAPHY NULL;

GO

With the Location column added to the table, I can now update the Location column using the Point constructor static method:

UPDATE Earthquakes

SET Location = GEOGRAPHY::Point(latitude,longitude,4326);

GO

-- (454846 row(s) affected) (00:00:16)

The value of 4326 in the Point constructor is the spatial reference identifier (SRID). This SRID indicates that the geographic coordinate system is WGS84. While not specified in the ANSS on-line documentation, this a reasonable assumption for global data such as this.

In order to create a spatial index, you must have a primary key on the table. The following T-SQL creates an identity column, ID, as a primary key:

ALTER TABLE Earthquakes

ADD ID BIGINT IDENTITY

CONSTRAINT ID_PK PRIMARY KEY(ID);

GO

With that done, a spatial index can now be created on the Location column:

CREATE SPATIAL INDEX location_hhhh1_sidx

ON Earthquakes(Location)

USING GEOGRAPHY_GRID

WITH (

GRIDS = (HIGH, HIGH, HIGH, HIGH),

CELLS_PER_OBJECT = 1,

PAD_INDEX = ON

);

GO

--Results: 00:00:35 seconds

Since this is point data, setting the GRIDS parameters all to HIGH will typically yield the best performance.

SAMPLE SPATIAL QUERYIES ON THE ANSS DATA

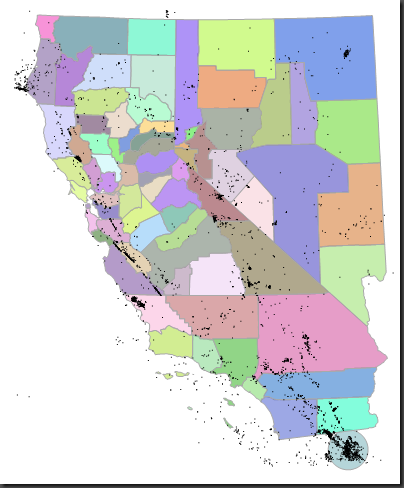

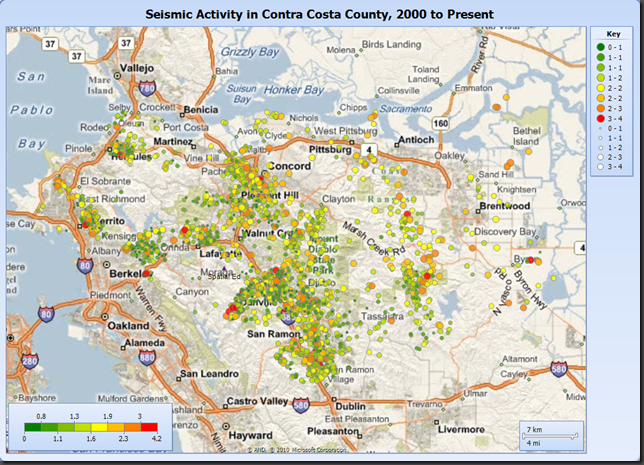

Query 1: Plot all of the earthquakes with a magnitude > 3. Highlight the large earthquakes by creating a buffer polygon around their epicenter. In this case, the earthquake data was drawn over the county polygons for States of California and Nevada.

SELECT Location FROM Earthquakes WHERE Magnitude > 3

UNION ALL

SELECT Location.STBuffer(50000) FROM Earthquakes WHERE Magnitude > 7

UNION ALL

SELECT Geog FROM Counties

WHERE NAME_1 = 'California' or NAME_1 = 'Nevada'

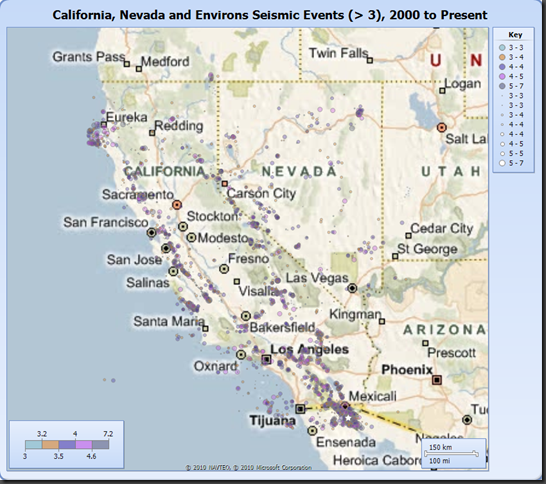

Here is the same data plotted on a Bing Maps background using the Report Builder in the SQL Server 2008 R2 November CTP:

Note that the 7.2 magnitude earthquake (the largest in this dataset), which occurred below Mexicali on April 4, 2010, is displayed with a white star symbol.

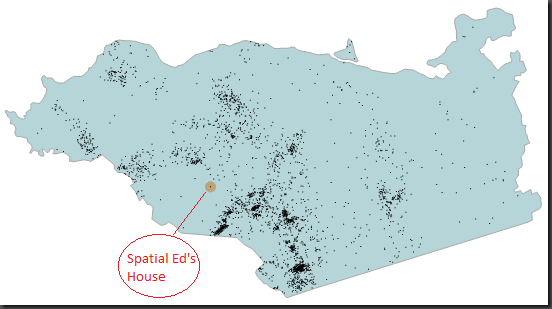

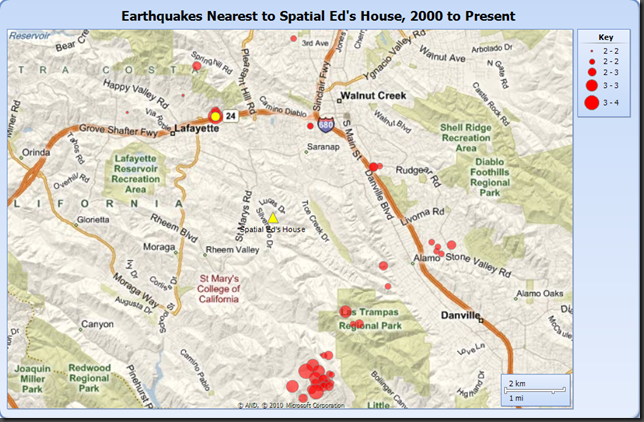

Query 2. For the next query exercise, I wanted to take a look at the earthquake distribution relative to my house in Contra Costa County, California.

DECLARE @p GEOGRAPHY = GEOGRAPHY::Point(38.12345,-121.12345,4326);

DECLARE @g GEOGRAPHY =

(SELECT Geog FROM Counties

WHERE NAME_1 = 'California' and NAME_2 = 'Contra Costa');

SELECT Location, DateTime, Magnitude, Depth

FROM Earthquakes

WHERE Location.STIntersects(@g) = 1

UNION ALL

SELECT @g, NULL, NULL, NULL -– Contra Costa Country polygon

UNION ALL

SELECT @p.STBuffer(750),NULL, NULL, NULL -– make my house location visible

UNION ALL

SELECT @p,NULL, NULL, NULL; -– plot the location of my house as a point

-- (2925 row(s) affected) (00:00:04)

Note that my house is nicely remote from most earthquake activity. Was this by clever analysis or dumb luck?

Here is the same query in visualized in SSRS map. If you look closely, you can see my house location as a small purple triangle with the label “Spatial Ed” underneath the symbol.

Query 3. Let’s perform a query that’s a little more intensive spatially. Let’s find the 50 earthquakes with a magnitude greater than 2.0 nearest to my house. Then, let’s find the nearest earthquake with a magnitude greater than 3.0.

The technique to do a nearest neighbor query in SQL Server 2008 relies upon a numbers table, so let’s create that table:

---------------------------------------------------------

--Create Numbers Table

---------------------------------------------------------

SELECT TOP 100000 IDENTITY(int,1,1) AS n INTO numbers

FROM master..spt_values a, master..spt_values b

CREATE UNIQUE CLUSTERED INDEX idx_1 ON numbers(n);

GO

Now I can query for the top 50 earthquakes nearest to my home’s location with a magnitude greater than or equal to 2.0:

-- Ed’s house (not the real location ;-)

DECLARE @input GEOGRAPHY = GEOGRAPHY::Point(38.12345,-121.12345,4326);

DECLARE @start FLOAT = 10000; -- meters

WITH NearestNeighbor AS

(

SELECT TOP 50 WITH TIES *, b.Location.STDistance(@input) AS dist

FROM NUMBERS n

JOIN Earthquakes b WITH(INDEX(location_hhhh1_sidx))

ON b.Location.STDistance(@input) < @start*POWER(CAST(2 AS FLOAT),n.n)

WHERE n <= 20 AND Magnitude >= 2.0

ORDER BY n

)

SELECT TOP 50 location, DateTime, magnitude, dist

FROM NearestNeighbor

ORDER BY n, dist

Here is the query to find the nearest earthquake to my location with a magnitude greater or equal to 3.0:

DECLARE @input GEOGRAPHY = GEOGRAPHY::Point(38.12345,-121.12345,4326);

DECLARE @start FLOAT = 10000; -- meters

WITH NearestNeighbor AS

(

SELECT TOP 1 WITH TIES *, b.Location.STDistance(@input) AS dist

FROM NUMBERS n

JOIN Earthquakes b WITH(INDEX(location_hhhh1_sidx))

ON b.Location.STDistance(@input) < @start*POWER(CAST(2 AS FLOAT),n.n)

WHERE n <= 20 AND Magnitude >= 3.0

ORDER BY n

)

SELECT 1 location, DateTime, magnitude, dist

FROM NearestNeighbor

ORDER BY n, dist

This returns the following:

Location: POINT(-122.1113 37.8965) DateTime: 2007-03-02 04:40:00.750 Magnitude: 4.2 Distance: 4084.75488358986

The map, below, is symbolized as follows:

- Yellow triangle (in center of map): Spatial Ed’s house

- Yellow circle (near top of map): earthquake >= 3.0 nearest house location

- Red circles: 50 nearest earthquakes >= 2.0 nearest house location

Technorati Tags: SQL Server 2008,R2,Spatial Data,ANSS,CNSS,seismic,earthquake,bulk insert,T-SQL,Reporting Services,Report Builder,nearest neighbor,UltraEdit,numbers table