What’s In Your Database?

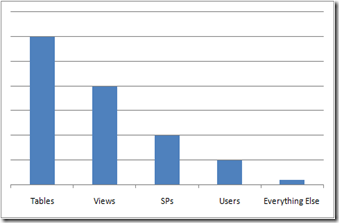

How many different database objects are there? I don’t know the answer either, but off the top of my head I’d guess somewhere between 50 and 100, depending on how you count them. Yes, I could go count them, but I’m writing this on a Saturday and between you and me I’m just too lazy to go do it. If you profiled (not as in SQL Server Profiler) your databases, what do you think your Pareto chart of objects would look like? I bet it would look something like the following:

The scale isn’t important. What is interesting is which objects are most numerous and which are least numerous. If you aggregated this data for all of your databases the picture probably wouldn’t change all that much. However, we tend to become obsessed with the right-hand side of the chart. The objects that make up the right-hand side (e.g. triggers, types, etc.) are important, but sometimes we spend far too much time talking about them at the cost of creating solutions that are simple and easy to use. BTW: I purposefully left off indexes, since those will likely outnumber tables. My point here is that we should create tools that make it extremely easy to work with the objects that are in abundance. This includes everything from defining them to deploying them to managing them after they’ve been deployed.

Everyone likely has a few databases that don’t match this relative distribution, but I believe you have far more (at least an order of magnitude, if not two) that do. Those few exceptions are important as they likely house your most valuable applications: your mission-critical and business-critical apps. And we need tools that support those apps and objects as well. But in the process of building tools we shouldn’t make the stuff that should be easy hard. The hard stuff should be possible and the easy stuff should, well, be easy.

In general this approach was taken with IntelliSense in SQL2K8. Yes, there are some things that IntelliSense doesn’t cover. But so what. I know, you’re thinking that’s the stuff we should have covered, since you don’t do it all that often and that’s when you need help. If we took that approach (the reverse approach) then you wouldn’t have had IntelliSense for something like a table. How lame is that? You’d criticize us for not covering the basic objects. Ok, well, you can probably say we should cover it all. And you’re right. We should. But that’s not always practical. Remember, we’re constrained by time and resources just like you are. So we have to make trade-offs. This takes us back to my main point: when we have to make choices we should err on the side of covering the common stuff first. We’re getting better at this, but I think we have some major work to do on the common stuff (think table designer in SSMS).

Oh, while you’re profiling your databases, don’t forget to count filegroups and files. We recently profiled over 1,000 customer databases that had been consolidated or were on the list to be consolidated. Think of these as departmental apps (they’re not Mission or Business Critical). Only 2-3 databases had multiple filegroups and/or multiple files. This shocked me. But given that the average size of these databases was less than 2 GB, I shouldn’t have been surprised, as there was no driver for multiple files.

If you decide to profile some of your databases let me know what you find.
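If you want a starting point, here is a minimal sketch of the counting step in Python. It assumes you have already pulled one object type name per object out of your database; on SQL Server, something like `SELECT type_desc FROM sys.objects WHERE is_ms_shipped = 0` should give you that list, though how you run it and fetch the rows is up to you. The function name `pareto_profile` and the sample numbers are made up for illustration and are not measured data.

```python
from collections import Counter


def pareto_profile(object_types):
    """Return (type, count, cumulative_percent) rows, most common type first.

    object_types: an iterable with one type name per database object,
    e.g. the type_desc values read from sys.objects (an assumption about
    where the data comes from; any list of labels works).
    """
    counts = Counter(object_types)
    total = sum(counts.values())
    rows = []
    running = 0
    # Sort by count descending, then by name so ties come out in a stable order.
    for type_name, count in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
        running += count
        rows.append((type_name, count, round(100.0 * running / total, 1)))
    return rows


if __name__ == "__main__":
    # Hypothetical sample, invented purely to show the output shape.
    sample = (
        ["USER_TABLE"] * 50
        + ["SQL_STORED_PROCEDURE"] * 30
        + ["VIEW"] * 15
        + ["SQL_TRIGGER"] * 5
    )
    for type_name, count, cumulative in pareto_profile(sample):
        print(f"{type_name:<24}{count:>6}{cumulative:>8}%")
```

The cumulative percentage column is what makes it a Pareto view: you can see at a glance how few object types account for most of what is in the database. Run it per database, or concatenate the lists from several databases to get the aggregate picture.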

Addendum:

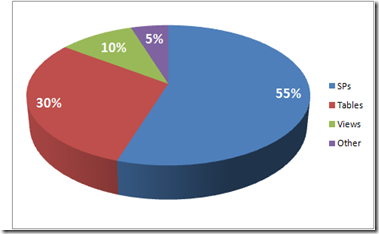

We now have customer data on over 1,000 databases. These are DBs that have been consolidated or are candidates for consolidation. Here consolidation means increasing the density of DBs per instance; not increasing the number of apps per DB. Excluding indexes and constraints the average distribution of item types looks like this:

This data aligns more closely with what Adam (see comments below) has seen in the wild. Adam, thanks for replying and “forcing” me to provide a more real-world example of the distribution. :-)