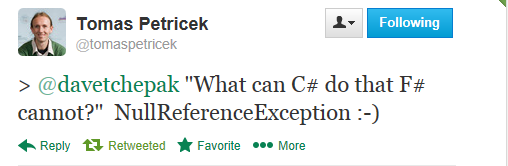

Quote of the Week: "What can C# do that F# cannot?"

The F# community quote of the week comes from Tomas Petricek, in answer to a question on Twitter (see the pic on the right).

What Tomas says is not 100% technically accurate: you can get a NullReferenceException (NRE) in F# if you use C# libraries, C#-defined types plus the "null" literal, or some backdoors like Unchecked.defaultof<_>.

However, what Tomas says does match people’s actual experience with the two languages, and it is certainly true that NREs are absent from routine F#-only coding.
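To make the caveats concrete, here is a minimal sketch of where nulls can still sneak into F#. The `Person` type and the `tryName` and `tryLength` helpers are hypothetical names made up for illustration, and the sketch assumes default compiler settings (no nullable reference type checking switched on).

```fsharp
open System

// Hypothetical record type, used only for illustration.
type Person = { Name : string }

// The null literal is rejected for F#-defined types at compile time:
// let p : Person = null   // error: 'Person' does not have 'null' as a proper value

// Backdoor: Unchecked.defaultof yields null for any reference type,
// including F# records.
let ghost : Person = Unchecked.defaultof<Person>

// .NET-defined types such as string do accept the null literal.
let s : string = null

// Reading a field of a null record raises NullReferenceException.
let tryName (p : Person) =
    try
        Some (p.Name)
    with :? NullReferenceException ->
        None

// Calling a member on a null .NET object raises NullReferenceException too.
let tryLength (str : string) =
    try
        Some (str.Length)
    with :? NullReferenceException ->
        None

printfn "%A" (tryName ghost)
printfn "%A" (tryLength s)
```

Both calls are expected to take the exception branch. The point is that each of these nulls had to be brought in deliberately, either through a .NET type or through an explicitly "unchecked" function, rather than turning up by default in ordinary F# values.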

For example, this comparison records that one C# project had 3036 explicit null checks, whereas a functionally similar F# project had 27, a reduction of 112x in the total number of null checks. The other statistics in the comparison shown are also compelling, particularly the “defects since go live”. The null-check count and the defect count are not unrelated.

After a recent F# meetup I grilled one of the guys who wrote this comparison to learn more about it. I can confirm that they are not making these stats up: both projects are ETL (Extract, Transform, Load) systems which are, broadly speaking, in the same zone in terms of functionality, and if anything the F# project implements more features. The F# project really did have a very, very low bug rate. The size difference is not due only to language differences; there are also differences in design methodology, with the C# project characterized by the overuse of OO design patterns that is often seen in C# and Java projects. Common symptoms include the presence of elaborate, unnecessary and buggy "internal design pattern frameworks" inside the OO code. If you want to learn more about functional-first design, the guys involved are members of NOOO (Not Only OO).

Sometimes it bewilders me that a massive reduction in explicit null reference checks in comparable programs doesn't shake the computing industry a little more. When other industries see such productivity gains, change happens rapidly. But regardless of the social dynamics, I strongly believe that for the sake of our children we need to abandon the hell that is trying to write robust software in a language where nulls pervade the code like a disease. Nulls were famously called the billion dollar mistake - but they probably cost the industry $1B every year by now, and rising. They are also a drain on human energy and talent. Luckily for those who get to use it, F# offers one way through this.

Unfortunately a sort of stasis, or lack of will, seems to be gripping the industry at the moment. People stick with their existing languages, though they occasionally make noises about needing non-null types (thus making C# and Java even more complex, when we should be ridding ourselves of this curse altogether). Even worse, language designers duly trot out new typed language designs (e.g. Dart, TypeScript, and even Scala) which allow nulls everywhere, for all reference types. That said, I know there are reasons these languages have nulls, given their design constraints, and some languages offer constructs which help make nulls abnormal, and that is some progress. But that still does not satisfy me - we didn't take the "easy" path of pervasive nulls for F#, despite living in the world of .NET. And F# users reap pervasive benefits from the simplicity this brings.

In practice F# (and OCaml, and a few others) shows that highly practical programming and software design is possible in a language where nulls do not pervade regular code. I'd encourage people to think hard about the huge time savings that came with that 112x reduction. And for those interested in language design, look carefully at the F# features which collectively eliminate nulls in routine programming (many come from ML, but not all). Around the edges we make some compromises. But for the industry the hell of nulls should be diminishing, not increasing. People should be paying attention, and language designers should be held to account. Is the future full of nulls, or not? Can I trust a value to be what it says it is, or might it not be there at all?
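As one small illustration of those features, here is a sketch using the option type, one of the constructs inherited from ML. The `Customer` type and the `describe` function are hypothetical, not taken from the projects discussed above.

```fsharp
// Hypothetical type: absence is declared in the type via 'option',
// rather than being silently possible for every field.
type Customer =
    { Name : string
      Email : string option }

// Pattern matching forces both cases to be considered; the compiler
// warns if the None case is left out.
let describe (c : Customer) =
    match c.Email with
    | Some email -> sprintf "%s <%s>" c.Name email
    | None -> sprintf "%s (no email on file)" c.Name

let alice = { Name = "Alice"; Email = Some "alice@example.com" }
let bob = { Name = "Bob"; Email = None }

printfn "%s" (describe alice)
printfn "%s" (describe bob)
```

A `Customer` value itself cannot be the null literal in F# code, and a missing email is visible in the type, so the "is it really there?" question only has to be asked where the type says so. The usual caveat applies: `Name` is a .NET `string`, which is one of the edges where a null could still arrive from outside.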

Don