Windows Azure Back End

After recently watching Manuvir Das's video on Channel 9, "Introducing Windows Azure", I decided to put together a quick blog post to share my thoughts on the back-end workings of Azure.

Overview

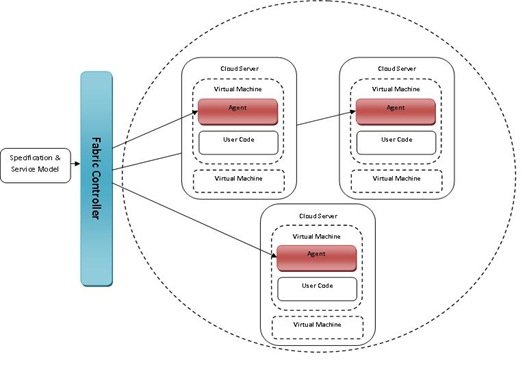

The image above gives a simplified overview of the interactions that happen within the Windows Azure platform.

Specification & Service Model

The specification and service model consists of user-generated application code, definitions and configuration.

These can be created with the Azure SDK, which the user uses to build the application that will run in the cloud. Cloud applications are split into two areas. Worker Roles are code that runs in the background: they are not visually displayed to the end user but can be used to perform processing for the service. Web Roles can be visually displayed using ASP.NET code and can therefore accept HTTP and HTTPS input.

The service itself is defined and configured using Service Definition and Service Configuration files, written in XML against specific service schemas. Within these XML files you tell the service which types of Role (web/worker) may be used, along with how many instances of each you want to create within the cloud.
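To make the idea of "roles plus instance counts" a little more concrete, here is a minimal Python sketch that reads them out of a configuration document. The XML below is a simplified, made-up layout for illustration only and should not be taken as the real Azure schema; the role names and the `read_instance_counts` helper are my own assumptions.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical configuration for illustration.
# This is NOT the real Azure service configuration schema.
CONFIG_XML = """
<ServiceConfiguration serviceName="DemoService">
  <Role name="WebFrontEnd" type="web">
    <Instances count="3" />
  </Role>
  <Role name="BackgroundProcessor" type="worker">
    <Instances count="2" />
  </Role>
</ServiceConfiguration>
"""


def read_instance_counts(xml_text):
    """Return {role name: (role type, instance count)} from the XML above."""
    root = ET.fromstring(xml_text)
    roles = {}
    for role in root.findall("Role"):
        count = int(role.find("Instances").get("count"))
        roles[role.get("name")] = (role.get("type"), count)
    return roles


if __name__ == "__main__":
    for name, (kind, count) in read_instance_counts(CONFIG_XML).items():
        print(f"{name}: {kind} role, {count} instance(s)")
```

The point is only that the number of running instances is data in a file rather than something baked into the application code.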

Fabric Controller

The fabric controller seems to act in a number of roles. Firstly, it seems to be used to deploy each of the roles (and their many instances) to various locations within the cloud. This could be done by checking where the free resources are and assigning sections of code to them.
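I don't know how the fabric controller actually chooses where to put things, so the following is purely my guess at the simplest possible approach: a greedy pass that puts each instance on whichever server currently has the most free VM slots. The function name, the server names and the idea of counting "slots" are all assumptions of mine.

```python
def place_instances(role_counts, server_capacity):
    """Assign each role instance to the server with the most free VM slots.

    role_counts: {role name: number of instances wanted}
    server_capacity: {server name: free VM slots}
    Returns a list of (instance id, server name) pairs.
    """
    free = dict(server_capacity)  # copy so the caller's dict is untouched
    placement = []
    for role, count in role_counts.items():
        for i in range(count):
            # Pick the server with the most remaining capacity.
            server = max(free, key=free.get)
            if free[server] == 0:
                raise RuntimeError("no free capacity left")
            free[server] -= 1
            placement.append((f"{role}_{i}", server))
    return placement


if __name__ == "__main__":
    plan = place_instances({"web": 2, "worker": 1}, {"server1": 2, "server2": 1})
    for instance, server in plan:
        print(instance, "->", server)
```

A real scheduler would presumably also care about things like spreading instances of the same role across different machines, which this sketch ignores.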

Once the service is up and running in the cloud, the fabric controller acts much like the Observer in the Observer design pattern. It constantly polls each of the agents running the deployed service to make sure they are still healthy.

When the fabric controller finds an unhealthy process it can do a number of things, such as trying to restart the operating system that the agent (the observable) runs on or, in the worst case, facilitating a handover to a new machine and securely deleting the old processing environment.
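As a rough sketch of that "poll, restart, and if all else fails move" behaviour, here is how I picture one polling pass in Python. The `Agent` class, the `restart_fixes` flag and the `new_agent` callback are hypothetical stand-ins of mine, not anything taken from Azure itself.

```python
class Agent:
    """Stand-in for the agent running inside one VM (hypothetical)."""

    def __init__(self, name, healthy=True, restart_fixes=True):
        self.name = name
        self.healthy = healthy
        self.restart_fixes = restart_fixes

    def is_healthy(self):
        return self.healthy

    def restart(self):
        # A restart only helps if the fault was transient.
        self.healthy = self.restart_fixes


def check_agents(agents, new_agent):
    """One polling pass: restart unhealthy agents, replace those that stay down.

    new_agent is a callable(name) -> Agent that stands in for a handover
    to a fresh machine. Returns the (agent name, action) pairs taken.
    """
    actions = []
    for i, agent in enumerate(agents):
        if agent.is_healthy():
            continue
        agent.restart()
        if agent.is_healthy():
            actions.append((agent.name, "restarted"))
        else:
            # Worst case: hand the work over to a new machine.
            agents[i] = new_agent(agent.name)
            actions.append((agent.name, "moved"))
    return actions
```

In practice the controller would run a pass like this over and over on a timer; I have left the loop and the secure clean-up of the old environment out to keep the sketch short.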

Cloud Servers

The cloud servers (think massive data centres full of server racks) that are currently being used have an individual virtual machine set up for each of the code instances that they host. This makes the deployment environment much more secure, both from a personal data security point of view and from a development sandbox perspective. Each of these servers can hold multiple VMs, each running an individual role.

The number of VMs in use currently depends on what has been specified in the configuration files of the service.

Agents

Each of these virtual machines then contains an Agent (observable) which, as stated before, is constantly pinged by the controller. The Agent in turn constantly observes the user code that has been deployed to that specific VM. This user code could be either a web or a worker role.
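Here is a small Python sketch of that second level of monitoring, where the agent watches the user code and the controller only ever talks to the agent. The `RoleAgent` class and its `run_once` and `ping` methods are names I made up for illustration; the real agent will certainly look different.

```python
class RoleAgent:
    """Hypothetical agent that runs one role and reports on its health."""

    def __init__(self, role_name, role_step):
        self.role_name = role_name
        self.role_step = role_step  # callable doing one unit of the role's work
        self.last_error = None

    def run_once(self):
        """Run one unit of user code and record whether it failed."""
        try:
            self.role_step()
            self.last_error = None
        except Exception as exc:
            self.last_error = exc

    def ping(self):
        """What the controller sees when it polls this agent."""
        return "healthy" if self.last_error is None else "unhealthy"


if __name__ == "__main__":
    def failing_step():
        raise ValueError("user code crashed")

    agent = RoleAgent("worker", failing_step)
    agent.run_once()
    print(agent.role_name, "is", agent.ping())
```

The controller never needs to know what the user code does; it only needs the answer to the ping.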

Summary

In this brief blog post I have shared my personal understanding of how the Azure back end currently works. It uses a combination of distributed servers that contain multiple VMs, each running a given section of user code (a role). This user code is monitored by agents, which in turn are monitored by the fabric controller, which initially received the published service from a developer.
