Automatic Decompression in WCF

WCF services hosted in IIS can take advantage of compression without any special encoder changes. In Windows Server 2008 R2, IIS compression is turned on by default, and as of .NET 4.0 WCF supports decompression by default. So if you have a web-hosted WCF service on a Windows Server 2008 R2 (W2K8R2) machine using an HTTP transport, you don't have to do anything to get compression. If that is your goal and you meet those criteria, you can kick back now and skip the rest of this post.

For the rest of us though, there are many interesting details to consider. I figured a question and answer format would work best to explain the nuances.

Q: I'm using Silverlight. How can I take advantage of compression?

A: The good news is that you don't have to do much. Silverlight itself is not responsible for decompressing the messages from a service. The browser will do that for you. Since all requests are essentially going through the browser, the browser can stick in the Accept-Encoding header in the HTTP message. That signals the web server that the client can handle compressed messages and the web server can then decide to turn compression on or off.
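To make that negotiation concrete, here is a minimal Python sketch of the decision a web server could make from the Accept-Encoding header. It is not how IIS is implemented; the function names `parse_accept_encoding` and `choose_encoding` and the `supported` tuple are made up for illustration, and the q-value handling is deliberately simplified.

```python
def parse_accept_encoding(header):
    """Return {coding: q} parsed from an Accept-Encoding header value."""
    codings = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, params = part.partition(";")
        q = 1.0  # a coding without an explicit q-value counts as fully acceptable
        params = params.strip()
        if params.startswith("q="):
            try:
                q = float(params[2:])
            except ValueError:
                q = 0.0  # treat a malformed q-value as "not acceptable"
        codings[name.strip().lower()] = q
    return codings


def choose_encoding(accept_encoding, supported=("gzip", "deflate")):
    """Pick the coding to compress with, or None to send the body uncompressed."""
    if not accept_encoding:
        return None  # client did not advertise any coding
    codings = parse_accept_encoding(accept_encoding)
    best, best_q = None, 0.0
    for name in supported:
        q = codings.get(name, codings.get("*", 0.0))
        if q > best_q:  # strict comparison keeps the server's preference order on ties
            best, best_q = name, q
    return best
```

A browser that sends `Accept-Encoding: gzip, deflate` would get `gzip` from this sketch, while a client that sends no such header gets an uncompressed response.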

Q: If compression is on by default in W2K8R2, how does that affect performance?

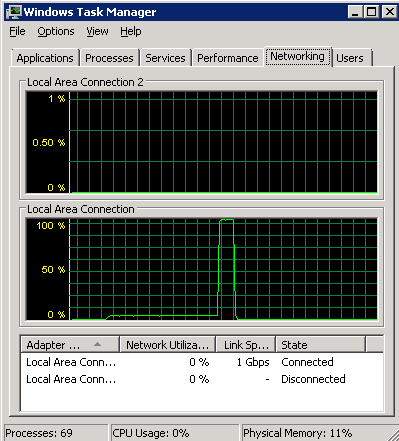

A: With IIS, compression is not simply always on or always off. IIS decides whether to compress based on a number of parameters, one of which is CPU usage: compression is turned off when CPU usage on the web server rises above an upper threshold and is turned back on once usage falls below a lower threshold. To keep response times from being too erratic, IIS only checks every 15 seconds or so (I'm not sure of the exact number). So if you're running a performance test, you might get some weird results. The graph above, for instance, shows a 20-second test. Compression was on at first and the CPU was the bottleneck, so network usage was low. Then IIS turned compression off because CPU usage was above the threshold, and that sent network usage to 100%.

So if you're running performance tests on your application, you have to be aware of the compression settings. Instructions on how to configure those settings are available here:

https://www.iis.net/ConfigReference/system.webServer/httpCompression
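As a rough illustration of how the CPU thresholds in that configuration interact, here is a small Python sketch. It assumes the behavior I understand the reference to describe for `dynamicCompressionDisableCpuUsage` and `dynamicCompressionEnableCpuUsage`: compression switches off at the upper value and comes back only once CPU usage falls below the lower value. The 90 and 50 values, the `CompressionGate` class, and the one-sample-per-check model are assumptions for illustration, not IIS code.

```python
class CompressionGate:
    """Toy model of a compression on/off switch driven by CPU samples."""

    def __init__(self, disable_above=90, enable_below=50):
        self.disable_above = disable_above  # assumed upper CPU threshold (percent)
        self.enable_below = enable_below    # assumed lower CPU threshold (percent)
        self.enabled = True

    def sample(self, cpu_percent):
        """Feed one periodic CPU reading and return whether compression is on."""
        if self.enabled and cpu_percent >= self.disable_above:
            self.enabled = False
        elif not self.enabled and cpu_percent < self.enable_below:
            self.enabled = True
        return self.enabled


if __name__ == "__main__":
    gate = CompressionGate()
    for cpu in (40, 95, 70, 60, 45, 80):
        print(cpu, gate.sample(cpu))
```

The point of the gap between the two thresholds is that compression does not flap on and off while CPU usage hovers around a single value, which is also why a short performance test can land entirely on one side of a switch or straddle it.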

Q: Does automatic decompression work for anything besides HTTP transports?

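A: No. What automatic decompression essentially does is add the Accept-Encoding header to the headers of the HTTP request. It then recognizes a gzip or deflate Content-Encoding on the response and decompresses the body accordingly. Both steps rely on HTTP headers, so there is nothing equivalent for the other transports.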
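Those two steps can be sketched in a few lines of Python. This is only an illustration of the HTTP mechanics, not the System.Net implementation; the helper names `build_request_headers` and `decode_response_body` are hypothetical, and headers are modeled as a plain dictionary.

```python
import gzip
import zlib

ACCEPT_ENCODING = "gzip, deflate"


def build_request_headers(extra=None):
    """Step 1: advertise the codings the client is able to decode."""
    headers = {"Accept-Encoding": ACCEPT_ENCODING}
    headers.update(extra or {})
    return headers


def decode_response_body(headers, body):
    """Step 2: undo the coding named by the response's Content-Encoding header."""
    encoding = headers.get("Content-Encoding", "").strip().lower()
    if encoding == "gzip":
        return gzip.decompress(body)
    if encoding == "deflate":
        try:
            # HTTP "deflate" is normally zlib-wrapped deflate data
            return zlib.decompress(body)
        except zlib.error:
            # some servers send a raw deflate stream without the zlib wrapper
            return zlib.decompress(body, -zlib.MAX_WBITS)
    return body  # no (or unknown) coding: hand the bytes back untouched
```

Because the decoding is driven entirely by the response header, a server that chooses not to compress simply omits Content-Encoding and the same client code keeps working.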

Q: Who is actually doing the decompression?

A: In the case of Silverlight, the browser is performing the decompression. In the case of a WCF client, the decompression happens in the System.Net layer, before the message even reaches WCF.

Q: Can I compress HTTP requests?

A: No. While it's normal to put Accept-Encoding in the headers of a request, a request is not expected to have Content-Encoding set to gzip or deflate. I believe this has more to do with how HTTP was intended to be used. As a result, when System.Net automatic decompression was implemented, it only provided for decompression of response messages and not request messages. WCF does not do any decompression on its own, so it would not be able to handle a compressed request. Although I haven't verified it, I don't believe IIS would handle it either.

Q: Do I need to be using .NET 4.0 to get compression?

A: It depends on your situation. Your web-hosted service does not need to be using .NET 4.0, since IIS is the one doing the compression. For Silverlight, whether you can handle compressed messages is really up to the browser. WCF clients are the case where you do need .NET 4.0, because prior to 4.0 WCF did not add the Accept-Encoding header.

Q: Can I turn off decompression support from the WCF client?

A: Yes. You can explicitly set the DecompressionEnabled property to false on the HTTP transport binding element (HttpTransportBindingElement). It is set to true by default.

Q: How does the performance of the GZipMessageEncoder compare to IIS compression with WCF's automatic decompression?

A: Note that this is really a comparison of the compression library in the .NET Framework to the one in IIS. The framework uses a compression library that was tuned to be faster at the cost of a lower compression ratio. There are a number of open source compression libraries for .NET that have similar speed but higher compression ratios. There is no simple answer to this question, because it depends on the network limitations and on the size and content of the messages being transferred. My rule of thumb is that if your bottleneck is network bandwidth, IIS will do better because it compresses better in general. However, quite a bit of work has gone into the framework's compression library, and it will likely be visible in the next .NET version (post-4.0).
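If you want a feel for the speed versus ratio trade-off on your own messages, a quick experiment along these lines can help. This Python sketch uses zlib compression levels as a stand-in for "faster but larger" versus "slower but smaller"; it does not measure the .NET or IIS libraries, and the `measure` and `sample_payload` helpers and the XML-like payload are made up for illustration.

```python
import time
import zlib


def sample_payload(n=2000):
    """Build a repetitive XML-like message, loosely resembling a serialized list."""
    rows = (
        "<Order><Id>%d</Id><Customer>customer-%d</Customer></Order>" % (i, i % 50)
        for i in range(n)
    )
    return ("<Orders>" + "".join(rows) + "</Orders>").encode("utf-8")


def measure(payload, level):
    """Compress once at the given zlib level and report size ratio and elapsed time."""
    start = time.perf_counter()
    compressed = zlib.compress(payload, level)
    elapsed = time.perf_counter() - start
    return {
        "level": level,
        "ratio": len(compressed) / len(payload),  # smaller means better compression
        "seconds": elapsed,
        "compressed": compressed,
    }


if __name__ == "__main__":
    payload = sample_payload()
    for level in (1, 6, 9):
        result = measure(payload, level)
        print("level %d: ratio %.3f, %.4f s" % (level, result["ratio"], result["seconds"]))
```

Run it with payloads that look like your real messages: whether the extra CPU time of a higher ratio pays off depends on how much network bandwidth it saves you.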