Azure Key Vault and AzureML

By Philip Reilly, Solution Architect

During the development of an application, it is often necessary to connect to external resources, such as databases or web services.

When connecting to external resources, it is usually necessary to authenticate and authorize whoever is connecting. The application designer can take a number of approaches to presenting credentials to the external resource for this purpose. For example, the application can flow the credentials of the user through to the external resource, or it can establish an identity for the application itself and use that identity for authentication and authorization.

The appropriate approach varies by application and scenario, but when an application has no end user interacting with it, the application must present an identity of its own. For example, a batch application or Windows service needs to present the credentials of its own identity to access an external resource. A Windows service could use a service account or service identity for this purpose.

Now consider accessing an external resource from an AzureML experiment. The common approach is to use the reader and writer modules, which support connecting to a number of different data sources. They also accept credentials that the underlying data source can use to authenticate and authorize the access.

If, however, the data source you need isn't supported by the reader or writer modules, what can you do? There are a number of options. For example, you could write an external application, such as a custom Azure Data Factory activity, to retrieve the data from the external data source and write it to a data store that the reader module can access.

Another option would be to write custom R or Python logic that connects to the external data source, retrieves the data and then passes it to another AzureML module within the same experiment.

Recently, I had the opportunity to work with a customer who had exactly this requirement. They wanted to perform anomaly detection on data retrieved from a third-party data provider. Because the third party had provided an R package for retrieving data from its service, we decided to upload the R package to AzureML and call it from within an Execute R Script module.

The R package required a connection string that included the credentials used to authenticate and authorize whoever was retrieving the data. The challenge we faced was how to protect this connection string so that it would not be leaked to unauthorized parties.

Simply hardcoding the connection string into the R script was not acceptable to the customer and their security team. The solution we ultimately chose was the new Azure Key Vault service. The Key Vault is designed to support exactly this type of scenario: rather than hardcoding passwords and connection strings into applications, you use the Key Vault to encrypt and securely store them. Then, at runtime, the application calls the Key Vault's REST API to retrieve the sensitive information.

The Key Vault provides a number of services, including encryption and decryption as well as the storage of secrets and keys. It is also integrated with Azure Active Directory (AD): before you can interact with the Key Vault, you must first authenticate with Azure AD.

A separate article provides a detailed walkthrough of setting up and using the Key Vault. After setting up and configuring Azure AD and the Key Vault, we used the Key Vault's REST API to store the connection string in the Key Vault.
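Storing a secret through the REST API amounts to a single PUT request whose JSON body carries the text to protect in a `value` field. The sketch below uses only the Python standard library and assumes Key Vault REST API version `7.4`, a bearer token already obtained from Azure AD, and hypothetical vault and secret names. It builds the request without sending it, so it can be inspected before use.

```python
import json
import urllib.request

API_VERSION = "7.4"  # assumption: a Key Vault REST API version the vault accepts


def build_set_secret_request(vault_name, secret_name, secret_value, bearer_token):
    """Build (but do not send) a Key Vault 'set secret' PUT request."""
    url = "https://{0}.vault.azure.net/secrets/{1}?api-version={2}".format(
        vault_name, secret_name, API_VERSION)
    # The secret text travels in the "value" field of the JSON body.
    body = json.dumps({"value": secret_value}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={
            "Authorization": "Bearer " + bearer_token,
            "Content-Type": "application/json",
        },
    )


# Sending it requires a valid token and network access, e.g.:
# request = build_set_secret_request("contoso-vault", "ConnectionString", "<conn string>", token)
# with urllib.request.urlopen(request) as response:
#     print(json.loads(response.read().decode("utf-8"))["id"])
```

Here `contoso-vault` and `ConnectionString` are placeholder names; the response's `id` field identifies the stored secret version.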

The Key Vault stores the connection string in encrypted form and only provides it to an entity that has been granted permission to read it, where an entity is a user or application within the Azure AD tenant associated with the Key Vault instance.

For our purposes, we registered an application identity in Azure AD to represent our AzureML tenant. When you register an application in Azure AD, you are given a client ID and an application key that can be used to authenticate to Azure AD.
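Exchanging the client ID and application key for a token is an OAuth2 client credentials grant. The sketch below assumes the Azure AD v1 token endpoint, which takes a `resource` parameter naming the service the token is for (`https://vault.azure.net` for Key Vault). The tenant, client ID and key are placeholders you would supply. It only builds the request and parses the reply, leaving the HTTP call to whichever client you prefer.

```python
import json
from urllib.parse import urlencode

KEY_VAULT_RESOURCE = "https://vault.azure.net"  # the service the token is requested for


def token_request(tenant_id, client_id, client_key):
    """Return the token endpoint URL and the form-encoded body of a client credentials grant."""
    url = "https://login.microsoftonline.com/{0}/oauth2/token".format(tenant_id)
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_key,  # the application key issued at registration
        "resource": KEY_VAULT_RESOURCE,
    })
    return url, body


def bearer_token(response_body):
    """Read the access token from Azure AD's JSON token response."""
    return json.loads(response_body)["access_token"]
```

The body is POSTed as `application/x-www-form-urlencoded`; the returned token is then sent to the Key Vault in an `Authorization: Bearer <token>` header.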

With the connection string protected, we then wrote code, within an Execute Python Script module, to retrieve the connection string from the Key Vault.

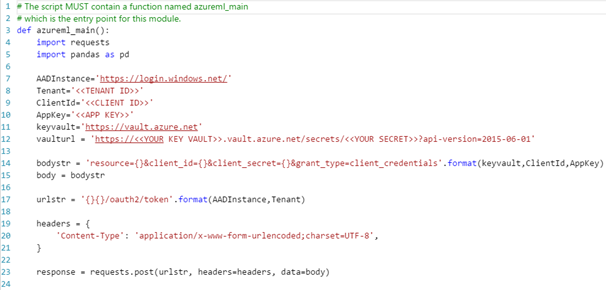

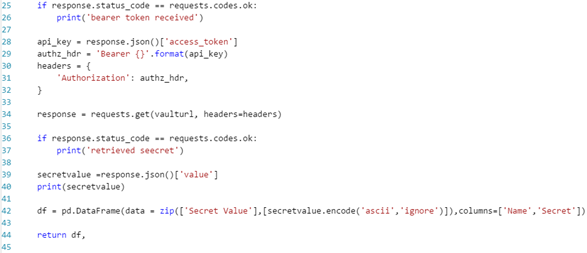

The Python code performs the following steps:

- Calls the Azure AD OAuth2 endpoint to request a bearer token for accessing the Key Vault. To authenticate to Azure AD, the code uses the client ID and application key obtained during the registration process described in the walkthrough article mentioned above.

- Retrieves the bearer token from the response provided by Azure AD.

- Calls the Key Vault, using the bearer token for authentication, to retrieve the connection string (secret).

- Retrieves the connection string from the response and writes it to a data frame, which passes the connection string to the next module.
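The four steps might look roughly like this inside an Execute Python Script module. This is a minimal sketch, not the code from the screenshots. It assumes the module's `azureml_main(dataframe1, dataframe2)` entry point, pandas being available in the module, the Azure AD v1 token endpoint and Key Vault REST API version `7.4`. The tenant, client ID, key, vault name, secret name and output column name are all hypothetical placeholders. The HTTP call is passed in as `send` so the logic can be exercised without network access.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Hypothetical placeholder values; substitute your own registration details.
TENANT_ID = "<tenant-id>"
CLIENT_ID = "<client-id>"
CLIENT_KEY = "<application-key>"
VAULT_NAME = "contoso-vault"
SECRET_NAME = "ConnectionString"
API_VERSION = "7.4"  # assumption: a Key Vault REST API version the vault accepts


def http_send(request):
    """Send a urllib Request and return the decoded JSON response."""
    with urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


def get_connection_string(send=http_send):
    # Steps 1-2: POST a client credentials grant to Azure AD and read the bearer token.
    token_url = "https://login.microsoftonline.com/{0}/oauth2/token".format(TENANT_ID)
    form = urlencode({
        "grant_type": "client_credentials",
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_KEY,
        "resource": "https://vault.azure.net",
    }).encode("utf-8")
    token = send(Request(token_url, data=form))["access_token"]

    # Steps 3-4: GET the secret from the Key Vault using the token, then read its value.
    secret_url = "https://{0}.vault.azure.net/secrets/{1}?api-version={2}".format(
        VAULT_NAME, SECRET_NAME, API_VERSION)
    secret = send(Request(secret_url, headers={"Authorization": "Bearer " + token}))
    return secret["value"]


def azureml_main(dataframe1=None, dataframe2=None):
    import pandas as pd  # pandas is provided by the Execute Python Script module
    # Hand the connection string to the next module as a one-row data frame.
    result = pd.DataFrame({"ConnectionString": [get_connection_string()]})
    return (result,)
```

Because the request goes out with a body, urllib sends it as a POST with a form-encoded content type. The Key Vault call carries no body, so it is a GET.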