Capturing and Analysing SQL Queries in CRM Implementations

Our CRM MVP guest blogger for today is David Jennaway who is the technical director at Excitation.

I’ve recently been involved in analyzing and resolving performance issues in several Dynamics CRM implementations. Different issues have had different root causes; this post is focused on those where the root causes lie with SQL Server. My intentions in this post are to introduce the SQL Server tools that can be used for performance analysis, and to describe some techniques to help you apply them effectively in a CRM implementation.

Here I’m considering scenarios where general CRM performance is absolutely fine, but specific queries (i.e. views) within CRM are slow. If only specific queries have a problem, then SQL Server is where I’d look first.

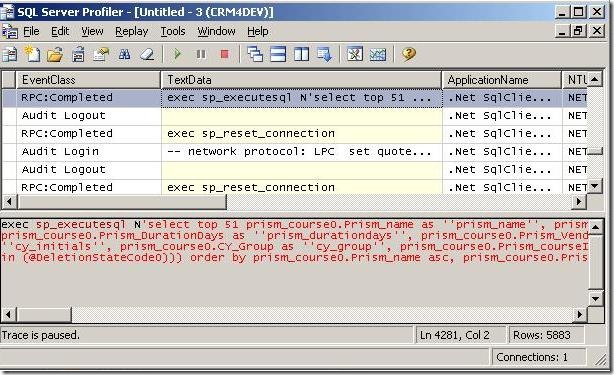

The first part of the investigation is to identify what the SQL Server receives from CRM. The SQL Profiler tool can give you this information by capturing the requests submitted to the SQL Server. The screenshot below shows a capture in two panes; the upper pane displays each request, and the lower pane has the SQL statement submitted – in this case from a CRM view.

Using SQL Profiler to capture requests – setting the filter

A trace is used to capture data. The trace definition includes a filter to specify which requests you want to capture, along with which events and columns you want to capture. SQL Profiler has several templates you can use for this, and the ‘Standard (default)’ template will give you all the information you need about a specific query. One issue you’ll quickly see when using this template on a live CRM implementation is that a lot of activity is captured, and it can be difficult to find the query you want. Amongst the specific queries for CRM views, CRM will submit queries to access CRM metadata, and the CRM Asynchronous Service will continually monitor various tables.

Careful use of column filters will allow you to filter out most of this extraneous information. This is not as easy in a CRM implementation compared to some other applications, as the CRM platform submits all requests on behalf of a user, but here are some useful techniques:

- Filtering on the MSCRM database

- Filtering on the TextData, which contains the SQL statement. An effective way to filter out most metadata requests is to only capture requests where the TextData is not like ‘%CustomizationLevel%’

- Excluding requests from the CRM Asynchronous Service. If this service runs on a dedicated server then you can exclude this via the HostName column. Another option is to filter requests where TextData is not like ‘%AsyncOperationBase%’, but you can only put one filter per column. Another filter I use is where RowCounts >= 1, as a lot of these requests are only monitoring the tables

- Filtering on Duration. As it’s likely you’re only concerned with queries that take a relatively long time, then this can be a useful filter

Once you have built appropriate filters in your trace, you can then save it as a template for future use.

Testing the captured SQL query – performance metrics

The following is a simple example of a captured SQL query – in this case it returns the names of all active accounts:

exec sp_executesql N’select top 51 account0.Name as ”name”, account0.AccountId as ”accountid” from Account as account0 where (account0.StateCode = @StateCode0 and (account0.DeletionStateCode in (@DeletionStateCode0))) order by account0.Name asc, account0.AccountId asc’,N’@StateCode0 int,@DeletionStateCode0 int’,@StateCode0=0,@DeletionStateCode0=0

You can directly copy this into SQL Management Studio and run the query to see the results. This can give some immediate performance information (number of records and overall execution time), but you can get a lot more information.

One set of information is how long it took to parse and compile the query, and how long it took to execute. Another is how much data needed to be accessed, and whether it came from memory or disk. Inserting the following two SQL statements provide this respective information:

set statistics time on

set statistics io on

go

If you rerun the query, the Messages tab will show output like the following:

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 1 ms.Table ‘AccountBase’. Scan count 1, logical reads 4, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 50 ms, elapsed time = 44 ms.

I don’t intend to go into detailed analysis of this information here, but here are a couple of examples where I’ve gained useful insight from this information.

- A very high amount of time to parse and compile the query – this can be an indication that the query is very complex, though I know this wasn’t the case. The actual issue was that SQL 2008 attempted to generate data statistics during compilation, which took an excess amount of time. Changing the statistics options fixed the problem

- A large number of physical reads; this indicates SQL has to retrieve data from disk, not memory, and also that it is doing it a page at a time, which is not efficient (by contrast, read-ahead reads are also from disk, but are efficient), and a symptom of excess index fragmentation. The performance issue was fixed by rebuilding the fragmented index

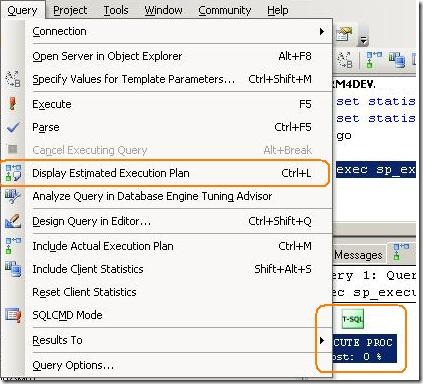

Analysing the SQL query – how it executes

Something else that I am often interested in is how SQL Server executes a given query, and specifically which indexes it uses. SQL Management Studio will show this information via the Display Estimated Execution Plan button or menu command:

Unfortunately, this isn’t very useful yet, as CRM always uses the sp_executesql stored procedure. This can give performance benefits, but doesn’t yield a useful execution plan. To get the execution plan, you need to convert the above query into a format like the following:

select top 51 account0.Name as ‘name’, account0.AccountId as ‘accountid’

from Account as account0

where (account0.StateCode = 0 and (account0.DeletionStateCode in (0)))

order by account0.Name asc, account0.AccountId asc

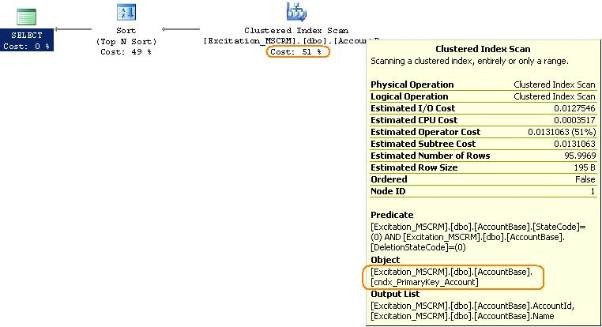

The execution plan for the above query yields the following execution plan. I’ve picked a very simple query for reasons of space, but highlighted some useful information you can gather:

The tooltip displays information about a particular step, and I have highlighted the specific index that was used. The other highlight indicates the cost of each step relative to the overall query, so you can identify which steps may cause performance problems.

Summary

This post has necessarily only scratched the surface of SQL performance analysis. A lot more information is available in the SQL Books Online documentation – a good starting point is Analysing Queries.