Step by step: how to predict the future with Machine Learning

Ever wondered how machine learning works? How exactly do you use historical data to predict the future? Well, here’s a tutorial that will help you learn the basics by creating your own machine learning experiment. We will even show you how you can create a web service based on your experiment!

This is NOT an exhaustive introduction to Machine Learning. It is intended to introduce Machine Learning to those interested in the topic, or to introduce the Azure ML Studio tool to those with existing data science experience.

Pre-requisites

- You will need an Azure account to do the complete workshop. If you don’t have an Azure account, you can sign up for a free trial. Alternatively, you can use the Guest Access in Azure Machine Learning Studio to complete the experiment, but you won’t be able to deploy your experiment as a web service.

Time to complete

- 15-30 minutes

Creating a Machine Learning Workspace

NOTE: If you are using the Azure ML Studio guest access, you can skip this step. If you are using an Azure account, you will need to complete this step.

To use Azure Machine Learning Studio from your Azure account, you need a Machine Learning workspace.

To create a workspace, sign in to your Microsoft Azure account and navigate to the portal (portal.azure.com).

Select +NEW | Data & Analytics | Machine Learning. You will be redirected to the classic Azure portal (as of the time of this post, Azure Machine Learning workspaces are still created in the classic portal).

In the Microsoft Azure services panel, select MACHINE LEARNING.

Select +NEW at the bottom of the window.

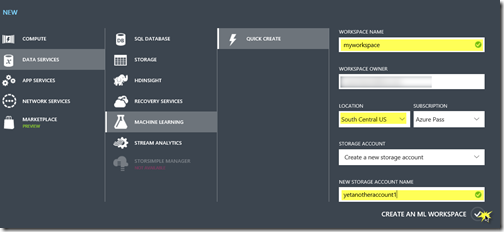

Select DATA SERVICES | MACHINE LEARNING | QUICK CREATE.

Enter a WORKSPACE NAME for your workspace and specify the WORKSPACE OWNER. The workspace owner must be a valid Microsoft account (e.g. name@outlook.com).

NOTE: Later, you can share the experiments you're working on by inviting others to your workspace. You can do this in Machine Learning Studio on the SETTINGS page. You just need the Microsoft account or organizational account for each user.

Specify the Azure LOCATION, then enter an existing Azure STORAGE ACCOUNT or select Create a new storage account to create a new one.

Select CREATE AN ML WORKSPACE to create your workspace.

Accessing Azure Machine Learning Studio

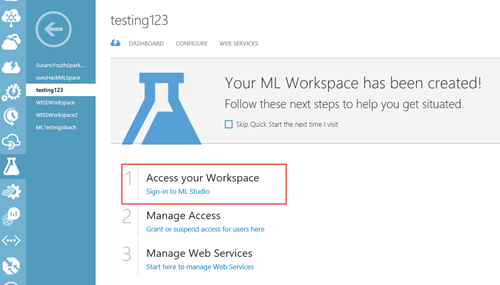

After your Machine Learning workspace is created, you will see it listed on the portal under MACHINE LEARNING.

Select your workspace from the list and then select Sign-in to ML Studio to access the Machine Learning Studio so you can create your first experiment!

When prompted to take a tour, select Not Now. You may want to take a tour later when you are exploring this tool on your own.

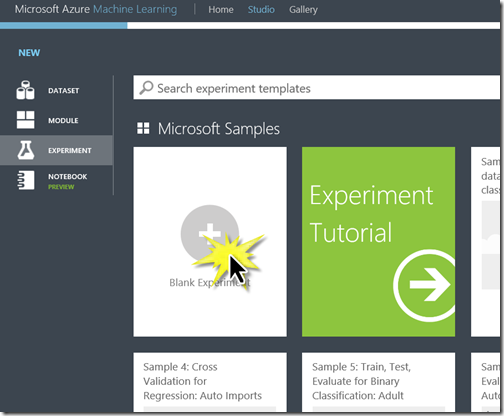

Start a new experiment. At the bottom of the screen, select +NEW.

Select +Blank Experiment.

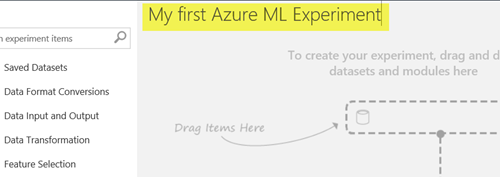

Change the title at the top of the experiment to read “My first Azure ML experiment” – the title you specify here is actually the name for the experiment you will see in the menu.

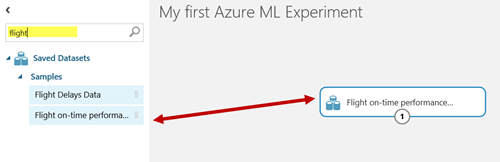

Type “flight” into the search bar and drag the Flight on-time performance Dataset to the workspace. This is one of many sample datasets built into Azure Machine Learning Studio designed to help you learn and explore the tool.

Right-click the dataset in your workspace and select dataset | visualize from the pop-up menu. Explore the dataset by clicking on different columns. It’s essential when you do machine learning to know as much as you can about your data. This dataset provides information about flights and whether or not they arrived on time.

- Click on the Carrier column. There are 7 unique values so we know we have data for multiple airlines.

- Click on the Year column. There is only 1 unique value, and the same is true for the Quarter and Month columns, so it’s not worth using those columns to try to make our predictions. All our records are from one specific month in one specific year.

- Click on ArrDel15. We are going to use Machine Learning to create a model that predicts whether a given flight will be late. The ArrDel15 column is a 1/0 column: 1 indicates the flight arrived more than 15 minutes late, 0 indicates it did not (i.e. it is counted as on time).

- Explore some of the other columns in the dataset
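The same kind of column exploration can be done in code. Here is a minimal sketch using only the Python standard library; the rows are made-up illustrative values, not records from the actual dataset, and the function name is hypothetical.

```python
from collections import defaultdict

# Hypothetical rows that mimic a few columns of the flight dataset.
rows = [
    {"Year": 2013, "Month": 4, "Carrier": "DL", "ArrDel15": 0},
    {"Year": 2013, "Month": 4, "Carrier": "AA", "ArrDel15": 1},
    {"Year": 2013, "Month": 4, "Carrier": "DL", "ArrDel15": 0},
    {"Year": 2013, "Month": 4, "Carrier": "UA", "ArrDel15": 0},
]

def unique_counts(records):
    """Return the number of distinct values found in each column."""
    seen = defaultdict(set)
    for record in records:
        for column, value in record.items():
            seen[column].add(value)
    return {column: len(values) for column, values in seen.items()}

counts = unique_counts(rows)
# Columns with a single distinct value cannot help tell flights apart.
constant_columns = [c for c, n in counts.items() if n == 1]
print(counts)
print("Columns with one unique value:", constant_columns)
```

Any column that shows up in the second list is a candidate to leave out of the experiment.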

Take note of the columns DepTimeBlk and CRSDepTime. DepTimeBlk wasn’t in the original dataset. It was calculated based on CRSDepTime to help make the data more useful for predictions. A big part of machine learning is getting the data into a suitable format. Analyzing whether a flight at 7:31 is more likely to be late than one at 7:32 is less likely to be helpful than checking whether a flight that left between 7 and 8 is more likely to be late than a flight that left between 3 and 4.
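To illustrate how a time-block column could be derived, here is a small sketch. It assumes the scheduled departure time is stored as an integer in hhmm form (for example 731 for 7:31) and that blocks are simple one-hour buckets; the exact rules used to build the sample dataset may differ, and the function name is hypothetical.

```python
def dep_time_block(crs_dep_time):
    """Map a scheduled departure time in hhmm form (e.g. 731) to an hourly block."""
    if not 0 <= crs_dep_time <= 2359 or crs_dep_time % 100 > 59:
        raise ValueError(f"not a valid hhmm time: {crs_dep_time}")
    hour = crs_dep_time // 100
    return f"{hour:02d}00-{hour:02d}59"

# 7:31 and 7:32 land in the same block, so the model sees them as equivalent.
for t in (731, 732, 1545):
    print(t, "->", dep_time_block(t))
```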

Select the columns you think will help make predictions

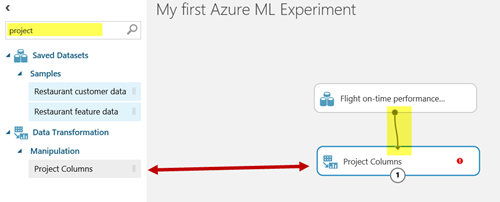

Type “project” into the search bar and drag the Project Columns task to the workspace. Connect the output of your dataset to the Project Columns task input.

The Project Columns task allows you to specify which columns in the dataset you think are significant to a prediction. You need to look at the data in the dataset and decide which columns represent data that you think will affect whether or not a flight is delayed. You also need to select the column you want to predict (ArrDel15 for our experiment).

Click on the Project Columns task. On the properties pane on the right-hand side, select Launch column selector.

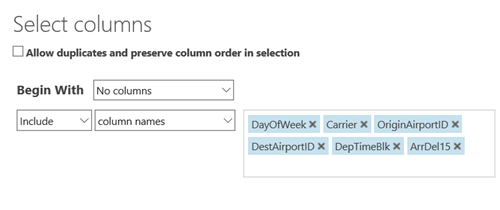

Select the columns you think affect whether or not a flight is delayed as well as the column we want to predict: DayOfWeek, Carrier (airline), OriginAirportID, DestAirportID, DepTimeBlk and ArrDel15 might be good candidates. You don’t have to pick the same columns I list here.
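Conceptually, projecting columns just means keeping a subset of each record. A minimal sketch of that idea follows; the record values are made up and the helper name is hypothetical.

```python
SELECTED = ["DayOfWeek", "Carrier", "OriginAirportID",
            "DestAirportID", "DepTimeBlk", "ArrDel15"]

def project_columns(record, columns=SELECTED):
    """Keep only the chosen columns, failing loudly if one is missing."""
    missing = [c for c in columns if c not in record]
    if missing:
        raise KeyError(f"record is missing columns: {missing}")
    return {c: record[c] for c in columns}

# Hypothetical full record; the values are made up for illustration.
full_record = {
    "Year": 2013, "Month": 4, "DayOfWeek": 3, "Carrier": "DL",
    "OriginAirportID": 12889, "DestAirportID": 13204,
    "CRSDepTime": 731, "DepTimeBlk": "0700-0759", "ArrDel15": 0,
}
print(project_columns(full_record))
```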

Divide our historical data in two

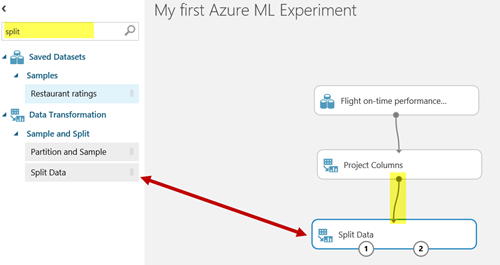

Type “split” into the search bar and drag the Split Data task to the workspace. Connect the output of Project Columns task to the input of the Split Data task.

The Split Data task allows us to divide up our data.

You use the bulk of your records to look for patterns. Our algorithm will search through and analyze the records to try to find patterns that can be used to create a model that predicts, based on our project columns, whether or not a flight will be late.

The rest of the records we need to save for testing! Once we have a model that can predict outcomes based on our project columns, we can use the rest of our data to test that model. Can it successfully predict the outcome for this set of records for which we know the actual outcome? This will determine the accuracy of our model.

Traditionally you will split the data 80/20 or 70/30.

Click on the Split Data task to bring up its properties. Specify .8 as the Fraction of rows in the first output to send 80% of your data to the first output and 20% of your data to the second output.
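If you want to see what an 80/20 split amounts to, here is a rough sketch in plain Python. It assumes a randomized split; the function name and the seed parameter are made up for this example.

```python
import random

def split_rows(rows, fraction=0.8, seed=42):
    """Shuffle a copy of rows and split it into (first, second) outputs."""
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must be between 0 and 1")
    shuffled = list(rows)
    # A fixed seed makes the split repeatable from run to run.
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * fraction)
    return shuffled[:cut], shuffled[cut:]

train, test = split_rows(range(100), fraction=0.8)
print(len(train), len(test))
```

Every row ends up in exactly one of the two outputs, so the model is never tested on a record it was trained on.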

Analyze your data and train your predictive model

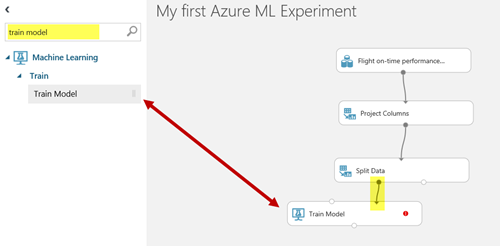

Type “train model” into the search bar. Drag the Train Model task to the workspace. Connect the first output (the one on the left) of the Split Data task to the rightmost input of the Train Model task. This will take 80% of our data and use it to train our model to make predictions.

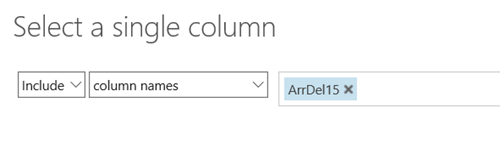

Now we need to tell the train model task which column we are trying to predict with our model. In our case we are trying to predict the value of the column ArrDel15 which indicates if a flight arrival time was delayed by more than 15 minutes.

Click on the Train Model task. In the properties window select Launch Column Selector. Select the column ArrDel15.

Selecting a model

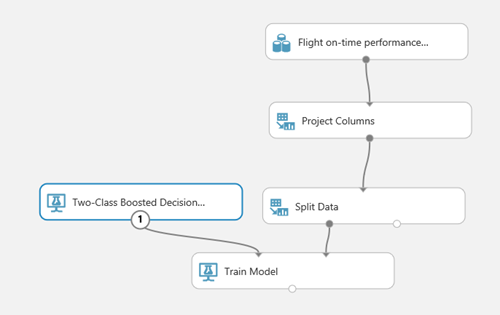

If you are a data scientist who creates their own algorithms, you could now import your own R code to try to analyze the patterns. But we can also use one of the existing built-in algorithms. Type “two-class” into the search bar. You will see a number of different classification algorithms listed. Each of the two-class algorithms is designed to predict a yes/no outcome for a column. Each algorithm has its advantages and disadvantages. The Azure Machine Learning Studio algorithm cheat sheet can help you choose.

Select Two-Class Boosted Decision Tree and drag it to the workspace (feel free to try a different two-class algorithm if you want).

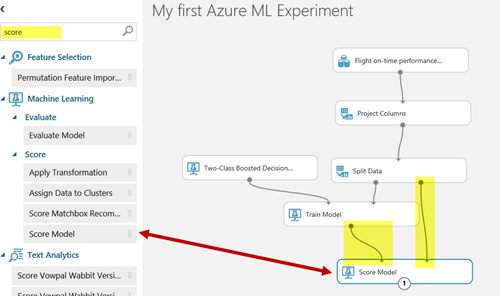

Connect the output of the Two-Class Boosted Decision Tree to the leftmost input of the train model task. When you are done, your experiment should look something like this:

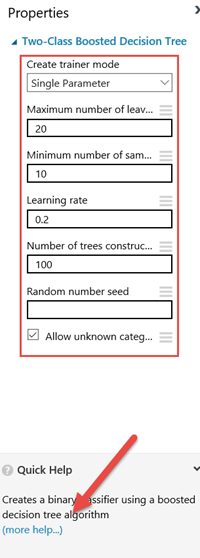

Go to the properties window for your Two-Class Boosted Decision Tree algorithm. There are a number of properties you can change to affect how the algorithm completes the data analysis. Not sure what those all do? At the bottom of the properties window, you can bring up documentation on the selected algorithm, which includes an explanation of the properties. (Of course, the more you know about statistical analysis and machine learning, the more this will make sense to you.)

Check accuracy of your model

After the model is trained, we need to see how well it predicts delayed flights, so we need to score the model by having it test against the 20% of the data we split to our second output using the Split Data task.
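To make the train-then-score idea concrete, here is a deliberately simple stand-in. It is NOT a boosted decision tree: it only learns the observed delay rate for each departure time block and predicts "late" when that rate is above 0.5. All rows are made up, and the function and output column names are hypothetical.

```python
from collections import defaultdict

def train(rows, feature, label):
    """Learn the observed delay rate for each value of one feature."""
    totals, delayed = defaultdict(int), defaultdict(int)
    for row in rows:
        totals[row[feature]] += 1
        delayed[row[feature]] += row[label]
    return {value: delayed[value] / totals[value] for value in totals}

def score(model, rows, feature, default=0.0):
    """Attach a scored probability and a 1/0 scored label to each row."""
    scored = []
    for row in rows:
        probability = model.get(row[feature], default)
        scored.append({**row, "ScoredProbability": probability,
                       "ScoredLabel": 1 if probability > 0.5 else 0})
    return scored

# Made-up training and test rows (not taken from the real dataset).
training_rows = [
    {"DepTimeBlk": "1800-1859", "ArrDel15": 1},
    {"DepTimeBlk": "1800-1859", "ArrDel15": 1},
    {"DepTimeBlk": "1800-1859", "ArrDel15": 0},
    {"DepTimeBlk": "1400-1459", "ArrDel15": 0},
    {"DepTimeBlk": "1400-1459", "ArrDel15": 0},
]
test_rows = [
    {"DepTimeBlk": "1800-1859", "ArrDel15": 1},
    {"DepTimeBlk": "1400-1459", "ArrDel15": 0},
]

model = train(training_rows, "DepTimeBlk", "ArrDel15")
for row in score(model, test_rows, "DepTimeBlk"):
    print(row)
```

The important part is the shape of the workflow: the model only ever sees the training rows, and the held-back rows are used to compare its predictions with the known outcomes.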

Type “score” into the search bar and drag the Score Model task to the workspace. Connect the output of Train Model to the left input of the Score model task. Connect the right output of the Split Data task to the right input of the Score Model task as shown in the following screenshot.

Now we need to get a report to show us how well our model performed.

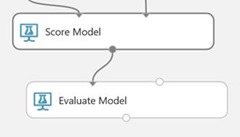

Type “evaluate” into the search bar and drag the Evaluate Model task to the bottom of the workspace. Connect the output of the Score model task to the left input of the Evaluate Model task.

Run your experiment

Press Run on the bottom toolbar. You will see green checkmarks appear on each task as it completes.

It will take a while to execute the train model step. How long it takes will depend on which algorithm you selected and the number of records being processed. The train model and score model tasks will take the longest to complete. It may take as long as 15 minutes to run this model depending on the algorithm you selected. While you are waiting, check out the documentation on Machine Learning so you can learn more about any of the steps you have completed so far.

How to interpret your results

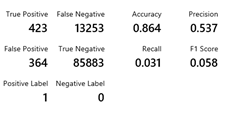

When the entire experiment is completed, right-click on the Evaluate Model task and select “Evaluation results | Visualize” to see how well your model predicted delayed flights.

The closer the graph is to a straight diagonal line the more your model is guessing randomly. You want your line to get as close to the upper left corner as possible.
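That description matches an ROC curve, which plots the true positive rate against the false positive rate as the decision threshold changes. Assuming that is what the chart shows, the following sketch computes the points of such a curve and the area under it for a handful of made-up scores. It assumes both classes are present in the labels; the function names are hypothetical.

```python
def roc_points(labels, scores):
    """Return (false positive rate, true positive rate) pairs, one per threshold."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    # Lower the threshold step by step; each step flags more flights as late.
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points

def area_under_curve(points):
    """Trapezoidal area under the curve; the diagonal gives 0.5, a perfect model 1.0."""
    return sum((x2 - x1) * (y1 + y2) / 2
               for (x1, y1), (x2, y2) in zip(points, points[1:]))

# Made-up labels (1 = late) and scored probabilities.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]
points = roc_points(labels, scores)
print(points)
print("AUC:", area_under_curve(points))
```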

If you scroll down you can see the accuracy – Higher accuracy is good!

You can also see the number of true and false positive and negative predictions:

- True positives are how often your model correctly predicted a flight would be late

- False positives are how often your model predicted a flight would be late, when the flight was actually on time (your model predicted incorrectly)

- True negatives indicate how often your model correctly predicted a flight would be on time

- False negatives indicate how often your model predicted a flight would be on time, when in fact it was delayed (your model predicted incorrectly)

You want high values for True Positives and True Negatives, and low values for False Positives and False Negatives.
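The four definitions above translate directly into a counting loop. A small sketch with made-up actual and predicted values, where 1 means late and 0 means on time (the function name is hypothetical):

```python
def confusion_counts(actual, predicted):
    """Count true/false positives and negatives, treating 1 as 'late'."""
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if p == 1:
            # Predicted late: correct only if the flight really was late.
            counts["TP" if a == 1 else "FP"] += 1
        else:
            # Predicted on time: a miss if the flight really was late.
            counts["FN" if a == 1 else "TN"] += 1
    return counts

actual    = [1, 0, 1, 0, 0, 1]
predicted = [1, 1, 0, 0, 0, 0]
print(confusion_counts(actual, predicted))
```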

Our model predicted

- 423 flights would be delayed and were actually delayed (True Positive)

- 364 flights would be delayed, which actually landed on time (False Positive)

- 13253 flights would be on time, which were actually delayed (False Negative)

- 85883 flights would be on time, which were actually on time (True Negative)
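It is worth doing the arithmetic on these four numbers. The sketch below uses the standard definitions of accuracy, precision and recall; only the counts come from the experiment above.

```python
# Counts reported by the experiment above.
tp, fp, fn, tn = 423, 364, 13253, 85883

total = tp + fp + fn + tn
accuracy = (tp + tn) / total   # share of all predictions that were right
precision = tp / (tp + fp)     # of flights predicted late, share that were late
recall = tp / (tp + fn)        # of flights actually late, share the model caught

print(f"accuracy={accuracy:.3f} precision={precision:.3f} recall={recall:.3f}")
```

The accuracy comes out at about 86%, which sounds good, but the recall is only about 3%: the model catches very few of the flights that were actually delayed. Most flights are on time, so a model that almost always predicts "on time" still scores well on accuracy alone.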

Improving your accuracy

Not happy with the accuracy? Well, they do call it an experiment for a reason. You need to experiment to get better accuracy.

What can you do to get better results?

- More records - If you have more records to analyze, you may get a more accurate model. What if we had 3 years of data instead of one month? What if we had data across different months? What if we had data from more airlines and more airports?

- More columns - If you have additional columns of data, you may get more accurate results. What if we had a duration of flight column? Perhaps longer flights are able to make up the time when they leave late? What if we knew whether a flight was travelling West to East or East to West?

- Cleaner data – sometimes data sets have missing values or incorrect values that skew the results

- More data sets – What if we had a weather data set we could import and join with our flight data?

- Algorithms - Maybe you should use a different algorithm to analyze the data. Check out the Azure Machine Learning Cheat sheet for suggestions.

- Algorithm parameters - Maybe you should change some of the analysis parameters of your algorithm. Look up the documentation for the algorithm you are using for details on what parameters you can change and the effect on results and experiment time.

Creating a web service for your trained model

NOTE: If you are using the Azure ML Studio guest sign in to do this tutorial, you will not be able to create a web service. Creating a web service can only be completed from an Azure account.

Once you have trained a model with a satisfactory level of accuracy, how do you use it? One of the great things about Azure Machine Learning Studio is how easy it is to take your model and deploy it as a web service. Then you can simply have a website or app call the web service, pass in a set of values for the project columns and the web service will return the predicted value and confidence of the result.

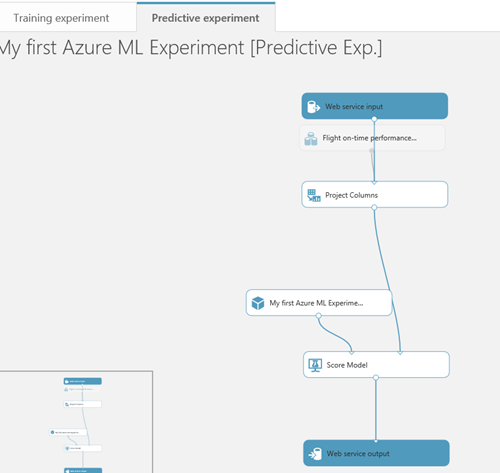

Convert the training experiment to a predictive experiment

Once you've trained your model, you're ready to use it to make predictions for new data. To do this, you convert your training experiment into a predictive experiment. By converting to a predictive experiment, you're getting your trained model ready to be deployed as a web service. Users of the web service will send input data to your model and your model will send back the prediction results.

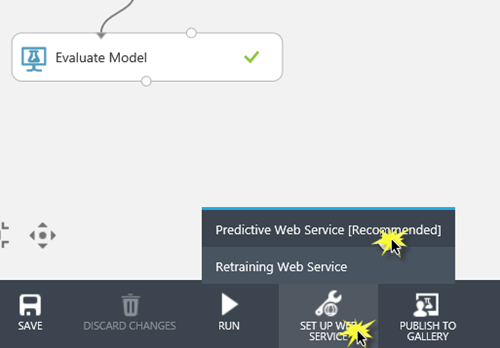

To convert your training experiment to a predictive experiment, select Set Up Web Service, then select Predictive Web Service.

This will create a new predictive experiment for your web service. The predictive experiment doesn’t have as many components as your original experiment. You will notice a few differences:

- You don’t need the dataset, because when someone calls the web service they will pass in the data to use for the prediction.

- You still need to identify which Project columns will be used for predictions if you pass in a full record of data.

- Your algorithm and Train Model tasks have now become a single trained model, which will be used to analyze the data passed in and make a prediction.

- We don’t need to evaluate the model to test its accuracy. All we need is a Score Model task to return a result from our trained model.

- Two new tasks are added to indicate how the data from the web service is input to the experiment, and how the data from the experiment is returned to the web service.

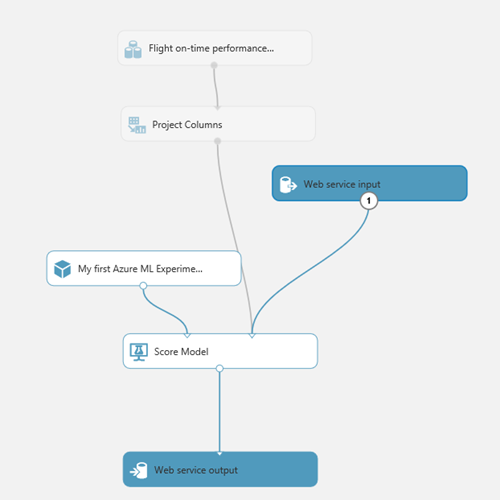

Delete the connection from the Web input to Project columns and redraw the connection from the Web input to the Score Model. If you leave the web input connected to project columns, the web service will prompt you for values for all the data columns. If you have the web input connected to the score model directly, the web service will only expect the data columns used for making predictions.

For more details on how to do this conversion, see Convert a Machine Learning training experiment to a predictive experiment.

Deploy the predictive experiment as a web service

Now that the predictive experiment has been sufficiently prepared, you can deploy it as an Azure web service. Using the web service, users can send data to your model and the model will return its predictions.

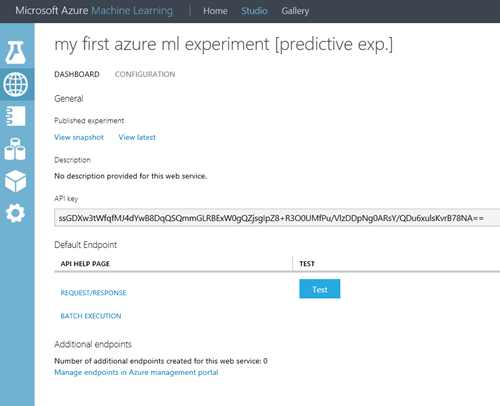

To deploy your predictive experiment, select Run at the bottom of the experiment canvas. After the experiment finishes running, select Deploy Web Service.

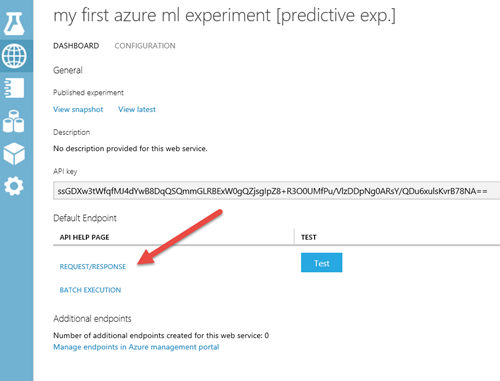

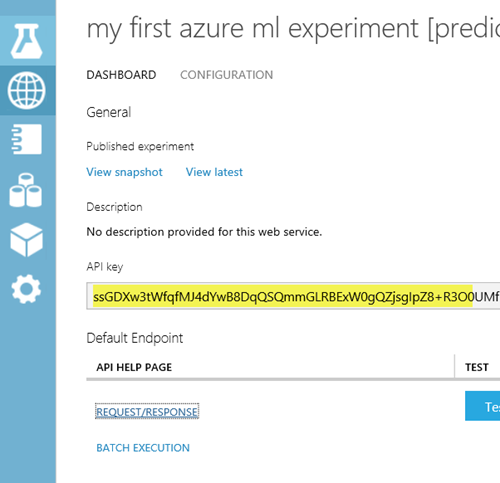

After the web service is created you are redirected to the Web service dashboard page for your new web service.

Test the web service

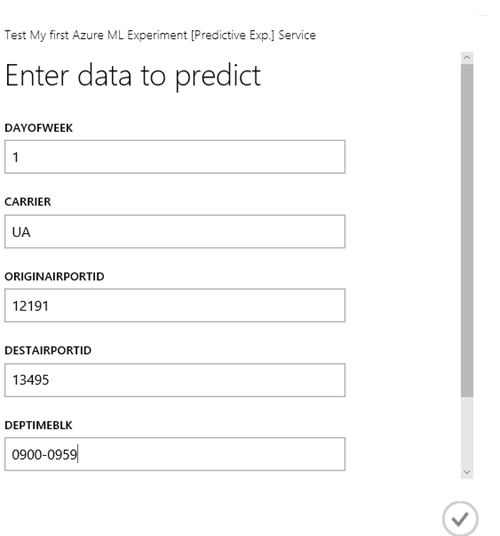

Click the Test link on the web service dashboard. A dialog pops up to ask you for the input data for the service. These are the columns expected by the scoring experiment.

Enter a set of data and then click OK. The results generated by the web service are displayed at the bottom of the dashboard.

- DayOfWeek should be a number from 1 to 7

- Carriers can be WN, DL, US, XE, AA, OO, UA, CO

- DepTimeBlk should be in the format 0900-0959, 1400-1459, 2300-2359

- Airport IDs include 12191, 13495, 12889, 13204, 10800, 13796

- Leave ArrDel15 as 0
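If you plan to call the service from your own code later, it can help to check a record against these value ranges before sending it. The following is only a sketch: the function name is hypothetical, and the carrier list is copied from the guidance above rather than from the service itself.

```python
import re

CARRIERS = {"WN", "DL", "US", "XE", "AA", "OO", "UA", "CO"}
TIME_BLOCK = re.compile(r"^\d{4}-\d{4}$")

def validate_test_input(record):
    """Return a list of problems found in a test record (empty if none)."""
    problems = []
    if record.get("DayOfWeek") not in range(1, 8):
        problems.append("DayOfWeek should be a number from 1 to 7")
    if record.get("Carrier") not in CARRIERS:
        problems.append("Carrier is not one of the listed codes")
    if not TIME_BLOCK.match(str(record.get("DepTimeBlk", ""))):
        problems.append("DepTimeBlk should look like 0900-0959")
    return problems

good = {"DayOfWeek": 3, "Carrier": "DL", "DepTimeBlk": "0900-0959",
        "OriginAirportID": 12889, "DestAirportID": 13204, "ArrDel15": 0}
bad = {"DayOfWeek": 9, "Carrier": "ZZ", "DepTimeBlk": "9am"}
print(validate_test_input(good))
print(validate_test_input(bad))
```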

The results of the test will appear at the bottom of the screen. You will see the record you entered, followed by the predicted output (scored label) and the probability (scored probability). In the screenshot below, the scored label is 0 (on time) and the scored probability is .08, which means the model estimates roughly an 8% chance that the flight will be late. The value you see returned will vary depending on the data you specified. (Tip: changing the time of day is a good way to get different results if you want to try entering different values. Flights around 6 PM are more likely to be late than flights at 2 PM.)

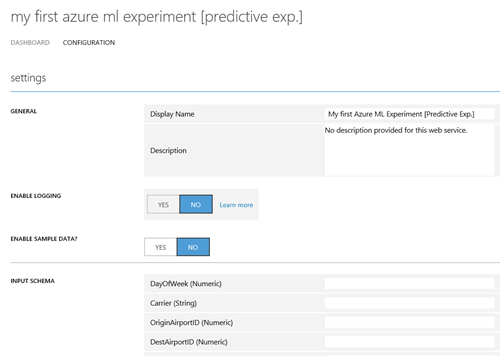

On the CONFIGURATION tab you can change the display name of the service and give it a description. The name and description are displayed in the Azure Classic Portal where you manage your web services. You can provide a description for your input data, output data, and web service parameters by entering a string for each column under INPUT SCHEMA, OUTPUT SCHEMA, and WEB SERVICE PARAMETER. These descriptions are used in the sample code documentation provided for the web service. You can also enable logging to diagnose any failures that you're seeing when your web service is accessed.

Calling the web service

Once you deploy your web service from Machine Learning Studio, you can send data to the service and receive responses programmatically.

The dashboard provides all the information you need to access your web service. For example, the API key is provided to allow authorized access to the service, and API help pages are provided to help you get started writing your code. Select Request/Response if you are going to call the web service passing one record at a time. Select Batch Execution if you are going to pass multiple records to the web service at a time.

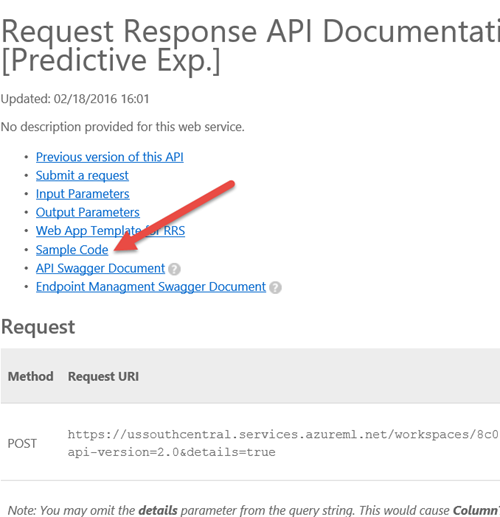

On the API help page, select Sample code.

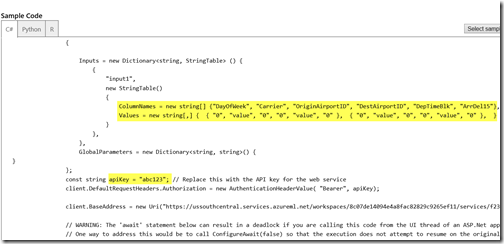

You will be presented with code samples for calling the web service from C#, Python and R.

Replace the apiKey of abc123 with the API key displayed in the dashboard of your web service.

Replace the values with the values you wish to pass into the web service and you can now call the web service from your code to retrieve predictions!
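To give a rough idea of what such a call looks like, here is a sketch in Python that builds the request without sending it. Treat the details as assumptions: the URL is a placeholder, and the payload layout (the "Inputs", "input1", "ColumnNames", "Values" and "GlobalParameters" keys) is modelled on the general shape of the generated samples and may not match your service exactly. The sample code on your own API help page is the source of truth.

```python
import json
import urllib.request

# Placeholders: copy the real values from your web service dashboard.
API_KEY = "abc123"
SERVICE_URL = "https://example.invalid/your-request-response-url"

def build_request(record, url=SERVICE_URL, api_key=API_KEY):
    """Build (but do not send) a JSON POST request for one record."""
    payload = {
        "Inputs": {
            "input1": {
                "ColumnNames": list(record.keys()),
                "Values": [[str(v) for v in record.values()]],
            }
        },
        "GlobalParameters": {},
    }
    headers = {
        "Content-Type": "application/json",
        "Authorization": "Bearer " + api_key,
    }
    return urllib.request.Request(
        url, data=json.dumps(payload).encode("utf-8"),
        headers=headers, method="POST")

record = {"DayOfWeek": 3, "Carrier": "DL", "OriginAirportID": 12889,
          "DestAirportID": 13204, "DepTimeBlk": "1800-1859", "ArrDel15": 0}
request = build_request(record)
print(request.get_method(), request.full_url)

# To actually call the service, replace the placeholders and uncomment:
# with urllib.request.urlopen(request) as response:
#     print(json.loads(response.read()))
```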

For more information about accessing a Machine Learning web service, see How to consume a deployed Azure Machine Learning web service.

You can even publish your Azure Machine Learning Web service to the Azure Marketplace!

Wondering what it costs?

There is a pricing calculator you can use to get a sense of the cost to use Azure services including Azure Machine Learning. The price depends on the number of users (seats) and the number of experiment hours. Check out the Pricing calculator.

This is cool, want to learn more?

Congratulations, you have just completed a machine learning experiment and deployed a web service that can use your predictive model. But you can go so much further with machine learning and with Azure ML Studio.

Here are a few resources to help you get going:

- Getting started with Microsoft Azure Machine Learning

- Data Science and Machine Learning Essentials

- Machine Learning Tutorials

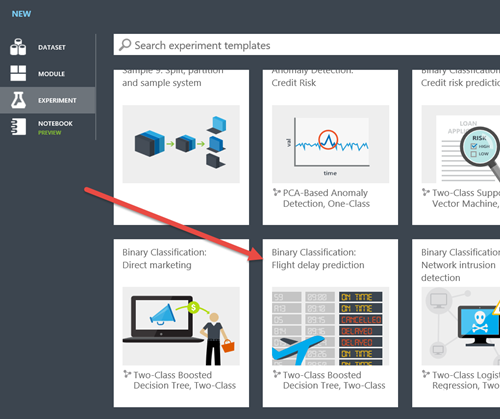

You may also want to check out a much more complex experiment using the flight data to get an idea of just how far you can go with Azure ML studio!

Create a new experiment, scroll through the Microsoft samples, and check out the sample “Binary Classification Flight Delay Prediction” or any of the other samples!

If you want another interesting dataset to try for creating your own experiment, check out the Titanic dataset and see if you can predict who would survive!