Pricing and Billing Model for Federations in SQL Azure Explained!

Note: the article has been updated with the new pricing model that went into effect in Feb 2012.

Now that Federations is live in production, lets talk about how the billing model works for federations. Good news is the model is very simple; With federations, every member can be considered a regular SQL Azure database. In fact, sys.database in master database report all federation members in the list. Federation members are marked using a special flag called is_federation_member in this view to help identify them as such.

Before I kick into explaining the federations billing model, if you are not familiar with the SQL Azure billing model, here is a quick overview. There are 3 important principles to remember as we discuss the billing model details for federation;

#1 – Charges on databases are prorated to a day. For example a single 1GB WEB EDITION database costs roughly $.33 a day.

#2 – All databases that existed in a day are charged even if they existed only for part of the day and was dropped during that day.

#3 – Only databases that are in ready state are charged. Databases that are being built are not charged. Charging is only done after these databases become accessible, that is you can connect and work with them.

Ok lets get started…

Creating Federations

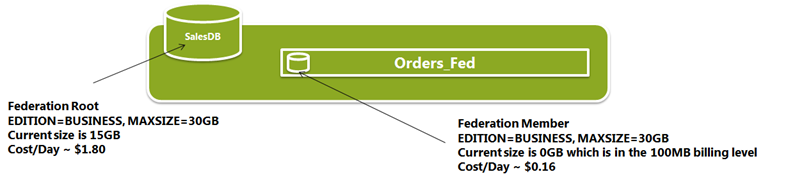

The first federation member is created when the federation is created using the CREATE FEDERATION statement. The member inherit its EDITION and MAXSIZE from the root database. However like a new database. this new federation members contain no data so is an empty database.

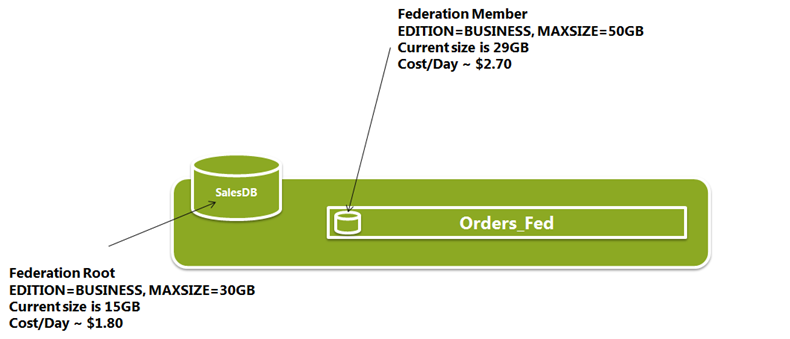

Here is an example; Lets assume you have a user database with 25GB of data currently and is set to BUSINESS edition and has a MAXSIZE of 30GB. You decide to scale out the database and create a federation. Your first member created with this federation will have the same EDITION and MAXSIZE properties. However given that the member has no data yet, it will only cost you as much as the smallest BUSINESS edition database – that is 10GB.

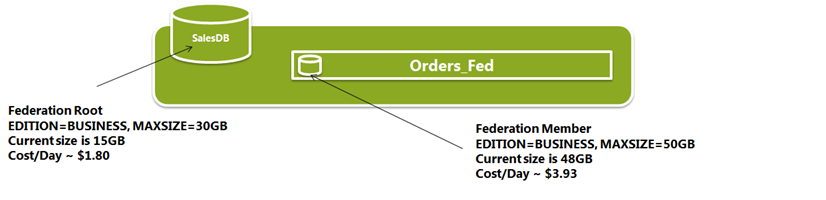

Here is SalesDB with federation Orders_Fed after the first member has been modified to a MAXSIZE of 50GB and current data size grew to 48GBs after some data loading to the member.

Repartitioning Operations

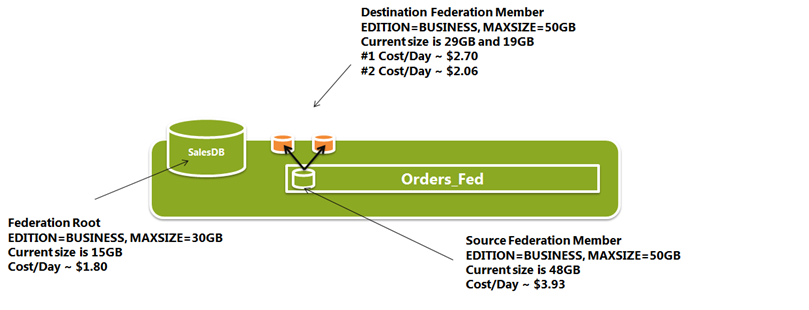

Some more time passes and as you ALTER FEDERATION with SPLIT to scale out your database further, new members are created. These new federation members also inherit their properties from the source federation member that is being SPLIT. You can find the details of the SPLIT operation in this article but the important thing to remember is that the SPLIT operation does not reuse an existing database and always creates new databases to keep the operation online. From a billing standpoint, you can think of a SPLIT as two CREATE DATABASE statements to create the 2 new destination members, combined with a single DROP DATABASE to drop the source federation member.

First remember principle #3 above on SQL Azure billing: Only databases that are accessible are charged. So during the SPLIT operation, you don’t pay for the destination members that are created yet. You only pay for these new members after the operation completes.

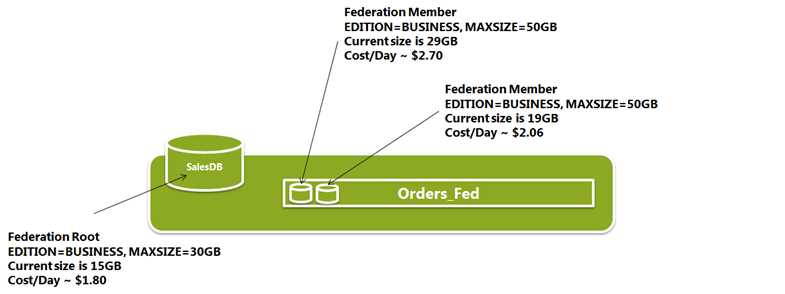

Also remember principle #1 and #2 above; Billing is prorated to a day and every database is counted even if it existed only for the part of a day. That means the day of the SPLIT you pay for both the source and destination federation members. However the day after the SPLIT, source database is no longer charged to you since it is dropped. So you only pay for the 2 new members that are in place.

Typically the size of the destination members shrink given data will be filtered at the split point specified by the SPLIT operation. So the 2 new members are charged based on their size after the SPLIT.

Lets continue walking through our example; Imagine the federation member with a current size of 48GB is split into a 29GB and a 19GB federation members, your bill should look like this the day of the SPLIT;

…And should no longer include the source member the day after the SPLIT;

With ALTER FEDERATION to DROP members, we do reuse an existing database so form a billing standpoint, it equates to a DROP DATABASE statement dropping one of the members. In SQL Azure, databases are billed even if they existed only for a part of the day. So the day you run ALTER FEDERATION … DROP you still pay for both members. However here is what the bill will include the day after we run DROP AT (HIGH id=…)

Modifying Federation Member Billing Properties

It is also important to remember that federations are available at all editions; BUSINESS and WEB. You can mix and match EDITION and MAXSIZE setting with root and members. Federation root and each federation member can be altered using the ALTER DATABASE statement with the following options.

ALTER DATABASE database_name {

MODIFY (<edition_options> [, ..n])

}<edition_options> ::= {

(MAXSIZE = {1|5|10|20|30|40|50|100|150} GB)

| (EDITION = {'web' | 'business'})

} [;]

You will need the member database name to use ALTER DATABASE. For the member database name. You can figure this out simply using db_name() function after switching to the member using the USE FEDERATION statement. You can follow along this article to figure out a federation members database name and other metadata on members.

Best Practices for Cost Optimizing Your Federations

As the final few words, I wanted to touch on an important topic. How do you cost optimize federations? Given the flexible nature of federation member setting on MAXSIZE and EDITION, I also get a lot of question on how to configure federation members. Should one have larger and fewer federation members OR smaller but many federation members?

With the updated pricing model on Feb 2012, SQL Azure now optimizes for consolidating data to larger databases. In Feb 2012, a single 50GB database roughly $4.06 a day. 50x1GB databases would cost $16.11. Both setups have access to the same storage capacity, however clearly 50x1GB databases have access to 50x more cores, memory and IOPS capacity as well as tempdbs and log files. Thus for cost conscious systems, you should choose to consolidate storage to fewer members, save on cost but risk higher latency for queries. However for mission critical workloads should invest more money and spread to many smaller members for better parallelism and performance. Given every applications workload characteristics are different, there isn't a declared balance-point for your app that I can declare but you can discover that by testing your workload and measure the query performance and cost under various setups with federations.

One thing is clear, in this new world with the updated pricing model in SQL Azure, it becomes even more important to build systems that are elastic. Apps that can adapt to changes in workload and, expand and in future shrink in time to handle the peaks gain great edge with the new pricing model. That makes federation a very valuable technology for apps that want to catch the best price performance. By the way if you'd like to shrink and consolidate data today, you can refer to this post; https://blogs.msdn.com/b/cbiyikoglu/archive/2012/02/20/implementing-alter-federation-merge-at-command-using-sql-azure-migration-wizard-by-gihuey.aspx

As always, love to hear feedback on your experience with federations and the billing model. you can reach me through the blog or at twitter @cihangirb.