BizTalk Performance Lab Delivery Guide - A Process for Conducting Performance Labs

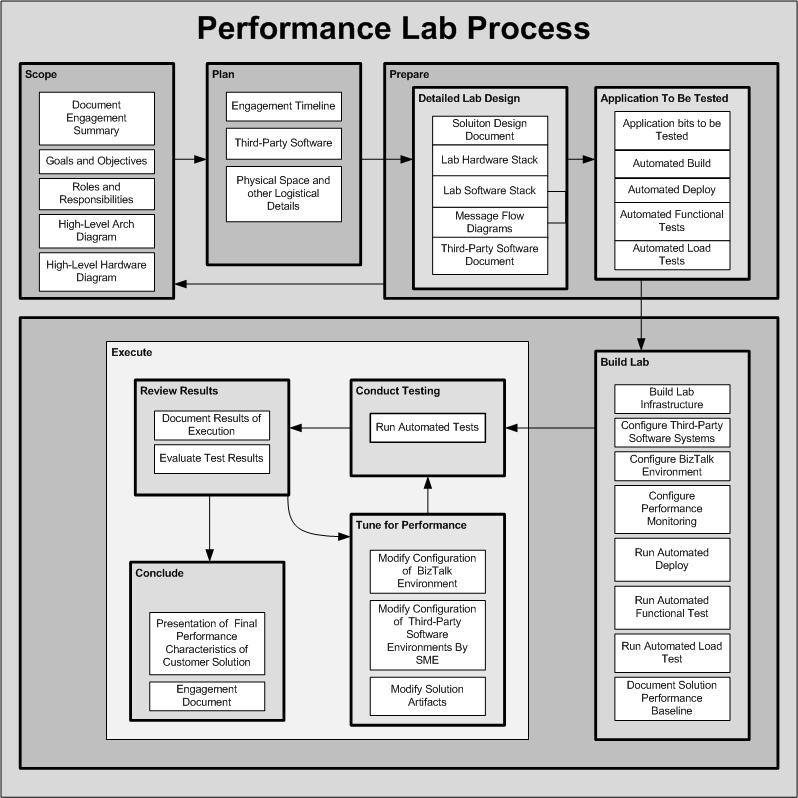

The diagram below reflects the current process that the Microsoft BizTalk Ranger Team uses to conduct performance labs. If it looks familiar, you may have seen an earlier incarnation that was part of my contribution to Doug Girard's excellent MSDN article "Managing a Successful Performance Lab" (https://msdn2.microsoft.com/en-us/library/aa972201.aspx).

This process is a recommendation, not an edict. That said, it has consistently resulted in successful Performance Lab Engagements over the past year. Many of the sections that follow in this guide echo this process. If readers decide to use a different or modified process, the content of the related sections in the guide should be modified or adapted accordingly.


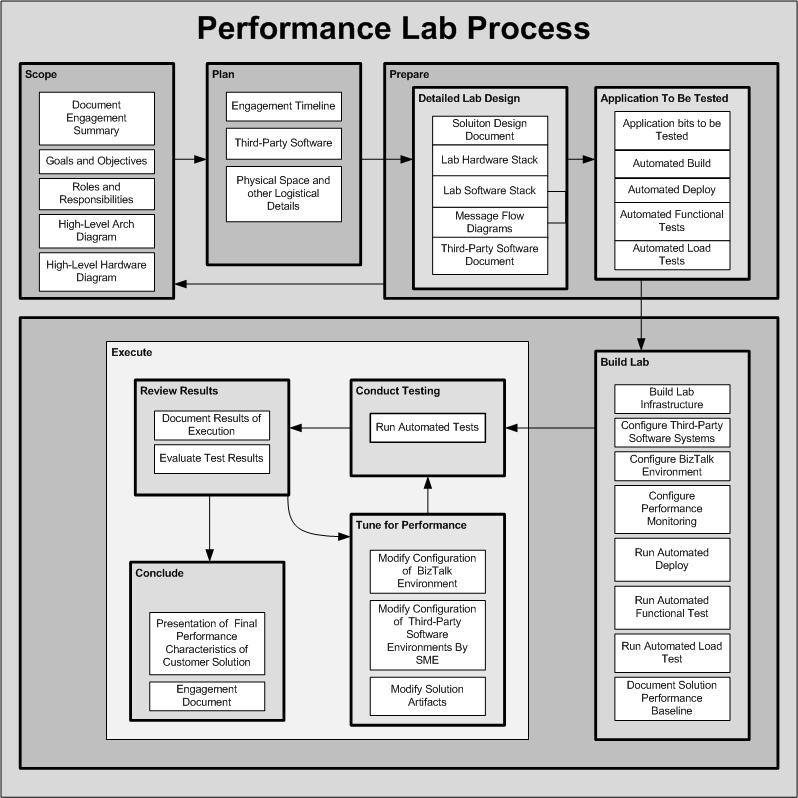


The Performance Lab Process in the above diagram outlines the five distinct phases that a Performance Lab Engagement follows:

Scope -> Plan -> Prepare -> Build Lab -> Execute

As with any process that a team might attempt to follow, it is critically important for all parties involved to know, understand, and agree upon the steps and tasks in the process. To help communicate this process to other stakeholders, a PowerPoint slide with this diagram is included with the Performance Lab Bits. The Visio file for this diagram is also included in the Performance Lab Bits as a starting point in case the reader wants to customize the process for a specific Performance Lab Engagement.

Click the links below to understand the Lab Process at a deeper level.