Enabling large disks and large sectors in Windows 8

One of the most basic services provided by an OS is the file system, and Windows has one of the most advanced file systems of any operating system in broad use. In Windows 7 we improved things substantially in terms of reliability, management, and robustness (for example, completely automating the antiquated notion of "defrag"). In Windows 8 we build on this work by focusing on scale and capacity. Bryan Matthew, a program manager on the Storage & File System team, authored this post.

--Steven

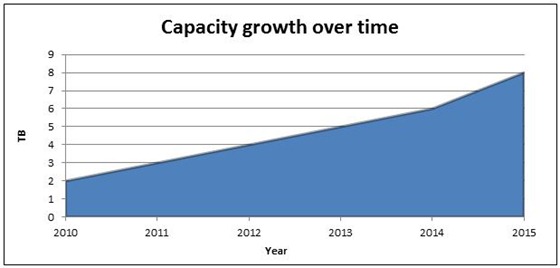

Our digital collections keep growing at an ever increasing rate – high resolution digital photography, high-definition home movies, and large music collections contribute significantly to this growth. Hard disk vendors have responded to this challenge by delivering very large capacity hard disk drives – a recent IDC market research report estimates that the maximum capacity of a single hard disk drive will increase to 8TB by 2015.

Maximum capacity growth over time for single-disk drives

(Source: IDC Study# 228266, Worldwide Hard Disk Drive 2011–2015 Forecast:

Transformational Times, May 2011)

In this blog entry, I’ll discuss how Windows 8 has evolved in conjunction with offerings from industry partners to enable you to more efficiently and fully utilize these very large capacity drives.

The challenges of very large capacity hard disk drives

To start with a little bit of context, we will define “very large capacity” disk drives as those larger than 2.2TB (per disk drive). The current architecture in Windows has some limits that make these drives somewhat tricky to deal with in some scenarios.

Even as hard disk drive vendors innovated to deliver very large capacity drives, two key challenges required focused attention:

- Ensuring that the entire available capacity is addressable, so as to enable full utilization

- Supporting the hard disk drive vendors in their effort to deliver more efficiently managed physical disks – 4K (large) sector sizes

Let’s discuss both of these in more detail.

Addressing all available capacity

To fully understand the challenges with addressing all available capacity on very large disks, we need to delve into the following concepts:

- The addressing method

- The disk partitioning scheme

- The firmware implementation in the PC – whether BIOS or UEFI

The addressing method

Initially, disks were addressed using the CHS (Cylinder-Head-Sector) method, where you could pinpoint a specific block of data on the disk by specifying which Cylinder, Head, and Sector it was on. I remember in 2001 (when I was still in junior high!) we saw the introduction of a 160GB disk, which marked the limit of the CHS method of addressing (at around 137GB), and systems needed to be redesigned to support larger disks. [Editor’s note: my first hard drive was 5MB, and was the size of a tower PC. --Steven]

The new addressing method was called Logical Block Addressing (LBA) – instead of referring to sectors using discrete geometry, a sector number (logical block address) was used to refer to a specific block of data on the disk. Windows was updated to utilize this new mechanism of addressing available capacity on hard disk drives. With the LBA scheme, each sector has a predefined size (until recently, 512 bytes per sector), and sectors are addressed in monotonically increasing order, beginning with sector 0 and going on to sector n - 1, where n is the total number of sectors:

n = (total capacity in bytes) / (sector size in bytes)
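To make the two addressing methods concrete, here is a minimal Python sketch. The function names are illustrative only, and the CHS-to-LBA mapping shown is the conventional one (CHS sector numbers start at 1), not something specific to Windows.

```python
SECTOR_SIZE = 512  # bytes; the traditional logical sector size


def sector_count(capacity_bytes, sector_size=SECTOR_SIZE):
    """Number of whole sectors (n) on a disk of the given capacity."""
    return capacity_bytes // sector_size


def chs_to_lba(cylinder, head, sector, heads_per_cylinder, sectors_per_track):
    """Conventional mapping from a CHS triple to a logical block address."""
    return (cylinder * heads_per_cylinder + head) * sectors_per_track + (sector - 1)


def lba_to_byte_offset(lba, sector_size=SECTOR_SIZE):
    """Byte offset on the disk at which the given logical block starts."""
    return lba * sector_size


# A 3TB drive (decimal terabytes) with 512-byte sectors:
n = sector_count(3 * 10**12)
print(n)            # 5859375000
print(n > 2**32)    # True: more sectors than a 32-bit number can count
```

Note that the sector count for a 3TB drive no longer fits in 32 bits, which is where the partitioning scheme comes into play.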

The disk partitioning scheme

While LBA addressing theoretically allows arbitrarily large capacities to be accessed, in practice, the largest value of “n” can be limited by the associated disk partitioning scheme.

The notion of disk partitioning can be traced back to the early 1980s – at the time, system implementers identified the need to divide a disk drive into several partitions (i.e. sub-portions), which could then be individually formatted with a file system, and subsequently used to store data. The Master Boot Record partition table (MBR) scheme was invented at the time, and it allowed for up to 32 bits of information to represent sector addresses and counts, and therefore the maximum capacity of the disk. Simple math informs us that 32 bits can address at most 2^32 sectors, which at 512 bytes per sector comes to about 2.2TB. Of course, in the 1980s, this seemed a perfectly legitimate practical limitation to impose, considering that the largest consumer disk available then was a whopping 5MB and cost well over $1500!
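To see where the 32-bit limit lives, here is a rough Python sketch that decodes the four partition entries in a classic MBR sector. The layout used (a 16-byte entry at offset 446, with a 32-bit starting LBA and a 32-bit sector count, followed by the 0x55 0xAA signature) is the conventional MBR layout; the function name and the demo sector are made up for illustration.

```python
import struct

MBR_SIZE = 512
PARTITION_TABLE_OFFSET = 446
ENTRY_SIZE = 16
BOOT_SIGNATURE = b"\x55\xaa"
# status, CHS start (3 bytes), type, CHS end (3 bytes), start LBA, sector count
ENTRY_FORMAT = "<B3sB3sII"


def parse_mbr_entries(sector0):
    """Return the non-empty partition entries found in an MBR sector."""
    if len(sector0) < MBR_SIZE or sector0[510:512] != BOOT_SIGNATURE:
        raise ValueError("not a valid MBR sector")
    entries = []
    for i in range(4):
        start = PARTITION_TABLE_OFFSET + i * ENTRY_SIZE
        raw = sector0[start:start + ENTRY_SIZE]
        status, _, ptype, _, start_lba, num_sectors = struct.unpack(ENTRY_FORMAT, raw)
        if ptype != 0:  # type 0 marks an unused slot
            entries.append({"bootable": status == 0x80, "type": ptype,
                            "start_lba": start_lba, "num_sectors": num_sectors})
    return entries


# Build a made-up MBR with one bootable NTFS-type (0x07) partition whose
# sector count is the largest value a 32-bit field can hold.
demo = bytearray(MBR_SIZE)
demo[446:462] = struct.pack(ENTRY_FORMAT, 0x80, b"\0" * 3, 0x07, b"\0" * 3,
                            2048, 0xFFFFFFFF)
demo[510:512] = BOOT_SIGNATURE
entries = parse_mbr_entries(bytes(demo))
print(entries[0]["num_sectors"])  # 4294967295
```

Because both the starting LBA and the sector count are 32-bit fields, no partition described this way can start at or extend meaningfully past the 2^32-sector mark.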

As early as the late 1990s, system implementers recognized the need to enable addressing beyond the 2.2TB limit (among other requirements). A group of companies collaborated to develop a scalable partitioning scheme called the GUID Partition Table (GPT), as part of the Unified Extensible Firmware Interface (UEFI) specification. GPT allows for up to 64 bits of information to store the number that represents the maximum size of a disk, which with 512-byte sectors allows for a theoretical maximum of 9.4 zettabytes (1 ZB = 1,000,000,000,000,000,000,000 bytes).
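The MBR and GPT limits quoted above both follow from the same arithmetic: the number of addressable sectors times the sector size. Here is a small Python sketch (the helper name is just for illustration):

```python
def max_addressable_bytes(lba_bits, sector_size=512):
    """Largest capacity addressable with lba_bits-wide sector numbers."""
    return (2 ** lba_bits) * sector_size


mbr_limit = max_addressable_bytes(32)
gpt_limit = max_addressable_bytes(64)

print(mbr_limit)                      # 2199023255552
print(f"{mbr_limit / 1e12:.1f} TB")   # 2.2 TB
print(gpt_limit)                      # 9444732965739290427392
print(f"{gpt_limit / 1e21:.1f} ZB")   # 9.4 ZB
```

Both figures assume 512-byte sectors; a larger sector size raises each limit proportionally.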

Beginning with Windows Vista 64-bit, Windows has supported the ability to boot from a GPT partitioned hard disk drive with one key requirement – the system firmware must be UEFI. We've already talked about UEFI, so you know it can be enabled as a new feature of Windows 8 PCs. This leads us to the topic of firmware.

Firmware implementation in the PC – BIOS or UEFI

PC vendors include firmware that is responsible for basic hardware initialization (among other things) before control is handed over to the operating system (Windows). The venerable BIOS (Basic Input Output System) firmware implementations have been around since the PC was invented, circa 1980. Given the very significant evolution in PCs over the decades, the UEFI specification was developed as a replacement for BIOS, and implementations have existed since the late 1990s. UEFI was designed from the ground up to work with very large capacity drives by utilizing the GUID partition table, or GPT. Some BIOS implementations have attempted to prolong their own relevance and utility by using workarounds for large capacity drives (e.g. a hybrid MBR-GPT partitioning scheme), but these mechanisms can be quite fragile, and can place data at risk. Therefore, Windows has consistently required modern UEFI firmware to be used in conjunction with the GPT scheme for boot disks.

Beginning with Windows 8, multiple new capabilities within Windows will necessitate UEFI. The combination of UEFI firmware + GPT partitioning + LBA allows Windows to fully address very large capacity disks with ease.

Our partners are working hard to deliver Windows 8 based systems that use UEFI to help enable these innovative Windows 8 features and scenarios (e.g. Secure Boot, Encrypted Drive, and Fast Start-up). You can expect that when Windows 8 is released, new systems will support installing Windows 8 to, and booting from, a 3TB or bigger disk. Here’s a preview:

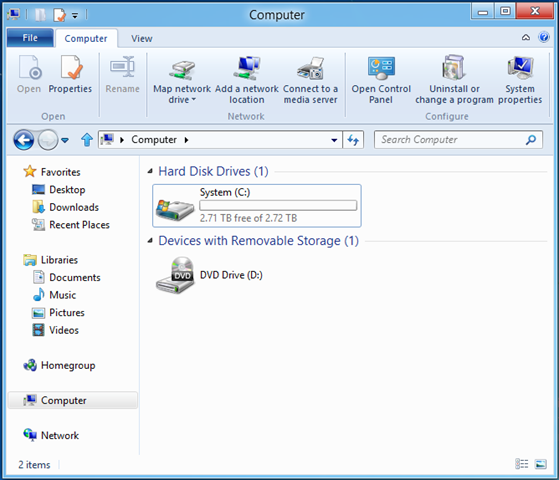

Windows 8 booted from a 3 TB SATA drive with a UEFI system

4KB (large) sector sizes

All hard disk drives include some form of built-in error correction information and logic – this enables hard disk drive vendors to automatically deal with the Signal-to-Noise Ratio (SNR) when reading from the disk platters. As disk capacity increases, bits on the disk get packed closer and closer together; and as they do, the SNR of reading from the disk decreases. To compensate for decreasing SNR, individual sectors on the disk need to store more Error Correction Codes (ECC) to help correct errors in reading the sector. Modern disks are now at the point where the current method of storing ECCs is no longer an efficient use of space – that is, a lot of the space in the current 512-byte sector is being used to store ECC information instead of being available for you to store your data. This, among other things, has led to the introduction of larger sector sizes.

Larger sector sizes – “Advanced Format” media

With a larger sector size, a different scheme can be used to encode the ECC; this is more efficient at correcting for errors, and uses less space overall. This efficiency helps to enable even larger capacities for the future. Hard disk manufacturers agreed to use a sector size of 4KB, which they call “Advanced Format (AF),” and they introduced the first AF drive to the market in late 2009. Since then, hard disk manufacturers have rapidly transitioned their product lines to AF media, with the expectation that all future storage devices will use this format.

Read-Modify-Write

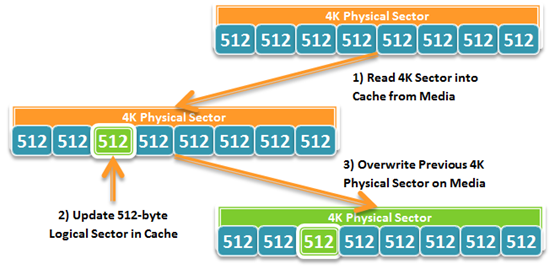

With an AF disk, the layout of data on the media is physically arranged in 4KB blocks. Updates to the media can only occur at that granularity, and so, to enable logical block addressing in smaller units, the disk needs to do some special work. Writes done in units of the physical sector size do not need this special work, so you can think of the physical sector size as the unit of atomicity for the media.

As illustrated above, a 4KB physical sector can be logically addressed with 512-byte logical sectors. In order to write to a single logical sector, the disk cannot simply move the disk head over that section of the physical sector and start writing. Instead, it needs to read the entire 4KB physical sector into a cache, modify the 512-byte logical sector in the cache, and then write the entire 4KB physical sector back to the media (replacing the old block). This is called Read-Modify-Write.

Disks with this emulation layer to support unaligned writes are called 4K with 512-byte emulation, or “512e” for short. Disks without this emulation layer are called “4K Native.”
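The Read-Modify-Write sequence can be sketched with a toy model in Python. This is an illustration of the idea only, not how any drive's firmware is actually written; the class and method names are invented for the example.

```python
PHYSICAL = 4096  # bytes per physical sector
LOGICAL = 512    # bytes per logical sector


class Toy512eDisk:
    """Toy model of a 512e disk: 4KB physical sectors, 512-byte logical sectors."""

    def __init__(self, physical_sectors):
        self.media = bytearray(physical_sectors * PHYSICAL)
        self.physical_reads = 0
        self.physical_writes = 0

    def _read_physical(self, index):
        self.physical_reads += 1
        start = index * PHYSICAL
        return bytearray(self.media[start:start + PHYSICAL])

    def _write_physical(self, index, block):
        self.physical_writes += 1
        start = index * PHYSICAL
        self.media[start:start + PHYSICAL] = block

    def write_logical(self, lba, data):
        """Write one 512-byte logical sector using Read-Modify-Write."""
        if len(data) != LOGICAL:
            raise ValueError("data must be exactly one logical sector")
        index, slot = divmod(lba * LOGICAL, PHYSICAL)
        block = self._read_physical(index)   # read the whole 4KB sector
        block[slot:slot + LOGICAL] = data    # modify 512 bytes in the cache
        self._write_physical(index, block)   # write the whole 4KB sector back


disk = Toy512eDisk(physical_sectors=2)
disk.write_logical(9, b"\xab" * LOGICAL)
print(disk.physical_reads, disk.physical_writes)  # 1 1
```

A single 512-byte write costs one full 4KB read plus one full 4KB write, which is the overhead that aligned, physical-sector-sized writes avoid.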

As a result of Read-Modify-Write, performance can potentially suffer in applications and workloads that issue large numbers of unaligned writes. To provide support for this type of media, Windows needs to ensure that applications can retrieve the physical sector size of the device, and applications (both Windows applications and 3rd party applications) need to ensure that they align I/O to the reported physical sector size.
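As a rough sketch of what "aligning I/O" means in practice, the Python helpers below check whether a write is aligned and count how many physical sectors it only partly covers (each of which would need a Read-Modify-Write). The function names are hypothetical, and the 4096-byte default stands in for whatever physical sector size the device actually reports.

```python
def is_aligned(offset, length, physical_sector_size=4096):
    """True if the I/O starts and ends on physical sector boundaries."""
    return offset % physical_sector_size == 0 and length % physical_sector_size == 0


def align_down(value, alignment):
    """Round value down to the nearest multiple of alignment."""
    return value - value % alignment


def align_up(value, alignment):
    """Round value up to the nearest multiple of alignment."""
    return -(-value // alignment) * alignment


def partial_sectors_touched(offset, length, physical_sector_size=4096):
    """Count physical sectors that the write covers only partially."""
    if length == 0:
        return 0
    end = offset + length
    head_partial = offset % physical_sector_size != 0
    tail_partial = end % physical_sector_size != 0
    first = offset // physical_sector_size
    last = (end - 1) // physical_sector_size
    if first == last:
        return 1 if (head_partial or tail_partial) else 0
    return int(head_partial) + int(tail_partial)


print(is_aligned(0, 4096))                  # True
print(is_aligned(512, 512))                 # False
print(partial_sectors_touched(2048, 4096))  # 2
print(align_up(5000, 4096))                 # 8192
```

The third call shows why alignment matters even for writes that are a full 4KB long: starting in the middle of a physical sector means two sectors each get a partial update.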

Designing for large sector disks

Learning from some issues identified with prior versions of Windows, AF disks have been a key design point for new features and technologies in Windows 8; as a result, Windows 8 is the first OS with full support for both types of AF disks – 512e and 4K Native.

To make this happen, we identified which features and technology areas were most vulnerable to the potential issues described above, and reached out to the teams developing those features to provide guidance and help them test hardware for these scenarios.

Issues we addressed included the following:

- Introducing new APIs and enhancing existing ones to better enable applications to query for the physical sector size of a disk

- Enhancing large-sector awareness within the NTFS file system, including ensuring appropriate sector padding when performing extending writes (writing to the end of the file)

- Incorporating large-sector awareness in the new VHDx file format used by Hyper-V to fully support both types of AF disks

- Enhancing the Windows boot code to work correctly when booting from 4K native disks

This is just a small cross-section of the work done to enable across-the-board support for both types of AF disks in Windows 8. We are also working with other product teams within Microsoft and across the industry (e.g. database application developers) to ensure efficient and correct behavior with AF disks.

In closing

NTFS in Windows 8 fully leverages capabilities delivered by our industry partners to efficiently support very large capacity disks. You can rest assured that your large-capacity storage needs will be well handled beginning with Windows 8 and NTFS!

/Bryan