Directly store streaming data into Azure Data Lake with Azure Event Hubs Capture Provider

Azure Data Lake (ADL) customers use Azure Event Hubs extensively for ingesting streaming data - but up to now it was difficult for them to store raw and unprocessed events for an extended period for later batch processing. With the new Capture capability in Azure Event Hubs, you can easily store raw events into Azure Data Lake Store (ADLS) and run rich batch analysis on this stored event data, extracting more value than ever before.

Customers wanting to batch process event data have been using a variety of their own, often costly and resource-heavy, mechanisms to ingest data from Event Hubs into Data Lake. Azure Event Hubs Capture Provider makes it easy to ingest raw data from those real-time sources into the Data Lake. With this addition, you can now easily manage the ingestion process for the most critical data sources for your Data Lake - real-time, batch, relational. Once you have ingested the data you need from different sources into ADLS, you can focus on running a variety of analytics jobs across all your data to get valuable insights. Leveraging the security capabilities provided by ADLS, you can set appropriate permissions on the raw event data just as you do for the other types of ingested data, thereby maintaining the integrity of your single source of truth.

Getting more value out of your near real-time data

The new Azure Data Lake Store Capture Provider in combination with ADL enhances existing customer scenarios and enables new ones. Customers can draw deeper insights from the event data streamed into and stored in their Data Lakes, for instance by:

- enriching raw events with newer or different information and re-analyzing the data

- re-running event-processing algorithms with advancements on older data

- detecting events with errors

- simply processing the event data elastically in batches after ingestion rather than inline in real time

Get started today

Try Azure Event Hubs Capture for Azure Data Lake Store by following the Getting Started guide here and see how you can reap the benefits that other customers have already seen in their solutions.

Here is how it works

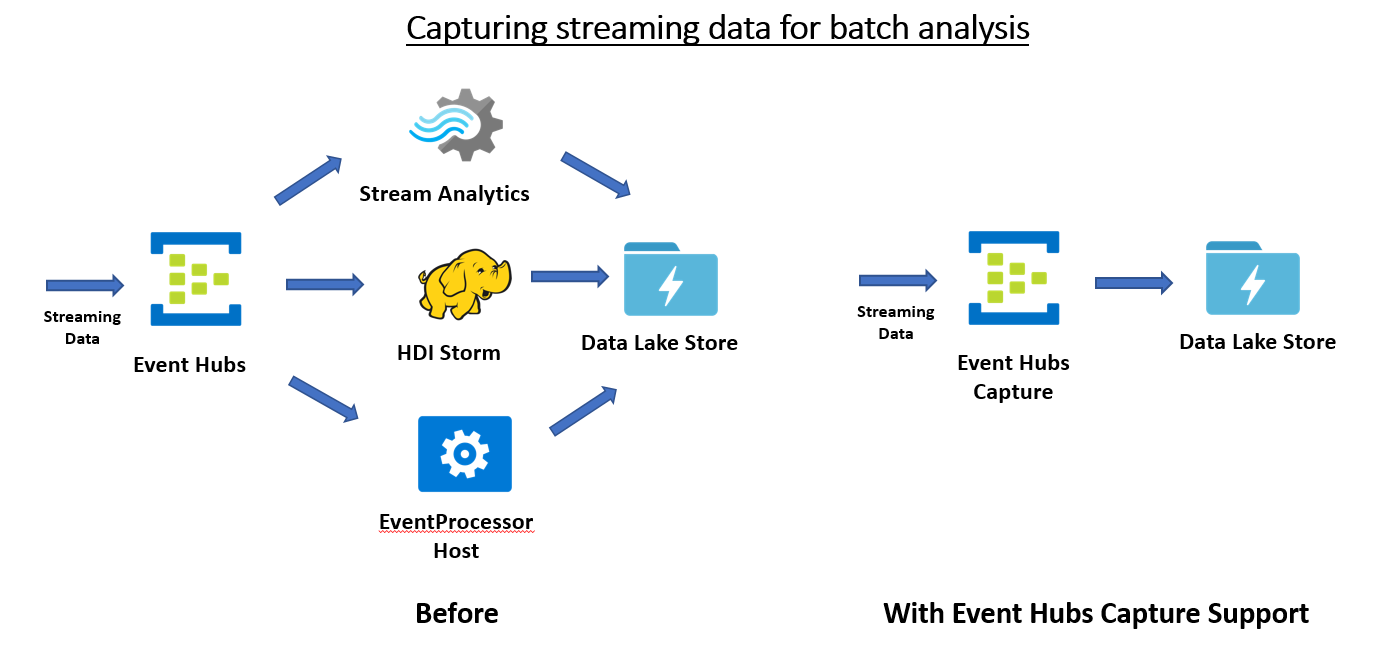

Before Event Hubs Capture

If you wanted to retain your event data, you had to use your own mechanism to copy that data out of Event Hubs to ADLS. Here are some ways customers did that:

- Build, deploy, and manage custom Event Hubs consumers in Azure VMs; this provided the most control.

- Use Storm on Azure HDInsight to read event data from Event Hubs and write it out to ADLS; this provided a lesser level of control.

- Use Azure Stream Analytics to read from Event Hubs and write out to an ADLS sink; this provided a fully managed solution.

All these solutions worked. However, if your primary scenario did not involve analyzing the streaming data in near real time, you incurred tradeoffs such as compute costs and the overhead of managing resources.

With the new Event Hubs Capture Provider capability

Event Hubs Capture enables you to automatically deliver streaming data in Event Hubs into the ADLS account of your choice with the added flexibility of specifying a time or size interval when this data is delivered. This capability is fully-managed and integrated with ADLS, so streaming data from Event Hubs is available in ADLS in a minimal amount of time. More details about Azure Event Hubs Capture and how it works are available here.
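To make the "time or size interval" idea concrete, here is a minimal Python sketch of a window that closes on whichever threshold is reached first. It is a toy model for illustration only, not the service's implementation: the class name `CaptureWindow` and the parameters `max_seconds` and `max_bytes` are hypothetical, and unlike a managed service this sketch only checks the thresholds when an event arrives.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CaptureWindow:
    """Toy capture window: closes on time OR size, whichever comes first."""

    max_seconds: float  # hypothetical time interval
    max_bytes: int      # hypothetical size interval
    opened_at: Optional[float] = None
    buffered: List[bytes] = field(default_factory=list)
    buffered_bytes: int = 0

    def add(self, event: bytes, now: float) -> Optional[List[bytes]]:
        """Buffer one event; return the batch to write if a threshold was hit."""
        if self.opened_at is None:
            # The window opens when its first event arrives.
            self.opened_at = now
        self.buffered.append(event)
        self.buffered_bytes += len(event)

        time_up = now - self.opened_at >= self.max_seconds
        size_up = self.buffered_bytes >= self.max_bytes
        if time_up or size_up:
            return self._flush()
        return None

    def _flush(self) -> List[bytes]:
        """Hand back the buffered events and reset the window."""
        batch = self.buffered
        self.buffered = []
        self.buffered_bytes = 0
        self.opened_at = None
        return batch


# Usage: a 300-second / 10-byte window (values chosen only for the demo).
window = CaptureWindow(max_seconds=300, max_bytes=10)
first = window.add(b"abcd", now=0.0)    # 4 bytes buffered, nothing written
second = window.add(b"efgh", now=1.0)   # 8 bytes buffered, nothing written
by_size = window.add(b"ij", now=2.0)    # 10 bytes reached, batch is returned
third = window.add(b"x", now=10.0)      # new window opens at t=10
by_time = window.add(b"y", now=310.0)   # 300 seconds elapsed, batch is returned
```

In this sketch a small size threshold produces many small batches, while a long time threshold delays delivery of low-volume streams; choosing both lets you bound each.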

When using Event Hubs Capture, you configure how often, and in what quantity, events are written to the store. Data is stored as Avro files. Once this data is available in ADLS, you can process it in a variety of analytics engines: Azure Data Lake Analytics (ADLA), Azure HDInsight (HDI), or third-party offerings. The advantage of using ADLA in this scenario is that you run the ADLA job only when you need to process the event data, thereby optimizing your analytics costs. You can process the raw data once the right amount has accumulated, or based on other parameters such as time of day. Event Hubs Capture helps you separate event data capture from the analysis portion and optimize your overall costs.
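The decision of when to kick off a batch job can be sketched as a small predicate. This is only an illustration of the "enough data or right time of day" idea: the function name `should_run_batch` and all of its parameters are hypothetical, and how you measure pending data or submit the job depends on your own tooling.

```python
from datetime import time


def should_run_batch(
    pending_bytes: int,
    now: time,
    min_bytes: int,
    window_start: time,
    window_end: time,
) -> bool:
    """Return True when enough captured data is pending OR the current
    time of day falls inside the preferred processing window."""
    enough_data = pending_bytes >= min_bytes

    if window_start <= window_end:
        # Ordinary window, e.g. 01:00 to 03:00.
        in_window = window_start <= now < window_end
    else:
        # Window that crosses midnight, e.g. 23:00 to 02:00.
        in_window = now >= window_start or now < window_end

    return enough_data or in_window


# Usage: run when at least 1,000 bytes are pending or between 01:00 and 03:00.
run_now = should_run_batch(
    pending_bytes=2_000,
    now=time(12, 0),
    min_bytes=1_000,
    window_start=time(1, 0),
    window_end=time(3, 0),
)
```

A scheduler could evaluate a check like this periodically and submit the analytics job only when it returns true, so compute is paid for only when there is work worth doing.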

For more information

See these resources: Azure Event Hubs Capture and the Getting Started guide here.