Setting Amazon S3 Storage as a data source (s3n://) in the Hadoop on Azure (hadooponazure.com) portal

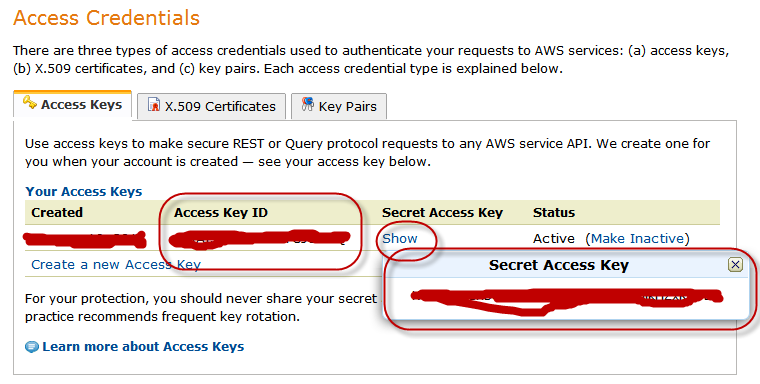

To set up your Amazon S3 account with an Apache Hadoop cluster on Windows Azure, all you need are your AWS security credentials (Access Key ID and Secret Access Key), which look like the following:

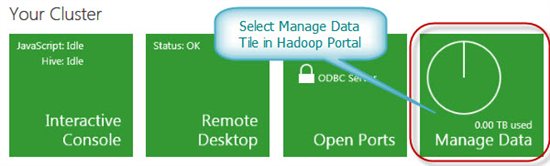

After you have finished creating a Hadoop cluster in Windows Azure, log in to your Hadoop portal and select the “Manage Data” tile as below:

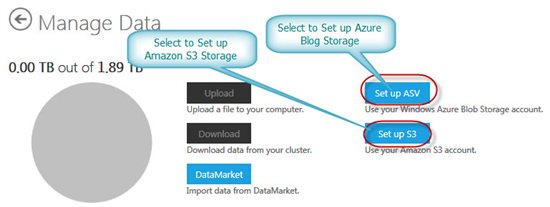

On the next screen you can select:

- “Set up ASV” to set your Windows Azure Blob Storage as data source

- “Set up S3” to set your Amazon S3 Storage as data source

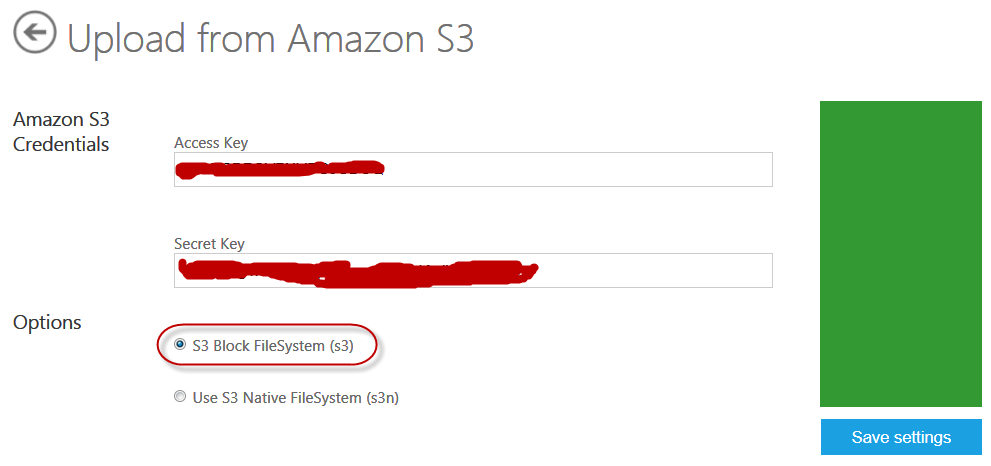

When you select “Set up S3”, the next screen asks you to enter your Amazon S3 credentials as below:

After you select “Save Settings”, if your Amazon S3 Storage credentials are correct, you will get the following message:

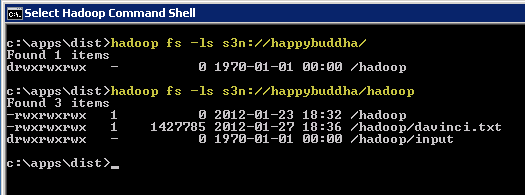

Now your Amazon S3 Storage is set up for use with the Interactive JavaScript shell, and you can also remote into your cluster and access it from there. You can test it directly from the Hadoop Command shell in your cluster as below:
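If you want to repeat this check from a script, the following is a minimal Python sketch that only wraps the standard `hadoop fs -ls` command against an `s3n://` URI. It assumes Python 3.7+ is available on the cluster node and that `subprocess` can start the Hadoop launcher; the helper names `build_ls_command` and `list_s3n` and the bucket name `my-bucket` are hypothetical. On a Windows node the launcher is usually a batch script, so you may need to pass its full path through the `hadoop` parameter (an assumption about your installation).

```python
import subprocess
from typing import List


def build_ls_command(bucket: str, path: str = "/", hadoop: str = "hadoop") -> List[str]:
    """Build a `hadoop fs -ls` command line for an s3n:// location (hypothetical helper)."""
    if not bucket or "/" in bucket:
        raise ValueError("bucket must be a bare bucket name such as 'my-bucket'")
    # Normalize the path so exactly one slash separates the bucket and the path.
    path = "/" + path.lstrip("/")
    return [hadoop, "fs", "-ls", f"s3n://{bucket}{path}"]


def list_s3n(bucket: str, path: str = "/", hadoop: str = "hadoop") -> str:
    """Run the listing on a cluster node and return the command's stdout."""
    # check=True raises subprocess.CalledProcessError if the command exits
    # with a non-zero status, i.e. if the listing fails.
    result = subprocess.run(
        build_ls_command(bucket, path, hadoop),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

For example, `print(list_s3n("my-bucket", "data"))` runs `hadoop fs -ls s3n://my-bucket/data` and prints the listing. Keeping the command construction in its own function makes the URI formatting easy to check without a cluster.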

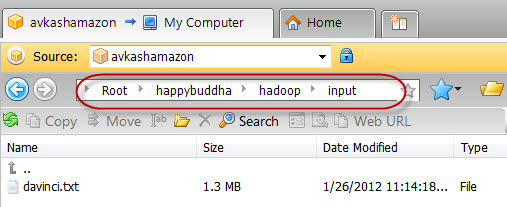

To verify, you can also browse your Amazon S3 storage in any S3 explorer tool as below:

Note: If you want to know how Amazon S3 Storage was configured with the Hadoop cluster, it was done by adding your Amazon S3 Storage credentials to core-site.xml. Open C:\Apps\Dist\conf\core-site.xml and you will see the following parameters related to Amazon S3 Storage access from the Hadoop cluster:

```xml
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!-- Put site-specific property overrides in this file. -->
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/hdfs/tmp</value>
    <description>A base for other temporary directories.</description>
  </property>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://10.26.104.57:9000</value>
  </property>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <!-- Amazon S3 (s3n://) credentials -->
  <property>
    <name>fs.s3n.awsAccessKeyId</name>
    <value>YOUR_S3_ACCESS_KEY</value>
  </property>
  <property>
    <name>fs.s3n.awsSecretAccessKey</name>
    <value>YOUR_S3_SECRET_KEY</value>
  </property>
</configuration>
```
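To confirm that these settings were written, a small Python sketch like the following can read core-site.xml and report the state of the two `fs.s3n.*` properties without printing the keys themselves. It uses only the standard library; the function names are hypothetical. The whitespace check is a cautious assumption: a value such as `<value> YOUR_S3_SECRET_KEY </value>` may not be trimmed by some Hadoop versions, so padded values are flagged.

```python
import xml.etree.ElementTree as ET
from typing import Dict

# The two property names used for s3n:// credentials in core-site.xml.
S3N_KEYS = ("fs.s3n.awsAccessKeyId", "fs.s3n.awsSecretAccessKey")


def read_properties(xml_text: str) -> Dict[str, str]:
    """Return every <name>/<value> pair from a Hadoop *-site.xml document."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.findall("property"):
        name = prop.findtext("name")
        if name is not None:
            # Keep the raw value (including whitespace) so padding stays visible.
            props[name.strip()] = prop.findtext("value", default="")
    return props


def check_s3n(xml_text: str) -> Dict[str, str]:
    """Report each s3n credential property as 'missing', padded, or 'ok'."""
    props = read_properties(xml_text)
    report = {}
    for key in S3N_KEYS:
        value = props.get(key)
        if value is None or not value.strip():
            report[key] = "missing"
        elif value != value.strip():
            report[key] = "has leading/trailing whitespace"
        else:
            report[key] = "ok"
    return report


def check_s3n_file(path: str) -> Dict[str, str]:
    """Read a core-site.xml file from disk and check its s3n properties."""
    with open(path, encoding="utf-8") as f:
        return check_s3n(f.read())
```

On the cluster node you could run `print(check_s3n_file(r"C:\Apps\Dist\conf\core-site.xml"))`; the report contains only status strings, so it is safe to paste into a support request.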

Resources:

- Apache Hadoop on Windows Azure TechNet Wiki

Keywords: Apache Hadoop, Windows Azure, BigData, Cloud, MapReduce