Multi-touch in WPF 4 Part 3 – Manipulation Events

One of the most common uses of multi-touch input is panning, zooming, and rotating content. In WPF 4, the easiest way to implement these gestures is to use the manipulation events on UIElement. Manipulation events also support simple inertial physics for a more fluid user experience.

You may well wonder why we call these ‘manipulation’ events rather than gestures, like Win32’s WM_GESTURE messages. With manipulation events, we interpret the multi-touch input as direct manipulation of the on-screen elements, as if you were using your fingers to move, rotate, and stretch physical objects. Manipulation events report translation, rotation, and scaling simultaneously, whereas WM_GESTURE gives you only one of these transformation components at a time. Thus, with WM_GESTURE, you cannot pan and zoom at the same time.

There are typically two ways of using manipulation events: 1) to pan, zoom, and rotate content such as maps and images, and 2) to manipulate on-screen elements, such as organizing a deck of cards or moving puzzle pieces. Depending on your scenario, you decide which elements to enable manipulation on and which element to use as the manipulation container.

Manipulation Events

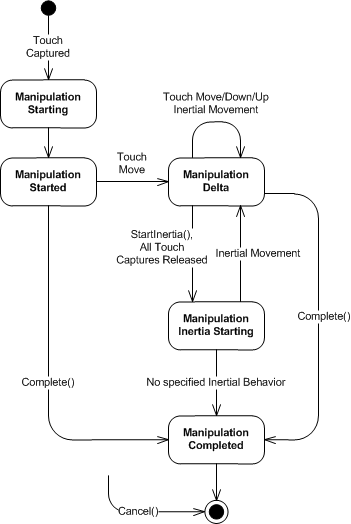

The manipulation events on a UIElement form a state machine for the interaction sequence.

Getting Started

There are three simple concepts that you need to understand to get started with manipulations.

First, you need to set the IsManipulationEnabled boolean property to true on the elements that are to be manipulated. Setting this property on a UIElement starts hit-testing of touch events on the element and raises manipulation events when you drag the element with one or more touch contacts.

Second, you need to handle the ManipulationStarting event and specify the ManipulationContainer for the interaction. The ManipulationStarting event is a routed event that is raised from a UIElement with IsManipulationEnabled set to true. It is raised after the first touch down on the UIElement and before any other manipulation events, and it is used to configure the manipulation processing logic.

The ManipulationContainer is the element that acts as the frame of reference for the manipulation transformation calculations. You can think of the manipulation container as the physical surface that the manipulated elements move against. The manipulation container cannot be changed during the interaction sequence between ManipulationStarting and ManipulationCompleted.

Last, you need to handle at least the ManipulationDelta event to respond to the transformations calculated from the finger movements. The ManipulationDelta event reports pan, zoom, and rotate as separate components of the transformation. The transformation is reported both as a delta since the last event (DeltaManipulation) and as a total since the start of the manipulation (CumulativeManipulation).
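To make the second and third concepts a little more concrete, here is a minimal sketch of a pair of handlers. The class name `ManipulationSketch`, the handler names, and the `Describe` helper are hypothetical and exist only for illustration. The sketch assumes the handlers are attached to the element that should act as the container, restricts the manipulation to panning and zooming through the `Mode` property, and writes both the per-event delta and the running total to the debug output.

```csharp
using System.Diagnostics;
using System.Globalization;
using System.Windows;
using System.Windows.Input;

public static class ManipulationSketch
{
    // Hypothetical handler for ManipulationStarting.
    public static void OnManipulationStarting(object sender,
        ManipulationStartingEventArgs e)
    {
        // Assumption: the element hosting this handler is the container.
        e.ManipulationContainer = (IInputElement)sender;

        // Ask for translation and scaling only; Rotate is left out.
        e.Mode = ManipulationModes.Translate | ManipulationModes.Scale;
        e.Handled = true;
    }

    // Hypothetical handler for ManipulationDelta.
    public static void OnManipulationDelta(object sender,
        ManipulationDeltaEventArgs e)
    {
        // Change since the previous ManipulationDelta event.
        Debug.WriteLine("delta: " + Describe(e.DeltaManipulation));

        // Change since the manipulation started.
        Debug.WriteLine("total: " + Describe(e.CumulativeManipulation));
        e.Handled = true;
    }

    // Formats the components of a ManipulationDelta; Rotation is in degrees.
    public static string Describe(ManipulationDelta d)
    {
        return string.Format(CultureInfo.InvariantCulture,
            "move=({0:F1}, {1:F1}) rotate={2:F1}deg scale=({3:F2}, {4:F2})",
            d.Translation.X, d.Translation.Y, d.Rotation,
            d.Scale.X, d.Scale.Y);
    }
}
```

The delta values are the ones to use when you update a transform incrementally on every event. The cumulative values are handy when you would rather recompute the result from the state captured at the start of the manipulation.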

Basic example

In this example, we enable multi-touch manipulation of a red rectangle element on a canvas. You can move the rectangle with 1 finger and rotate and zoom with 2 or more fingers. The following XAML snippet shows hooking the manipulation events and enabling manipulation on the rectangle.

    <Canvas x:Name="_canvas"
            ManipulationStarting="_canvas_ManipulationStarting"
            ManipulationDelta="_canvas_ManipulationDelta">
        <Rectangle IsManipulationEnabled="True"
                   Fill="Red" Width="100" Height="100"/>
    </Canvas>

We will apply a render transformation to the rectangle. Because that transformation is relative to the canvas, we use the canvas as the manipulation container.

    private void _canvas_ManipulationStarting(object sender,
        ManipulationStartingEventArgs e)
    {
        e.ManipulationContainer = _canvas;
        e.Handled = true;
    }

We handle the ManipulationDelta event to apply the render transformation to the rectangle. First we retrieve the rectangle as the original source of the manipulation event. Then we extract the current render transformation as a MatrixTransform.

To calculate the effects of the manipulation, we apply the scale, rotation and translation transformation components from the event argument to the current render transform. In matrix calculations, the order of transformation (multiplication) is important. We need to apply scaling and rotation first centered at the manipulation origin before moving the rectangle based on the translation component.

    private void _canvas_ManipulationDelta(object sender,
        ManipulationDeltaEventArgs e)
    {
        // The element being touched is the original source of the event.
        var element = e.OriginalSource as UIElement;
        if (element == null)
            return;

        // Start from the current render transform, or identity if none is set.
        var transformation = element.RenderTransform as MatrixTransform;
        var matrix = transformation == null ? Matrix.Identity
                                            : transformation.Matrix;

        // Scale and rotate about the manipulation origin first...
        matrix.ScaleAt(e.DeltaManipulation.Scale.X,
                       e.DeltaManipulation.Scale.Y,
                       e.ManipulationOrigin.X,
                       e.ManipulationOrigin.Y);
        matrix.RotateAt(e.DeltaManipulation.Rotation,
                        e.ManipulationOrigin.X,
                        e.ManipulationOrigin.Y);

        // ...then move the element by the translation component.
        matrix.Translate(e.DeltaManipulation.Translation.X,
                         e.DeltaManipulation.Translation.Y);

        element.RenderTransform = new MatrixTransform(matrix);
        e.Handled = true;
    }
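The ordering used in the handler can also be written out as a matrix product. This is a sketch under two assumptions: WPF's row-vector convention, in which a point p is transformed as p' = pM, and the fact that ScaleAt, RotateAt, and Translate append to the existing matrix. The symbols are my own shorthand: M is the current render matrix, o is ManipulationOrigin, (s_x, s_y) is DeltaManipulation.Scale, θ is DeltaManipulation.Rotation, and (t_x, t_y) is DeltaManipulation.Translation.

```latex
\[
M' = M \; S_o(s_x, s_y) \; R_o(\theta) \; T(t_x, t_y),
\qquad p' = p \, M'
\]
\[
S_o(s_x, s_y) = T(-o)\, S(s_x, s_y)\, T(o),
\qquad
R_o(\theta) = T(-o)\, R(\theta)\, T(o)
\]
```

Read left to right, a point is first placed by the existing transform, then scaled and rotated about the manipulation origin, and only then moved by the translation. If the translation were appended before the scale and rotation, the point would already be displaced when it is scaled and rotated about o, so the displacement itself would be scaled and rotated and the element would drift away from the fingers.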

That’s it!

In the next parts of the series, I’ll talk about inertial movement and single finger rotation with manipulation pivot.