Tracking changes to tables in your data warehouse using snapshot-based versioning

Today I will explain a way to track historic changes in a data warehouse and share a little program I wrote that will help you do this in minutes. It’s based on a method I’ve use many times and which is generic enough to cover many different scenarios. I’ll use an example to clarify how this works. In this example, I have a data warehouse database called MyDW, and this database contains a table called ImportTable which I’ll assume to be a table which is imported from another database by the data warehouse’s ETL process. For the purpose of this example I’ve kept thus table very simple:

CREATE TABLE [dbo].[ImportTable](

[ID] [int] NULL,

[Name] [nvarchar](100) NULL,

[Country] [nvarchar](100) NULL

) ON [PRIMARY]

It’s important to understand that the ETL process periodically refreshes this table by fetching fresh data from an external database. Records might be added, removed or changed. The ETL process might simply update it by truncating the existing table and reloading it entirely. The ID field is the primary key which is imported from the external database (for this example we don’t add our own ID in the data warehouse).

I called this method of tracking history “snapshot-based versioning”. It does not rely on database triggers or on CDC. While those features might be useful for other scenarios, I’ve found them too limiting for data warehouse scenarios. For example, these features don’t work well if your ETL process refreshes the data by truncating the existing table and then reloading it.

Snapshot-based versioning instead relies on TSQL’s MERGE statement. The MERGE statement is supported in SQL Server 2008 or higher. A limitation is that you cannot track changes to fields of type image, ntext and text. This is usually not a blocker because in real-life scenarios most tables have a “modified” field that is updated whenever a change to the record is changed.

The idea is to have a second table called H_ImportTable which has the same fields as the original ImportTable. This second table, which I’ll refer to as the “history table”, has a versioned copy of the data in H_ImportTable. In other words, it works like a log which appends a row for any change to ImportTable. Obviously, if the table in the external database has changed multiple times between two ETL runs, this will appear only as one change in H_ImportTable, hence the name “snapshot-based versioning”.

You can create H_ImportTable as follows:

CREATE TABLE [dbo].[H_ImportTable] (

[_ACTION] [char](1) NOT NULL,

[_KEY] [int] IDENTITY(1,1) NOT NULL,

[_EFF_FROM] [datetime] NOT NULL,

[_EFF_TO] [datetime] NOT NULL,

[ID] [int] NULL,

[Name] [nvarchar](100) NULL,

[Country] [nvarchar](100) NULL

)

As you see there are four additional fields in this table:

- _ACTION indicates whether a row has been added (‘A’), changed (‘C’), deleted (‘D’) or undeleted (‘U’).

- _KEY is a unique row identifier. This field is only useful in specific scenarios

- _EFF_FROM indicates the date/time from which this row is effective

- _EFF_TO indicates the date/time until which this row is active. In case the row is effective now, this field is set to 9999-12-31.

After refreshing ImportTable, the ETL process should update H_ImportTable using the following SQL statement:

INSERT INTO [dbo].[H_ImportTable]

SELECT _ACTION, SYSDATETIME() AS _EFF_FROM, '9999-12-31' AS _EFF_TO,[ID],[Name],[Country]

FROM

(

MERGE [dbo].[H_ImportTable] AS T

USING [dbo].[ImportTable] AS S

ON (S.[ID]=T.[ID] AND T._EFF_TO='9999-12-31')

WHEN NOT MATCHED BY TARGET THEN

INSERT(_ACTION,_EFF_FROM,_EFF_TO,[ID],[Name],[Country])

VALUES('A',SYSDATETIME(),'9999-12-31',[S].[ID],[S].[Name],[S].[Country])

WHEN MATCHED AND T._EFF_TO='9999-12-31' AND (T._ACTION='D' OR ([S].[Name]<>[T].[Name] OR [S].[Country]<>[T].[Country])) THEN

UPDATE SET T._EFF_TO=SYSDATETIME()

WHEN NOT MATCHED BY SOURCE AND T._EFF_TO='9999-12-31' AND T._ACTION<>'D' THEN

UPDATE SET T._EFF_TO=SYSDATETIME()

OUTPUT $Action Action_Out

,CASE WHEN S.[ID] IS NULL THEN 'D' WHEN Inserted._ACTION='D' THEN 'U' ELSE 'C' END AS _ACTION

,ISNULL(S.[ID],Deleted.[ID]) AS ID,[S].[Name],[S].[Country]

) AS MERGE_OUT

WHERE MERGE_OUT.Action_Out = 'UPDATE'

The statement above does all of the heavy lifting to update the history table H_ImportTable. Because MERGE is just a single SQL statement, it is very efficient compared to using multiple SELECT, INSERT and UPDATE statements to accomplish the same. The statement makes the assumption that the ID field is unique in the source table and you’re advised to create a unique index on that field in ImportTable. Obviously, in the history table H_ImportTable this field will no longer be unique.

For performance you’ll want to create the following indexes on the history table H_ImprotTable: ID, _ACTION, _EFF_FROM and _EFF_TO.

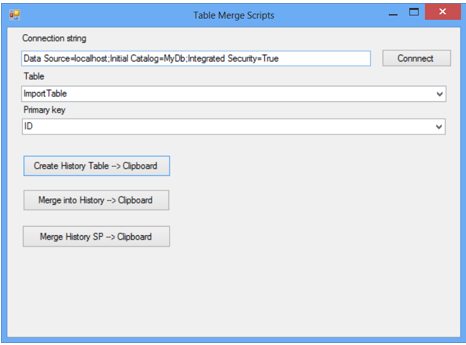

You can adjust this SQL code to work with the tables in your data warehouse. To do so, adjust all the parts that are underlined. If you need to do this for many tables, making these changes manually is laborious and error-prone, This is why I wrote the program “Table Merge Scripts” which you can download here. This program generates the SQL code based on an existing table in the database. You can see the UI below:

First, edit the connection string by entering the correct server name and database name. Then click Connect.

Next select the table and the field that is the primary key.

The first button in the bottom half of the screen copies the SQL code to create the history table to the clipboard. The new table will have the same name as the selected source table, prefixed with “H_”. The code also creates indexes for _ACTION, _EFF_FROM, _EFF_TO, _KEY and the field which you designated as the primary key in the source table.

The next button copies the SQL code to update the history table to the clipboard. This consists of a MERGE command similar to the example shown above.

The final button copies the same SQL code as the second table, but wrapped in a stored procedure.

Good luck!