Should you use a data warehouse with a tabular cube?

With SQL Server 2012, Microsoft introduced SSAS “tabular mode”. Tabular mode essentially is a server-hosted version of PowerPivot. In fact, it’s very easy to take an Excel workbook with a PowerPivot model and import that to SSAS as a tabular cube.

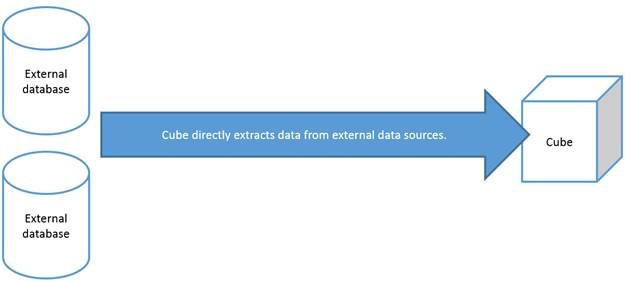

When you convert a PowerPivot model to a tabular cube, the new cube fetches data from external data sources just like the original PowerPivot model in Excel did. In other words, you don’t need to have a data warehouse database “in front” of your tabular cube. The question I’ll address below is whether it’s a good idea to have a data warehouse in combination with a tabular cube anyway.

You can build your BI solution with varying degrees of sophistication. More sophisticated is not necessarily better, and you should determine which best fits your needs. I’ll describe the three main scenarios as I see them below.

Scenario 1: No data warehouse

As I mentioned above, you can have a tabular cube without having a data warehouse database. A benefit of this approach is that the only skills you need to learn is how to deploy a PowerPivot model to a server-based tabular cube, and how to create a job that will process this cube according to a certain time schedule. Another advantage is that you can continue developing your solution in Excel, without needing to learn and install the Visual Studio-based tools.

The nice thing of a server-based tabular cube over using PowerPivot in Excel is that you no longer get gigantic Excel files, sometimes larger than 100MB. Your Excel files can have all of the PowerPivot functionality, but since the raw data is stored in the cube instead of the Excel files, your workbooks become very small, often smaller than 100KB.

You can also more easily share the Excel files with peers. As long as you grant them access, they can refresh the Excel file by clicking Refresh All in the data ribbon. Furthermore, the tabular cube enables web-based reports.

The bottom line is that there are lots of benefits of converting your PowerPivot solution to tabular cubes when you need to share or publish the data.

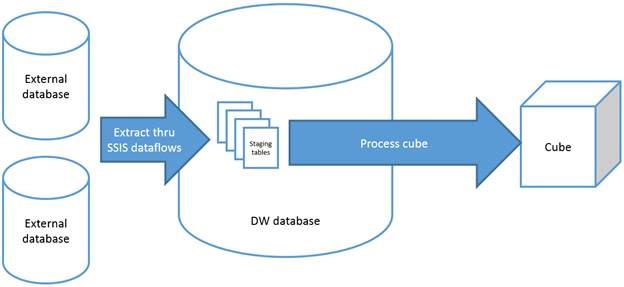

Scenario 2: Data warehouse as staging area only

The next step up the ladder of sophistication is to maintain a data warehouse database for staging data. Using a simple ETL process, presumably built using an Integration Services project in Visual Studio, the external data is retrieved from the external data sources and stored unmodified in staging tables in the data warehouse. The cube is reconfigured to pull data from the staging tables instead of the external data sources.

If you’re a business user without BI development experience building this can challenging. You’ll need to learn how to create a SQL database for the data warehouse with the required staging tables, and how to develop an ETL process that copies data from the external sources into the staging tables. This will require you to learn how to use SQL Server Management Studio and some of the BI design tools in Visual Studio.

One of the main benefits over having no data warehouse is that you’re now no longer limited to the types of external data sources supported by PowerPivot. Also, you can make your BI system more resilient whereby the cube can be processed even though some of the external data sources cannot be refreshed, for example because they are offline.

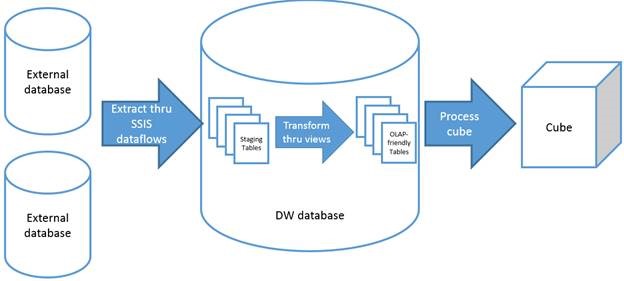

Scenario 3: Data warehouse for staging and transforming data

The most supplicated approach I’ll discuss here, the one I commonly use in my BI projects, is to use the data warehouse for both staging raw data as well as transforming that data to an OLAP-friendly data model. This is what I called the “using staging tables” approach to data warehousing in my blog post Designing an ETL process with SSIS: two approaches to extracting and transforming data. The cube gets data from the pre-transformed tables/views in the data warehouse database instead of pulling it from the staging tables as in the previous scenario. Now that you use views and stored procedures to model the data, you no longer need to do those transformations in the tabular cube by renaming and filtering columns etc.

The OLAP-friendly data model provides an extra layer of abstraction which is beneficial for many reasons. When changes are made to the data sources (and therefore also the staging tables), breaking changes can be shielded from the cube and from the reports that depend of them. Also, business users can access the same data they know from the cube using SQL queries, which can be very helpful in certain scenarios. Finally, the transformations in data warehouse can be much more powerful than what it possible in the cube. For example, you can combine multiple tables into one using the SQL UNION statement, which would be impossible to do inside the tabular cube.

Hence, this scenario enables very powerful solutions.

Which scenario to choose?

If you’re a business analyst and not a software developer, the “No Data warehouse” method will be achievable and you’ll be able to better share and publish reports.

If you are a software/BI developer, building a data warehouse will make your solutions more robust and powerful. The benefits of including transformations in the data warehouse (3rd scenario) outweighs the additional amount of work compared to the 2nd scenario, which is why I always choose include transformations in the data warehouse.