Troubleshooting Internal Load Balancer Listener Connectivity in Azure

Problem: Unable to connect to the Azure availability group listener

Creating an availability group to make your application highly available on Azure virtual machines (IaaS) is very popular. Unlike an on-premises deployment, the availability group listener requires special configuration steps in Azure.

For step-by-step instructions on configuring an Azure availability group listener in the Azure Resource Manager deployment model, see the following article:

Configure an internal load balancer for an Always On availability group in Azure

This article helps you troubleshoot a failure to connect to the availability group listener.

NOTE: This troubleshooter focuses on an availability group listener configured with an Azure Resource Manager internal load balancer, which is currently the most common type of listener deployed.

Testing the Azure availability group listener

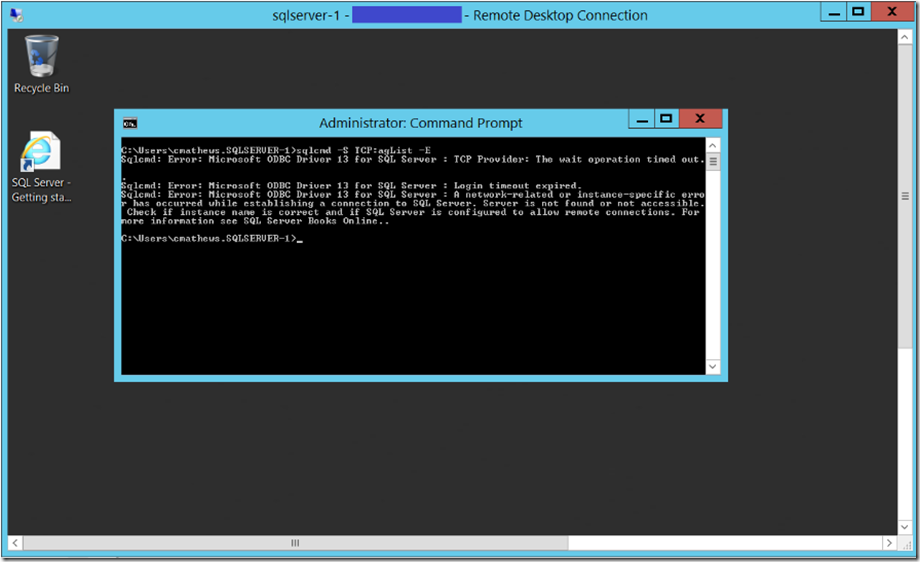

To test an Azure availability group listener, do not test listener connectivity from the primary replica. Instead, connect to the primary replica through the listener from a secondary replica. The secondary replica already has network connectivity to the primary, and it has SQL Server client tools installed that you can use to test connectivity to the listener. Use a tool such as sqlcmd for the test.
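When a sqlcmd login fails, it can help to first confirm that the TCP path to the listener is open at all. The following is a minimal sketch, assuming Python is available on the secondary replica and that the listener uses port 1433. It only checks the TCP connection; it does not authenticate to SQL Server the way sqlcmd does.

```python
import socket


def can_connect(host: str, port: int = 1433, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    This proves only that the network path to the listener is open;
    it does not log in to SQL Server.
    """
    try:
        # create_connection resolves the name and tries each returned address
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures
        return False
```

For example, `can_connect("aglistener")` run from a secondary replica, where `aglistener` is a hypothetical listener name, returns False if the listener's TCP port cannot be reached. A True result with a failing sqlcmd login points away from the network path and toward authentication or SQL Server configuration.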

Troubleshooting Failed Azure listener connectivity

Familiarizing yourself with the article that walks through creating and configuring the Azure listener will help you understand the main components you need to investigate to determine the root cause. Most listener connection issues result from incorrect configuration of one of the following:

- Firewall or network security group (NSG) configuration

- IP Clustered resource creation and configuration (see the article section 'Configure the cluster to use the load balancer IP address')

- Internal load balancer creation and configuration (see the article section 'Create and configure the load balancer in the Azure portal')

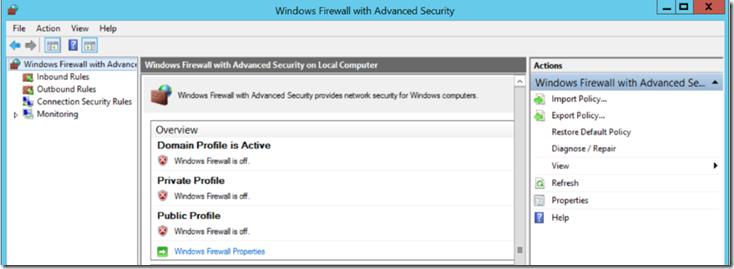

Turn off Windows Firewall on the server hosting the primary replica (Firewall and NSG configuration)

The simplest way to make sure Windows Firewall is not blocking connectivity to the listener is to turn off Windows Firewall on the Windows server that hosts the primary replica of the availability group, which is the replica your listener connectivity test connects to.

If connectivity then succeeds, turn Windows Firewall back on and allow inbound connections on port 1433 (the port SQL Server listens on) and port 59999 (the probe port).
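Re-enabling the firewall and opening the two ports can be scripted. The sketch below is an illustration only, assuming SQL Server listens on the default port 1433 and the probe port is 59999; the rule names `Allow-TCP-1433` and `Allow-TCP-59999` are hypothetical. It builds standard `netsh advfirewall firewall add rule` commands and, by default, only prints them.

```python
import subprocess
import sys


def firewall_rule_commands(ports=(1433, 59999)):
    """Build netsh commands that allow inbound TCP traffic on each port.

    Rule names contain no spaces, so no extra quoting is needed.
    """
    commands = []
    for port in ports:
        commands.append([
            "netsh", "advfirewall", "firewall", "add", "rule",
            f"name=Allow-TCP-{port}",  # hypothetical naming scheme
            "dir=in",
            "action=allow",
            "protocol=TCP",
            f"localport={port}",
        ])
    return commands


def apply_rules(ports=(1433, 59999), dry_run=True):
    """Print the commands, or run them on Windows when dry_run is False."""
    for cmd in firewall_rule_commands(ports):
        if dry_run or sys.platform != "win32":
            print(" ".join(cmd))  # show what would be run
        else:
            # Must be run from an elevated prompt on the primary replica's server
            subprocess.run(cmd, check=True)
```

If your instance listens on a non-default port, pass that port instead of 1433, for example `apply_rules((1500, 59999))`.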

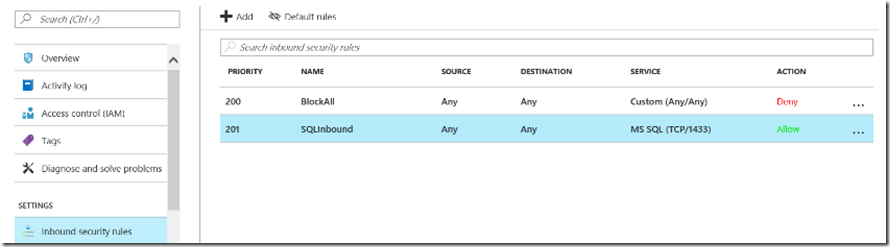

Investigate Network Security Group (NSG) for rules that block probe port or SQL Server port connectivity (Firewall and NSG configuration)

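A network security group (NSG) rule may be preventing connectivity to the virtual machine. NSG rules are evaluated in priority order, lowest number first, and processing stops at the first rule that matches the traffic. For example, the following network security group has an inbound rule that allows SQL Server connectivity, but that rule is overridden by a rule with a lower priority value.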
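The "first match by priority" behavior is what lets a lower-numbered rule override an allow rule. The following simplified sketch models that evaluation, assuming rules are copied by hand from the portal into a hypothetical `NsgRule` structure. It compares only the destination port and ignores source, destination address, and protocol, so it illustrates the ordering logic rather than reproducing Azure's full matching.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class NsgRule:
    name: str
    priority: int  # Azure evaluates lower numbers first
    port: str      # destination port: "1433", "1000-2000", or "*"
    access: str    # "Allow" or "Deny"


def port_matches(spec: str, port: int) -> bool:
    """Return True if a destination port spec covers the given port."""
    if spec == "*":
        return True
    if "-" in spec:
        low, high = (int(part) for part in spec.split("-", 1))
        return low <= port <= high
    return int(spec) == port


def effective_access(rules: List[NsgRule], port: int) -> Tuple[str, Optional[str]]:
    """Return (access, rule name) of the first matching rule in priority order."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if port_matches(rule.port, port):
            # First match wins; later rules are never consulted
            return rule.access, rule.name
    # Azure's default DenyAllInBound rule would catch anything unmatched
    return "Deny", None
```

For example, an allow rule for port 1433 at priority 1000 has no effect if a deny rule for `*` exists at priority 500: `effective_access` returns the deny rule for port 1433.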

For more information, review the section 'Configure a Network Security Group inbound rule for the VM' in the following article:

Connect to a SQL Server Virtual Machine on Azure (Resource Manager)

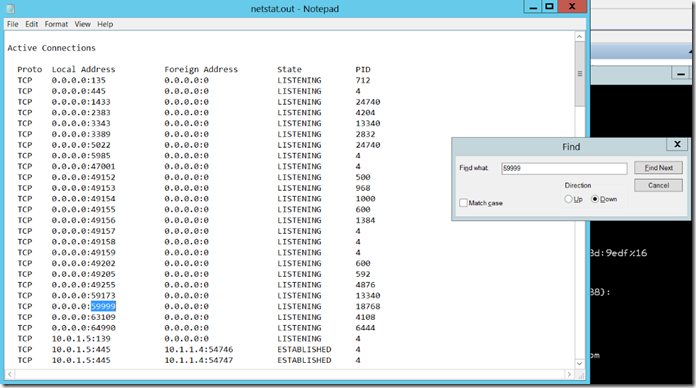

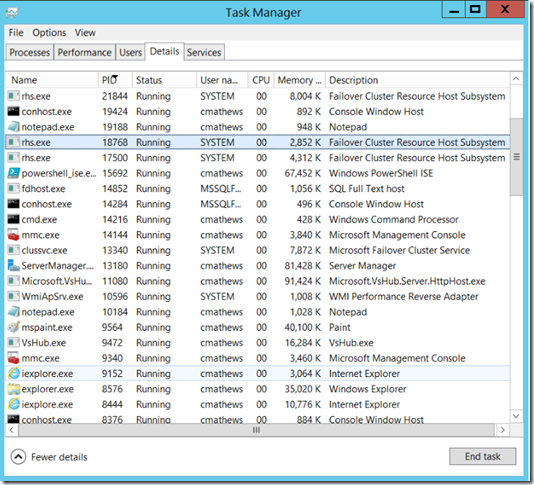

Verify that RHS.EXE is listening on the probe port (59999) (IP Clustered Resource creation and configuration)

On the server hosting the primary replica, verify that the RHS.EXE process is listening on the probe port you configured for the internal load balancer.

Run netstat -ano from a command prompt on that server and redirect the output to a text file. Open the file in Notepad and search for a process listening on port 59999 (the probe port).

Use Task Manager to match the process ID (PID) of the listening process to its name and confirm that the process listening on port 59999 is RHS.EXE. If another process is already listening on port 59999, reconfigure both the IP resource's probe port and the internal load balancer's probe port to a different, unused value to avoid the conflict.
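Searching the netstat output by hand is error-prone when many ports are open. The sketch below, assuming the text was saved from `netstat -ano` on an English-language Windows installation (the `LISTENING` state string is localized on other languages), extracts the PIDs listening on the probe port so you can look them up in Task Manager.

```python
from typing import Set


def listening_pids(netstat_output: str, port: int = 59999) -> Set[int]:
    """Return the PIDs of TCP sockets in LISTENING state on the given port.

    Expects `netstat -ano` text, where each TCP row has five columns:
    Proto, Local Address, Foreign Address, State, PID.
    """
    pids = set()
    for line in netstat_output.splitlines():
        fields = line.split()
        # Skip headers, blank lines, UDP rows (no State column), and non-listeners
        if len(fields) != 5 or fields[0] != "TCP" or fields[3] != "LISTENING":
            continue
        # rpartition handles both IPv4 (0.0.0.0:59999) and IPv6 ([::]:59999)
        _, _, local_port = fields[1].rpartition(":")
        if local_port == str(port):
            pids.add(int(fields[4]))
    return pids
```

If the returned set contains a PID that does not belong to RHS.EXE, another process owns the probe port and the port conflict described above applies.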

Review the internal load balancer configuration (Load Balancer creation and configuration)

Using the article on creating the Azure availability group listener, step through the four configuration steps listed below and use the Azure portal to verify that each one is configured correctly:

- Create the load balancer and configure the IP address

- Configure the backend pool

- Create a probe

- Set the load balancing rules
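When stepping through the portal, it can help to write the values down and compare them side by side. The following sketch is a hypothetical checklist, not an Azure SDK call: the `ListenerConfig` fields are values you would copy by hand from the portal and from the cluster IP resource parameters (such as its `ProbePort`). It checks the relationships the configuration article relies on: matching IP addresses, matching probe ports, the rule port matching the listener port, Floating IP (direct server return) enabled, and every replica VM in the backend pool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ListenerConfig:
    # Hypothetical fields, filled in by hand from the portal and the cluster
    ilb_frontend_ip: str
    cluster_ip_address: str
    probe_port: int
    cluster_probe_port: int
    rule_port: int
    listener_port: int
    floating_ip_enabled: bool
    backend_pool_vms: List[str]
    replica_vms: List[str]


def find_problems(cfg: ListenerConfig) -> List[str]:
    """Return a list of human-readable mismatches (empty if none found)."""
    problems = []
    if cfg.ilb_frontend_ip != cfg.cluster_ip_address:
        problems.append("Load balancer frontend IP differs from the cluster IP resource address")
    if cfg.probe_port != cfg.cluster_probe_port:
        problems.append("Load balancer probe port differs from the IP resource ProbePort")
    if cfg.rule_port != cfg.listener_port:
        problems.append("Load balancing rule port differs from the listener port")
    if not cfg.floating_ip_enabled:
        problems.append("Floating IP (direct server return) is disabled on the rule")
    missing = sorted(set(cfg.replica_vms) - set(cfg.backend_pool_vms))
    if missing:
        problems.append("Replica VMs missing from backend pool: " + ", ".join(missing))
    return problems
```

An empty result only means the recorded values are consistent with each other; it does not prove that the portal values were copied correctly.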

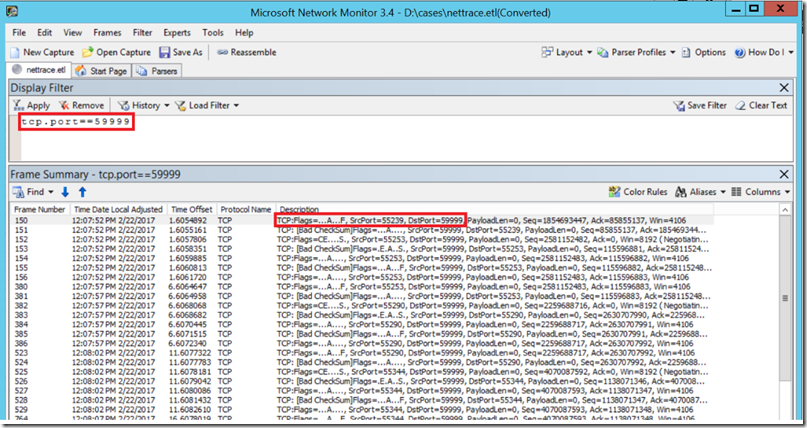

If the internal load balancer configuration looks correct in the portal, use network tracing to verify that the load balancer is probing the probe port on the server hosting the primary replica. On that server, start a trace with netsh from an elevated command prompt, let it run for about 30 seconds, and then stop it. To start the trace, run:

netsh trace start capture=yes tracefile=d:\nettrace.etl maxsize=200 filemode=circular overwrite=yes report=no

To stop the trace, run:

netsh trace stop
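The start, wait, and stop sequence can be wrapped so that the trace is always stopped even if the wait is interrupted. This is a minimal sketch using the exact netsh commands above; `run` and `sleep` are parameters only so the sequence can be exercised without Windows. On the server, call `capture_trace()` from an elevated prompt.

```python
import subprocess
import time

START = [
    "netsh", "trace", "start", "capture=yes",
    r"tracefile=d:\nettrace.etl", "maxsize=200",
    "filemode=circular", "overwrite=yes", "report=no",
]
STOP = ["netsh", "trace", "stop"]


def capture_trace(seconds: float = 30, run=subprocess.run, sleep=time.sleep):
    """Start a netsh trace, wait for `seconds`, then always stop it."""
    run(START, check=True)
    try:
        sleep(seconds)
    finally:
        # Stop the trace even if the wait is interrupted (for example, Ctrl+C)
        run(STOP, check=True)
```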

Open the .etl file in a tool such as Microsoft Network Monitor and filter for probe port traffic. If there is no traffic on the probe port of the virtual machine hosting the primary replica, one of two things is likely:

- The internal load balancer is not configured correctly.

- A network security group, firewall, or corporate policy is interfering with the probe port traffic.

In the trace shown here, filtering on port 59999 shows probe activity, which indicates that the load balancer appears to be working correctly.
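As a cross-check on the trace, you can see which cluster node answers on the probe port. The sketch below mimics a TCP health probe by opening the probe port on each node; the node names `sql1` and `sql2` are hypothetical. In a healthy configuration, only the node hosting the primary replica (where RHS.EXE owns the probe port) should answer. Azure's own health probes originate from 168.63.129.16, so a False result from another VM may simply mean that firewall or NSG rules do not allow that VM's address, and is not conclusive on its own.

```python
import socket
from typing import Dict, Iterable


def probe_nodes(nodes: Iterable[str], probe_port: int = 59999,
                timeout: float = 2.0) -> Dict[str, bool]:
    """Try a TCP connection to the probe port on each node.

    Returns node -> True if the port accepted the connection, else False.
    """
    results = {}
    for node in nodes:
        try:
            with socket.create_connection((node, probe_port), timeout=timeout):
                results[node] = True
        except OSError:
            # Refused, timed out, or the name did not resolve
            results[node] = False
    return results
```

For example, `probe_nodes(["sql1", "sql2"])` should report True only for the node that currently hosts the primary replica.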