How to Apply Transaction Logs to Secondary When it is Far Behind

Problem Large Send Queue

You discover that the log send queue for a given availability database has grown very large, which threatens your Recovery Point Objective (RPO) and the transaction log has grown very large on the primary replica, possibly threatening to fill the drive. The cause may have been for various reasons: you discover that the availability database synchronization was inadvertently suspended by an administrator, or even by the system and the problem was not identified until now.

Scenario 1 The database is not large

If it is reasonable to re-initialize the secondary replica copy of the database using a backup and restore of the database whose log file has grown very large, then you can allow synchronization to proceed to drain the log send queue or if the size of the transaction log is much larger than the database itself, remove the database from the availability group, shrink the log file and add it back into the availability group, re-seeding your secondary replicas with the newly added database.

- In SQL Server Management Studio (SSMS) remove the database from the availability group on the primary replica.

- Put the database in simple recovery mode on the primary replica. This will allow you to shrink the log file.

- Shrink the log file down to a reasonable size. To shrink the log file, in SSMS’ Object Explorer right-click the database, choose Tasks and then Shrink and then Files. For File type choose Log and click OK.

- Once log file has ben shrunk, configure the database for full recovery mode and then right-click the availability group, choose Add Database and use the wizard to add the database back and re-initialize the secondary replica with it.

Scenario 2 The database is very large, making re-initialization prohibitive to the secondary replicas

If the data portion of the database is very large combined with the remoteness of one or more secondary's, it may not be reasonable to use Scenario 1. Another option is to apply the log backups onto the database at the secondary, to 'catch up' the database at the secondary. Following are the steps.

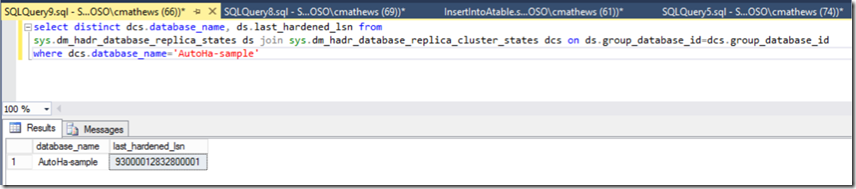

1. Determine the transaction logs that must be applied. Query the last_hardened_lsn for the database on the secondary. Connect to the secondary and run the following supplying the database name as the only predicate:

select distinct dcs.database_name, ds.last_hardened_lsn from

sys.dm_hadr_database_replica_states ds join sys.dm_hadr_database_replica_cluster_states dcs

on ds.group_database_id=dcs.group_database_id

where dcs.database_name='AutoHa-sample'

You will get results like this.

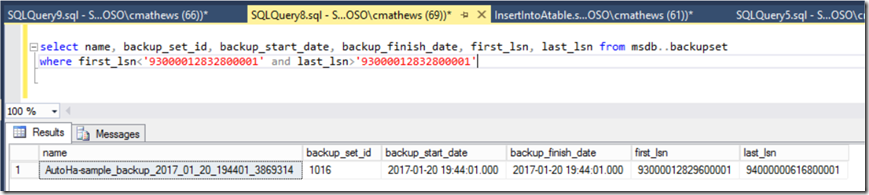

2. Query the log backups in MSDB to find what log backup the secondary LSN falls in the range of.

select name, backup_set_id, backup_start_date, backup_finish_date, first_lsn, last_lsn from msdb..backupset

where first_lsn<'93000012832800001' and last_lsn>'93000012832800001'

We see one row returned, we need to apply all logs starting with this log on the secondary replica:

Alternative to find the first transaction log backup Query sys.dm_hadr_database_replica_states.last_hardened_time for the database (same query as above). Use File Explorer to view the transaction log backup files and order by modified date. Compare the last_hardened_time with the backup modified dates to determine what transaction log backup you should start with, selecting the transaction log backup whose modified date is just beyond the last_hardened_time. Query that transaction log to verify the last_hardened_time falls in that transaction log backup:

restore headeronly from disk= N'f:\backups\AutoHa-sample_backup_2017_01_20_194401_3869314.bak’

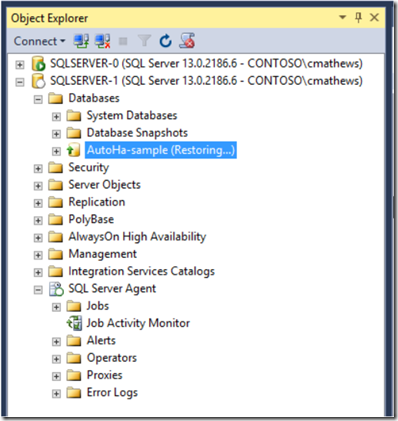

3. Take database out of the availability group on the secondary. Connect to the secondary replica and execute the following to take the database out of the secondary replica.

alter database [AutoHa-sample] set hadr off

On the secondary the database will be in Restoring and ready to apply logs to.

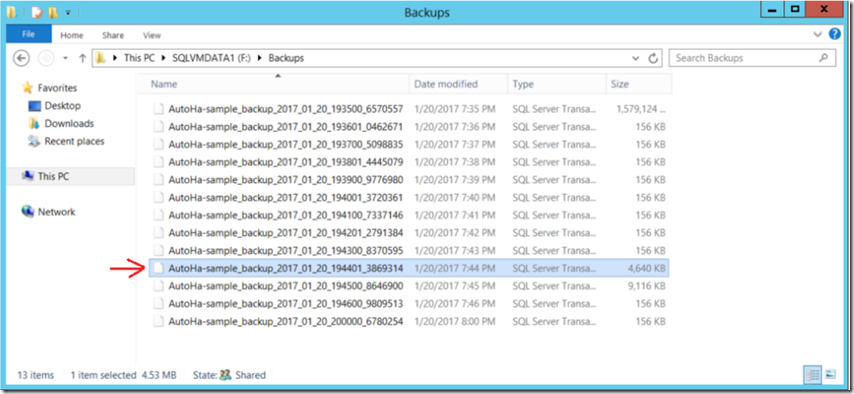

4. Apply transaction logs to database on the secondary replica Apply the transaction log backups. Here is a view of the log backup files. We identified the one called 'AutoHa-sample_backup_2017_01_20_194401_3869314' as containing our secondary database last_hardened_lsn.

Begin restoring with that log being sure to restore with 'no recovery' all the transaction log backups.

restore log [AutoHa-sample] from disk='\\sqlserver-0\Backups\AutoHa-sample_backup_2017_01_20_194401_3869314.trn' with norecovery

go

restore log [AutoHa-sample] from disk='\\sqlserver-0\Backups\AutoHa-sample_backup_2017_01_20_194500_8646900.trn' with norecovery

go

restore log [AutoHa-sample] from disk='\\sqlserver-0\Backups\AutoHa-sample_backup_2017_01_20_194600_9809513.trn' with norecovery

go

restore log [AutoHa-sample] from disk='\\sqlserver-0\Backups\AutoHa-sample_backup_2017_01_20_200000_6780254.trn' with norecovery

go

5. Add the database back into the availability group on the secondary and resume synchronization. Connect to the secondary replica and execute the following to add the database back into the availability group and resume sync.

alter database [AutoHa-sample] set hadr availability group = [contoso-ag]

go

alter database [AutoHa-sample] set hadr resume

go

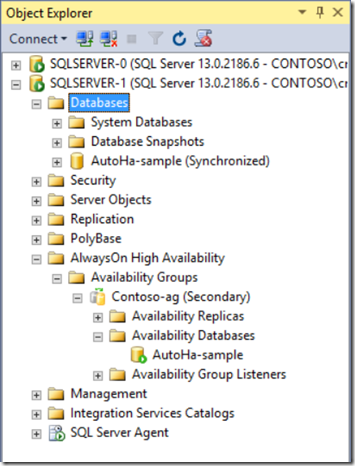

You can view the availability group database on the secondary to confirm it is added back and synchronized.