Manual Failover of Availability Group to Disaster Recovery site in Multi-Site Cluster

Setting up an Always On availability group in a multi-site cluster for disaster recovery (DR) scenario is a common practice. A common configuration is two nodes in the cluster at the primary data center with a file share or disk witness, and a third node at a remote data center. When configuring a remote node in your Windows cluster for a disaster recovery site, it is common to remove the quorum vote for that node. A normal vote configuration is for the nodes and witness in the primary datacenter to be configured with quorum votes, and the node at the DR site to not have a vote.

In the event that the primary data center is lost or expected to be offline for an extended period of time, the availability group must be brought online on the node at the DR site manually. The following steps are necessary in order to successfully bring the availability group online at the (DR) site.

Force Cluster to start at DR site node

With the failure of the primary data center, quorum will be lost. In order to bring the cluster service up on the remote node, then quorum must be forced, to start on the node at the DR site.

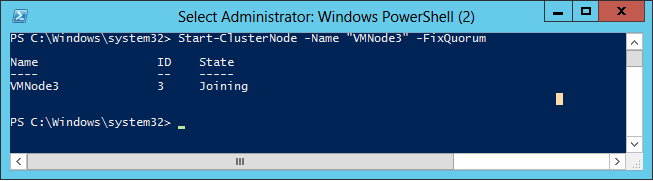

Start an elevated Windows PowerShell via Run as Administrator, and execute the following:

Start-ClusterNode –Name "<NodeName>" -FixQuorum

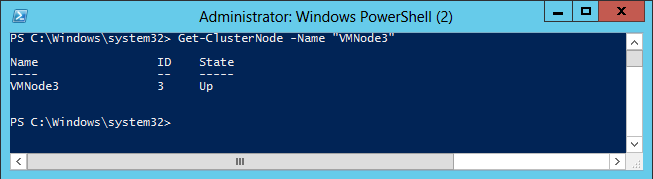

Confirm that Cluster has started on the node:

Get-ClusterNode –Name “<NodeName>”

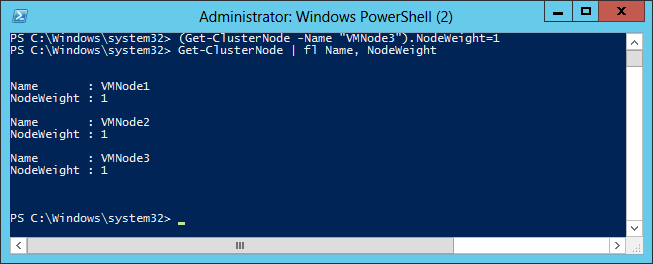

Adjusting Voting Rights for the cluster

With the cluster service started, voting rights for the nodes can be adjusted. If the remote node does not have a vote, then it will need to be configured to have a vote.

PowerShell:

(Get-ClusterNode –Name "NodeName").NodeWeight=1

Get-ClusterNode | fl Name, NodeWeight

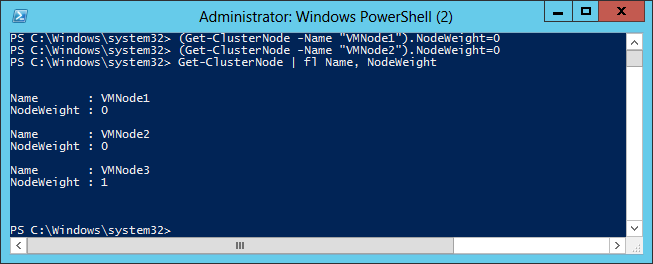

Once the remote node has been granted a vote for quorum, then remove the votes of the two nodes in the primary datacenter.

PowerShell:

(Get-ClusterNode –name "NodeName1").NodeWeight=0

(Get-ClusterNode –name "NodeName2").NodeWeight=0

Get-ClusterNode | fl Name,NodeWeight.

Bring Availability Group Resource Online

Once the cluster service on the remote node has started, the availability group will show offline in the Failover Cluster Manager, and cannot be brought online.

On the remote DR node, connect to SQL Server and issue the following query in order to bring the availability group online:

ALTER AVAILABILITY GROUP <availability group> FORCE_FAILOVER_ALLOW_DATA_LOSS

At this point, the availability replica is online in the primary role, and the availability databases should be available for production on the DR node.

IMPORTANT: When issuing the failover command, 'FORCE_FAILOVER_ALLOW_DATA_LOSS' must be issued because the cluster service was started with force quorum, even if the secondary was setup to be synchronous commit.

Attempting to failover with the command <ALTER AVAILABILITY GROUP <AVAILABLITY GROUP NAME> FAILOVER' would fail with the following message:

Msg 41142, Level 16, State 34, Line 1

The availability replica for availability group '<availability group>' on this instance of SQL Server cannot become the primary replica. One or more databases are not synchronized or have not joined the availability group, or the WSFC cluster was started in Force Quorum mode. If the cluster was started in Force Quorum mode or the availability replica uses the asynchronous-commit mode, consider performing a forced manual failover (with possible data loss).Otherwise, once all local secondary databases are joined and synchronized, you can perform a planned manual failover to this secondary replica (without dataloss). For more information, see SQL Server Books Online.

Synchronous Commit – Are My Availability Databases 'Failover Ready'?

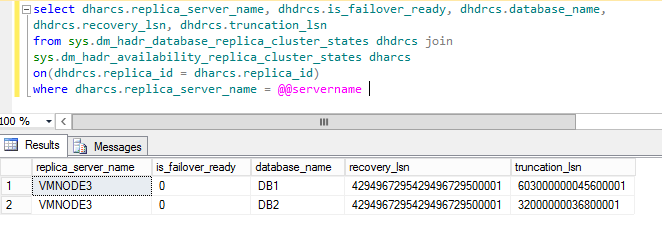

Generally, DR site availability replicas are configured for asynchronous commit, because of the performance implications caused by replicating a long distance. However, if the secondary was setup as synchronous commit, the following query will list the databases and their synchronization

status.

select dharcs.replica_server_name, dhdrcs.is_failover_ready, dhdrcs.database_name, dhdrcs.recovery_lsn, dhdrcs.truncation_lsn from sys.dm_hadr_database_replica_cluster_states dhdrcs join sys.dm_hadr_availability_replica_cluster_states dharcs on(dhdrcs.replica_id = dharcs.replica_id)where dharcs.replica_server_name = @@servername

The second column is_failover_ready indicates if the database is able to fail over without data loss (in a synchronized state). If the value of the column is 1, then the database was synchronized when the availability group went offline and can come online without any data loss. If the value of the column is 0, then the database was not synchronized when the availability group went offline, and there would be data loss if the database was brought online. If the secondary was setup as asynchronous, then the value of is_failover_ready would always be 0.

To Detect if there was data is loss when failing over to DR Site.

If this data was not captured from primary before failure, then there is no way to determine loss until the original primary is recovered. Save the the recovery_lsn and truncation_lsn from the query. When the original primary node is recovered, it will be in a suspended state. Query these values on the original primary (now in a secondary role) to determine the data loss between the original primary and the DR secondary (current primary).

NOTE: To keep from having the cluster experience a split brain scenario, the nodes in the primary datacenter should only be brought up, if the network connection between the two sites is working.

Reference Links:

https://technet.microsoft.com/en-us/library/cc730649(v=WS.10).aspx