Inspecting Solution For Performance


My assignments included delivering performance workshops, reviewing architecture and design for performance, conducting performance code inspections, inspecting solution deployment for performance, and resolving performance incidents in production. In short, I was required to inspect the solution for performance at any phase of the development lifecycle.

The Challenge

The challenge I constantly faced was how to communicate my recommendations to a customer efficiently and effectively. By customer I mean Business Sponsor, Project Manager, Solution Architect, Developer, Test Engineer, System Engineer, and End User. Different roles, different focus, different languages. If I am not efficient, I waste the customer's time. If I am not effective, my recommendations won't be used.

Performance Language

What worked for me was establishing a common performance language across the team. First we'd agree on what performance is, and that is:

This simple definition helped when communicating performance goals to decision makers in the inception stages of a project. Then we'd agree on what affects performance the most. I could not find any better categorization than the Performance Frame:

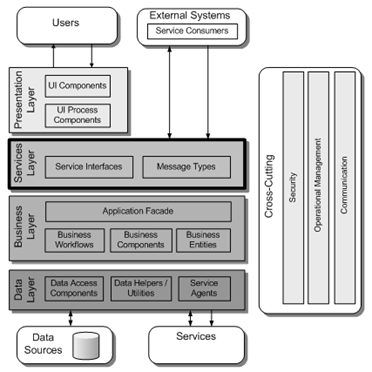

This simple frame helped during the architecture and design phase when working with Solution Architects on creating a blueprint of the solution. It also helped during the coding phase when working with developers on improving their code for performance. During production incidents I'd usually run a series of questions to narrow the problem down to a specific category. Once the category was identified, I'd use a specific performance scalpel to dissect the issue at hand.

Following are two quick case studies of how a well-established performance language helped improve performance effectively and efficiently: one during the architecture phase, and the other when solving a production performance incident.

The Case Of Over-Engineered Architecture

I was responsible for performance as part of the architecture effort for one of our customers. Based on the requirements, the team came up with a design that supported a high level of decoupling. The reason for it was to enable future extensibility and exposure to external systems. The design looked similar to this [the image is from Chapter 9: Layers and Tiers]:

The conceptual design recommended using WCF as a separate physical layer exposing the functionality to both external systems and the application's intrinsic UI. It also assumed DataSet as a DTO [Data Transfer Object]. It is worth noting that one of the quality attributes was a very aggressive performance requirement in terms of response time.

Using the Performance Frame, we reviewed the design for performance and identified that such a design wouldn't be optimal with regard to performance:

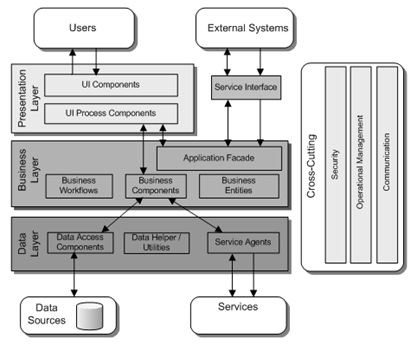
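
One way to see why the extra physical layer works against an aggressive response-time goal is to count the work each UI request has to do. The following is a minimal sketch under stated assumptions, not the customer's design: `get_orders` is a hypothetical business-layer call, and `json` stands in for whatever wire format a service layer would use (the design discussed here used WCF with DataSet as the DTO). It leaves out network latency, which a real separate tier would add on top.

```python
import json


def get_orders(customer_id):
    """Hypothetical business-layer call returning plain rows."""
    return [
        {"customer": customer_id, "order": n, "total": n * 10.0}
        for n in range(3)
    ]


def call_in_process(customer_id):
    """UI and business layer share a process: a plain function call."""
    return get_orders(customer_id), 0  # zero bytes cross a boundary


def call_through_service_layer(customer_id):
    """UI reaches the business layer through a separate tier.

    Every request pays for two serializations and two deserializations
    before any network time is counted.
    """
    request = json.dumps({"customer_id": customer_id})       # UI serializes the request
    args = json.loads(request)                                # service deserializes it
    response = json.dumps(get_orders(args["customer_id"]))   # service serializes the result
    rows = json.loads(response)                               # UI deserializes the result
    return rows, len(request) + len(response)
```

Both paths return the same rows; only the second one moves bytes across a boundary. A heavier DTO makes that difference larger, which is why the choice of DTO mattered in the review.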

The next round of design improvements produced a conceptual design that exposed its functionality to its UI without going through the separate physical services layer, similar to this [the image is taken from Chapter 9: Layers and Tiers]:

We all agreed that this time the design was simpler and should foster better response time for its native UI, which accounts for 80% of the system's workload.

The Case Of Ever Growing Cache

A customer complained that the application was periodically throwing out all active users. The business was losing money, as the application was responsible for getting new customers. We reviewed the event logs for recycles and quickly identified that IIS was recycling because memory limits were being hit. After a quick interview with the team, we assumed the application was implementing caching in a less than appropriate way or using data structures in a way that caused the recycles. We took a few memory dumps and analyzed them using WinDbg. The culprit indeed was a DataTable instantiated as a static variable and used for caching. Since the DataTable was instantiated as a static variable, it had no way to purge its values; it could only grow endlessly and cause the recycles. We were able to communicate our findings and recommendations back to the team using the performance language we established earlier.

Conclusion

Performance, like security, is a never-ending story; it's a work in progress. It's never too early to start improving both. The trick is to set clear goals and frame the approach that works best for you and the rest of the team. What works best for you and your team?
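
To make the ever-growing cache case concrete, here is a minimal sketch of the failure mode and one common remedy. It is an illustration in Python, not the customer's .NET code: `UnboundedCache` imitates a process-wide store that is never purged, and `BoundedCache` is a hypothetical replacement that evicts the least recently used entry once it reaches `max_entries` (a made-up limit for this example).

```python
from collections import OrderedDict


class UnboundedCache:
    """Imitates the problem: a process-wide store that is never purged."""

    _store = {}  # class-level, so it lives as long as the process does

    @classmethod
    def put(cls, key, value):
        cls._store[key] = value

    @classmethod
    def size(cls):
        return len(cls._store)


class BoundedCache:
    """LRU cache with a hard entry limit (max_entries is a hypothetical knob)."""

    def __init__(self, max_entries):
        self._max_entries = max_entries
        self._store = OrderedDict()

    def put(self, key, value):
        self._store[key] = value
        self._store.move_to_end(key)             # mark as most recently used
        while len(self._store) > self._max_entries:
            self._store.popitem(last=False)      # evict the least recently used

    def get(self, key, default=None):
        if key not in self._store:
            return default
        self._store.move_to_end(key)             # a read also counts as use
        return self._store[key]

    def size(self):
        return len(self._store)


def simulate(requests=10_000, max_entries=100):
    """Feed the same distinct keys to both caches and report their sizes."""
    bounded = BoundedCache(max_entries)
    for i in range(requests):
        UnboundedCache.put(i, "row %d" % i)
        bounded.put(i, "row %d" % i)
    return UnboundedCache.size(), bounded.size()
```

Calling `simulate()` shows the difference in shape: the unbounded store holds one entry per distinct key it has ever seen, while the bounded one never exceeds its limit. Size-based eviction is only one option; expiring entries by age is another, and which fits depends on how the cached data is used.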