Chain Of Responsibility Design Pattern – Focus On Security, Performance, And Operations

The pattern also goes by Intercepting Filter, Pipeline, AOP, and maybe a few more names… I am confused by the naming of this design pattern.

“Life is really simple, but we insist on making it complicated.” - Confucius

No matter what they call it, I like the idea of decoupling actions that are processed one after another. Here is the definition from data & object factory:

Avoid coupling the sender of a request to its receiver by giving more than one object a chance to handle the request. Chain the receiving objects and pass the request along the chain until an object handles it.
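To make the definition concrete, here is a minimal sketch of the classic form of the pattern, where each handler either handles the request or passes it to its successor. The names Handler, NegativeHandler, and SmallHandler are hypothetical and are not part of the sample described in this post.

```csharp
using System;

// Hypothetical names for illustration only; not part of the post's sample.
public abstract class Handler
{
    private Handler successor;

    // Link the next handler and return it so calls can be chained.
    public Handler SetSuccessor(Handler next)
    {
        successor = next;
        return next;
    }

    // Try to handle the request; otherwise pass it along the chain.
    public string Handle(int request)
    {
        string result = TryHandle(request);
        if (result != null) return result;
        return successor != null ? successor.Handle(request) : "unhandled";
    }

    // Return null to signal "not mine, ask the next handler".
    protected abstract string TryHandle(int request);
}

public class NegativeHandler : Handler
{
    protected override string TryHandle(int request)
    {
        return request < 0 ? "negative" : null;
    }
}

public class SmallHandler : Handler
{
    protected override string TryHandle(int request)
    {
        return request < 10 ? "small" : null;
    }
}

public static class Program
{
    public static void Main()
    {
        Handler chain = new NegativeHandler();
        chain.SetSuccessor(new SmallHandler());

        Console.WriteLine(chain.Handle(-5));  // negative
        Console.WriteLine(chain.Handle(3));   // small
        Console.WriteLine(chain.Handle(42));  // unhandled
    }
}
```

The sender only knows the first handler in the chain; which object ends up handling the request is decided at run time.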

Intercepting Filter visual from MSDN’s Discover the Design Patterns You're Already Using in the .NET Framework

The .NET Framework implements the Chain Of Responsibility design pattern for many of its internal mechanisms. My favorite is HttpModule. I like it so much that I decided to build my own pipeline.

This post summarizes the steps I took to create my own simple implementation of this design pattern. I purposely did not do any research online because I wanted to get my hands dirty without bias.

The goal

My goal was to create simple code that can be adopted and extended:

- The code should be very simple

- The code should provide a pipeline infrastructure to invoke aspects/filters

- The code should allow dynamic invocation of an arbitrary number of aspects

- The code should include no optimizations, to stay simple

- The code should demonstrate a practical idea, not be a state-of-the-art, production-ready implementation.

What I needed

- A generic mechanism that executes arbitrary logic – the Pipeline.

- The logic is encapsulated in decoupled components – Aspects.

- Aspects can be independently configured without rebuilding the application.

The design

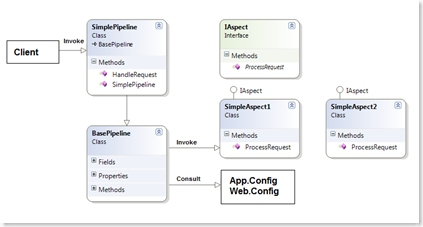

Summary of steps

- Step #1 – Create base classes and interfaces.

- Step #2 – Create a concrete implementation of a specific Pipeline.

- Step #3 – Modify the config file.

- Step #4 – Test the solution.

The following sections describe each step in detail.

Step #1 – Create base classes and interfaces. I needed two base types: the BasePipeline class and the IAspect interface. BasePipeline is responsible for the generic actions of:

- Consulting configuration file.

- Loading the configured aspects.

- And invoking them one by one.

    private void LoadPipelineAspects()
    {
        aspects = new List<IAspect>();
        // Read the comma-separated aspect type names configured for this pipeline.
        Configuration config = ConfigurationManager.OpenExeConfiguration(ConfigurationUserLevel.None);
        string pipelineConfig = config.AppSettings.Settings[pipelineName].Value;
        string[] aspectNames = pipelineConfig.Split(',');
        for (int i = 0; i < aspectNames.Length; i++)
        {
            // Trim so that spaces or line breaks after the commas do not break the lookup.
            string aspectName = aspectNames[i].Trim();
            Type t = Type.GetType(aspectName, true); // throws if the type cannot be found
            IAspect aspect = (IAspect)Activator.CreateInstance(t);
            aspects.Add(aspect);
        }
    }

The IAspect interface serves as a contract between BasePipeline and an aspect's concrete implementation. Take a look at the design diagram. When the client invokes the HandleRequest method on a concrete Pipeline implementation, it invokes the generic implementation of the method in its base class, BasePipeline:

    public virtual bool HandleRequest(object request)
    {
        foreach (IAspect item in aspects)
        {
            item.ProcessRequest(request);
        }
        return true;
    }
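The IAspect interface itself is not listed in this post. Judging by the ProcessRequest calls, it presumably looks like the one in the sketch below, which is an assumption. The sketch also shows one possible variation: the loop above ignores the value returned by each aspect, while this hypothetical ShortCircuitPipeline stops at the first aspect that returns false. NotNullAspect and CountingAspect are made-up aspects, and the aspects are passed in directly instead of being read from a config file so that the example stays self-contained.

```csharp
using System;
using System.Collections.Generic;

// Assumed shape of the contract, inferred from the ProcessRequest calls in the post.
public interface IAspect
{
    bool ProcessRequest(object request);
}

// Hypothetical variant of the pipeline: aspects are passed in directly
// (no config file) and processing stops at the first aspect that returns false.
public class ShortCircuitPipeline
{
    private readonly List<IAspect> aspects;

    public ShortCircuitPipeline(IEnumerable<IAspect> aspects)
    {
        this.aspects = new List<IAspect>(aspects);
    }

    public bool HandleRequest(object request)
    {
        foreach (IAspect item in aspects)
        {
            if (!item.ProcessRequest(request))
            {
                return false; // an aspect vetoed the request
            }
        }
        return true; // every aspect accepted the request
    }
}

// Hypothetical aspect that rejects null requests.
public class NotNullAspect : IAspect
{
    public bool ProcessRequest(object request) { return request != null; }
}

// Hypothetical aspect that counts how many requests reached it.
public class CountingAspect : IAspect
{
    public int Calls { get; private set; }
    public bool ProcessRequest(object request) { Calls++; return true; }
}

public static class Program
{
    public static void Main()
    {
        var counter = new CountingAspect();
        var pipeline = new ShortCircuitPipeline(new IAspect[] { new NotNullAspect(), counter });

        Console.WriteLine(pipeline.HandleRequest("hello")); // True
        Console.WriteLine(pipeline.HandleRequest(null));    // False
        Console.WriteLine(counter.Calls);                   // 1
    }
}
```

Whether to short-circuit is a design choice: a validation or authorization aspect usually wants to stop the chain, while a logging aspect usually does not.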

Step #2 – Create concrete implementations. The concrete implementations, SimplePipeline and SimpleAspect, are nothing fancy. SimplePipeline calls into its base but reserves the right to add its own logic without interfering with any other concrete implementation:

    public override bool HandleRequest(object request)
    {
        base.HandleRequest(request);
        return true;
    }

To indicate which aspect is running, I simply print the aspect's name from the aspect's ProcessRequest method. That is the place where the real logic should be performed: DB access, Web Service calls, request modifications, etc.:

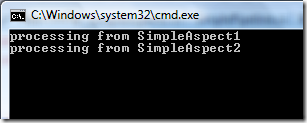

    public bool ProcessRequest(object request)
    {
        Console.WriteLine("processing from SimpleAspect1");
        return true;
    }

Step #3 – Modify config file. The config file, app.config or web.config in my case, holds the information about which aspects should be called for a specific pipeline:

    <appSettings>
      <add key="MyPipeline.SimplePipeline" value="MyPipeline.SimpleAspect1,MyPipeline.SimpleAspect2"/>
    </appSettings>

The key is the type of the pipeline and the value is a comma-separated list of aspect types. When the concrete pipeline is instantiated by the client, it already knows its own type, which it uses to look up the config file and identify which aspects to load using reflection. This is what the BasePipeline constructor looks like:

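    public BasePipeline()
    {
        // For a class that does not override ToString(), this is the full type name,
        // which matches the key in the config file.
        pipelineName = this.ToString();
        LoadPipelineAspects();
    }

See Step #1 for the LoadPipelineAspects() implementation, where the reflection magic happens to dynamically load the aspects.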
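The reflection step can also be tried in isolation, without a config file. The sketch below feeds the comma-separated value in as a plain string. AspectLoader is a hypothetical helper, and the IAspect declaration is assumed from the ProcessRequest calls shown in this post.

```csharp
using System;
using System.Collections.Generic;

namespace MyPipeline
{
    // Assumed shape of the contract, inferred from the post.
    public interface IAspect
    {
        bool ProcessRequest(object request);
    }

    public class SimpleAspect1 : IAspect
    {
        public bool ProcessRequest(object request)
        {
            Console.WriteLine("processing from SimpleAspect1");
            return true;
        }
    }

    public class SimpleAspect2 : IAspect
    {
        public bool ProcessRequest(object request)
        {
            Console.WriteLine("processing from SimpleAspect2");
            return true;
        }
    }

    // Hypothetical helper: the same reflection steps as LoadPipelineAspects,
    // but fed from a string instead of the config file.
    public static class AspectLoader
    {
        public static List<IAspect> Load(string commaSeparatedTypeNames)
        {
            var aspects = new List<IAspect>();
            foreach (string rawName in commaSeparatedTypeNames.Split(','))
            {
                string typeName = rawName.Trim();
                // A name without an assembly part is only resolved in the
                // calling assembly and the core library.
                Type t = Type.GetType(typeName, true); // throws if not found
                aspects.Add((IAspect)Activator.CreateInstance(t));
            }
            return aspects;
        }
    }

    public static class Program
    {
        public static void Main()
        {
            List<IAspect> aspects = AspectLoader.Load(
                "MyPipeline.SimpleAspect1, MyPipeline.SimpleAspect2");
            foreach (IAspect aspect in aspects)
            {
                aspect.ProcessRequest(null);
            }
        }
    }
}
```

One thing to watch: because the value is split on commas, assembly-qualified type names (which contain a comma themselves) would need a different separator, such as a semicolon.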

Step #4 – Test the solution. To test the solution follow these steps:

Create a Class Library project and reference the library where the Pipeline base classes reside. Implement your own Pipeline by inheriting from BasePipeline.

Implement a few aspects that implement the IAspect interface.

Create a simple Windows Console application, add references to the libraries, and add code similar to this to the Main function:

    MyPipeline.SimplePipeline sp = new MyPipeline.SimplePipeline();
    sp.HandleRequest(null);

In the config file, add the modifications described in Step #3.

Run the application.

Done.

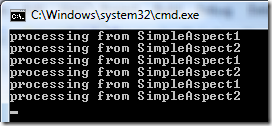

Any time one needs to change the logic by adding or removing aspects, it is just a matter of tweaking the config file. That is it.

I've just copied and pasted the existing aspects inside the configuration file and ran the application again with this entry:

    <add key="MyPipeline.SimplePipeline"
         value="MyPipeline.SimpleAspect1,
                MyPipeline.SimpleAspect2,
                MyPipeline.SimpleAspect1,
                MyPipeline.SimpleAspect2,
                MyPipeline.SimpleAspect1,
                MyPipeline.SimpleAspect2"/>

I think it is cool.

Focus on Security

From a security perspective there are a few things to keep in mind. When loading assemblies through reflection, there is an immediate risk of luring attacks that result in spoofed assemblies. For more information on how to protect against them, see my related post below, .Net Assembly Spoof Attack.
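One cheap precaution, shown here only as a sketch, is to validate a configured type before instantiating it: check that it lives in an assembly you expect and that it really implements IAspect. SafeAspectFactory is a hypothetical helper, and IAspect is assumed as before. This check does not detect a DLL that was replaced on disk; the related post covers that kind of attack.

```csharp
using System;
using System.Reflection;

// Assumed shape of the contract, inferred from the post.
public interface IAspect { bool ProcessRequest(object request); }

public class TrustedAspect : IAspect
{
    public bool ProcessRequest(object request) { return true; }
}

// Not an aspect: the factory must refuse to create it.
public class NotAnAspect { }

// Hypothetical helper that validates a configured type name before creating it.
public static class SafeAspectFactory
{
    public static IAspect Create(string typeName, Assembly trustedAssembly)
    {
        Type t = Type.GetType(typeName.Trim(), false); // null if not found
        if (t == null)
            throw new InvalidOperationException("Type not found: " + typeName);
        if (!t.Assembly.Equals(trustedAssembly))
            throw new InvalidOperationException("Untrusted assembly for: " + typeName);
        if (!typeof(IAspect).IsAssignableFrom(t) || t.IsAbstract)
            throw new InvalidOperationException("Not a concrete IAspect: " + typeName);
        return (IAspect)Activator.CreateInstance(t);
    }
}

public static class Program
{
    public static void Main()
    {
        Assembly trusted = typeof(IAspect).Assembly;

        Console.WriteLine(SafeAspectFactory.Create("TrustedAspect", trusted).GetType().Name);
        // TrustedAspect

        foreach (string badName in new[] { "NotAnAspect", "System.String" })
        {
            try
            {
                SafeAspectFactory.Create(badName, trusted);
            }
            catch (InvalidOperationException ex)
            {
                Console.WriteLine(ex.Message);
            }
        }
        // Not a concrete IAspect: NotAnAspect
        // Untrusted assembly for: System.String
    }
}
```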

Focus on Performance

Reflection is considered a slow operation, but everything is relative. If reflection is used in a performance-critical application, you should consider optimizing it. One such optimization is constructor caching, as described in More Provider Goodieness.
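I have not reproduced the exact technique from that post. The sketch below shows one common way to cache constructors: compile a small factory delegate per type once, keep it in a dictionary, and reuse it for every later instantiation. AspectFactoryCache is a hypothetical name, and IAspect is assumed from the ProcessRequest calls in this post.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq.Expressions;

// Assumed shape of the contract, inferred from the post.
public interface IAspect { bool ProcessRequest(object request); }

public class SimpleAspect1 : IAspect
{
    public bool ProcessRequest(object request) { return true; }
}

// Hypothetical cache of compiled "new T()" delegates, keyed by aspect type.
public static class AspectFactoryCache
{
    private static readonly ConcurrentDictionary<Type, Func<IAspect>> factories =
        new ConcurrentDictionary<Type, Func<IAspect>>();

    public static IAspect Create(Type aspectType)
    {
        // The factory is built on first use and reused afterwards.
        Func<IAspect> factory = factories.GetOrAdd(aspectType, BuildFactory);
        return factory();
    }

    // Builds and compiles the expression "() => (IAspect)new T()".
    // Requires a public parameterless constructor on the aspect type.
    private static Func<IAspect> BuildFactory(Type aspectType)
    {
        NewExpression construct = Expression.New(aspectType);
        UnaryExpression cast = Expression.Convert(construct, typeof(IAspect));
        return Expression.Lambda<Func<IAspect>>(cast).Compile();
    }
}

public static class Program
{
    public static void Main()
    {
        IAspect first = AspectFactoryCache.Create(typeof(SimpleAspect1));
        IAspect second = AspectFactoryCache.Create(typeof(SimpleAspect1));

        Console.WriteLine(first.GetType().Name);                  // SimpleAspect1
        Console.WriteLine(object.ReferenceEquals(first, second)); // False
    }
}
```

Only the constructor lookup is cached; each call still returns a fresh aspect instance. In the pipeline from this post the aspects are created once per pipeline instance, so the saving only matters if pipelines are created frequently.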

Focus on Operations

From an operations perspective this seems like the ideal case. I have witnessed a few situations where a change was not an option because it required a full rebuild of the application, something the operations team was not happy with and vetoed.

The trick is to constantly ask yourself, "What am I optimizing? Security, Performance, or Operations?"

My related posts

- .Net Assembly Spoof Attack

- Basic HttpModule Sample (Plus Bonus Case Study - How HttpModule Saved Mission Critical Project's Life)

- How To Hack WCF - New Technology, Old Hacking Tricks

- AOP, Pipelines, Interceptors, and HttpModlues

- IIS 7 Great Finds - How To Setup IIS7 On Vista, Bulk Web Site Creation, ASP.NET Pipeline Integration With IIS7

Download the sample from my SkyDrive:

Have fun.