WP7: CLR Managed Object overhead

A reader contacted me through this blog to ask about the overhead of managed objects on Windows Phone. Overhead here means that when you use an object of size X, the runtime actually uses (X + dx), where dx is the cost of the CLR’s bookkeeping. dx is generally fixed, so if X is small and there are a lot of such objects, the overhead starts showing up as a significant percentage of memory use.

There seems to be a great deal of information about the desktop CLR in this area. I hope this post will fill the gap for NETCF (the CLR for Windows Phone 7).

All the details below are implementation details and are provided only as guidance. Since they are not mandated by any specification (e.g. ECMA), they may, and most probably will, change.

General layout

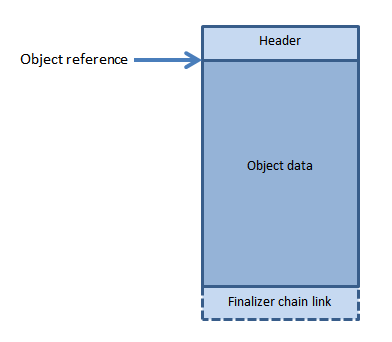

The overhead varies on the type of objects. However, in general the object layout looks something like

So internally just before the actual object there is a small object-header. If the object is a finalizable object (object with a finalizer) then there’s another 4-byte pointer at the end of the object.

The exact size of the header and what's inside that header depends on the type of the object.

Small objects (size < 16KB)

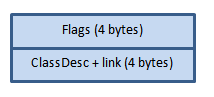

All objects smaller than 16KB have an 8-byte object header.

The 32 bits of the first flag are used to store the following information:

- Locking
- GC information, e.g.
  - Bits to mark objects
  - Generation of the object
  - Whether the object is pinned
- Hashcode (for GetHashCode support)
- Size of the object

Since all objects are 4-byte aligned, the size is stored in units of 4 bytes and uses 11 bits, which means that the maximum size that can be stored in that header is 16KB.

The second flag contains a 32-bit pointer to the Class-Descriptor, that is, the type information of that object. This field is overloaded and is also used by the GC during the compaction phase.

Since the header cost is fixed, the smaller the object, the larger the overhead becomes as a percentage of the object’s size.
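
To put numbers on this, here is a minimal Python sketch of the arithmetic. It uses the 8-byte header and the 4-byte finalizer pointer described above; the function names are mine, and the overhead is expressed as dx / X, i.e. relative to the size of the object itself.

```python
SMALL_HEADER_BYTES = 8    # standard object header described above
FINALIZER_PTR_BYTES = 4   # extra pointer carried by finalizable objects


def heap_footprint(object_bytes, finalizable=False):
    """Bytes the runtime uses for a small object of the given size (X + dx)."""
    overhead = SMALL_HEADER_BYTES + (FINALIZER_PTR_BYTES if finalizable else 0)
    return object_bytes + overhead


def overhead_ratio(object_bytes, finalizable=False):
    """Overhead relative to the object's own size (dx / X)."""
    return (heap_footprint(object_bytes, finalizable) - object_bytes) / object_bytes


# Hypothetical object sizes, chosen only to show the trend.
for size in (4, 16, 64, 1024):
    print(f"{size:5d}-byte object: {heap_footprint(size):5d} bytes used, "
          f"{overhead_ratio(size):.1%} overhead")
```

A 4-byte object pays twice its own size in header, while a 1KB object pays well under 1%.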

Large Objects

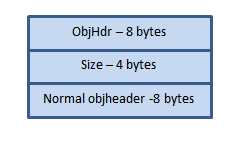

As we saw in the previous section, the standard object header can only describe objects of up to 16KB. However, the runtime does need to support larger objects.

This is done using a special large-object header which is 20 bytes.

The overhead of a large object comes to at most around 0.1%. A dedicated 32-bit size field allows the runtime to support very large objects (close to 2GB).

It may look weird that the normal object header is repeated twice. The reason is that very large objects are rare on the phone, so the runtime tries to optimize its various operations in such a way that large objects don’t bring in additional code paths and checks. Given an object reference, having the normal header just before it speeds up various operations. At the same time, having the header as the first data structure of every object makes other cases faster. The bottom line is that the additional 8 bytes, or 0.04% extra space, buys enough performance elsewhere to justify the cost.
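
The percentages above can be checked with a few lines of Python. This is only a sketch of the arithmetic: it assumes the worst case is the smallest object that needs the large header, which I take to be 16KB, and the variable names are mine.

```python
LARGE_HEADER_BYTES = 20          # large-object header described above
REPEATED_HEADER_BYTES = 8        # the normal header that appears a second time
SMALLEST_LARGE_OBJECT = 16 * 1024  # assumption: worst case sits at the 16KB boundary

# The header cost is fixed, so the smallest large object has the highest ratio.
worst_case_overhead = LARGE_HEADER_BYTES / SMALLEST_LARGE_OBJECT
repeated_header_cost = REPEATED_HEADER_BYTES / SMALLEST_LARGE_OBJECT

print(f"large header, worst case: {worst_case_overhead:.3%}")
print(f"repeated normal header:   {repeated_header_cost:.3%}")
```

This gives about 0.12% for the whole header and just under 0.05% for the repeated 8 bytes, in line with the rounded figures quoted above. Anything bigger than 16KB pays proportionally less.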

Pool overhead

NETCF uses 64KB object pools and sub-allocates objects from them. So in addition to the per-object overhead, there is a 44-byte object-pool header per 64KB pool. This means another 0.06% overhead per 64KB. For any individual object, this contribution can be ignored.
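
As a rough illustration of why this can be ignored, the Python sketch below spreads the pool header over the objects in one pool. It assumes the 44 bytes sit on top of a full 64KB of usable space and that the pool is packed with equally sized objects; both are simplifications of mine, not statements about how NETCF actually fills its pools.

```python
POOL_BYTES = 64 * 1024      # object pool size described above
POOL_HEADER_BYTES = 44      # object-pool header described above
SMALL_HEADER_BYTES = 8      # standard per-object header


def objects_per_pool(object_bytes):
    """How many objects of this size fit in one pool (assumes uniform packing)."""
    return POOL_BYTES // (object_bytes + SMALL_HEADER_BYTES)


def pool_header_share(object_bytes):
    """Pool-header bytes attributable to each object in a fully packed pool."""
    return POOL_HEADER_BYTES / objects_per_pool(object_bytes)


pool_overhead = POOL_HEADER_BYTES / POOL_BYTES
print(f"pool header overhead: {pool_overhead:.3%}")
print(f"16-byte objects per pool: {objects_per_pool(16)}")
print(f"pool header share per object: {pool_header_share(16):.4f} bytes")
```

Under these assumptions a 16-byte object carries a small fraction of a byte of pool header, against 8 bytes of its own object header.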

Arrays

Arrays are laid out slightly differently.

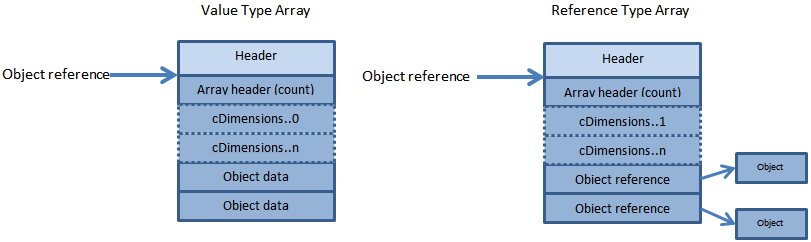

In a value-type array, the individual elements don’t need their own type information, and hence don’t each need an object header. The array header is a standard object header of 8 bytes or a large-object header of 20 bytes (depending on the size of the array). Then there is a 32-bit count of the number of elements in the array. For a single-dimension array there is no further overhead. For higher-dimension arrays, however, the length of each dimension is also stored to support runtime bounds checks.

Reference-type arrays are similar, but instead of the contents being inlined into the array, the elements are references to objects on the managed heap, and each of those objects has its own standard header.
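
The difference between the two kinds of arrays is easiest to see with numbers. The Python sketch below compares a single-dimension array of 1000 4-byte values with an array of 1000 references to objects holding the same 4 bytes each. It assumes 4-byte references (matching the 32-bit pointers mentioned earlier), one distinct non-finalizable object per slot, no padding, and arrays small enough to use the 8-byte header; the function names are mine.

```python
HEADER_BYTES = 8        # standard object header
COUNT_FIELD_BYTES = 4   # 32-bit element count stored after the header
REFERENCE_BYTES = 4     # assumption: references are 32-bit pointers


def value_array_bytes(count, element_bytes):
    """Single-dimension value-type array: elements are stored inline."""
    return HEADER_BYTES + COUNT_FIELD_BYTES + count * element_bytes


def reference_array_bytes(count, element_bytes):
    """Single-dimension reference-type array plus the objects it points to.

    Assumes every slot references its own small object.
    """
    array_itself = HEADER_BYTES + COUNT_FIELD_BYTES + count * REFERENCE_BYTES
    referenced_objects = count * (HEADER_BYTES + element_bytes)
    return array_itself + referenced_objects


print(value_array_bytes(1000, 4))      # 1000 inline 4-byte values
print(reference_array_bytes(1000, 4))  # 1000 references to 4-byte objects
```

With these assumptions the value-type array needs 4012 bytes, while the reference-type version needs 16012 bytes, because every element pays for a reference and its own header.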

Putting it all together

| Object type | Overhead |
| --- | --- |
| Standard object | 8 bytes (+ 4 bytes for a finalizable object) |
| Large objects (>16KB) | 20 bytes (+ 4 bytes for a finalizable object) |
| Arrays | object overhead + 4 bytes array header + ((nDimension > 1) ? nDimension x 4 bytes : 0) |
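
The table can also be written as a small Python helper, which may be handy for back-of-the-envelope estimates. This is a sketch rather than anything the runtime exposes: the function names are mine, and since the text does not say which header an object of exactly 16KB gets, the code treats only sizes above 16KB as large.

```python
STANDARD_HEADER_BYTES = 8
LARGE_HEADER_BYTES = 20
FINALIZER_PTR_BYTES = 4
ARRAY_COUNT_BYTES = 4
DIMENSION_LENGTH_BYTES = 4
LARGE_THRESHOLD_BYTES = 16 * 1024  # assumption: strictly larger means "large"


def object_overhead(object_bytes, finalizable=False):
    """Per-object overhead in bytes for a plain (non-array) object."""
    if object_bytes > LARGE_THRESHOLD_BYTES:
        header = LARGE_HEADER_BYTES
    else:
        header = STANDARD_HEADER_BYTES
    return header + (FINALIZER_PTR_BYTES if finalizable else 0)


def array_overhead(content_bytes, n_dimensions=1):
    """Overhead in bytes for an array whose contents take content_bytes."""
    overhead = object_overhead(content_bytes) + ARRAY_COUNT_BYTES
    if n_dimensions > 1:
        # One stored length per dimension, used for runtime bounds checks.
        overhead += n_dimensions * DIMENSION_LENGTH_BYTES
    return overhead


print(object_overhead(100))                    # standard object
print(object_overhead(100, finalizable=True))  # standard finalizable object
print(object_overhead(32 * 1024))              # large object
print(array_overhead(4000))                    # single-dimension array
print(array_overhead(4000, n_dimensions=2))    # two-dimension array
```

Keep in mind that all of these constants are implementation details of NETCF and may change.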